Which Is Better for AI Development: Apple M2 Pro (200 GB/s, 16 GPU Cores) or NVIDIA 3080 10GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is booming, and with it, the need for powerful hardware to run these models locally is growing. But choosing the right hardware for your AI development workflow can be tricky. You need to consider factors like processing speed, memory capacity, and cost. Today, we'll compare two popular contenders, the Apple M2 Pro (200 GB/s memory bandwidth, 16 GPU cores) and the NVIDIA 3080 10GB, to see which one comes out on top for local LLM token generation speed.

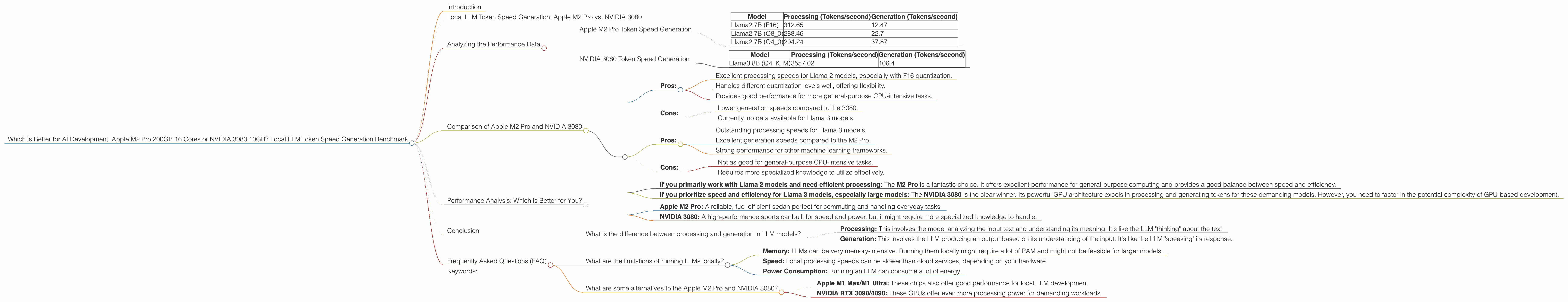

Local LLM Token Speed Generation: Apple M2 Pro vs. NVIDIA 3080

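We'll be focusing on how fast these devices can process prompts and generate tokens when running popular LLMs like Llama 2 and Llama 3. Two terms are easy to mix up here. Tokenization is the process of converting text into a sequence of numbers (token IDs) that the LLM can understand and process. Token generation is the step where the model produces new tokens, one at a time, as its output.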

Think of it like this: imagine you're trying to teach a robot how to speak. But instead of teaching it words, you have to break down those words into individual sounds. That's what tokenization does for LLMs!

The faster your device can process the tokens in your prompt and generate new ones, the sooner your LLM will return responses and complete tasks.
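
Speed figures like the ones in this article are expressed in tokens per second. As a minimal sketch of how such a number could be measured, the snippet below times a token stream and divides the count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model and is not taken from either benchmark source.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_tokens_per_second(generate: Callable[[], Iterable[int]]) -> Tuple[int, float]:
    """Consume one run of `generate` and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # pull tokens as they are produced
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate() -> Iterable[int]:
    """Hypothetical model stand-in: yields 50 token IDs, sleeping 1 ms before each."""
    for token_id in range(50):
        time.sleep(0.001)
        yield token_id


if __name__ == "__main__":
    n, rate = measure_tokens_per_second(fake_generate)
    print(f"{n} tokens at {rate:.1f} tokens/second")
```

With a real model, `generate` would wrap whatever streaming call your inference library exposes.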

Analyzing the Performance Data

Let's dive into the numbers!

The Apple M2 Pro offers 200 GB/s of memory bandwidth and, in the configuration used for this comparison, 16 GPU cores, while the NVIDIA 3080 10GB offers 10 GB of VRAM and 8,704 CUDA cores.

Benchmarking: We'll be using data from two sources, one by ggerganov (https://github.com/ggerganov/llama.cpp/discussions/4167) and another by XiongjieDai (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference). These sources provide token speed figures for various models and configurations.

Apple M2 Pro Token Speed Generation

The Apple M2 Pro exhibits impressive performance when running Llama 2 models. Here's a breakdown:

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 2 7B (F16) | 312.65 | 12.47 |
| Llama 2 7B (Q8_0) | 288.46 | 22.7 |
| Llama 2 7B (Q4_0) | 294.24 | 37.87 |

Key takeaways:

- Fast Processing: The M2 Pro processes prompts at up to 312.65 tokens/second with Llama 2 7B (F16), and the Q8_0 and Q4_0 figures stay within about 8% of that.

- Solid Generation: The generation speed is adequate, reaching up to 37.87 tokens/second with Llama 2 7B (Q4_0). While not as fast as some dedicated GPUs, it's still respectable for local development.

- Quantization: The M2 Pro shows good performance across half precision (F16) and the two quantization levels tested (Q8_0, Q4_0). Generation speed roughly triples from F16 to Q4_0, which gives you flexibility in trading model size against speed.

Quantization? Think of it like compressing a file - it reduces the amount of data needed to represent the model, making it smaller and potentially faster, but maybe with a bit of loss in accuracy.
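
One way to see why smaller formats generate faster: generation is often limited by memory bandwidth, because producing each new token requires reading roughly all of the model's weights once. A rough upper bound is therefore bandwidth divided by model size. The sketch below applies that rule of thumb to the M2 Pro figures. The parameter count (about 6.74 billion) and the bits-per-weight values are assumptions, not numbers from the benchmark sources, and the bound ignores the KV cache and other overhead.

```python
# Assumed values (not taken from the benchmark tables):
PARAMS_LLAMA2_7B = 6.74e9  # approximate parameter count of Llama 2 7B
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # incl. per-block scales

BANDWIDTH_GB_S = 200.0  # M2 Pro memory bandwidth, as stated in the article

# Measured generation speeds for the M2 Pro (tokens/second).
MEASURED_TPS = {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87}


def weights_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_bound_tps(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / size_gb


if __name__ == "__main__":
    for fmt, measured in MEASURED_TPS.items():
        size = weights_size_gb(PARAMS_LLAMA2_7B, BITS_PER_WEIGHT[fmt])
        bound = bandwidth_bound_tps(BANDWIDTH_GB_S, size)
        print(f"{fmt}: ~{size:.1f} GB, bound ~{bound:.1f} t/s, "
              f"measured {measured} t/s ({measured / bound:.0%} of bound)")
```

Under these assumptions, every measured figure sits below its bound, which is what you would expect if memory bandwidth is the main constraint on generation.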

NVIDIA 3080 Token Speed Generation

The NVIDIA 3080 shines when running Llama 3 models, particularly in processing tasks. Here's the breakdown:

| Model | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 3557.02 | 106.4 |

Important: Data is currently unavailable for Llama 3 8B (F16), Llama 3 70B (Q4_K_M), and Llama 3 70B (F16) on the NVIDIA 3080. We'll update this section once the data becomes available.

Key Takeaways:

- Dominating Processing: The 3080's performance on Llama 3 8B (Q4_K_M) is phenomenal, reaching 3557.02 tokens/second for processing. Long prompts are ingested very quickly at that rate.

- Solid Generation: The 3080 also delivers strong generation speeds at 106.4 tokens/second for Llama 3 8B (Q4_K_M). That is about 2.8 times the M2 Pro's best generation speed (37.87 tokens/second), although the two figures come from different models and quantization formats.

Remember: The availability of performance benchmarks for different models, configurations, and devices is always evolving. Always refer to up-to-date resources for the most accurate information.

Comparison of Apple M2 Pro and NVIDIA 3080

Now, let's compare the two contenders head-to-head:

Apple M2 Pro:

- Pros:

  - Strong processing speeds for Llama 2 models, especially at F16 precision.

  - Handles different quantization levels well, offering flexibility.

  - Provides good performance for more general-purpose CPU-intensive tasks.

- Cons:

  - Lower generation speeds compared to the 3080.

  - No Llama 3 data is currently available for it in the sources used here.

NVIDIA 3080:

- Pros:

  - Outstanding processing speeds for Llama 3 8B (Q4_K_M).

  - Much higher generation speeds than the M2 Pro.

  - Strong performance for other machine learning frameworks.

- Cons:

  - Only 10 GB of VRAM, and no data is available here for Llama 3 8B (F16) or the 70B models.

  - It is a GPU only, so general-purpose CPU-intensive tasks depend on the rest of the system.

  - Requires more specialized knowledge to utilize effectively.
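
To put numbers on the comparison, the short sketch below computes how many times faster the 3080 figures are than the M2 Pro's best figures. The inputs are the values reported in the tables. Keep in mind that they come from different models and quantization formats (Llama 2 7B versus Llama 3 8B Q4_K_M), so the ratios are indicative rather than like-for-like.

```python
from typing import Dict

# Best reported M2 Pro figures (Llama 2 7B): F16 processing, Q4_0 generation.
M2_PRO = {"processing": 312.65, "generation": 37.87}

# Reported NVIDIA 3080 figures (Llama 3 8B, Q4_K_M).
RTX_3080 = {"processing": 3557.02, "generation": 106.4}


def speed_ratios(fast: Dict[str, float], slow: Dict[str, float]) -> Dict[str, float]:
    """Return fast/slow for every metric present in both dictionaries."""
    return {key: fast[key] / slow[key] for key in fast if key in slow}


if __name__ == "__main__":
    for metric, ratio in speed_ratios(RTX_3080, M2_PRO).items():
        print(f"{metric}: 3080 is about {ratio:.1f}x the M2 Pro figure")
```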

Performance Analysis: Which is Better for You?

So, the million-dollar question: which device reigns supreme? The answer depends on your specific needs and use case.

If you primarily work with Llama 2 models and need efficient processing: The M2 Pro is a fantastic choice. It offers excellent performance for general-purpose computing and provides a good balance between speed and efficiency.

If you prioritize raw speed for Llama 3 8B (Q4_K_M): The NVIDIA 3080 is the clear winner in the available data. Its GPU architecture excels at both processing and generating tokens. However, its 10 GB of VRAM limits how large a model it can hold, no figures are available here for Llama 3 8B (F16) or the 70B models, and you need to factor in the potential complexity of GPU-based development.

An analogy: Imagine you have two cars:

- Apple M2 Pro: A reliable, fuel-efficient sedan perfect for commuting and handling everyday tasks.

- NVIDIA 3080: A high-performance sports car built for speed and power, but it might require more specialized knowledge to handle.

Ultimately, the best choice depends on your individual requirements and the specific models you want to run.

Conclusion

It's clear that both the Apple M2 Pro and NVIDIA 3080 offer unique advantages for local LLM token generation. The M2 Pro delivers steady processing speeds across formats for Llama 2 7B, while the NVIDIA 3080 posts far higher processing and generation speeds on Llama 3 8B (Q4_K_M). The key is to choose the device that best aligns with your specific workflow and development goals.

Frequently Asked Questions (FAQ)

What is the difference between processing and generation in LLMs?

- Processing: The model reads and evaluates the input prompt. Many prompt tokens can be handled in parallel, which is why processing speeds are much higher than generation speeds. It's like the LLM "thinking" about the text.

- Generation: The model produces its output one token at a time, with each new token depending on everything before it. It's like the LLM "speaking" its response.
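
The two speeds combine into the time you actually wait for a response. As a rough sketch, the total time is the prompt length divided by the processing speed plus the output length divided by the generation speed. The prompt and output sizes below (512 and 128 tokens) are illustrative assumptions, and the estimate ignores model loading and other overhead.

```python
def response_time_s(prompt_tokens: int, new_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read the prompt and then produce the output."""
    return prompt_tokens / processing_tps + new_tokens / generation_tps


if __name__ == "__main__":
    prompt_tokens, new_tokens = 512, 128  # assumed request size

    # Speeds taken from the tables in this article (tokens/second).
    m2_pro = response_time_s(prompt_tokens, new_tokens, 294.24, 37.87)     # Llama 2 7B Q4_0
    rtx_3080 = response_time_s(prompt_tokens, new_tokens, 3557.02, 106.4)  # Llama 3 8B Q4_K_M

    print(f"M2 Pro: ~{m2_pro:.2f} s, NVIDIA 3080: ~{rtx_3080:.2f} s")
```

For short prompts, generation speed dominates the total. Processing speed matters more as the prompt grows.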

What are the limitations of running LLMs locally?

- Memory: LLMs can be very memory-intensive. Running them locally might require a lot of RAM and might not be feasible for larger models.

- Speed: Local processing speeds can be slower than cloud services, depending on your hardware.

- Power Consumption: Running an LLM can consume a lot of energy.
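
To make the memory point concrete, the sketch below estimates the size of a model's weights from its parameter count and bits per weight, then checks it against a memory budget. The parameter counts, the average of about 4.85 bits per weight for Q4_K_M, and the 1 GB of headroom for the KV cache and runtime are all assumptions chosen for illustration.

```python
# Assumed values for illustration (not from the benchmark sources):
APPROX_PARAMS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.0e9}
APPROX_BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def estimate_weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_memory(size_gb: float, memory_gb: float, headroom_gb: float = 1.0) -> bool:
    """True if the weights plus an assumed headroom fit in the given memory."""
    return size_gb + headroom_gb <= memory_gb


if __name__ == "__main__":
    vram_gb = 10.0  # NVIDIA 3080 10GB
    for model, params in APPROX_PARAMS.items():
        for fmt, bits in APPROX_BITS_PER_WEIGHT.items():
            size = estimate_weights_gb(params, bits)
            verdict = "fits" if fits_in_memory(size, vram_gb) else "does not fit"
            print(f"{model} ({fmt}): ~{size:.1f} GB, {verdict} in {vram_gb:.0f} GB")
```

Under these assumptions, only Llama 3 8B (Q4_K_M) fits in 10 GB, which is also the only configuration with NVIDIA 3080 figures in this article.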

What are some alternatives to the Apple M2 Pro and NVIDIA 3080?

- Apple M1 Max/M1 Ultra: These chips also offer good performance for local LLM development.

- NVIDIA RTX 3090/4090: These GPUs offer even more processing power for demanding workloads.
