Which Is Better for AI Development: Apple M2 Pro (200 GB/s, 16-Core GPU) or Apple M3 Pro (150 GB/s, 14-Core GPU)? Local LLM Token Generation Speed Benchmark

Introduction

In the rapidly evolving world of artificial intelligence (AI), large language models (LLMs) are revolutionizing the way we interact with technology. These powerful models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are becoming increasingly accessible.

Running LLMs locally is becoming increasingly popular because it avoids network round trips and gives you greater control over data privacy. However, the performance of these models relies heavily on the hardware powering them. This article compares the Apple M2 Pro (200 GB/s memory bandwidth, 16-core GPU) and the Apple M3 Pro (150 GB/s memory bandwidth, 14-core GPU), two popular Apple Silicon processors, for running LLMs locally, and also includes their 19-core and 18-core GPU variants. We'll analyze how quickly each chip processes prompts and generates tokens with Llama 2 7B, a widely used LLM.

Diving into the Benchmark: M2 Pro vs M3 Pro for Llama 2 7B

To understand which device is better for your AI development needs, let's dive into the benchmark results. We'll look at two speeds for each processor, broken down by quantization level: processing (how fast the model reads the prompt) and generation (how fast it produces new tokens).

Quantization: Making LLMs Lighter and Faster

Before we jump into the numbers, a quick explanation of quantization: it's like a diet for LLMs. It uses fewer bits to represent each number in the model, which makes the model smaller and faster to run. It's a bit like using a shorter word to express the same idea, like "a cat" instead of "felis catus." (See, even cats can benefit from quantization, but that's another story for another time!)

Here are the quantization levels we'll be looking at:

- F16: The "full-fat" version, using 16 bits per number.

- Q8_0: A "light" version, using 8 bits per number.

- Q4_0: A "super light" version, using only 4 bits per number.

Smaller models need less memory, can run inference faster, and can run on lower-power devices.
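To make the size difference concrete, here is a rough sketch of the arithmetic. It assumes a nominal 7 billion parameters and the bit widths listed above; real quantized files are somewhat larger because formats such as Q8_0 and Q4_0 also store per-block scaling factors, so treat the output as a lower-bound estimate rather than an exact file size.

```python
# Rough weight-memory estimate for a 7B model at each quantization level.
# Assumption: a nominal 7e9 parameters and exactly 16/8/4 bits per weight.
PARAMS = 7e9

BITS_PER_WEIGHT = {"F16": 16, "Q8_0": 8, "Q4_0": 4}


def weight_size_gb(params: float, bits: float) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits / 8 / 1e9


SIZES_GB = {
    name: weight_size_gb(PARAMS, bits) for name, bits in BITS_PER_WEIGHT.items()
}

for name, size in SIZES_GB.items():
    print(f"{name}: ~{size:.1f} GB")
```

Halving the bits per weight halves the memory the weights occupy, which is why Q4_0 fits comfortably on machines where F16 would be tight.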

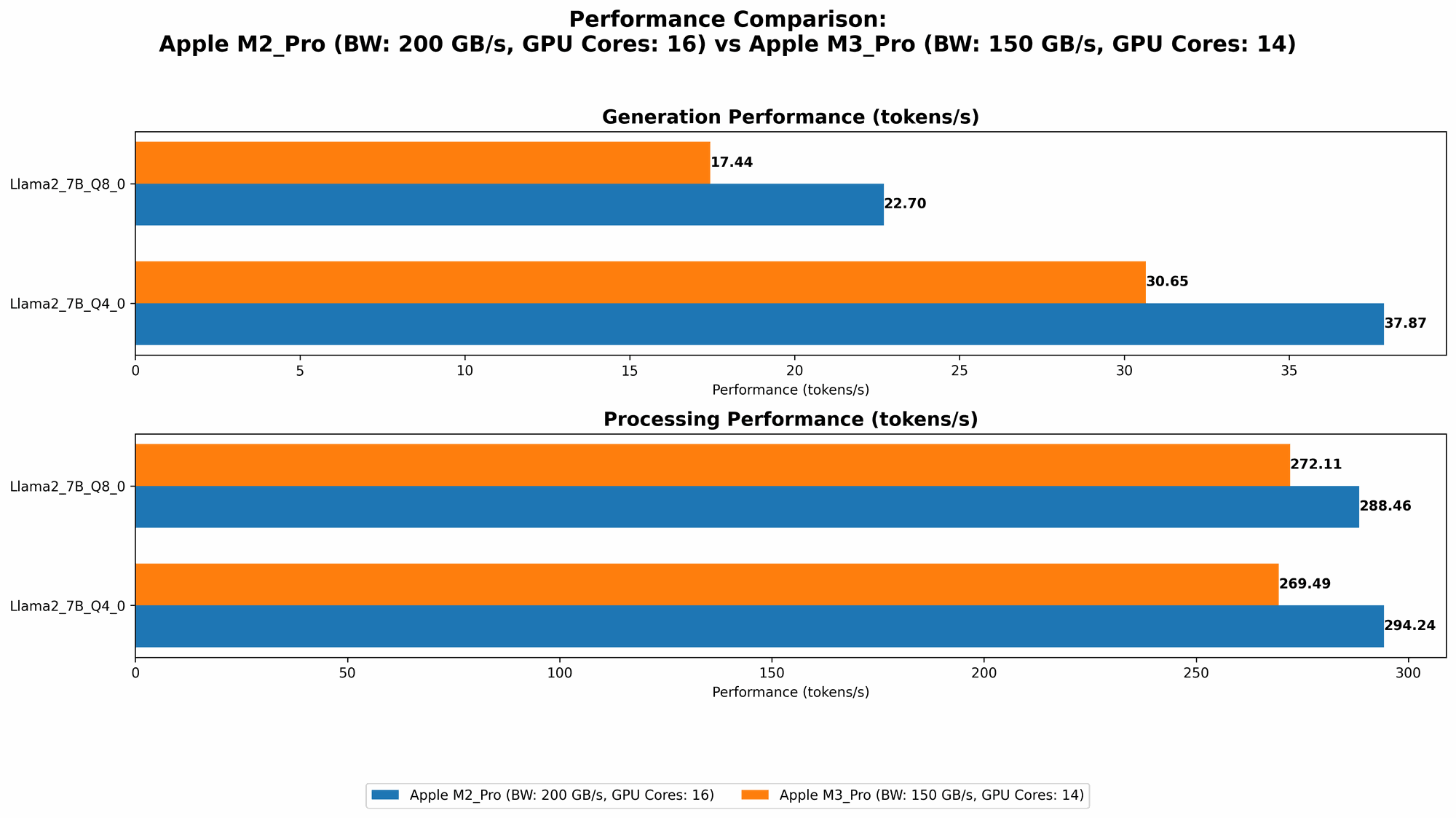

Comparison of Apple M2 Pro (200 GB/s, 16- and 19-Core GPU) and M3 Pro (150 GB/s, 14- and 18-Core GPU)

| Device | Memory bandwidth (GB/s) | GPU cores | F16 processing (tokens/s) | F16 generation (tokens/s) | Q8_0 processing (tokens/s) | Q8_0 generation (tokens/s) | Q4_0 processing (tokens/s) | Q4_0 generation (tokens/s) |
|---|---|---|---|---|---|---|---|---|
| Apple M2 Pro | 200 | 16 | 312.65 | 12.47 | 288.46 | 22.7 | 294.24 | 37.87 |
| Apple M2 Pro | 200 | 19 | 384.38 | 13.06 | 344.5 | 23.01 | 341.19 | 38.86 |
| Apple M3 Pro | 150 | 14 | Not available | Not available | 272.11 | 17.44 | 269.49 | 30.65 |
| Apple M3 Pro | 150 | 18 | 357.45 | 9.89 | 344.66 | 17.53 | 341.67 | 30.74 |

Note: Unfortunately, no F16 data is available for the 14-core Apple M3 Pro. F16 figures are available for the 18-core M3 Pro.
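One way to read the table is to turn the generation columns into ratios. The sketch below copies the generation figures from the table; the dictionary keys such as "M2 Pro 16" are shorthand introduced here for illustration, and missing F16 data is represented as `None` so no value is invented for it.

```python
# Token generation speeds (tokens/second) copied from the table above.
# Keys like "M2 Pro 16" are illustrative shorthand: chip name + GPU core count.
GENERATION = {
    "M2 Pro 16": {"F16": 12.47, "Q8_0": 22.7, "Q4_0": 37.87},
    "M2 Pro 19": {"F16": 13.06, "Q8_0": 23.01, "Q4_0": 38.86},
    "M3 Pro 14": {"F16": None, "Q8_0": 17.44, "Q4_0": 30.65},
    "M3 Pro 18": {"F16": 9.89, "Q8_0": 17.53, "Q4_0": 30.74},
}


def generation_ratio(device_a: str, device_b: str, quant: str):
    """Return speed of device_a divided by device_b, or None if data is missing."""
    a = GENERATION[device_a][quant]
    b = GENERATION[device_b][quant]
    if a is None or b is None:
        return None
    return a / b


for quant in ("F16", "Q8_0", "Q4_0"):
    for a, b in (("M2 Pro 16", "M3 Pro 14"), ("M2 Pro 19", "M3 Pro 18")):
        r = generation_ratio(a, b, quant)
        label = "no data" if r is None else f"{r:.2f}x"
        print(f"{quant}: {a} vs {b}: {label}")
```

A ratio above 1 means the M2 Pro configuration generates tokens faster than the M3 Pro configuration it is paired with.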

Performance Analysis: M2 Pro vs M3 Pro - Who Wins?

M2 Pro: The Powerhouse

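The M2 Pro, in both its 16- and 19-core GPU configurations, leads in token generation for Llama 2 7B at every quantization level where data exists. In the base configurations it generates 22.7 vs 17.44 tokens/second at Q8_0 and 37.87 vs 30.65 at Q4_0. Prompt processing is a closer contest: the 16-core M2 Pro beats the 14-core M3 Pro, but the 19-core M2 Pro and 18-core M3 Pro are effectively tied at Q8_0 (344.5 vs 344.66) and Q4_0 (341.19 vs 341.67), with the M2 Pro clearly ahead only at F16 (384.38 vs 357.45). This makes the M2 Pro a strong choice for developers who care most about generation speed when running LLMs locally.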

M3 Pro: The Efficiency Champion

While the M3 Pro might not be the speed demon, it holds up well given its specifications. Despite having fewer GPU cores and lower memory bandwidth than the M2 Pro, it delivers competitive prompt processing speeds, and the 18-core version matches the 19-core M2 Pro at Q8_0 and Q4_0. Token generation is where it falls behind: across the rows in the table it is roughly 19% to 24% slower than the corresponding M2 Pro. The benchmark does not measure power draw, so the idea that the M3 Pro is more power-efficient is a plausible inference rather than something these numbers demonstrate. If it holds, it would be a real advantage for devices that need to balance performance with battery life.

Which Device Should You Choose?

- M2 Pro: If you need the fastest token generation of the two for running LLMs locally, the M2 Pro is the clear winner at every quantization level tested.

- M3 Pro: If you are looking for a more balanced solution, where efficiency and portability are just as important as performance, the M3 Pro is a compelling choice. It's a great option for developers who are running LLMs on the go or need to conserve battery life while still maintaining sufficient computing power.
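To see what the gap means in practice, the two speeds can be combined into an end-to-end estimate: time to read the prompt plus time to write the answer. The sketch below uses the Q4_0 figures for the 16-core M2 Pro and 14-core M3 Pro; the 512-token prompt and 256-token reply are arbitrary example sizes, and the model ignores overheads such as model loading.

```python
def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimate total latency: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Q4_0 speeds (tokens/second) from the benchmark table: (processing, generation).
Q4_0_SPEEDS = {
    "M2 Pro (16-core GPU)": (294.24, 37.87),
    "M3 Pro (14-core GPU)": (269.49, 30.65),
}

PROMPT_TOKENS = 512   # assumed example prompt length
OUTPUT_TOKENS = 256   # assumed example reply length

for device, (processing_tps, generation_tps) in Q4_0_SPEEDS.items():
    seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS,
                               processing_tps, generation_tps)
    print(f"{device}: ~{seconds:.1f} s")
```

Because generation is much slower per token than processing, the reply length dominates the total, which is why the generation column matters most for chat-style use.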

Beyond the Numbers: Practical Considerations

While the benchmark results are useful for comparing the raw performance of these processors, several other factors can influence your decision.

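1. Memory Bandwidth: What the 200 and 150 Figures Mean

The 200 and 150 figures attached to these chips are memory bandwidth in GB/s, not memory capacity. The M2 Pro can move data between memory and the processor at up to 200 GB/s, while the M3 Pro is limited to 150 GB/s. Token generation has to read the model's weights for every token it produces, so it tends to be limited by memory bandwidth, which is consistent with the M2 Pro's lead in the generation columns. Memory capacity is a separate question: check that the unified memory of the specific configuration you buy is large enough for the model and quantization level you plan to run.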
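A back-of-the-envelope check shows why bandwidth matters for generation. Apple specifies the M2 Pro at 200 GB/s and the M3 Pro at 150 GB/s of memory bandwidth. The sketch below assumes that producing one token requires streaming all of the weights from memory once, and it uses a nominal Q4_0 size of 3.5 GB (7 billion parameters at 4 bits each); both are simplifying assumptions, so the result is an upper bound, not a prediction.

```python
# Assumption: decoding one token streams every weight from memory once,
# so tokens/second cannot exceed bandwidth divided by model size.
BANDWIDTH_GB_S = {"M2 Pro": 200, "M3 Pro": 150}

# Nominal Q4_0 size: 7e9 parameters * 4 bits / 8 bits per byte, in GB.
Q4_0_SIZE_GB = 7e9 * 4 / 8 / 1e9

# Measured Q4_0 generation speeds from the table (16-core and 14-core rows).
MEASURED_Q4_0 = {"M2 Pro": 37.87, "M3 Pro": 30.65}


def bandwidth_bound_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/second if generation is purely bandwidth-limited."""
    return bandwidth_gb_s / model_size_gb


for chip, bandwidth in BANDWIDTH_GB_S.items():
    bound = bandwidth_bound_tps(bandwidth, Q4_0_SIZE_GB)
    measured = MEASURED_Q4_0[chip]
    print(f"{chip}: bound ~{bound:.1f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Both chips land below their bound, as expected, and the M3 Pro's lower bound lines up with its lower measured generation speed.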

2. Cost: More Bang for Your Buck?

The M2 Pro can be a more expensive choice than the M3 Pro, although prices vary with configuration and retailer. Depending on your budget and specific needs, the M3 Pro might provide better value for money.

3. Future-Proofing: A Look Ahead

The Apple Silicon ecosystem is constantly evolving, and newer models with even greater performance are likely on the horizon. While the M2 Pro is currently a powerhouse, the M3 Pro might offer more longevity as Apple continues to refine its silicon technology.

4. Software and Integrations: A Matter of Choice

Software support is unlikely to decide between these two chips. Both the M2 Pro and the M3 Pro run macOS and are compatible with the most popular LLM development tools, including frameworks for running LLMs locally such as llama.cpp.

Summary: Find the Perfect Match for Your LLM Development

Choosing the right device for your LLM development needs isn't a one-size-fits-all situation. By carefully considering token generation speed, memory, cost, and future-proofing, you can find the machine that best fits your productivity needs and budget.

FAQ: Common Questions About LLMs and Devices

1. What are some common use cases for local LLMs?

Local LLMs are becoming increasingly popular for various applications, including:

- Chatbots: Building interactive chatbots for customer service, education, or entertainment.

- Text Generation: Creating high-quality content for websites, social media, or marketing materials.

- Code Generation: Automating code writing for developers.

- Translation: Providing real-time language translation for individuals and businesses.

- Summarization: Extracting key information from large amounts of text.

- Personalized Learning: Tailoring educational content to individual learners.

2. What are the benefits of running LLMs locally?

- Faster Inference: Local models avoid network round trips and shared-server queues, so response times can be lower than with cloud-based solutions, depending on your hardware.

- Improved Privacy: Running LLMs locally allows you to keep your data secure and private.

- Greater Control: You have more control over the model's configuration and parameters when running it locally.

- Offline Access: Local models can be used even without internet access.

3. How can I get started with running LLMs on my device?

There are several tools and resources available for running LLMs locally:

- llama.cpp: A popular open-source framework for running LLMs on CPUs and GPUs, including Apple Silicon.

- GPU-Benchmarks-on-LLM-Inference: A repository with benchmark results and insights into the performance of different GPUs for LLM inference.
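Once a model is running, you can take your own tokens-per-second measurement with a small timing harness. The sketch below is framework-agnostic: `measure_tokens_per_second` and `fake_generate` are hypothetical names introduced here, and `fake_generate` is only a stand-in that sleeps briefly per token. To benchmark a real model, you would replace it with a function that streams tokens from whichever library you use.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str, int], Iterable[str]],
    prompt: str,
    max_tokens: int,
) -> tuple[int, float]:
    """Time a streaming generator; return (token count, tokens per second)."""
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt, max_tokens))
    elapsed = time.perf_counter() - start
    tokens_per_second = count / elapsed if elapsed > 0 else float("inf")
    return count, tokens_per_second


def fake_generate(prompt: str, max_tokens: int) -> Iterable[str]:
    """Hypothetical stand-in for a real model: yields dummy tokens slowly."""
    for i in range(max_tokens):
        time.sleep(0.001)  # simulate per-token work
        yield f"tok{i}"


if __name__ == "__main__":
    count, tps = measure_tokens_per_second(fake_generate, "Hello", 50)
    print(f"{count} tokens at {tps:.1f} tokens/second")
```

Note that this measures generation only, and includes prompt processing time in the first token, so use a short prompt if you want a figure comparable to the generation columns in the table.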

4. Is a powerful GPU always necessary for running LLMs?

Not necessarily. While a powerful GPU can significantly enhance the performance of LLMs, especially for larger models, CPUs can still be sufficient for smaller LLMs or less demanding tasks.

5. What are the future trends in LLM development and hardware?

We can expect to see continued innovation in both LLM models and the hardware used to run them. Here are some key trends:

- Smaller and More Efficient Models: New LLM architectures are being developed that are smaller and more efficient, making them suitable for a wider range of devices.

- Specialized Hardware: Companies are developing specialized hardware specifically for LLM inference, which can offer significant performance improvements.

- Cloud Computing: Cloud-based LLM services are becoming increasingly popular, providing access to powerful LLMs without the need for specialized hardware.

Keywords

Apple M2 Pro, Apple M3 Pro, LLM, Token Generation Speed, Llama 2 7B, Quantization, AI Development, Local Inference, Hardware Benchmark, CPU, GPU, Performance Analysis, Device Comparison, Efficiency, Memory, Cost, Future-Proofing, Software Integration, Use Cases, Benefits