Which is Better for AI Development: Apple M2 Pro (200 GB/s, 16 GPU Cores) or Apple M2 Ultra (800 GB/s, 60 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of artificial intelligence (AI) is evolving rapidly, driven by advances in large language models (LLMs). These models can generate human-like text, translate languages, write creative content, and answer questions, but they require significant computational power. As developers do more of their work with LLMs, the choice of hardware becomes crucial for performance.

This article delves into the performance comparison of two powerful Apple silicon chips, the M2 Pro and M2 Ultra, specifically in the context of running LLMs locally. We'll evaluate their capabilities in generating tokens, the fundamental building blocks of text, and provide practical recommendations for developers based on their specific needs.

Understanding Token Generation Speed

Before we dive into the numbers, let's understand why token generation speed matters. An LLM predicts and generates text one token at a time, where a token is a word or part of a word. The benchmark tables in this article report two rates: processing, which is how fast the model reads the prompt you give it, and generation, which is how fast it produces new tokens in its reply. The higher both rates are, the sooner the LLM delivers a response.

Think of it like typing: the faster you type, the sooner the page fills up. Token generation speed is the LLM's typing speed. The higher it is, the sooner the model can "think" through a request and deliver results.
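To make this concrete, here is a minimal Python sketch that turns the two rates into an estimated response time. The rates are the Llama 2 7B Q4_0 figures from the tables in this article; the 1,000-token prompt, the 500-token reply, and the `response_time_seconds` helper are assumptions chosen purely for illustration.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate latency as prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 2 7B Q4_0 rates (tokens/second) taken from the benchmark tables.
# Prompt and reply lengths are hypothetical.
m2_pro = response_time_seconds(1000, 500, processing_tps=294.24, generation_tps=37.87)
m2_ultra = response_time_seconds(1000, 500, processing_tps=1013.81, generation_tps=88.64)

print(f"M2 Pro:   {m2_pro:.1f} s")
print(f"M2 Ultra: {m2_ultra:.1f} s")
```

Under these assumptions the reply takes roughly 16.6 seconds on the M2 Pro and 6.6 seconds on the M2 Ultra, and most of that time is spent on generation rather than on reading the prompt.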

Comparing Apple M2 Pro (200 GB/s, 16 GPU Cores) vs. Apple M2 Ultra (800 GB/s, 60 GPU Cores)

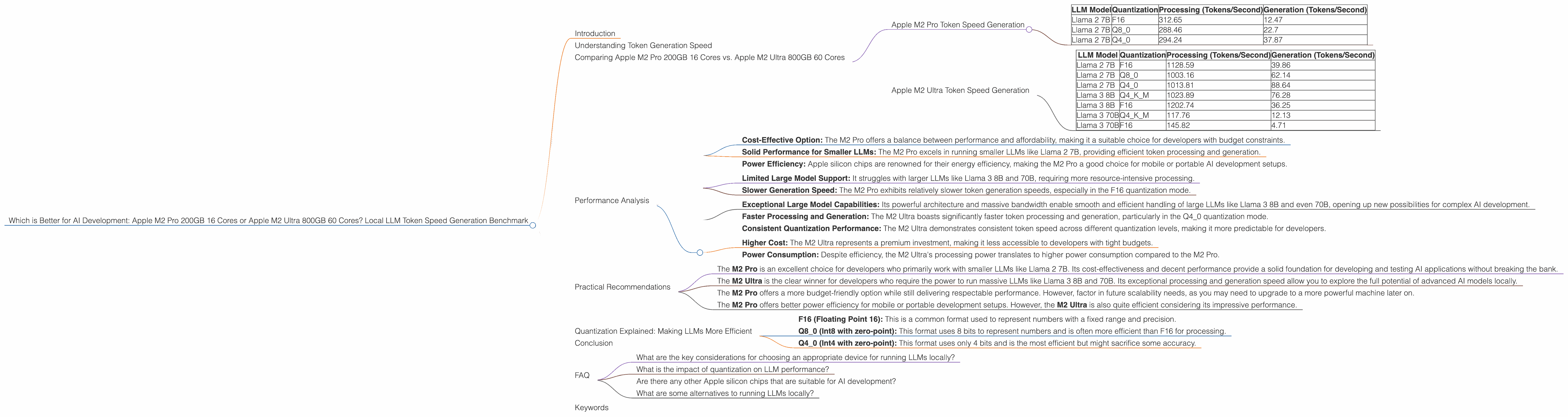

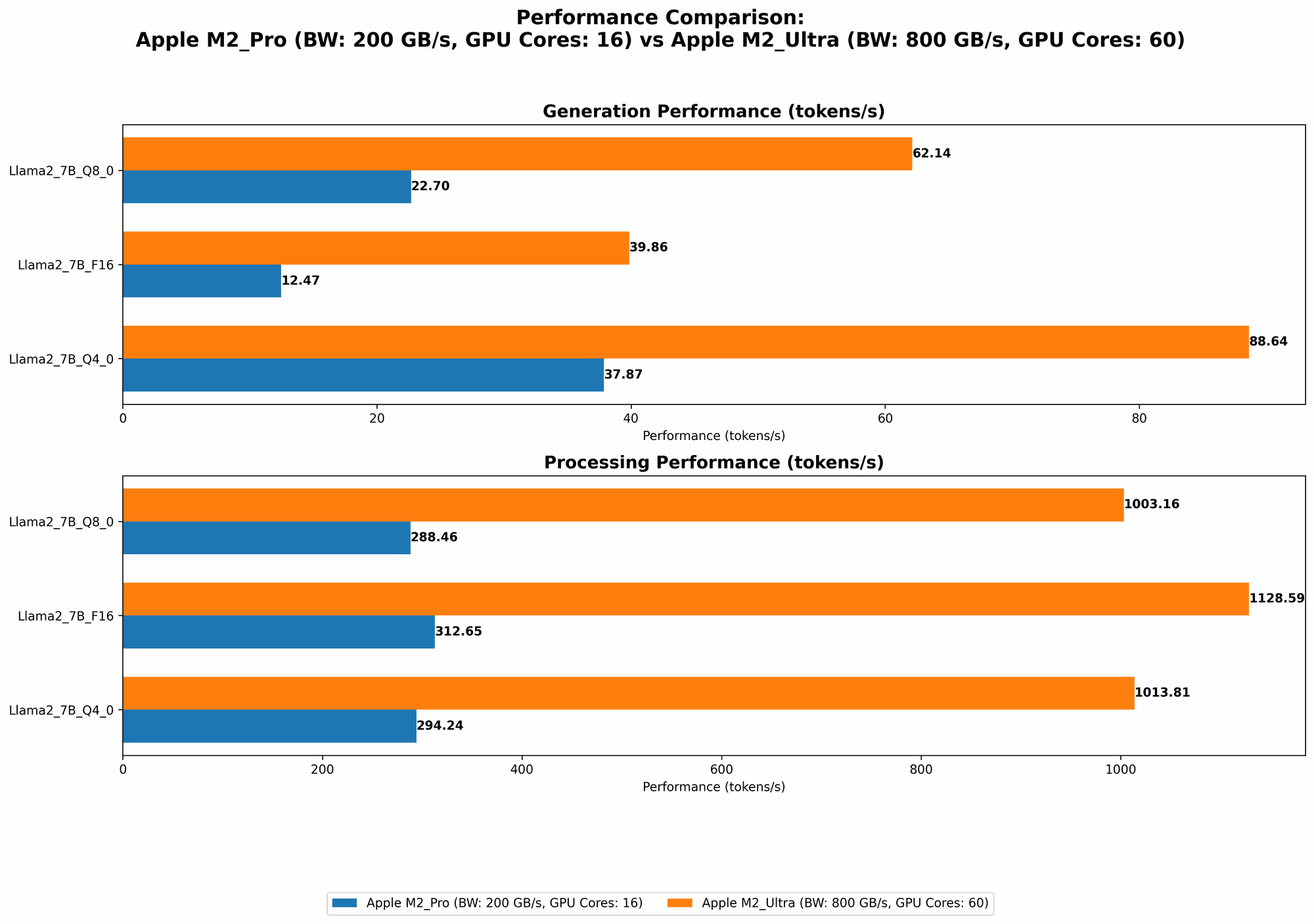

Apple M2 Pro Token Generation Speed

The Apple M2 Pro, with its 16 GPU cores and 200 GB/s of memory bandwidth, offers solid performance for running smaller LLMs. Let's look at its token speeds based on our data:

| LLM Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 312.65 | 12.47 |
| Llama 2 7B | Q8_0 | 288.46 | 22.7 |
| Llama 2 7B | Q4_0 | 294.24 | 37.87 |

Key Observations:

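- Processing Speed: The M2 Pro processes prompts at roughly 290 to 310 tokens/second for Llama 2 7B, with little difference between quantization formats.

- Generation Speed: Generation is far slower than prompt processing and depends heavily on quantization, rising from 12.47 tokens/second at F16 to 37.87 at Q4_0, about a 3x difference.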

Apple M2 Ultra Token Generation Speed

The Apple M2 Ultra, with its 60 GPU cores and 800 GB/s of memory bandwidth, makes it practical to run larger LLMs locally. Here's a breakdown of its performance:

| LLM Model | Quantization | Processing (Tokens/Second) | Generation (Tokens/Second) |
|---|---|---|---|
| Llama 2 7B | F16 | 1128.59 | 39.86 |
| Llama 2 7B | Q8_0 | 1003.16 | 62.14 |
| Llama 2 7B | Q4_0 | 1013.81 | 88.64 |
| Llama 3 8B | Q4_K_M | 1023.89 | 76.28 |
| Llama 3 8B | F16 | 1202.74 | 36.25 |
| Llama 3 70B | Q4_K_M | 117.76 | 12.13 |
| Llama 3 70B | F16 | 145.82 | 4.71 |

Key Observations:

- Significant Performance Boost: On Llama 2 7B, the one model measured on both chips, the M2 Ultra is about 3.5x faster at processing and 2.3x to 3.2x faster at generation than the M2 Pro.

- Large Model Handling: The M2 Ultra also runs Llama 3 8B and Llama 3 70B. The 70B model is usable but slow: 12.13 tokens/second of generation with 4-bit quantization and 4.71 at F16.

- Quantization Impact: On both chips, processing speed changes little across quantization formats, while generation speed rises as bit width drops. From F16 to Q4_0 on Llama 2 7B, generation improves about 3.0x on the M2 Pro and about 2.2x on the M2 Ultra.
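To put numbers on the comparison, the short Python sketch below divides the M2 Ultra rates by the M2 Pro rates for Llama 2 7B, the only model measured on both chips. The figures are copied from the two tables; the dictionary layout and the `speedups` helper are illustrative names, not part of any benchmark tool.

```python
# (processing tokens/s, generation tokens/s) for Llama 2 7B, from the tables.
m2_pro = {"F16": (312.65, 12.47), "Q8_0": (288.46, 22.7), "Q4_0": (294.24, 37.87)}
m2_ultra = {"F16": (1128.59, 39.86), "Q8_0": (1003.16, 62.14), "Q4_0": (1013.81, 88.64)}

def speedups(slow, fast):
    """Return {quantization: (processing speedup, generation speedup)}."""
    return {
        quant: (fast[quant][0] / slow[quant][0], fast[quant][1] / slow[quant][1])
        for quant in slow
    }

ratios = speedups(m2_pro, m2_ultra)
for quant, (proc, gen) in ratios.items():
    print(f"{quant:5s} processing x{proc:.2f}  generation x{gen:.2f}")
```

On these numbers the M2 Ultra comes out roughly 3.4x to 3.6x faster at processing and 2.3x to 3.2x faster at generation, with the largest generation gain at F16.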

Performance Analysis

Strengths of Apple M2 Pro:

- Cost-Effective Option: The M2 Pro offers a balance between performance and affordability, making it a suitable choice for developers with budget constraints.

- Solid Performance for Smaller LLMs: The M2 Pro runs smaller LLMs like Llama 2 7B comfortably, processing prompts at around 300 tokens/second and generating up to 37.87 tokens/second at Q4_0.

- Power Efficiency: Apple silicon chips are renowned for their energy efficiency, making the M2 Pro a good choice for mobile or portable AI development setups.

Weaknesses of Apple M2 Pro:

- Limited Large Model Support: This benchmark has no M2 Pro results for Llama 3 8B or 70B, and a model the size of Llama 3 70B needs far more memory than an M2 Pro offers.

- Slower Generation Speed: The M2 Pro generates tokens more slowly than the M2 Ultra in every format tested, dropping to 12.47 tokens/second at F16.

Strengths of Apple M2 Ultra:

- Exceptional Large Model Capabilities: Its large GPU and 800 GB/s of memory bandwidth let it run Llama 3 8B at high speed and load Llama 3 70B, even at F16, opening up new possibilities for complex AI development.

- Faster Processing and Generation: On Llama 2 7B, the M2 Ultra processes prompts about 3.5x faster and generates tokens 2.3x to 3.2x faster than the M2 Pro, peaking at 88.64 tokens/second at Q4_0.

- Stable Prompt Processing Across Formats: Processing speed on Llama 2 7B stays between roughly 1,000 and 1,130 tokens/second across F16, Q8_0, and Q4_0, which makes prompt latency predictable. Generation speed still varies with quantization, from 39.86 to 88.64 tokens/second.

Weaknesses of Apple M2 Ultra:

- Higher Cost: The M2 Ultra represents a premium investment, making it less accessible to developers with tight budgets.

- Power Consumption: Although efficient for its performance class, the M2 Ultra draws more power than the M2 Pro.
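One way to see why memory bandwidth matters so much for generation: producing each token requires reading roughly all of the model's weights from memory, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below applies that rule of thumb to Llama 2 7B at F16. It is not part of the benchmark itself. It assumes a nominal 7 billion parameters at 2 bytes each and ignores the KV cache and compute time, so treat it as a back-of-envelope estimate rather than a prediction. The `bandwidth_bound_tps` helper is a hypothetical name.

```python
def bandwidth_bound_tps(bandwidth_gb_s, params_billion, bytes_per_weight):
    """Rough ceiling on tokens/s if every weight is read once per generated token."""
    model_gb = params_billion * bytes_per_weight  # billions of params * bytes = GB
    return bandwidth_gb_s / model_gb

# Assumption: nominal 7 billion parameters, 2 bytes per weight for F16.
# Measured values are the Llama 2 7B F16 generation rates from the tables.
for name, bandwidth, measured in [("M2 Pro", 200, 12.47), ("M2 Ultra", 800, 39.86)]:
    bound = bandwidth_bound_tps(bandwidth, params_billion=7, bytes_per_weight=2)
    print(f"{name}: ceiling {bound:.1f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of ceiling)")
```

Under these assumptions the ceilings are about 14 and 57 tokens/second, and the measured F16 generation rates of 12.47 and 39.86 sit below them on both chips, which is consistent with generation being limited mainly by memory bandwidth.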

Practical Recommendations

For Developers Working with Smaller LLMs:

- The M2 Pro is an excellent choice for developers who primarily work with smaller LLMs like Llama 2 7B. Its cost-effectiveness and decent performance provide a solid foundation for developing and testing AI applications without breaking the bank.

For Developers Working with Large LLMs:

- The M2 Ultra is the clear winner for developers who need to run large models such as Llama 3 70B locally. In this benchmark it generates 12.13 tokens/second on the 70B model with 4-bit quantization and 4.71 tokens/second at F16, and it runs the much smaller Llama 3 8B at over 76 tokens/second with 4-bit quantization.

For Developers on a Budget:

- The M2 Pro offers a more budget-friendly option while still delivering respectable performance. However, factor in future scalability needs, as you may need to upgrade to a more powerful machine later on.

For Developers Prioritizing Power Efficiency:

- The M2 Pro offers better power efficiency for mobile or portable development setups. However, the M2 Ultra is also quite efficient considering its impressive performance.

Quantization Explained: Making LLMs More Efficient

Quantization is a technique used to make LLMs more efficient by representing their weights (numbers that determine the model's behavior) with fewer bits. Think of it like compressing a file: it makes the model smaller and faster to load and run.

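- F16 (16-bit floating point): Half-precision floating point, using 16 bits per weight. It is the unquantized baseline in this benchmark.

- Q8_0 (8-bit integer): Stores weights as 8-bit integers in small blocks that share a scale factor. The model is roughly half the size of F16 and generates tokens faster, although prompt processing in our data is no faster than F16.

- Q4_0 (4-bit integer): Uses the same block scheme with only 4 bits per weight. It gives the smallest model and the fastest generation but might sacrifice some accuracy.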
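The idea behind these integer formats can be sketched in a few lines of Python. This is a simplified, symmetric block quantizer written for illustration, not the actual implementation used by any inference library; the block values and the `quantize_block` and `dequantize_block` helpers are made up for the example.

```python
def quantize_block(weights, bits):
    """Symmetric quantization of one block: small integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    return [round(w / scale) for w in weights], scale

def dequantize_block(quants, scale):
    """Recover approximate weights from the integers and the shared scale."""
    return [q * scale for q in quants]

# Hypothetical block of weights; real formats use larger blocks.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    quants, scale = quantize_block(block, bits)
    restored = dequantize_block(quants, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"{bits}-bit: scale {scale:.4f}, max round-trip error {worst:.4f}")
```

Running it shows the trade-off directly: the 4-bit version stores each weight in half the space of the 8-bit version, but its round-trip error is noticeably larger.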

The choice of quantization depends on the trade-off between accuracy and efficiency. In general, fewer bits per weight (like Q4_0) trade some accuracy for a smaller model and faster generation, while more bits per weight (like F16) preserve accuracy but generate tokens more slowly.
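For a rough sense of what these formats mean for memory, the sketch below estimates the size of the weights alone. The bits-per-weight figures for Q8_0 and Q4_0 are assumptions based on the common block layout of 32 weights plus one 16-bit scale (8.5 and 4.5 bits per weight), and the parameter counts are the nominal 7 and 70 billion, so the results are approximations that also ignore the KV cache and runtime overhead.

```python
# Assumed effective bits per weight, including the per-block scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def weights_gb(params_billion, quant):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[quant] / 8

# Nominal parameter counts in billions; actual models differ slightly.
for params in (7, 70):
    for quant in BITS_PER_WEIGHT:
        print(f"{params}B {quant:5s} ~{weights_gb(params, quant):.1f} GB")
```

With these assumptions a 7B model shrinks from about 14 GB at F16 to about 4 GB at Q4_0, and a 70B model from about 140 GB to about 39 GB, which is why quantization often decides whether a model fits on a given machine at all.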

Conclusion

Both the M2 Pro and M2 Ultra are powerful chips, but the choice ultimately depends on your specific AI development needs and budget. The M2 Pro offers a balance of performance and affordability, while the M2 Ultra unleashes the full potential of larger LLMs. By understanding the strengths and weaknesses of each chip, developers can choose the ideal device for their AI journey.

FAQ

What are the key considerations for choosing an appropriate device for running LLMs locally?

Consider the size of the LLM you're working with, your budget, and your specific requirements for processing and generation speed.

What is the impact of quantization on LLM performance?

Quantization can significantly improve LLM performance by reducing model size and enhancing efficiency. However, it might involve some trade-offs in accuracy.

Are there any other Apple silicon chips that are suitable for AI development?

Yes, other Apple silicon chips like the M1 and M2 Max also offer solid performance for AI development.

What are some alternatives to running LLMs locally?

You can also utilize cloud-based solutions like Google Colab and Amazon SageMaker for running LLMs, providing access to powerful GPUs and infrastructure.

Keywords

Apple M2 Pro, Apple M2 Ultra, LLMs, Large Language Models, Token Speed Generation, AI Development, Quantization, F16, Q8_0, Q4_0, Llama 2 7B, Llama 3 8B, Llama 3 70B, GPU Cores, Bandwidth, Processing Speed, Generation Speed, Performance Analysis, Recommendations, Cost-Effective, Powerful, Efficient, Local AI, Cloud-Based AI, Google Colab, Amazon SageMaker