Which Is Better for AI Development: Apple M2 Max (400 GB/s, 30 GPU Cores) or NVIDIA 3080 10GB? Local LLM Token Speed Generation Benchmark

Introduction

Developers and researchers are increasingly running large language models (LLMs) locally on their own machines, enabling faster experimentation and personalized AI applications. Two popular contenders for this task are the Apple M2 Max chip and the NVIDIA 3080 graphics card. In this article, we compare how these devices perform when running LLMs, looking at their strengths and weaknesses. By analyzing real-world benchmarks, we'll offer insights to help you decide which device is better suited for your AI development needs.

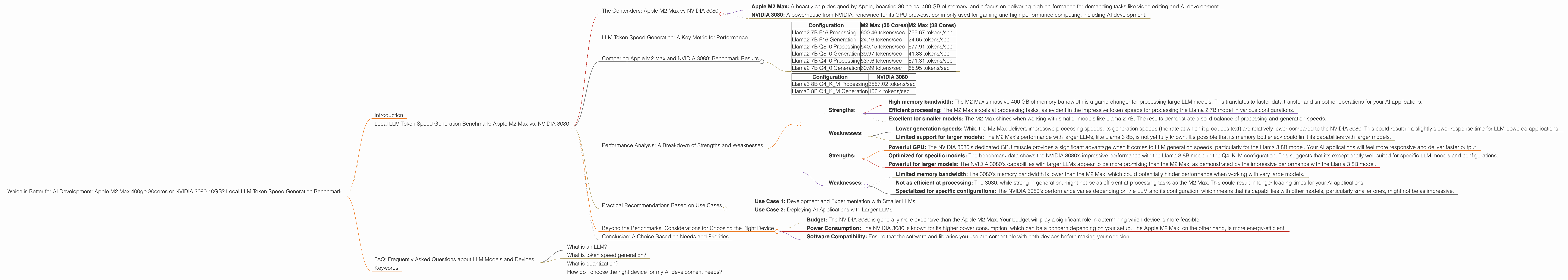

Local LLM Token Speed Generation Benchmark: Apple M2 Max vs. NVIDIA 3080

The Contenders: Apple M2 Max vs NVIDIA 3080

Let's introduce our contenders:

- Apple M2 Max: A beastly chip designed by Apple, boasting 30 GPU cores (a 38-core variant also appears in the benchmarks), 400 GB/s of memory bandwidth, and a focus on delivering high performance for demanding tasks like video editing and AI development.

- NVIDIA 3080: A powerhouse from NVIDIA with 10 GB of dedicated video memory (VRAM), renowned for its GPU prowess and commonly used for gaming and high-performance computing, including AI development.

In this showdown, we'll examine their performance in the crucial aspect of LLM token speed generation, which directly impacts the responsiveness and efficiency of your LLM applications.

LLM Token Speed Generation: A Key Metric for Performance

Before we dive into the benchmark results, let's understand what LLM token speed generation entails. Imagine an LLM like ChatGPT. It receives a prompt, processes the input, and generates text in response. This text is broken down into individual units called tokens, which are the building blocks of language.

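Token speed generation refers to the rate, in tokens per second, at which a device works through these tokens. The benchmarks below report two separate rates: processing, which is how fast the model reads the prompt, and generation, which is how fast it produces new output tokens. Generation is usually the slower of the two and is what you notice as text streams out, so when a device can generate tokens faster, your LLM applications feel snappier and more responsive.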
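To make the metric concrete, here is a minimal sketch of how a generation rate could be measured. It is not the method used for the benchmarks in this article; `fake_generate` is a hypothetical stand-in for a real model call, and `tokens_per_second` is a helper name introduced only for illustration.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one call to `generate` and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    """Hypothetical stand-in for a model: emits 50 tokens, sleeping ~1 ms per token."""
    out = []
    for i in range(50):
        time.sleep(0.001)  # simulated per-token compute time
        out.append(f"tok{i}")
    return out


rate = tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/sec")
```

With a real model you would replace `fake_generate` with the actual inference call and time prompt processing and generation separately, since the two rates differ widely.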

Comparing Apple M2 Max and NVIDIA 3080: Benchmark Results

Okay, let's get to the heart of the matter - the benchmark data. We'll break down the performance of each device for various LLM models and configurations.

Note: The benchmark data provided is for the Llama 2 and Llama 3 models, and the performance figures are measured in tokens per second (tokens/sec).

Apple M2 Max

- Llama2 7B: This 7 billion parameter model is a popular choice for experimenting with LLMs, due to its balance of size and performance.

| Configuration | M2 Max, 30 GPU cores (tokens/sec) | M2 Max, 38 GPU cores (tokens/sec) |
|---|---|---|
| Llama2 7B F16 Processing | 600.46 | 755.67 |
| Llama2 7B F16 Generation | 24.16 | 24.65 |
| Llama2 7B Q8_0 Processing | 540.15 | 677.91 |
| Llama2 7B Q8_0 Generation | 39.97 | 41.83 |
| Llama2 7B Q4_0 Processing | 537.6 | 671.31 |
| Llama2 7B Q4_0 Generation | 60.99 | 65.95 |
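The effect of quantization on generation speed is easier to see as a ratio. The short sketch below takes the generation figures from the M2 Max table and expresses each as a multiple of the F16 result; the helper name `speedup_over_f16` is ours, introduced only for this illustration.

```python
# Generation speeds (tokens/sec) copied from the M2 Max table.
generation = {
    "30 cores": {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99},
    "38 cores": {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95},
}


def speedup_over_f16(speeds):
    """Return each format's generation speed as a multiple of the F16 speed."""
    base = speeds["F16"]
    return {fmt: round(value / base, 2) for fmt, value in speeds.items()}


for chip, speeds in generation.items():
    print(chip, speedup_over_f16(speeds))
```

On both variants, Q8_0 generates roughly 1.7 times as fast as F16 and Q4_0 roughly 2.5 to 2.7 times as fast, while the extra GPU cores barely change generation speed.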

NVIDIA 3080

- Llama3 8B: This 8 billion parameter model comes from the newer Llama 3 family. The only configuration benchmarked on the 3080 is Q4KM (the 4-bit Q4_K_M quantization format).

| Configuration | NVIDIA 3080 (tokens/sec) |
|---|---|
| Llama3 8B Q4KM Processing | 3557.02 |
| Llama3 8B Q4KM Generation | 106.4 |

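Important Note: We don't have benchmark data for the NVIDIA 3080 using F16 or other quantization levels for the Llama 3 8B model, nor for the Llama 3 70B models. We also have no M2 Max figures for Llama 3 8B, so the two devices were measured on different models. The closest comparison available is Llama 2 7B Q4_0 on the M2 Max against Llama 3 8B Q4KM on the 3080, both 4-bit quantizations. Treat it as indicative rather than like-for-like.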
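To see what these rates mean for a single request, the sketch below estimates response time as prompt tokens divided by the processing rate plus output tokens divided by the generation rate. This is a simplified model that ignores model loading and other overhead. The 512-token prompt and 256-token reply are assumed values chosen for illustration, and the two devices are running different models.

```python
def response_time(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Assumed workload: 512-token prompt, 256-token reply.
# Rates (tokens/sec) are the 4-bit results from the tables.
m2_max = response_time(512, 256, processing_tps=537.6, generation_tps=60.99)      # Llama 2 7B Q4_0, 30 cores
rtx_3080 = response_time(512, 256, processing_tps=3557.02, generation_tps=106.4)  # Llama 3 8B Q4KM

print(f"M2 Max (30 cores): {m2_max:.2f} s")
print(f"NVIDIA 3080:       {rtx_3080:.2f} s")
```

Under these assumptions the estimate is about 5.1 seconds on the M2 Max and about 2.5 seconds on the 3080, with generation accounting for most of the time on both.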

Performance Analysis: A Breakdown of Strengths and Weaknesses

Let's analyze the benchmark results and understand the strengths and weaknesses of each device in the context of LLM performance.

Apple M2 Max:

Strengths:

- High memory bandwidth: The M2 Max provides 400 GB/s of memory bandwidth to memory shared by the CPU and GPU. Generating each token requires reading the model's weights from memory, so bandwidth directly affects generation speed.

- Solid prompt processing: The M2 Max processes Llama 2 7B prompts at 537.6 to 755.67 tokens/sec depending on quantization and GPU core count, with the 38-core variant roughly 25% faster than the 30-core one.

- Excellent for smaller models: The M2 Max shines when working with smaller models like Llama 2 7B. The results demonstrate a solid balance of processing and generation speeds.

Weaknesses:

- Lower generation speeds: The M2 Max generates 60.99 to 65.95 tokens/sec on Llama 2 7B Q4_0, compared with 106.4 tokens/sec for the NVIDIA 3080 on Llama 3 8B Q4KM. The models differ, so the gap is only indicative, but it points to slower responses in LLM-powered applications.

- No data for other models: The M2 Max was only benchmarked on Llama 2 7B here, so its performance with Llama 3 8B or larger models is not known. Generation speed can be expected to drop as models grow, because more weights must be read from memory for every token.
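The link between memory bandwidth and generation speed can be sketched with a back-of-the-envelope bound: if every generated token requires reading all the weights once, generation cannot exceed bandwidth divided by model size. The figures below are assumptions for illustration: 400 GB/s of bandwidth, 7 billion parameters, and approximate bits per weight of 16 (F16), 8.5 (Q8_0), and 4.5 (Q4_0).

```python
BANDWIDTH_GB_S = 400.0   # assumed M2 Max memory bandwidth
PARAMS_BILLION = 7.0     # assumed parameter count for Llama 2 7B

# Approximate bits stored per weight for each format (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/sec), M2 Max 30 cores, from the table.
MEASURED = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def ceiling_tps(fmt):
    """Upper bound on tokens/sec if each token reads all weights exactly once."""
    weights_gb = PARAMS_BILLION * BITS_PER_WEIGHT[fmt] / 8
    return BANDWIDTH_GB_S / weights_gb


for fmt, measured in MEASURED.items():
    bound = ceiling_tps(fmt)
    print(f"{fmt}: ceiling {bound:.1f} tok/s, measured {measured} ({measured / bound:.0%} of ceiling)")
```

The measured F16 result sits fairly close to this rough ceiling, which is consistent with generation being limited by memory bandwidth rather than GPU core count. The quantized formats fall further below it, so other costs matter there too.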

NVIDIA 3080:

Strengths:

- Powerful GPU: The NVIDIA 3080's dedicated GPU muscle provides a significant advantage when it comes to LLM generation speeds, particularly for the Llama 3 8B model. Your AI applications will feel more responsive and deliver faster output.

- Strong results on the tested configuration: The 3080 reaches 3557.02 tokens/sec for processing and 106.4 tokens/sec for generation on Llama 3 8B Q4KM. A 4-bit 8B model fits within its 10 GB of VRAM, which is the kind of workload this card handles well.

- Headroom on a slightly larger model: Llama 3 8B is a little larger than the Llama 2 7B model tested on the M2 Max, yet the 3080 still posts the higher numbers. How far that extends is limited by its 10 GB of VRAM, and there is no data here for models beyond 8B.

Weaknesses:

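- Limited memory capacity: The 3080's memory bandwidth (about 760 GB/s) is actually higher than the M2 Max's 400 GB/s, but the card has only 10 GB of VRAM. Models that do not fit in VRAM must run partly from system memory, which can sharply reduce performance when working with very large models.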

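- Dependence on the host system: The 3080 is a discrete card, so model weights must be copied from disk or system RAM into VRAM before inference, which can add to loading times for your AI applications. Prompt processing itself is not a weakness in this data: the 3080 reaches 3557.02 tokens/sec against 537.6 to 755.67 tokens/sec on the M2 Max, though on a different model.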

- Narrow benchmark coverage: Only Llama 3 8B Q4KM was measured on the 3080. Its performance with other models and quantization levels is not covered by this data, so results may differ for your configuration.

Practical Recommendations Based on Use Cases

Use Case 1: Development and Experimentation with Smaller LLMs

For developers who are experimenting with smaller LLMs like Llama 2 7B, the Apple M2 Max is a solid choice. It runs the model in F16, Q8_0, and Q4_0, with generation speeds ranging from about 24 to 66 tokens/sec. Note that these benchmarks measure inference only and say nothing about training or fine-tuning speed. Even though its generation speeds are lower than the 3080's, it's a well-rounded option for initial experimentation.

Use Case 2: Deploying AI Applications with Larger LLMs

If you need to deploy AI applications that depend on fast responses from a model like Llama 3 8B, the NVIDIA 3080 is the clear winner in this data, generating 106.4 tokens/sec on the Q4KM configuration. However, keep in mind that this is the only configuration measured, and that the card's 10 GB of VRAM limits which models and quantization levels will fit.

Beyond the Benchmarks: Considerations for Choosing the Right Device

While the benchmark data offers valuable insights, it's crucial to consider other factors when choosing the right device for your AI development needs.

- Budget: The two are not priced like-for-like. The M2 Max is only sold as part of a complete Mac, while the NVIDIA 3080 is a discrete card that also needs a desktop PC around it. Compare total system cost rather than the chip or card alone.

- Power Consumption: The NVIDIA 3080 is known for its higher power consumption, which can be a concern depending on your setup. The Apple M2 Max, on the other hand, is more energy-efficient.

- Software Compatibility: Check that the software and libraries you rely on support the device you choose. The NVIDIA 3080 runs GPU workloads through CUDA, while the M2 Max uses Apple's Metal, and not every tool supports both.

Conclusion: A Choice Based on Needs and Priorities

The choice between an Apple M2 Max and NVIDIA 3080 for running LLMs locally ultimately boils down to your specific needs and priorities. The M2 Max is an energy-efficient, self-contained option that handles Llama 2 7B well across F16, Q8_0, and Q4_0, making it a good fit for smaller LLMs and development workflows. The NVIDIA 3080 delivers the higher processing and generation speeds in this data, suited for deploying applications where response time is critical, as long as the model fits in its 10 GB of VRAM.

FAQ: Frequently Asked Questions about LLM Models and Devices

What is an LLM?

An LLM is a large language model, a type of artificial intelligence that excels at understanding and generating human-like text. Think of LLMs as incredibly intelligent chatbots powered by vast datasets of text and code. Examples include ChatGPT, Bard, and various open-source models like Llama.

What is token speed generation?

Token speed generation refers to the rate, measured in tokens per second, at which an LLM reads prompt tokens (processing) and produces output tokens (generation). The faster the token speed, the more responsive and efficient your LLM application will be.

What is quantization?

Quantization is a technique used to reduce the size of LLM models, making them more memory-efficient and potentially faster to run. It involves converting the model's parameters (numbers that represent the model's knowledge) into a smaller format, often using fewer bits. This trade-off between accuracy and memory efficiency allows you to run larger models on devices with limited memory.
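As a rough illustration of that trade-off, the sketch below estimates the weight size of a 7 billion parameter model in each format and checks it against a 10 GB memory budget. The bits-per-weight values are approximations, and the 1.5 GB allowance for context and runtime buffers is an assumed figure, not a measurement.

```python
# Approximate bits stored per weight for each format (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params_billion, fmt):
    """Approximate size of the model weights in gigabytes."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion, fmt, memory_gb, overhead_gb=1.5):
    """True if weights plus an assumed runtime overhead fit in memory_gb."""
    return weights_gb(params_billion, fmt) + overhead_gb <= memory_gb


for fmt in BITS_PER_WEIGHT:
    size = weights_gb(7, fmt)
    print(f"7B {fmt}: ~{size:.1f} GB, fits in 10 GB: {fits(7, fmt, 10)}")
```

Under these assumptions a 7B model in F16 (about 14 GB) does not fit in 10 GB, while the Q8_0 and Q4_0 versions do.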

How do I choose the right device for my AI development needs?

Consider the size of the LLMs you plan to work with and whether they fit in the device's memory, along with your budget, power consumption requirements, and software compatibility. In the benchmarks here, the M2 Max is a capable option for development with smaller LLMs, while the NVIDIA 3080 is faster for quantized models that fit in its 10 GB of VRAM.

Keywords

LLM, large language model, Apple M2 Max, NVIDIA 3080, token speed generation, benchmark, performance, processing, generation, Llama 2, Llama 3, quantization, AI development, GPU, CPU, memory bandwidth, developer, geek, local LLM, AI application, deployment, budget, power consumption, software compatibility.