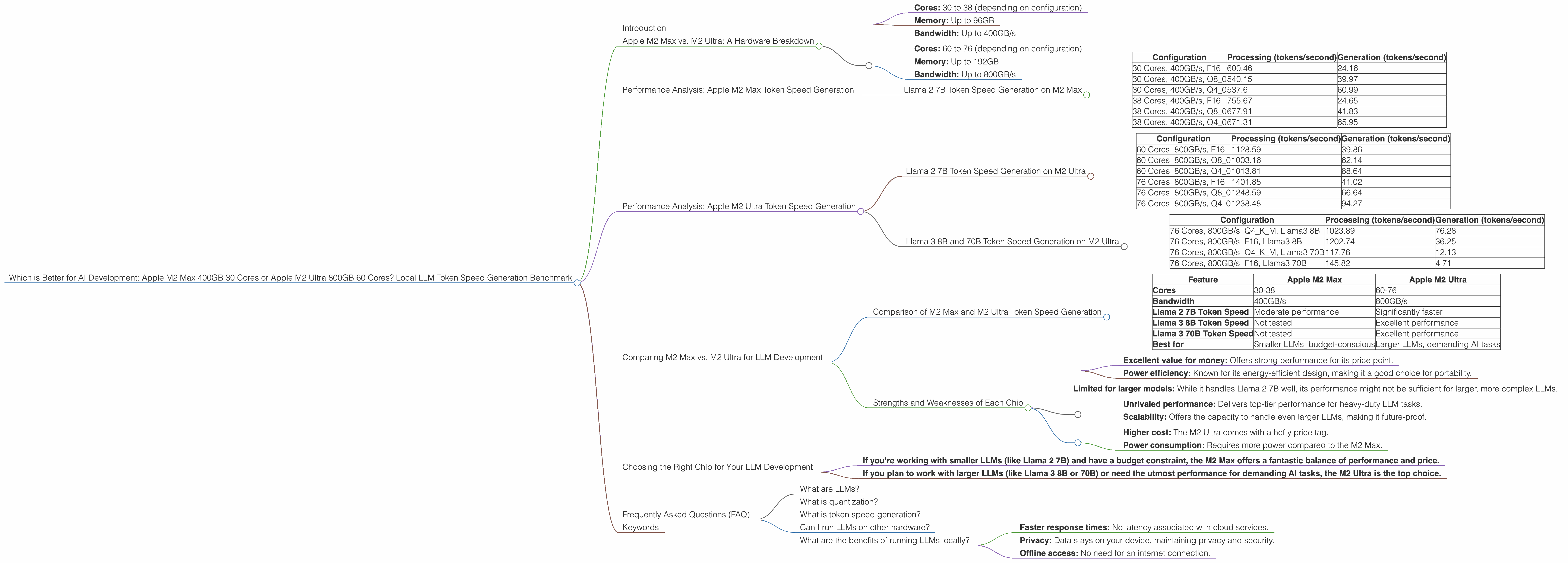

Which Is Better for AI Development: Apple M2 Max (400GB/s, 30 GPU Cores) or Apple M2 Ultra (800GB/s, 60 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of Artificial Intelligence (AI) is evolving rapidly, and large language models (LLMs) are at the forefront of this revolution. These AI systems can generate text, translate languages, write different kinds of creative content, and answer questions. Running them, however, requires significant computational resources, particularly memory capacity and memory bandwidth. This is where powerful hardware like Apple's M2 Max and M2 Ultra chips comes into play.

This article explores the performance of these two top-tier Apple silicon chips for running LLMs locally. We compare the token generation speed of several LLMs on both chips, highlighting their strengths and weaknesses, to help developers choose the right hardware for their AI development projects.

Apple M2 Max vs. M2 Ultra: A Hardware Breakdown

Before diving into the benchmark results, let's understand the key differences between the M2 Max and M2 Ultra chips.

Apple M2 Max:

- GPU cores: 30 or 38 (depending on configuration)

- Memory: Up to 96GB of unified memory

- Memory bandwidth: 400GB/s

Apple M2 Ultra:

- GPU cores: 60 or 76 (depending on configuration)

- Memory: Up to 192GB of unified memory

- Memory bandwidth: 800GB/s

The M2 Ultra is essentially two M2 Max dies connected together, which doubles the GPU core count, the maximum memory, and the memory bandwidth. For running LLMs this matters in two ways: more GPU cores speed up prompt processing, which is compute-bound, while more memory bandwidth speeds up token generation, which is largely limited by how fast the model's weights can be read from memory.
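To see why memory bandwidth matters so much for generation, it helps to estimate a ceiling: if producing each token requires reading every weight from memory once, generation speed cannot exceed bandwidth divided by model size. The sketch below is a back-of-the-envelope estimate under that simplifying assumption. It ignores the KV cache and compute overhead, and the parameter count for Llama 2 7B (about 6.7 billion) is an approximation.

```python
# Back-of-the-envelope ceiling on generation speed for a memory-bandwidth-bound model.
# Assumption: every generated token reads all weights from memory exactly once;
# KV-cache traffic, activations, and compute overhead are ignored.

def model_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def max_tokens_per_second(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on tokens/s: bytes per second divided by bytes read per token."""
    return bandwidth_gb_s / size_gb

# Assumed parameter count for Llama 2 7B (approximately 6.7 billion).
llama2_7b_f16 = model_size_gb(6.7, 16)  # F16 stores 16 bits per weight

for chip, bandwidth in [("M2 Max", 400), ("M2 Ultra", 800)]:
    ceiling = max_tokens_per_second(bandwidth, llama2_7b_f16)
    print(f"{chip}: at most ~{ceiling:.0f} tokens/s for Llama 2 7B in F16")
```

With these assumptions the ceilings come out to roughly 30 tokens/s on the M2 Max and 60 tokens/s on the M2 Ultra. The measured F16 results later in this article (24.16 and 39.86 tokens/s on the 30- and 60-core chips) stay below both, with the M2 Max reaching about 81% of its ceiling and the M2 Ultra about 67%.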

Performance Analysis: Apple M2 Max Token Generation Speed

Llama 2 7B Token Generation Speed on M2 Max

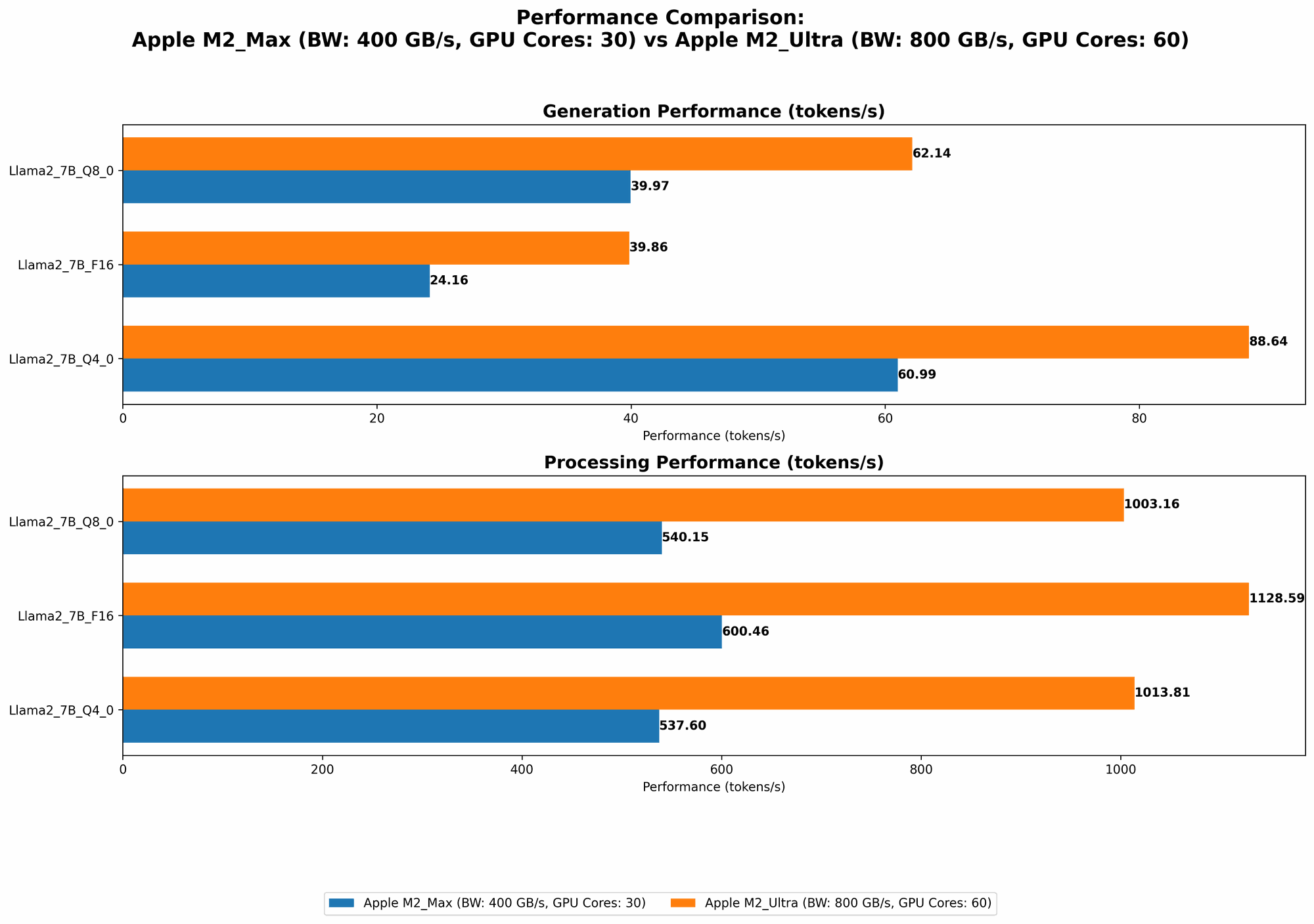

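The Apple M2 Max delivers strong performance for running LLMs locally. Token speed, measured in tokens per second, is a crucial metric for developers. A token is a unit of text such as a word, part of a word, or a punctuation mark. The tables below report two speeds: processing (how fast the model reads the input prompt) and generation (how fast it produces new tokens, one at a time).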

Here's a breakdown of token speeds for Llama 2 7B on the M2 Max (in tokens per second):

| Configuration | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| 30 Cores, 400GB/s, F16 | 600.46 | 24.16 |
| 30 Cores, 400GB/s, Q8_0 | 540.15 | 39.97 |
| 30 Cores, 400GB/s, Q4_0 | 537.6 | 60.99 |
| 38 Cores, 400GB/s, F16 | 755.67 | 24.65 |
| 38 Cores, 400GB/s, Q8_0 | 677.91 | 41.83 |
| 38 Cores, 400GB/s, Q4_0 | 671.31 | 65.95 |

Key Observations:

- Quantization benefits: Quantization reduces the size of a model by representing its weights with fewer bits. On the M2 Max, Q8_0 (8-bit quantization) and Q4_0 (4-bit quantization) raise generation speed substantially compared with F16 (16-bit half-precision floating point): on the 30-core chip, from 24.16 to 60.99 tokens/s with Q4_0, roughly 2.5x. Prompt processing, by contrast, is roughly 10% slower with the quantized formats than with F16.

- Performance scaling: Moving from 30 to 38 GPU cores speeds up prompt processing by about 25% (600.46 to 755.67 tokens/s in F16), close to the 27% increase in core count. Generation improves by only 2-8%, because both configurations share the same 400GB/s memory bandwidth.
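These observations can be checked directly against the table. The short script below copies the M2 Max numbers into dictionaries and computes two ratios: how much faster Q4_0 generates than F16, and how much the 38-core configuration gains over the 30-core one. It is only arithmetic on the published figures, not a benchmark harness.

```python
# Llama 2 7B results on the M2 Max (tokens/s), copied from the table above.
m2_max_processing = {
    30: {"F16": 600.46, "Q8_0": 540.15, "Q4_0": 537.6},
    38: {"F16": 755.67, "Q8_0": 677.91, "Q4_0": 671.31},
}
m2_max_generation = {
    30: {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99},
    38: {"F16": 24.65, "Q8_0": 41.83, "Q4_0": 65.95},
}

# Quantization: how much faster Q4_0 generates than F16 at each core count.
for cores, speeds in m2_max_generation.items():
    print(f"{cores} cores: Q4_0 generates {speeds['Q4_0'] / speeds['F16']:.2f}x faster than F16")

# Core scaling: 38-core vs 30-core speed for each format.
for fmt in ("F16", "Q8_0", "Q4_0"):
    pp_gain = m2_max_processing[38][fmt] / m2_max_processing[30][fmt]
    tg_gain = m2_max_generation[38][fmt] / m2_max_generation[30][fmt]
    print(f"{fmt}: processing {pp_gain:.2f}x, generation {tg_gain:.2f}x (38 vs 30 cores)")
```

Running it shows Q4_0 generating roughly 2.5-2.7x faster than F16, and the extra cores adding about 25% to processing but only 2-8% to generation.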

Performance Analysis: Apple M2 Ultra Token Generation Speed

Llama 2 7B Token Generation Speed on M2 Ultra

The Apple M2 Ultra, with double the GPU cores and memory bandwidth of the M2 Max, is a powerhouse for running LLMs. Here is how it performs with Llama 2 7B:

| Configuration | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| 60 Cores, 800GB/s, F16 | 1128.59 | 39.86 |
| 60 Cores, 800GB/s, Q8_0 | 1003.16 | 62.14 |
| 60 Cores, 800GB/s, Q4_0 | 1013.81 | 88.64 |
| 76 Cores, 800GB/s, F16 | 1401.85 | 41.02 |
| 76 Cores, 800GB/s, Q8_0 | 1248.59 | 66.64 |
| 76 Cores, 800GB/s, Q4_0 | 1238.48 | 94.27 |

Key Observations:

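- Close to double the processing speed: In prompt processing, the M2 Ultra runs about 1.84-1.89x faster than the M2 Max with the matching core configuration (for example, 1128.59 vs. 600.46 tokens/s in F16).

- Smaller gains in generation: Generation speed rises by about 1.4-1.7x rather than 2x (39.86 vs. 24.16 tokens/s in F16, 88.64 vs. 60.99 in Q4_0), so doubling the bandwidth does not double generation speed in these results.

- Quantization advantage: As on the M2 Max, quantization boosts generation speed (88.64 vs. 39.86 tokens/s for Q4_0 vs. F16 on 60 cores), while prompt processing is roughly 10% slower than with F16.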
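The same arithmetic applied to both Llama 2 7B tables shows how the M2 Ultra's advantage compares with an even 2x. This sketch compares the 60-core M2 Ultra with the 30-core M2 Max for each format, using only the numbers reported in the tables.

```python
# Llama 2 7B results (tokens/s) as (processing, generation), copied from the tables.
m2_max_30 = {"F16": (600.46, 24.16), "Q8_0": (540.15, 39.97), "Q4_0": (537.6, 60.99)}
m2_ultra_60 = {"F16": (1128.59, 39.86), "Q8_0": (1003.16, 62.14), "Q4_0": (1013.81, 88.64)}

def speedup(fmt: str) -> tuple[float, float]:
    """Return the (processing, generation) speedup of the M2 Ultra over the M2 Max."""
    (pp_max, tg_max), (pp_ultra, tg_ultra) = m2_max_30[fmt], m2_ultra_60[fmt]
    return pp_ultra / pp_max, tg_ultra / tg_max

for fmt in m2_max_30:
    pp, tg = speedup(fmt)
    print(f"{fmt}: processing {pp:.2f}x, generation {tg:.2f}x")
```

For these rows, prompt processing speeds up by about 1.86-1.89x, while generation speeds up by about 1.45-1.65x, with the gap widening as the format gets smaller.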

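Llama 3 8B and 70B Token Generation Speed on M2 Ultra

The M2 Ultra was also benchmarked with Llama 3 8B and Llama 3 70B. The 70B model needs far more memory than a 7B or 8B model, and the M2 Ultra's 192GB of unified memory is large enough to hold it even in F16: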

| Configuration | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| 76 Cores, 800GB/s, Q4_K_M, Llama 3 8B | 1023.89 | 76.28 |
| 76 Cores, 800GB/s, F16, Llama 3 8B | 1202.74 | 36.25 |
| 76 Cores, 800GB/s, Q4_K_M, Llama 3 70B | 117.76 | 12.13 |
| 76 Cores, 800GB/s, F16, Llama 3 70B | 145.82 | 4.71 |

Key Observations:

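- Larger model performance: Llama 3 8B runs slightly slower than Llama 2 7B on the same chip. Llama 3 70B is roughly 6-9x slower than Llama 3 8B: 117.76 tokens/s processing and 12.13 tokens/s generation with Q4_K_M, and only 4.71 tokens/s generation in F16.

- Quantization impact: Quantization matters even more for the larger model. Q4_K_M generates about 2.1x faster than F16 for Llama 3 8B (76.28 vs. 36.25 tokens/s) and about 2.6x faster for Llama 3 70B (12.13 vs. 4.71 tokens/s), although F16 remains faster at prompt processing.

- Q4_K_M is one of llama.cpp's 4-bit "k-quant" formats (the M denotes the medium variant). For Llama 3 70B it brings generation to about 12 tokens/s, compared with under 5 tokens/s in F16.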
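Memory capacity is the other reason a 70B model is a better fit for the M2 Ultra. A rough way to reason about it is: weights in GB are approximately parameters (in billions) times bits per weight divided by 8. The sketch below applies that rule. The parameter count (about 70.6 billion), the average of about 4.8 bits per weight for Q4_K_M, and the 75% usable-memory fraction are all assumptions chosen for illustration, and the KV cache is ignored.

```python
# Rough check of whether a model's weights fit in unified memory.
# All constants below are illustrative assumptions, not measured values:
#   - Llama 3 70B has about 70.6 billion parameters.
#   - Q4_K_M averages roughly 4.8 bits per weight.
#   - About 75% of unified memory is usable for the model (placeholder fraction).
# The KV cache and other runtime buffers are ignored.

LLAMA3_70B_PARAMS_BILLION = 70.6

def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: parameters x bits per weight / 8."""
    return params_billion * bits_per_weight / 8

def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the usable share of memory."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb * usable_fraction

for fmt, bits in [("F16", 16), ("Q4_K_M", 4.8)]:
    size = weights_gb(LLAMA3_70B_PARAMS_BILLION, bits)
    on_max = fits(LLAMA3_70B_PARAMS_BILLION, bits, 96)
    on_ultra = fits(LLAMA3_70B_PARAMS_BILLION, bits, 192)
    print(f"Llama 3 70B {fmt}: ~{size:.0f} GB, fits M2 Max (96GB): {on_max}, "
          f"fits M2 Ultra (192GB): {on_ultra}")
```

Under these assumptions the F16 weights (about 141GB) fit only on the 192GB M2 Ultra, while the 4-bit version (about 42GB) fits on both chips. The same figure is consistent with the slow F16 generation: 800GB/s divided by about 141GB gives a ceiling of under 6 tokens/s, and the measured result is 4.71.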

Think of it this way: The M2 Ultra is like a super-fast race car, while the M2 Max is a highly capable sports car. Both are excellent performers, but if you need top-tier speed and the capacity to pull off complex maneuvers, the M2 Ultra is the clear winner.

Comparing M2 Max vs. M2 Ultra for LLM Development

Comparison of M2 Max and M2 Ultra Token Generation Speed

Here's a quick comparison summary, highlighting the key takeaways based on the data:

| Feature | Apple M2 Max | Apple M2 Ultra |
|---|---|---|
| GPU cores | 30-38 | 60-76 |
| Memory bandwidth | 400GB/s | 800GB/s |
| Maximum memory | 96GB | 192GB |
| Llama 2 7B generation (tokens/s) | 24.16-65.95 | 39.86-94.27 |
| Llama 3 8B generation (tokens/s) | Not tested | 36.25 (F16), 76.28 (Q4_K_M) |
| Llama 3 70B generation (tokens/s) | Not tested | 4.71 (F16), 12.13 (Q4_K_M) |
| Best for | Smaller LLMs, budget-conscious | Larger LLMs, demanding AI tasks |

Strengths and Weaknesses of Each Chip

Apple M2 Max:

Strengths:

- Excellent value for money: Offers strong performance for its price point.

- Power efficiency: Draws less power than the M2 Ultra and, unlike it, is available in the MacBook Pro, making it the better choice for portability.

Weaknesses:

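- Limited for larger models: While it handles Llama 2 7B well, its maximum of 96GB of memory cannot hold a 70B model in F16 (roughly 140GB of weights), and with half the bandwidth of the M2 Ultra it generates tokens more slowly in every configuration tested.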

Apple M2 Ultra:

Strengths:

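- Top-tier performance: Delivers the fastest results in these benchmarks, roughly 1.4-1.9x the speed of the M2 Max depending on the workload.

- Headroom for large models: Its 192GB of memory can hold models that do not fit on the M2 Max, such as Llama 3 70B in F16, leaving room for larger models in the future.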

Weaknesses:

- Higher cost: The M2 Ultra comes with a hefty price tag.

- Power consumption: Requires more power compared to the M2 Max.

Choosing the Right Chip for Your LLM Development

The choice between the M2 Max and M2 Ultra boils down to your specific needs and budget.

- If you're working with smaller LLMs (like Llama 2 7B) and have a budget constraint, the M2 Max offers a fantastic balance of performance and price.

- If you plan to work with larger LLMs (like Llama 3 70B) or need the utmost performance for demanding AI tasks, the M2 Ultra is the top choice.

Remember, the M2 Ultra is a significant investment, so carefully assess your needs before making a decision.

Frequently Asked Questions (FAQ)

What are LLMs?

LLMs are a type of AI model that specializes in understanding and generating human-like text. They have revolutionized various fields, including language translation, content creation, and customer support.

What is quantization?

Quantization is a technique that reduces the size of a model by representing its weights with fewer bits, for example 8 or 4 bits instead of 16. Smaller weights mean less data to read from memory for every generated token, which is why quantized models generate text faster, usually at the cost of a small loss in accuracy. Imagine it like compressing a large photo so it takes up less space on your phone but still looks good enough.
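To make the idea concrete, here is a simplified sketch of 8-bit block quantization in the spirit of llama.cpp's Q8_0 format, which splits weights into blocks of 32 and stores one 16-bit scale per block alongside 32 signed 8-bit values. This is an illustrative reimplementation, not the library's code: it keeps the scale as a regular Python float and uses Python's built-in rounding.

```python
# Simplified 8-bit block quantization, modeled loosely on llama.cpp's Q8_0 layout:
# blocks of 32 weights, one scale per block, 32 signed 8-bit integers.
# Illustrative only: the real format stores the scale as a 16-bit float.

BLOCK_SIZE = 32

def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 127 if amax > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, q

def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats by multiplying back by the scale."""
    return [scale * x for x in q]

def bits_per_weight(quant_bits: int = 8, block_size: int = BLOCK_SIZE,
                    scale_bits: int = 16) -> float:
    """Storage cost per weight, including the per-block 16-bit scale."""
    return (block_size * quant_bits + scale_bits) / block_size

weights = [0.01 * (i - 16) for i in range(BLOCK_SIZE)]  # toy values from -0.16 to 0.15
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.5f}, max error={max_error:.5f}, bits/weight={bits_per_weight()}")
```

The `bits_per_weight` helper shows where the overhead of the scale comes from: 8.5 bits per weight for 8-bit values and 4.5 for 4-bit values in 32-weight blocks, compared with 16 for F16. The rounding error per weight stays within half a scale step.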

What is token speed generation?

Token speed is the rate, in tokens per second, at which an LLM reads input tokens (prompt processing) or produces output tokens (generation). It's like the number of words a person can type per minute, but for AI models.
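Because the benchmark reports two separate speeds, the time you actually wait for an answer depends on both: the prompt is processed first, then the answer is generated token by token. The sketch below adds the two phases together. The prompt length (1,000 tokens) and answer length (500 tokens) are hypothetical, and model loading time is ignored.

```python
# Estimate end-to-end response time from the two reported speeds.
# Prompt and answer lengths are hypothetical; model load time is ignored.

def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Time to read the prompt plus time to generate the answer, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 3 70B, Q4_K_M, 76-core M2 Ultra (from the benchmark tables in this article).
t_70b = response_time_s(1000, 500, processing_tps=117.76, generation_tps=12.13)
# Llama 2 7B, Q4_0, 38-core M2 Max (from the benchmark tables in this article).
t_7b = response_time_s(1000, 500, processing_tps=671.31, generation_tps=65.95)

print(f"Llama 3 70B on M2 Ultra: {t_70b:.1f} s")
print(f"Llama 2 7B on M2 Max: {t_7b:.1f} s")
```

With those lengths, Llama 3 70B (Q4_K_M) on the 76-core M2 Ultra takes roughly 50 seconds, most of it spent generating, while Llama 2 7B (Q4_0) on the 38-core M2 Max finishes in about 9 seconds.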

Can I run LLMs on other hardware?

Yes! There are various options for running LLMs, including GPUs (Graphics Processing Units) from NVIDIA and AMD, as well as cloud computing services like Google Cloud and AWS.

What are the benefits of running LLMs locally?

- No network latency: Responses don't depend on network round trips or on the load of a shared cloud service.

- Privacy: Data stays on your device, maintaining privacy and security.

- Offline access: No need for an internet connection.

Keywords

Apple M2 Max, Apple M2 Ultra, LLM, Large Language Models, Token Generation Speed, Llama 2, Llama 3, Quantization, F16, Q8_0, Q4_0, Q4_K_M, AI Development, Performance Benchmark, Local LLM, AI Hardware, GPU, CPU, GPU Benchmarks, AI Performance, Inference, Processing, Generation, Model Size, Bandwidth, Cores.