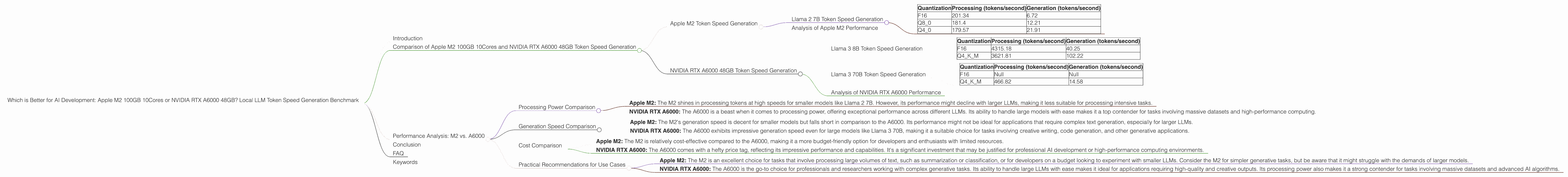

Which is Better for AI Development: Apple M2 100GB 10Cores or NVIDIA RTX A6000 48GB? Local LLM Token Speed Generation Benchmark

Introduction

In the thrilling world of AI, the quest for faster and more efficient ways to run Large Language Models (LLMs) is a never-ending race. Whether you're a seasoned developer or just starting your AI journey, choosing the right hardware is crucial for unlocking their potential. Today we're stepping into the ring with two heavyweights: the Apple M2 100GB 10Cores and the NVIDIA RTX A6000 48GB. We'll be comparing their performance on local LLM token generation speed, using real-world benchmarks to see which chip reigns supreme.

Imagine you're working on a groundbreaking chatbot that can translate languages in real-time, write poetry on demand, or even create realistic dialogue for your favorite video game. You need a device with the power to handle the complex computations involved in running these LLMs, and that's where these two titans enter the scene.

So, buckle up, grab your caffeinated beverage of choice, and let's embark on this thrilling journey to see who emerges victorious!

Comparison of Apple M2 100GB 10Cores and NVIDIA RTX A6000 48GB Token Speed Generation

Apple M2 Token Speed Generation

The Apple M2 tested here, with 100 GB/s of memory bandwidth and a 10-core GPU (the "100GB" and "10Cores" in its label), is a capable chip in its own right. It's known for its exceptional energy efficiency and strong performance on tasks like video editing and machine learning. But how does it fare when it comes to crunching the numbers for LLMs?

Llama 2 7B Token Speed Generation

Let's start with the smaller model, Llama 2 7B. The M2 demonstrates impressive performance across various quantization levels:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 201.34 | 6.72 |
| Q8_0 | 181.4 | 12.21 |
| Q4_0 | 179.57 | 21.91 |

The data shows the M2 processing prompt tokens at roughly 180 to 200 tokens per second across all three formats. Generation is much slower, as is typical for LLM inference, largely because every new token requires reading the model weights again. It does improve markedly as the weights shrink, from 6.72 tokens/second at F16 to 21.91 at Q4_0. This suggests that the M2 might be better suited to tasks dominated by reading input, like text summarization or classification, than to long-form text generation.
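One way to sanity-check the generation numbers is to estimate how many bytes of weights are read per second. The sketch below assumes roughly 6.74 billion parameters for Llama 2 7B and nominal bits-per-weight figures for each format (16 for F16, 8.5 for Q8_0, 4.5 for Q4_0). These values are assumptions brought in for illustration, not part of the benchmark data, and the estimate ignores the KV cache and activations.

```python
# Rough estimate of effective memory throughput during generation.
# Assumptions (not from the benchmark): parameter count and bits per weight.
PARAMS = 6.74e9  # approximate parameter count of Llama 2 7B

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Generation speeds (tokens/second) from the M2 table above.
M2_GENERATION_TPS = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def effective_bandwidth_gbps(tokens_per_second: float, size_gb: float) -> float:
    """Weights read per second if each token touches every weight once."""
    return tokens_per_second * size_gb


for fmt, tps in M2_GENERATION_TPS.items():
    size = model_size_gb(PARAMS, BITS_PER_WEIGHT[fmt])
    bandwidth = effective_bandwidth_gbps(tps, size)
    print(f"{fmt}: ~{size:.1f} GB of weights, ~{bandwidth:.0f} GB/s effective")
```

Under these assumptions all three formats land in the same range, roughly 83 to 91 GB/s, which is what one would expect if generation on the M2 is limited by memory bandwidth rather than by compute.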

Analysis of Apple M2 Performance

In short, the M2 shines in processing tokens swiftly for smaller LLMs. For tasks like text summarization or classification, it's a solid choice. However, for tasks that involve a higher volume of text generation, it may struggle to keep up with the demands of larger LLMs.

NVIDIA RTX A6000 48GB Token Speed Generation

Now, let's turn our attention to the heavyweight champ, the NVIDIA RTX A6000 48GB. This behemoth is renowned for its incredible GPU power, making it a popular choice for AI development and high-performance computing. But how does it stack up against the M2 when it comes to token speed generation?

Llama 3 8B Token Speed Generation

The A6000 showcases its strength with the Llama 3 8B model:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 4315.18 | 40.25 |
| Q4_K_M | 3621.81 | 102.22 |

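Here, the A6000 posts far higher numbers than the M2 in both processing and generation, though the two devices were benchmarked on different models (Llama 3 8B here, Llama 2 7B on the M2), so the comparison is indicative rather than exact. F16, which is unquantized half precision, already reaches 40.25 tokens/second for generation, and Q4_K_M quantization raises that to 102.22 at the cost of somewhat lower processing throughput (3621.81 versus 4315.18 tokens/second). This indicates that the A6000 is well-equipped to handle generative workloads on models of this size.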
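To put the effect of quantization in numbers, the short sketch below computes how much generation speeds up when moving from F16 to the 4-bit format on each device, using only figures from the tables above. The dictionary layout and the function name are illustrative choices.

```python
# Generation speed (tokens/second) at F16 and at the 4-bit format,
# copied from the benchmark tables above.
GENERATION_TPS = {
    "M2, Llama 2 7B": {"F16": 6.72, "4-bit": 21.91},       # 4-bit row of the M2 table
    "A6000, Llama 3 8B": {"F16": 40.25, "4-bit": 102.22},  # 4-bit row of the A6000 table
}


def speedup(before: float, after: float) -> float:
    """Return how many times faster `after` is than `before`."""
    return after / before


for label, tps in GENERATION_TPS.items():
    factor = speedup(tps["F16"], tps["4-bit"])
    print(f"{label}: {factor:.2f}x faster generation at 4-bit")
```

Both devices gain substantially from 4-bit weights, about 3.3x on the M2 and about 2.5x on the A6000, which is why quantized models are the usual choice for local inference.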

Llama 3 70B Token Speed Generation

Let's see how the A6000 tackles the larger Llama 3 70B model:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | N/A | N/A |
| Q4_K_M | 466.82 | 14.58 |

No F16 result is available, most likely because the F16 weights of a 70B model are far larger than the card's 48 GB of memory. With Q4_K_M quantization, the A6000 still processes 466.82 tokens/second and generates 14.58 tokens/second. Both figures are roughly seven to eight times lower than for the 8B model, which is in line with the 70B model having almost nine times as many parameters.
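A quick back-of-the-envelope calculation helps explain why a 70B model is practical on this card only when quantized. The sketch assumes about 70.6 billion parameters for Llama 3 70B and roughly 4.85 bits per weight for Q4_K_M (the 4-bit format in the table). Both figures are approximations introduced here, and the estimate ignores the KV cache and runtime overhead.

```python
VRAM_GB = 48.0  # memory of the RTX A6000, as given in its name

# Assumed values for illustration (not from the benchmark data).
PARAMS_70B = 70.6e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for fmt, bits in BITS_PER_WEIGHT.items():
    size = weights_gb(PARAMS_70B, bits)
    verdict = "fits" if size <= VRAM_GB else "does not fit"
    print(f"{fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

With these assumptions the F16 weights come to about 141 GB, roughly three times the available memory, while the 4-bit weights come to about 43 GB and leave only a few gigabytes of headroom.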

Analysis of NVIDIA RTX A6000 Performance

In summary, the A6000 delivers high throughput on the 8B model and remains usable on the 70B model once it is quantized. That range makes it a versatile choice for various AI development needs, including complex generative tasks.

Performance Analysis: M2 vs. A6000

Processing Power Comparison

- Apple M2: The M2 processes prompts at roughly 180 to 200 tokens per second on Llama 2 7B. No larger model was benchmarked on it here, but throughput can be expected to fall as model size grows, which makes it less suitable for processing-intensive tasks.

- NVIDIA RTX A6000: The A6000 is a beast when it comes to processing power, ranging from about 470 tokens/second on Llama 3 70B (Q4_K_M) to over 4,300 tokens/second on Llama 3 8B (F16). Its ability to handle large models makes it a top contender for tasks involving massive datasets and high-performance computing.

Think of the A6000 as a race car built for speed and power, while the M2 is more like a sleek sports car built for efficiency and agility. The A6000 can handle the toughest tasks with ease, while the M2 excels in specific niches.

Generation Speed Comparison

- Apple M2: The M2's generation speed is decent for smaller models, peaking at 21.91 tokens/second on Llama 2 7B with Q4_0, but falls short in comparison to the A6000. Its performance might not be ideal for applications that require long or complex text generation, especially with larger LLMs.

- NVIDIA RTX A6000: The A6000 generates over 100 tokens/second on Llama 3 8B with Q4_K_M and still manages 14.58 tokens/second on Llama 3 70B, making it a suitable choice for tasks involving creative writing, code generation, and other generative applications.
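To translate these rates into something more tangible, the sketch below estimates the end-to-end time for a single request: prompt tokens are consumed at the processing rate and output tokens are produced at the generation rate. The 512-token prompt and 128-token reply are arbitrary example values, and the simple additive model is an assumption that ignores model loading and other overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Benchmark:
    label: str
    processing_tps: float  # prompt tokens per second, from the tables above
    generation_tps: float  # generated tokens per second, from the tables above

    def request_seconds(self, prompt_tokens: int, output_tokens: int) -> float:
        """Estimated time to read the prompt and then generate the reply."""
        return (prompt_tokens / self.processing_tps
                + output_tokens / self.generation_tps)


# 4-bit rows of each benchmark table.
BENCHMARKS = [
    Benchmark("M2, Llama 2 7B, 4-bit", 179.57, 21.91),
    Benchmark("A6000, Llama 3 8B, 4-bit", 3621.81, 102.22),
    Benchmark("A6000, Llama 3 70B, 4-bit", 466.82, 14.58),
]

for bench in BENCHMARKS:
    seconds = bench.request_seconds(prompt_tokens=512, output_tokens=128)
    print(f"{bench.label}: ~{seconds:.1f} s per request")
```

Under these assumptions the 8B model on the A6000 answers in under two seconds, while the 7B model on the M2 and the 70B model on the A6000 both take a little under ten seconds. In every case most of the time goes to generation rather than prompt processing.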

Cost Comparison

- Apple M2: The M2 is relatively cost-effective compared to the A6000, making it a more budget-friendly option for developers and enthusiasts with limited resources.

- NVIDIA RTX A6000: The A6000 comes with a hefty price tag, reflecting its impressive performance and capabilities. It's a significant investment that may be justified for professional AI development or high-performance computing environments.

Practical Recommendations for Use Cases

- Apple M2: The M2 is an excellent choice for tasks that involve processing large volumes of text, such as summarization or classification, or for developers on a budget looking to experiment with smaller LLMs. Consider the M2 for simpler generative tasks, but be aware that it might struggle with the demands of larger models.

- NVIDIA RTX A6000: The A6000 is the go-to choice for professionals and researchers working with complex generative tasks. Its ability to handle large LLMs with ease makes it ideal for applications requiring high-quality and creative outputs. Its processing power also makes it a strong contender for tasks involving massive datasets and advanced AI algorithms.

Conclusion

Both the Apple M2 and the NVIDIA RTX A6000 are powerful pieces of hardware with their unique strengths and weaknesses. Ultimately, the best device for your AI development journey depends on your specific needs and budget. The M2 offers a more budget-friendly option with enough power to handle smaller LLMs, while the A6000 stands as the king of power and performance, ready to tackle the most demanding tasks with ease.

FAQ

Q: What is quantization and how does it affect LLM performance?

A: Quantization is a technique that shrinks an LLM by storing its weights with fewer bits, for example 8 or 4 bits instead of 16. Think of it like saving a photo at a lower resolution: the file is much smaller and quicker to load, but some fine detail is lost. In practice this means lower memory consumption and faster generation, at the cost of a slight reduction in accuracy that depends on the method used.
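As a minimal illustration of the idea, the sketch below quantizes a small block of weights to 4-bit integers with a single shared scale and then restores them. This is a simplified absmax scheme written for this article, not the exact layout of llama.cpp's Q4_0 or Q8_0 formats, and the sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integers of the given width, one scale per block."""
    qmax = 2 ** (bits - 1) - 1  # largest integer magnitude, 7 for 4 bits
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to demonstrate the round trip.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("quantized integers:", q)
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error is bounded by half the scale. That small error is the accuracy cost mentioned above.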

Q: Does the A6000 always outperform the M2?

A: Not necessarily. In the benchmarks above the A6000 is faster in every measurement, but raw speed is not the only consideration. The M2 can be the more sensible option for specific use cases, especially if cost or energy efficiency is a major factor.

Q: What about other devices like the A100 or H100?

A: We're focusing on the M2 and A6000 in this comparison. Other devices like the A100 and H100 are also powerful options, but their performance and capabilities will vary based on their specific features and configurations.

Q: Are there any resources for getting started with LLMs?

A: Yes, there are several resources available! For example, you can check out the open-source project llama.cpp on GitHub for running LLMs locally.

Keywords

Apple M2, NVIDIA RTX A6000, LLM, token generation, AI development, benchmark, performance, processing speed, generation speed, quantization, Llama 2, Llama 3, GPU, CPU, cost, efficiency, use cases, FAQ, resources, open-source, llama.cpp