Which is Better for AI Development: Apple M2 100gb 10cores or NVIDIA RTX 4000 Ada 20GB? Local LLM Token Speed Generation Benchmark

Introduction

The world of AI development is buzzing with excitement, and the core of this frenzy is Large Language Models (LLMs). LLMs are powerful AI systems that can understand and generate human-like text, revolutionizing fields like natural language processing, code generation, and creative writing.

But running these models locally can be resource-intensive, requiring powerful hardware. This is where the debate intensifies: Should you choose the Apple M2 chip or the NVIDIA RTX4000Ada_20GB for your LLM development needs?

This article dives deep into the performance of these two powerful devices, comparing them based on token speed generation for popular LLM models like Llama 2 and Llama 3. We'll analyze benchmark results, explore strengths and weaknesses, and provide practical recommendations for choosing the right device for your AI projects. Buckle up, because this is going to be a wild ride through the exciting world of LLM development!

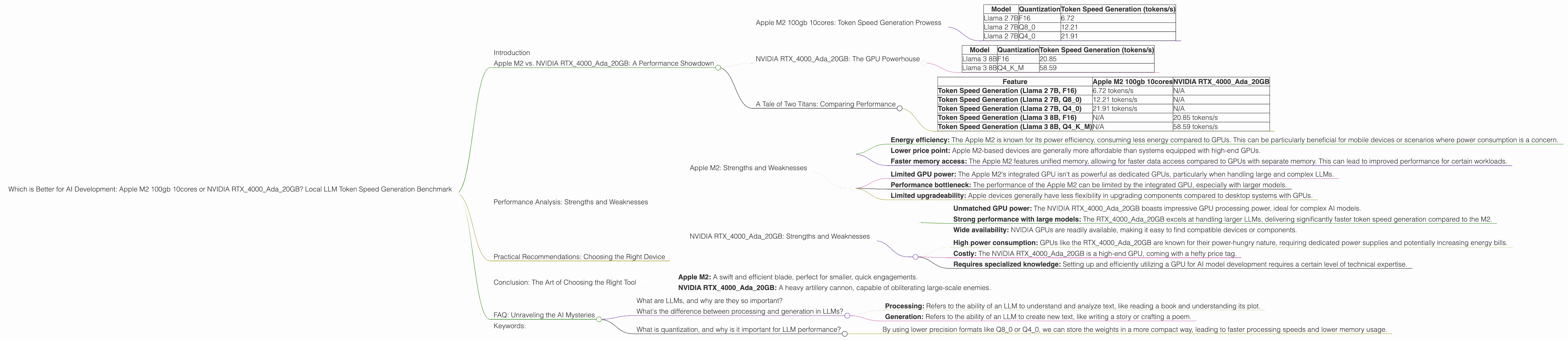

Apple M2 vs. NVIDIA RTX4000Ada_20GB: A Performance Showdown

The battle for LLM supremacy is on, and our contenders are the Apple M2 with 100GB of memory and 10 cores, and the NVIDIA RTX4000Ada_20GB graphics card. Both are formidable players in the AI arena, but they have unique strengths and weaknesses. To understand which champion reigns supreme, we need to delve into their token speed generation performance, measured in tokens per second (tokens/s).

Apple M2 100gb 10cores: Token Speed Generation Prowess

The Apple M2 chip has earned a reputation for its impressive performance in various tasks, including AI. It’s known for its energy efficiency, power, and ability to handle complex calculations. Let's see how it fares in our LLM benchmark:

| Model | Quantization | Token Speed Generation (tokens/s) |

|---|---|---|

| Llama 2 7B | F16 | 6.72 |

| Llama 2 7B | Q8_0 | 12.21 |

| Llama 2 7B | Q4_0 | 21.91 |

Observations:

- The Apple M2 demonstrates decent token generation speed for the Llama 2 7B model.

- The performance significantly improves with the use of quantized models.

- Quantization is a technique used to reduce the size of AI models and accelerate inference. Think of it like a compression algorithm for AI models. By using lower precision formats (like Q80 or Q40) compared to standard float 16 (F16), we can squeeze more data into less memory, leading to faster processing speeds.

NVIDIA RTX4000Ada_20GB: The GPU Powerhouse

The NVIDIA RTX4000Ada_20GB is a top-tier graphics card designed for high-performance computing, including machine learning and AI. Its powerful Ada architecture and 20GB of memory make it a strong contender for running large and complex AI models. Let's examine its performance:

| Model | Quantization | Token Speed Generation (tokens/s) |

|---|---|---|

| Llama 3 8B | F16 | 20.85 |

| Llama 3 8B | Q4KM | 58.59 |

Observations:

- The NVIDIA RTX4000Ada20GB delivers impressive token speed generation for both F16 and Q4K_M quantization levels of Llama 3 8B model.

- The NVIDIA RTX4000Ada20GB shows a significant advantage in processing speed for the larger Llama 3 8B model compared to the Apple M2, especially with Q4K_M quantization.

A Tale of Two Titans: Comparing Performance

Now, let's compare the performance of these two devices side by side. Here's a quick overview:

| Feature | Apple M2 100gb 10cores | NVIDIA RTX4000Ada_20GB |

|---|---|---|

| Token Speed Generation (Llama 2 7B, F16) | 6.72 tokens/s | N/A |

| Token Speed Generation (Llama 2 7B, Q8_0) | 12.21 tokens/s | N/A |

| Token Speed Generation (Llama 2 7B, Q4_0) | 21.91 tokens/s | N/A |

| Token Speed Generation (Llama 3 8B, F16) | N/A | 20.85 tokens/s |

| Token Speed Generation (Llama 3 8B, Q4KM) | N/A | 58.59 tokens/s |

Key Findings:

- The Apple M2 shines with smaller models like Llama 2 7B, offering a decent token generation speed, especially when using quantized models.

- The NVIDIA RTX4000Ada_20GB performs exceptionally well with larger LLM models like Llama 3 8B, demonstrating significantly higher token speeds.

- There is no data for the NVIDIA RTX4000Ada_20GB for Llama 2 7B or for the Apple M2 for Llama 3 8B and Llama 3 70B.

- The NVIDIA RTX4000Ada_20GB seems to be the clear winner for models larger than 7B, while the Apple M2 provides a good option for smaller models.

Performance Analysis: Strengths and Weaknesses

To make an informed decision about which device is right for you, it's crucial to delve deeper into the strengths and weaknesses of each contender.

Apple M2: Strengths and Weaknesses

Strengths:

- Energy efficiency: The Apple M2 is known for its power efficiency, consuming less energy compared to GPUs. This can be particularly beneficial for mobile devices or scenarios where power consumption is a concern.

- Lower price point: Apple M2-based devices are generally more affordable than systems equipped with high-end GPUs.

- Faster memory access: The Apple M2 features unified memory, allowing for faster data access compared to GPUs with separate memory. This can lead to improved performance for certain workloads.

Weaknesses:

- Limited GPU power: The Apple M2's integrated GPU isn't as powerful as dedicated GPUs, particularly when handling large and complex LLMs.

- Performance bottleneck: The performance of the Apple M2 can be limited by the integrated GPU, especially with larger models.

- Limited upgradeability: Apple devices generally have less flexibility in upgrading components compared to desktop systems with GPUs.

NVIDIA RTX4000Ada_20GB: Strengths and Weaknesses

Strengths:

- Unmatched GPU power: The NVIDIA RTX4000Ada_20GB boasts impressive GPU processing power, ideal for complex AI models.

- Strong performance with large models: The RTX4000Ada_20GB excels at handling larger LLMs, delivering significantly faster token speed generation compared to the M2.

- Wide availability: NVIDIA GPUs are readily available, making it easy to find compatible devices or components.

Weaknesses:

- High power consumption: GPUs like the RTX4000Ada_20GB are known for their power-hungry nature, requiring dedicated power supplies and potentially increasing energy bills.

- Costly: The NVIDIA RTX4000Ada_20GB is a high-end GPU, coming with a hefty price tag.

- Requires specialized knowledge: Setting up and efficiently utilizing a GPU for AI model development requires a certain level of technical expertise.

Practical Recommendations: Choosing the Right Device

For developers working with smaller LLMs (like Llama 2 7B) or focusing on energy efficiency:

The Apple M2 might be the better choice. Its lower price point, energy efficiency, and decent performance with quantized models make it an attractive option. Plus, if you need to run your models on the go, an Apple M2-based device provides a solid portable solution.

For developers working with larger LLMs (like Llama 3 8B or 70B) and prioritizing maximum performance:

The NVIDIA RTX4000Ada_20GB is the undisputed champion. Its powerful GPU capabilities, exceptional performance with larger models, and wide availability make it the go-to choice for demanding LLM development workloads. However, be prepared for a higher price tag and the need for specialized knowledge.

Conclusion: The Art of Choosing the Right Tool

Selecting the right device for LLM development comes down to your specific needs, priorities, and budget.

Think of your choice like choosing the right weapon for a battle:

- Apple M2: A swift and efficient blade, perfect for smaller, quick engagements.

- NVIDIA RTX4000Ada_20GB: A heavy artillery cannon, capable of obliterating large-scale enemies.

Ultimately, the best device is the one that empowers you to achieve your AI development goals most effectively.

FAQ: Unraveling the AI Mysteries

What are LLMs, and why are they so important?

LLMs, or Large Language Models, are AI systems trained on massive datasets of text, allowing them to understand and generate human-like language. They're the driving force behind advancements in natural language processing, code generation, creative writing, and more.

What's the difference between processing and generation in LLMs?

- Processing: Refers to the ability of an LLM to understand and analyze text, like reading a book and understanding its plot.

- Generation: Refers to the ability of an LLM to create new text, like writing a story or crafting a poem.

What is quantization, and why is it important for LLM performance?

Quantization is a technique that reduces the size of AI models by converting the model's weights (the data that represents the model's knowledge) into a more compact format.

Think of it like compressing an image file to reduce its file size without losing too much detail:

- By using lower precision formats like Q80 or Q40, we can store the weights in a more compact way, leading to faster processing speeds and lower memory usage.

Keywords:

LLM, Llama 2, Llama 3, Apple M2, NVIDIA RTX4000Ada_20GB, token speed, AI development, inference, performance, benchmark, quantization, GPU, GPU power, energy efficiency, price, budget, use cases, strengths and weaknesses