Which is Better for AI Development: Apple M2 (100 GB/s, 10 GPU Cores) or Apple M3 Pro (150 GB/s, 14 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

In the fast-paced world of artificial intelligence (AI), the ability to process information quickly and efficiently is paramount. With the rise of Large Language Models (LLMs), powerful hardware is essential for both training and inference. This article delves into the performance of two popular Apple silicon chips - the M2 and M3 Pro - when it comes to running LLMs locally. We'll compare their token generation speeds for the popular Llama 2 7B model, a significant player in the LLM landscape.

Think of an LLM as a super-smart chatbot, capable of understanding and generating human-like text. For it to do its magic, the chip it runs on needs to be able to process text very, very quickly. That's where our two contenders, M2 and M3 Pro, come in. We'll see how they stack up in this performance showdown.

Apple M2 vs. M3 Pro: A Hardware Rundown

Before diving into the token generation benchmarks, let's understand the key hardware specifications of the two contenders:

Apple M2

- Memory bandwidth: 100 GB/s

- GPU cores: 10

Apple M3 Pro

- Memory bandwidth: 150 GB/s

- GPU cores: 14 or 18 (we have data for both M3 Pro configurations)

As you can see, the M3 Pro offers higher memory bandwidth and more GPU cores compared to the M2. This suggests it might have an edge in terms of processing power. But what about real-world performance? Let's find out!

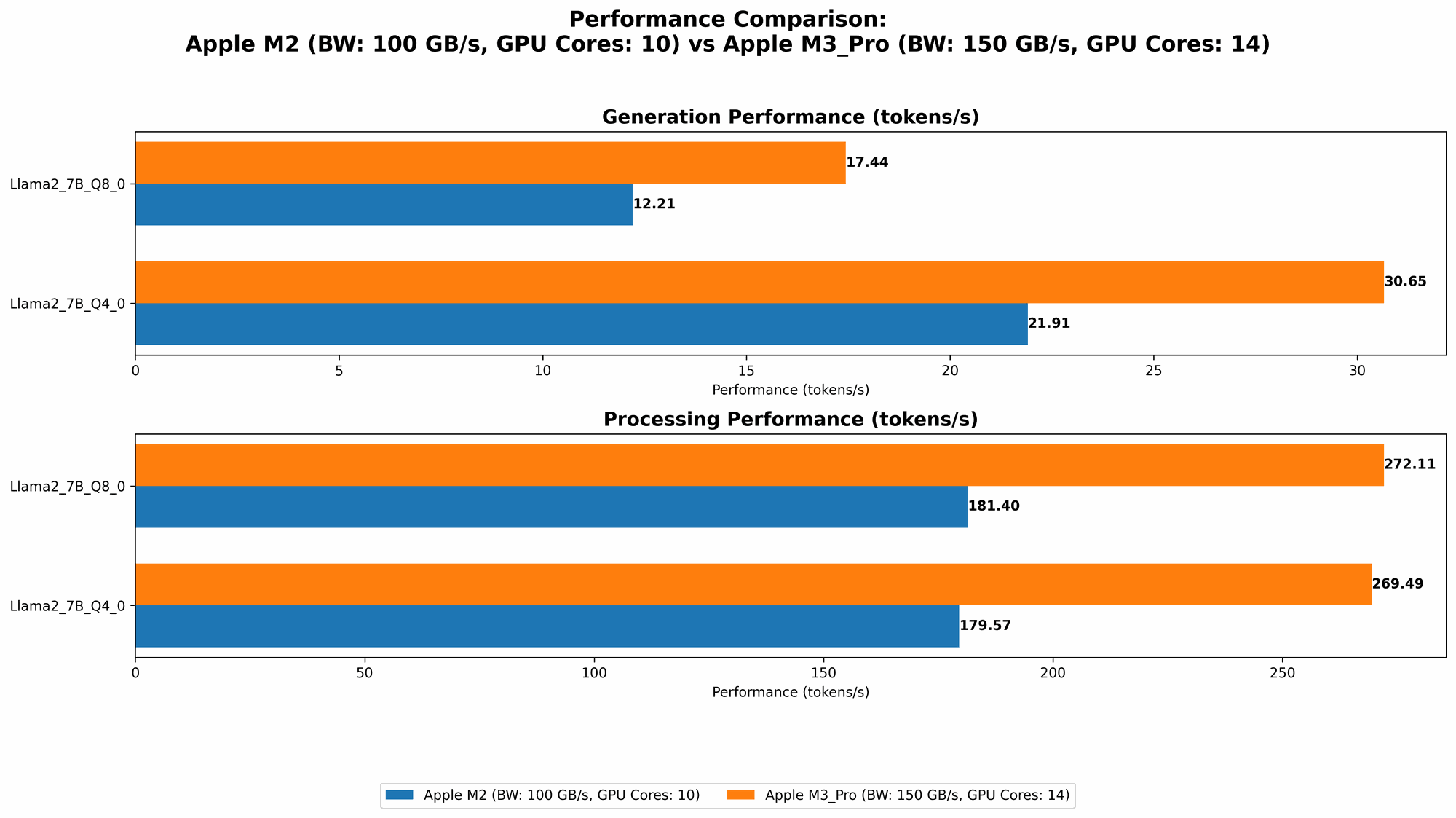

Local LLM Token Generation Speed Benchmark: M2 vs. M3 Pro

Our benchmark focuses on token generation speed, a crucial metric for LLM performance. Essentially, it measures how quickly a device can generate new text tokens, which are the fundamental units of language in LLMs. Higher token speeds mean faster responses and smoother interactions with your AI model.
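As a minimal sketch of what this metric means, tokens per second is simply the number of tokens produced divided by the wall-clock time taken. The `measure` helper and the `fake_generate` stand-in below are hypothetical names used for illustration; they are not how the figures in this article were collected.

```python
import time


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Throughput: tokens produced divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure(generate, num_tokens: int) -> float:
    """Time one call to `generate(num_tokens)` and return tokens/second.

    `generate` is a hypothetical callable standing in for whatever
    runtime actually produces the tokens; it must return the number
    of tokens it produced.
    """
    start = time.perf_counter()
    produced = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(produced, elapsed)


def fake_generate(n: int) -> int:
    """Stand-in for a real model: sleeps briefly, then reports n tokens."""
    time.sleep(0.01)
    return n


if __name__ == "__main__":
    print(f"{measure(fake_generate, 10):.1f} tokens/second")
```

In a real measurement you would replace `fake_generate` with a call into your inference runtime and average over several runs.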

Token Generation Speed Comparison: M2 vs. M3 Pro (Llama 2 7B)

| Device | Memory Bandwidth (GB/s) | GPU Cores | Llama 2 7B Q8_0 Processing (Tokens/Second) | Llama 2 7B Q8_0 Generation (Tokens/Second) | Llama 2 7B Q4_0 Processing (Tokens/Second) | Llama 2 7B Q4_0 Generation (Tokens/Second) |
|---|---|---|---|---|---|---|
| Apple M2 | 100 | 10 | 181.4 | 12.21 | 179.57 | 21.91 |
| Apple M3 Pro (14 Cores) | 150 | 14 | 272.11 | 17.44 | 269.49 | 30.65 |
| Apple M3 Pro (18 Cores) | 150 | 18 | 344.66 | 17.53 | 341.67 | 30.74 |

Note: We don't have data for the M3 Pro with Llama 2 7B running in F16 precision. However, based on the available data, we can draw valuable conclusions. Let's break down the results.
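To make the comparison concrete, the sketch below computes each M3 Pro configuration's speedup over the M2 directly from the table figures. The dictionary keys (`q8_processing` and so on) and the function name are illustrative choices, not part of any benchmark tool.

```python
# Tokens/second copied from the comparison table.
RESULTS = {
    "Apple M2": {
        "q8_processing": 181.4, "q8_generation": 12.21,
        "q4_processing": 179.57, "q4_generation": 21.91,
    },
    "Apple M3 Pro (14 Cores)": {
        "q8_processing": 272.11, "q8_generation": 17.44,
        "q4_processing": 269.49, "q4_generation": 30.65,
    },
    "Apple M3 Pro (18 Cores)": {
        "q8_processing": 344.66, "q8_generation": 17.53,
        "q4_processing": 341.67, "q4_generation": 30.74,
    },
}


def speedup_vs_m2(device: str, metric: str) -> float:
    """Ratio of a device's tokens/second to the M2's for the same metric."""
    return RESULTS[device][metric] / RESULTS["Apple M2"][metric]


if __name__ == "__main__":
    for device in RESULTS:
        if device == "Apple M2":
            continue
        for metric in RESULTS[device]:
            print(f"{device:26s} {metric:14s} {speedup_vs_m2(device, metric):.2f}x")
```

Processing comes out at roughly 1.5x (14 cores) and 1.9x (18 cores), while generation is roughly 1.4x for both M3 Pro configurations.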

Performance Analysis: M2 vs. M3 Pro

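The M3 Pro clearly outperforms the M2 in both prompt processing and token generation, but the two metrics scale differently. Processing speed grows with GPU core count: the 14-core M3 Pro is about 1.5x faster than the M2, and the 18-core configuration is about 1.9x faster. Generation speed improves by about 1.4x and is nearly identical on the 14-core and 18-core chips (17.44 vs. 17.53 tokens/second at Q8_0). That suggests generation is limited mainly by the 150 GB/s memory bandwidth the two configurations share, not by the number of GPU cores.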

Here's a breakdown of the key takeaways:

- M3 Pro for Processing: The M3 Pro consistently delivers faster token processing speeds, making it ideal for tasks that require a large amount of text processing, such as language translation or text summarization. Think of it as a speed demon in the text processing lane!

- M3 Pro for Generation: The gain in generation speed is smaller than the gain in processing speed, but it is still noticeable: roughly 40% over the M2 at both Q8_0 and Q4_0. The 14-core and 18-core configurations are effectively tied here, so the extra GPU cores do not buy faster generation. The improvement over the M2 still translates to faster and smoother interactions with your LLM.
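One rough way to see why generation follows memory bandwidth: if every generated token requires reading all model weights from memory once, then bandwidth divided by model size gives an upper bound on tokens per second. The sketch below is a back-of-the-envelope estimate under stated assumptions, not a measurement. It assumes about 6.74 billion parameters for Llama 2 7B, and about 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (8 or 4 bits plus a 16-bit scale shared by each block of 32 weights). It ignores the KV cache, activations, and any overhead.

```python
PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B

# Assumed effective bits per weight, including one 16-bit scale per 32 weights.
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}


def model_gigabytes(quant: str) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s: float, quant: str) -> float:
    """Upper bound if each token requires reading every weight once."""
    return bandwidth_gb_s / model_gigabytes(quant)


if __name__ == "__main__":
    # Measured generation speeds from the table, for comparison.
    measured = {
        ("M2", 100, "Q8_0"): 12.21,
        ("M2", 100, "Q4_0"): 21.91,
        ("M3 Pro", 150, "Q8_0"): 17.44,
        ("M3 Pro", 150, "Q4_0"): 30.65,
    }
    for (chip, bandwidth, quant), actual in measured.items():
        bound = max_tokens_per_second(bandwidth, quant)
        print(f"{chip:7s} {quant}: bound {bound:5.1f}, measured {actual:5.2f}")
```

Under these assumptions the measured generation speeds sit somewhat below the estimated bound on both chips, which is consistent with generation being bandwidth-limited.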

Quantization: A Quick Explanation

You might have noticed the "Q8_0" and "Q4_0" in the table. These refer to quantization, a technique used to reduce the size of LLM models and improve their efficiency. Quantization essentially compresses the model by representing its values using fewer bits (Q8_0 uses 8 bits per weight, Q4_0 uses 4 bits). This reduces memory usage and the amount of data read per token, sometimes at the cost of a slight decrease in accuracy. In the table above, the benefit shows up in generation speed, which is roughly 75% to 80% higher at Q4_0 than at Q8_0, while prompt processing speed is nearly the same for both.
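The idea can be sketched in a few lines. The example below uses a simplified symmetric scheme: one scale per block of values, with each value rounded to a signed integer of the chosen bit width. It is an illustration of the principle only; the actual Q8_0 and Q4_0 formats differ in detail, and the function names and sample weights are made up for this sketch.

```python
def quantize_block(values, bits):
    """Quantize one block of floats to signed `bits`-bit integers.

    Returns (scale, quants); each original value is approximated
    by quant * scale.
    """
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quants = quantize_block(values, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up values
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_error(weights, bits):.4f}")
```

Fewer bits means a coarser grid of representable values, so the 4-bit version reconstructs the weights less precisely than the 8-bit one. That is the accuracy trade-off mentioned above.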

Practical Recommendations for Use Cases

- M2 for Budget-Conscious Users: If you're on a tighter budget or don't need the absolute top-tier performance, the M2 offers a solid balance of price and performance. It still delivers respectable token speeds for many LLM applications.

- M3 Pro for Performance-Critical Applications: For developers and researchers working with computationally intensive tasks, the M3 Pro is the clear winner. Its faster prompt processing (about 1.5x to 1.9x the M2, depending on GPU core count) and roughly 40% faster token generation allow for more efficient development and smoother interactions with your AI models. Imagine it as the turbocharged engine for your AI projects!

FAQ: LLM Development and Apple Silicon

What are the best Apple silicon chips for LLM development?

Of the two chips compared here, the M3 Pro is the stronger choice for LLM development thanks to its higher memory bandwidth and GPU core count. However, for budget-conscious users, the M2 still offers respectable performance.

What are the advantages of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: Your data stays on your device, eliminating concerns about sending sensitive information to remote servers.

- Speed: Local processing avoids network round trips, which can mean faster response times, especially for smaller models.

- Control: You have full control over your model's configuration and environment.

What factors should I consider when choosing a device for LLM development?

- Model size: Larger models require devices with more processing power and memory.

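- Use case: Consider which metric matters most for your workload. Long prompts benefit from faster prompt processing, which in our data scales with GPU core count, while long outputs benefit from faster token generation, which tracks memory bandwidth.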

- Budget: Balance your needs with the price of the device.
