Which is Better for AI Development: Apple M2 (100 GB/s, 10 GPU Cores) or Apple M3 (100 GB/s, 10 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and rightfully so! These powerful AI models can generate realistic text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running LLMs locally requires capable hardware. Here, we delve into Apple silicon, comparing the Apple M2 and M3 chips on local LLM token generation speed. We examine how fast each chip runs Llama 2 7B in three weight formats: F16 (unquantized half precision) and the quantized Q8_0 and Q4_0.

Think of an LLM as a super-smart parrot trained on a massive amount of text. The more data it is trained on, the more it learns and the better it can respond to your prompts. Training happens once, ahead of time; running the model locally (inference) means producing a reply one token at a time, and every token requires reading the model's billions of weights from memory. This is where powerful CPUs, GPUs and fast memory come into play. They act like high-speed data highways, moving the model's weights to the processor quickly enough for replies to arrive without long waits.

Apple M2 vs. M3: A Token Speed Generation Showdown

This benchmark focuses on Apple M2 and M3 configurations that share the same 100 GB/s of memory bandwidth and 10 GPU cores. Our aim is to understand how these chips perform with different quantization levels, providing you with the information needed to make an informed decision for your AI development needs.

What is Quantization?

Quantization is like simplifying a complex recipe. It shrinks an LLM's file size and memory footprint, usually with only a modest loss in output quality. Think of it as rewriting your recipe with rounder measurements: there is less detail to keep track of, but the essential flavors remain. This is achieved by storing the model's weights with fewer bits: F16 uses 16 bits per weight, while Q8_0 and Q4_0 store 8-bit and 4-bit integers plus a shared scale factor for each block of weights.
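To make the idea concrete, here is a simplified Python sketch of block quantization modeled on llama.cpp's Q8_0 format, which groups weights into blocks of 32 and stores one scale per block alongside 8-bit integers. The function names are hypothetical, the scale is kept as a Python float rather than the fp16 value llama.cpp stores, and real implementations are heavily optimized, so treat this as an illustration rather than llama.cpp's actual code.

```python
import math

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 layout


def quantize_block_q8_0(block: list[float]) -> tuple[float, list[int]]:
    """Map 32 floats to one scale plus 32 integers in [-127, 127]."""
    if len(block) != BLOCK_SIZE:
        raise ValueError(f"expected {BLOCK_SIZE} values, got {len(block)}")
    amax = max(abs(x) for x in block)
    # The largest magnitude in the block maps to +/-127
    scale = amax / 127.0 if amax > 0 else 1.0
    quants = [round(x / scale) for x in block]
    return scale, quants


def dequantize_block_q8_0(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate weights: each value is quant * scale."""
    return [q * scale for q in quants]


def bits_per_weight(quant_bits: int, scale_bits: int = 16, block: int = BLOCK_SIZE) -> float:
    """Storage cost per weight, including the block's shared scale."""
    return (quant_bits * block + scale_bits) / block


if __name__ == "__main__":
    # Illustrative weights, not taken from any real model
    weights = [math.sin(i) * 0.05 for i in range(BLOCK_SIZE)]
    scale, quants = quantize_block_q8_0(weights)
    restored = dequantize_block_q8_0(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.6f}, worst rounding error={worst:.6f}")
    print(f"8-bit blocks: {bits_per_weight(8)} bits/weight, 4-bit blocks: {bits_per_weight(4)} bits/weight")
```

Each restored weight is off by at most half a scale step, and the per-block scale adds a little overhead: with a 16-bit scale per 32 weights, 8-bit blocks cost 8.5 bits per weight and 4-bit blocks cost 4.5, compared with 16 for F16.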

Understanding the Data

The data used in this benchmark originates from two sources:

- Performance of llama.cpp on various devices by ggerganov: This is a well-respected repository that tracks the performance of various devices in running Llama models.

- GPU Benchmarks on LLM Inference by XiongjieDai: This repository offers a comprehensive set of benchmarks for different GPU configurations and LLM models.

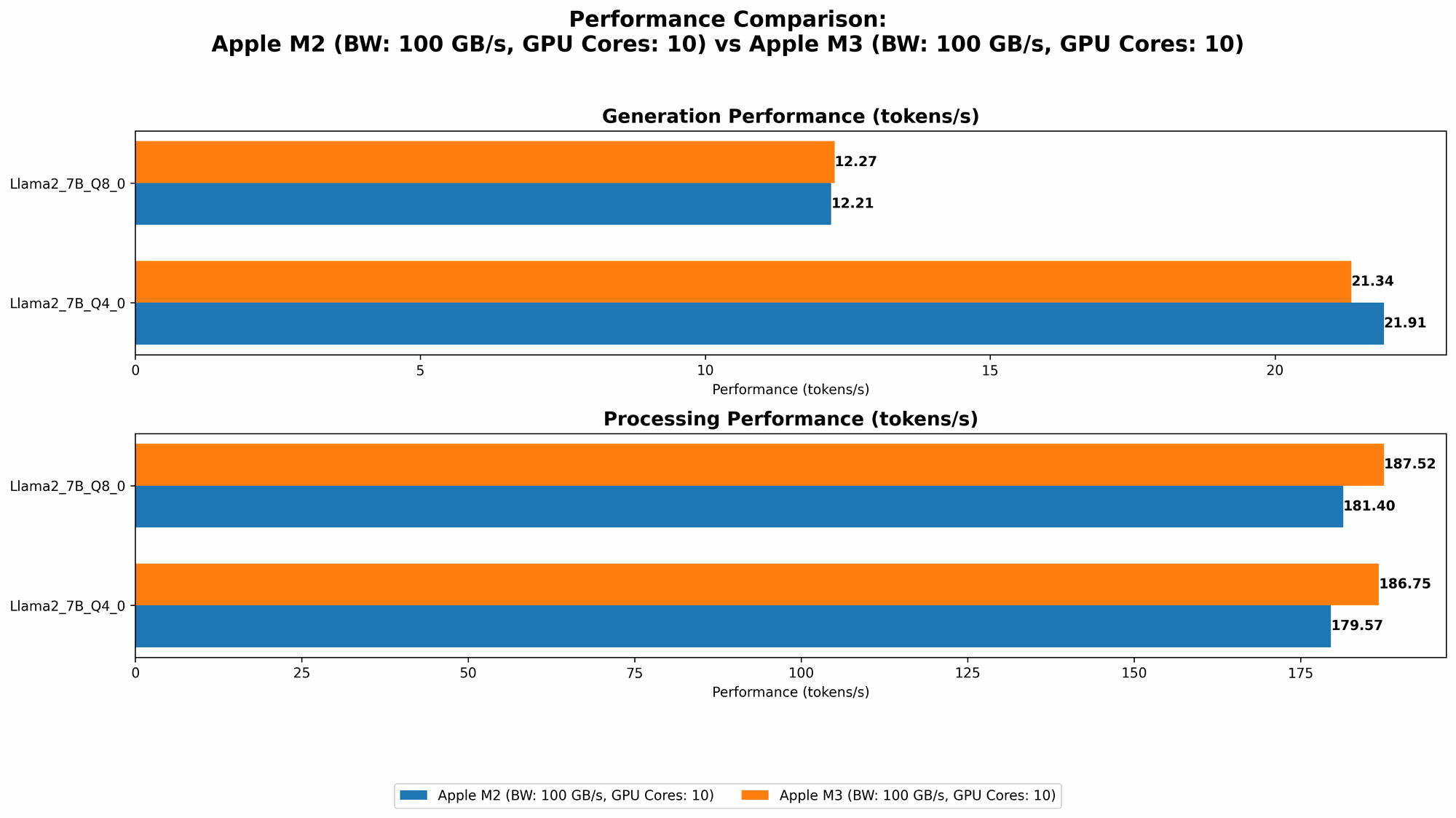

Comparison of Apple M2 and Apple M3 Token Speed Generation

Let's dive into the heart of the comparison. The following table presents token speeds (tokens per second) for Llama 2 7B in each weight format, split into prompt processing (reading the input prompt) and generation (producing new tokens):

| Model and phase | Apple M2 (100 GB/s, 10 GPU cores), tokens/s | Apple M3 (100 GB/s, 10 GPU cores), tokens/s |
|---|---|---|
| Llama 2 7B F16, prompt processing | 201.34 | N/A |
| Llama 2 7B F16, generation | 6.72 | N/A |
| Llama 2 7B Q8_0, prompt processing | 181.40 | 187.52 |
| Llama 2 7B Q8_0, generation | 12.21 | 12.27 |
| Llama 2 7B Q4_0, prompt processing | 179.57 | 186.75 |
| Llama 2 7B Q4_0, generation | 21.91 | 21.34 |

Note: No public data was available for the Apple M3 with the unquantized F16 format, so those cells are marked N/A.
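To put the differences in perspective, the following Python sketch computes how much faster or slower the M3 is than the M2 for each quantized row of the table, and how long a hypothetical 500-token reply would take at the Q4_0 generation speeds. The numbers come straight from the table; the function names and the 500-token reply length are illustrative choices.

```python
# Tokens/s for Llama 2 7B from the table above: (Apple M2, Apple M3).
# F16 rows are omitted because there is no public M3 data for them.
BENCHMARKS = {
    ("Q8_0", "processing"): (181.40, 187.52),
    ("Q8_0", "generation"): (12.21, 12.27),
    ("Q4_0", "processing"): (179.57, 186.75),
    ("Q4_0", "generation"): (21.91, 21.34),
}


def percent_change(baseline: float, candidate: float) -> float:
    """Percentage by which candidate is faster (+) or slower (-) than baseline."""
    return (candidate - baseline) / baseline * 100.0


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to produce a number of tokens at a steady rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    for (fmt, phase), (m2, m3) in BENCHMARKS.items():
        print(f"{fmt:5} {phase:10}  M3 vs M2: {percent_change(m2, m3):+.1f}%")
    # Hypothetical 500-token reply at the Q4_0 generation speeds
    m2, m3 = BENCHMARKS[("Q4_0", "generation")]
    print(f"500 tokens: M2 {seconds_for(500, m2):.1f} s, M3 {seconds_for(500, m3):.1f} s")
```

The M3 comes out about 3 to 4% ahead in prompt processing, while the generation rows differ by less than 3% in either direction, which amounts to well under a second on a 500-token reply.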

Performance Analysis: Apple M2 vs. Apple M3 for Local LLM Token Speed Generation

Apple M2 Performance

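The Apple M2 is the only one of the two chips with published F16 results. With F16 it reaches the highest prompt-processing speed in the table, 201.34 tokens per second, but the slowest generation, 6.72 tokens per second. Switching to Q8_0 lowers prompt processing slightly, to 181.40 tokens per second, while nearly doubling generation to 12.21 tokens per second; Q4_0 raises generation further to 21.91 tokens per second, with prompt processing at 179.57 tokens per second. In other words, on the M2, smaller weight formats trade a little prompt-processing speed for much faster token generation. Overall, the M2 demonstrates strong capabilities for running LLMs locally.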

Apple M3 Performance

The Apple M3 is faster at prompt processing with both quantized formats: 187.52 versus 181.40 tokens per second with Q8_0 (about 3.4% faster) and 186.75 versus 179.57 tokens per second with Q4_0 (about 4.0% faster). Generation speed, however, is essentially a tie: 12.27 versus 12.21 tokens per second with Q8_0, and with Q4_0 the M3 is actually slightly slower, at 21.34 versus 21.91 tokens per second. The M3's edge is therefore small and limited to prompt processing.
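One plausible explanation for the near-identical generation speeds is a common rule of thumb (not stated in the benchmark sources): generating each token requires streaming essentially all of the model's weights from memory, so generation speed is capped at roughly memory bandwidth divided by model size. The Python sketch below applies that rule to both chips' shared 100 GB/s. The parameter count of about 6.74 billion for Llama 2 7B and the uniform bits-per-weight figures are approximations, and the function names are hypothetical.

```python
# Rough ceiling on generation speed when decoding is memory-bandwidth bound.
# Assumptions: ~6.74e9 parameters, every weight read once per generated token,
# and all weights stored in the same format (real files mix a few formats).
PARAMS_LLAMA2_7B = 6.74e9
BANDWIDTH_GBPS = 100.0  # shared by the M2 and M3 configurations compared here

BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def model_gigabytes(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(bandwidth_gbps: float, model_gb: float) -> float:
    """Tokens/s if each token requires reading all weights from memory once."""
    return bandwidth_gbps / model_gb


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = model_gigabytes(PARAMS_LLAMA2_7B, bits)
        ceiling = generation_ceiling(BANDWIDTH_GBPS, size)
        print(f"{fmt:5} ~{size:5.2f} GB -> at most ~{ceiling:4.1f} tokens/s")
```

The estimated ceilings, roughly 7, 14 and 26 tokens per second, sit just above the measured 6.72, 12.21 and 21.91 tokens per second. Because the bandwidth is the same on both chips, the ceiling is the same too, whereas prompt processing handles many tokens at once and depends more on compute, where the M3 shows its small lead.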

Strengths and Weaknesses

Apple M2:

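- Strengths: The only chip of the two with published F16 results, which matters if you need unquantized, highest-fidelity weights; it also generates slightly faster than the M3 with Q4_0 (21.91 vs. 21.34 tokens per second).

- Weaknesses: About 3 to 4% slower than the M3 at prompt processing with Q8_0 and Q4_0, and F16 generation is slow at 6.72 tokens per second.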

Apple M3:

- Strengths: About 3 to 4% faster prompt processing than the M2 with Q8_0 and Q4_0, with generation speeds roughly on par.

- Weaknesses: Lack of public data for F16 quantization makes it difficult to compare its performance in this scenario.

Practical Recommendations for Use Cases

Apple M2:

- Best for: Developers who need to run unquantized F16 models and want measured numbers to plan around, accepting slow generation (6.72 tokens per second for Llama 2 7B) in exchange for minimal compromise in model accuracy.

- Good for: Developers working with smaller LLMs or projects with less demanding performance requirements.

Apple M3:

- Best for: Developers running Q8_0 or Q4_0 quantized models who want slightly faster prompt processing, which helps most with long prompts. The lower memory footprint comes from quantization itself and applies equally to the M2.

- Use with caution: The lack of publicly available F16 data for M3 makes it difficult to confidently assess its performance with high-precision models.

Conclusion: Choosing the Right Apple Chip for Your LLM Development Needs

The choice between the Apple M2 and M3 for local LLM development boils down to your specific needs and project constraints.

- If you need F16 precision, the M2 is the only one of the two with published results, but expect slow generation: 6.72 tokens per second for Llama 2 7B.

- If you run Q8_0 or Q4_0 models, the M3 offers slightly faster prompt processing (about 3 to 4%), while generation speed is essentially the same on both chips.

Ultimately, the decision is in your hands. Because both chips share 100 GB/s of memory bandwidth, their token generation speeds in this benchmark are nearly identical, and the remaining differences are small.

FAQ

What are LLM models, and why are they important?

LLM models are a type of Artificial Intelligence (AI) that have been trained on massive amounts of text data. They can understand and generate human-like text, making them incredibly useful for various tasks such as:

- Text Generation: Creating realistic and coherent text for articles, stories, and even scripts.

- Translation: Translating text between languages with high accuracy.

- Code Generation: Generating code in different programming languages.

- Conversation AI: Creating chatbots that can engage in natural and informative conversations.

LLMs are transforming industries from healthcare and education to entertainment and customer service.

How does the memory size of a device affect LLM performance?

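Memory size plays a crucial role in LLM performance. The whole model, plus working memory for the conversation context, must fit in RAM (on Apple silicon, the unified memory shared by the CPU and GPU) to run at full speed; a model that does not fit either cannot be loaded or can slow down dramatically as the system swaps to disk. Memory size therefore limits which models and formats you can run, while memory bandwidth largely determines how fast tokens are generated once the model fits.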
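As a rough illustration, the Python sketch below picks the highest-precision format whose weights fit in a given amount of unified memory. The `usable_fraction` of 0.75 is a hypothetical allowance for the operating system and context memory, not a documented macOS limit, the 13-billion-parameter model in the example is hypothetical, and the function names are illustrative; real headroom depends on your system and context length.

```python
from typing import Optional

# Bits per weight including per-block scales, listed from highest precision down
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(params: float, bits: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


def best_fitting_format(params: float, ram_gb: float, usable_fraction: float = 0.75) -> Optional[str]:
    """Return the most precise format whose weights fit the memory budget, or None.

    usable_fraction is an assumed share of RAM left for the model's weights.
    """
    budget = ram_gb * usable_fraction
    for fmt, bits in BITS_PER_WEIGHT.items():
        if weights_gb(params, bits) <= budget:
            return fmt
    return None


if __name__ == "__main__":
    for ram in (8, 16, 24):
        print(f"~6.74B-parameter model, {ram} GB RAM: {best_fitting_format(6.74e9, ram)}")
    print(f"13B-parameter model, 8 GB RAM: {best_fitting_format(13e9, 8)}")
```

Under these assumptions a 7B model needs Q4_0 on an 8 GB machine, Q8_0 fits in 16 GB, and F16 needs about 24 GB, while a 13B model does not fit in 8 GB even at Q4_0.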

What is the significance of quantization in LLM models?

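Quantization is a technique used to reduce the size and memory footprint of LLM models by lowering the precision of the numbers that represent the model's weights. In this benchmark, smaller formats mainly speed up token generation (21.91 tokens per second with Q4_0 versus 6.72 with F16 on the M2), while prompt processing stays similar or becomes slightly slower.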

What are the key considerations for choosing an Apple chip for LLM development?

The following factors are crucial when choosing an Apple chip for LLM development:

- Model Size: The size of the LLM you intend to use will influence the memory requirements and processing power needed.

- Quantization Level: Consider the quantization level used for the LLM. Higher precision (like F16) needs more memory and memory bandwidth, and generates tokens more slowly on the same hardware.

- Performance Requirements: The speed and accuracy your LLM application needs will determine how much processing power and memory bandwidth the chip must provide.

Keywords

Apple M2, Apple M3, Large Language Model, LLM, Token Speed, Llama 2, Quantization, F16, Q8_0, Q4_0, Local LLM Development, AI Development, Benchmark, Performance, Inference, GPU, CPU, Memory, Memory Bandwidth, Processing, Generation.