Which Is Better for AI Development: Apple M2 (100 GB/s, 10 GPU Cores) or Apple M2 Ultra (800 GB/s, 60 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of AI development has exploded in recent years, with large language models (LLMs) taking center stage. LLMs are incredibly powerful tools that can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But for all their power, LLMs can be resource-hungry, demanding powerful hardware to run effectively.

Two popular choices for local LLM development are Apple's M2 and M2 Ultra chips. These powerful processors, packed with cores and bandwidth, are known for their remarkable performance, making them ideal for demanding tasks like LLM inference.

In this article, we'll compare local LLM token generation speed on the Apple M2 and M2 Ultra chips. We'll look at how speed differs across several LLM models at different levels of quantization, giving you a clear picture of which device might be best for your specific LLM needs.

Let's get this AI party started!

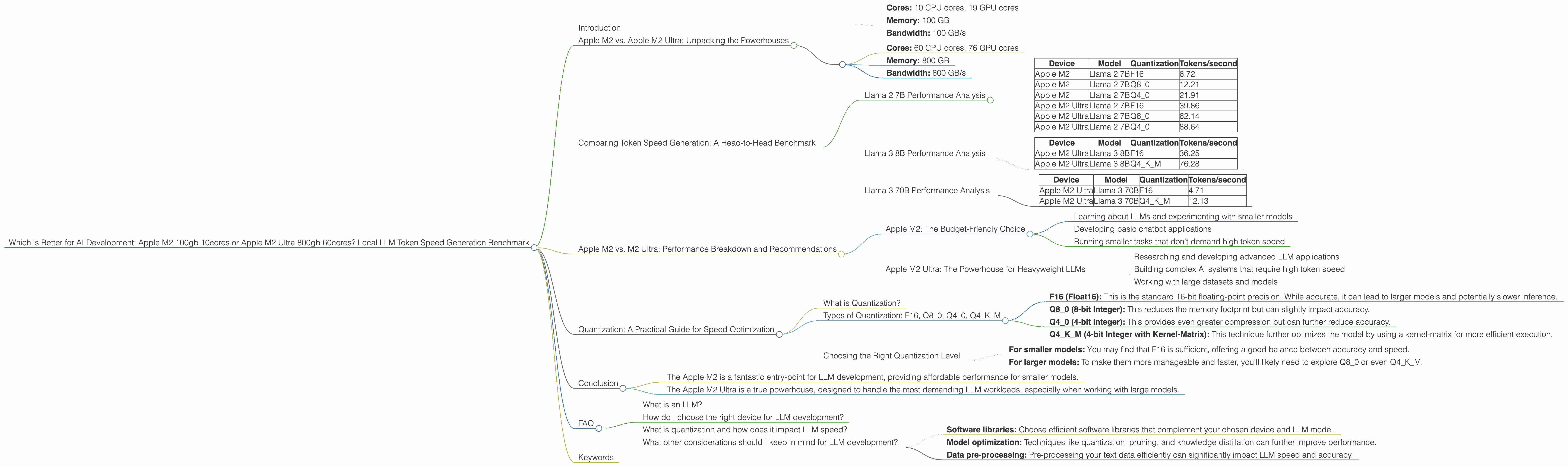

Apple M2 vs. Apple M2 Ultra: Unpacking the Powerhouses

Before we jump into the benchmark results, it's helpful to understand the key differences between the Apple M2 and M2 Ultra. These chips are both beasts in their own right, but their specs paint a clear picture of their strengths and weaknesses.

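Apple M2 (the 10-GPU-core configuration):

- Cores: 8 CPU cores, 10 GPU cores

- Memory: up to 24 GB of unified memory

- Bandwidth: 100 GB/s

Apple M2 Ultra (the 60-GPU-core configuration):

- Cores: 24 CPU cores, 60 GPU cores

- Memory: up to 192 GB of unified memory

- Bandwidth: 800 GB/s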

The M2 Ultra is clearly the big brother, with more CPU and GPU cores, more memory, and eight times the memory bandwidth (800 GB/s versus 100 GB/s). This makes it an absolute powerhouse for demanding tasks like LLM inference.
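
To see why bandwidth matters so much, here is a rough back-of-the-envelope sketch. It rests on a common rule of thumb, which is an assumption here rather than something the benchmark states: when generating one token at a time, the chip has to read roughly all of the model's weights once per token, so bandwidth divided by model size gives a ceiling on tokens/second. The function name is made up for illustration, and the parameter count of about 6.74 billion for Llama 2 7B is an approximation.

```python
def bandwidth_bound_tokens_per_second(bandwidth_gb_s: float,
                                      params_billions: float,
                                      bits_per_weight: float) -> float:
    """Ceiling on decode speed if every token reads all weights once (assumed)."""
    # Billions of parameters times bytes per parameter gives gigabytes.
    model_size_gb = params_billions * bits_per_weight / 8
    return bandwidth_gb_s / model_size_gb


# Assumed: ~6.74B parameters for Llama 2 7B, stored as 16-bit floats.
for name, bandwidth in [("Apple M2", 100.0), ("Apple M2 Ultra", 800.0)]:
    bound = bandwidth_bound_tokens_per_second(bandwidth, 6.74, 16)
    print(f"{name}: at most ~{bound:.1f} tokens/second at F16")
```

Under these assumptions the ceilings come out at roughly 7.4 and 59.3 tokens/second. Real measurements should land below them, since the estimate ignores compute time and other overhead.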

Comparing Token Generation Speed: A Head-to-Head Benchmark

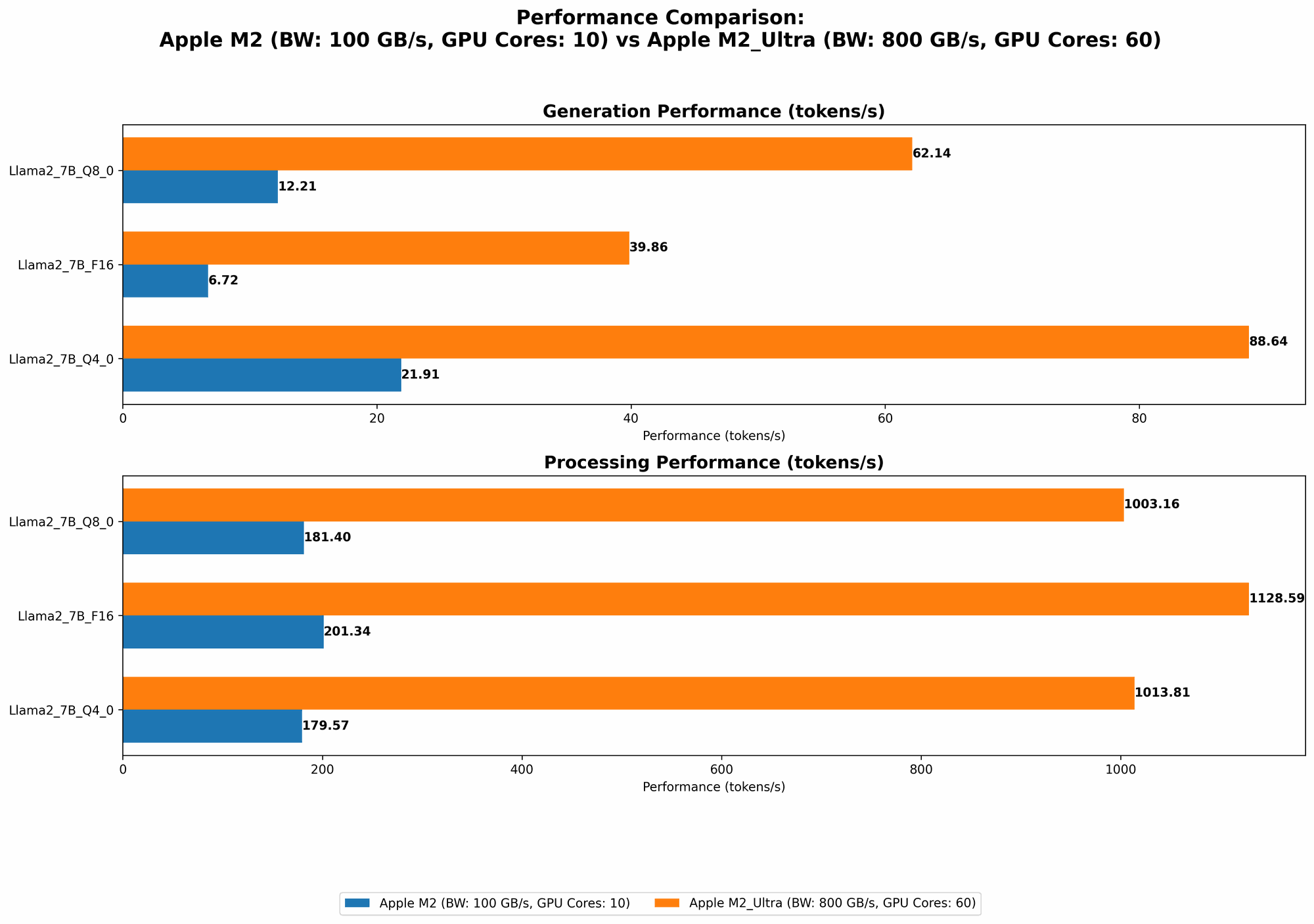

Now, let's dig into the good stuff: the benchmark numbers! We'll look at token generation speed for different LLM models, quantized at various levels (F16, Q8_0, Q4_0, Q4_K_M), on both the Apple M2 and M2 Ultra chips. Our metric for comparing speeds is tokens/second.

Important: Model and device combinations with no available data are left out of the tables.
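
If you want to collect tokens/second numbers yourself, the idea is simply to count generated tokens and divide by elapsed time. The sketch below is one minimal way to do that, not the method behind the figures in this article. `fake_generate` is a hypothetical stand-in for whatever streaming call your inference library provides.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a real model's streaming output."""
    for i in range(50):
        time.sleep(0.001)  # pretend each token takes at least 1 ms
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate):.1f} tokens/second")
```

In practice you would run this several times and average, since a single run can be skewed by warm-up or background load.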

Llama 2 7B Performance Analysis

Let's start with the Llama 2 7B model, a popular choice for experimentation.

| Device | Model | Quantization | Tokens/second |
|---|---|---|---|
| Apple M2 | Llama 2 7B | F16 | 6.72 |
| Apple M2 | Llama 2 7B | Q8_0 | 12.21 |
| Apple M2 | Llama 2 7B | Q4_0 | 21.91 |
| Apple M2 Ultra | Llama 2 7B | F16 | 39.86 |
| Apple M2 Ultra | Llama 2 7B | Q8_0 | 62.14 |
| Apple M2 Ultra | Llama 2 7B | Q4_0 | 88.64 |

Analysis:

- The M2 Ultra clearly outperforms the M2 across all quantization levels for the Llama 2 7B model, generating tokens significantly faster. This is no surprise, considering the M2 Ultra's superior core count, bandwidth, and memory.

- We see a clear trend of increasing token speed with more aggressive quantization (Q4_0 > Q8_0 > F16). Quantization is like compressing the LLM model to make it smaller and faster to run. Essentially, you're trading some precision in the model's calculations for increased speed.
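
To put numbers on both points, the short sketch below computes speedup ratios directly from the table above. The dictionary layout and function names are just illustrative choices.

```python
# Tokens/second from the Llama 2 7B table above.
results = {
    "Apple M2":       {"F16": 6.72,  "Q8_0": 12.21, "Q4_0": 21.91},
    "Apple M2 Ultra": {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64},
}


def ultra_speedup(quant: str) -> float:
    """How many times faster the M2 Ultra is than the M2 at one quantization."""
    return results["Apple M2 Ultra"][quant] / results["Apple M2"][quant]


def quant_speedup(device: str, quant: str) -> float:
    """How many times faster a quantized model is than F16 on one device."""
    return results[device][quant] / results[device]["F16"]


for quant in ("F16", "Q8_0", "Q4_0"):
    print(f"{quant}: M2 Ultra is {ultra_speedup(quant):.2f}x the M2")
for device in results:
    print(f"{device}: Q4_0 is {quant_speedup(device, 'Q4_0'):.2f}x F16")
```

The M2 Ultra's lead shrinks from about 5.9x at F16 to about 4.0x at Q4_0, while the M2 benefits more from quantization (about 3.3x going from F16 to Q4_0, versus about 2.2x on the M2 Ultra).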

Llama 3 8B Performance Analysis

Now, let's move on to a slightly larger and newer model, Llama 3 8B.

| Device | Model | Quantization | Tokens/second |
|---|---|---|---|
| Apple M2 Ultra | Llama 3 8B | F16 | 36.25 |
| Apple M2 Ultra | Llama 3 8B | Q4_K_M | 76.28 |

Analysis:

- Note that the M2 is not included in this analysis because there aren't any benchmark numbers available for this model on this device.

- On the M2 Ultra, Llama 3 8B runs at 36.25 tokens/second at F16, close to the 39.86 tokens/second it reached with Llama 2 7B. The modest increase in model size costs little speed.

- The Q4_K_M quantization level roughly doubles token speed compared to F16 (76.28 vs. 36.25 tokens/second), demonstrating the advantage of quantization.

Llama 3 70B Performance Analysis

We're going bigger! Let's take a look at the mighty Llama 3 70B.

| Device | Model | Quantization | Tokens/second |
|---|---|---|---|
| Apple M2 Ultra | Llama 3 70B | F16 | 4.71 |
| Apple M2 Ultra | Llama 3 70B | Q4_K_M | 12.13 |

Analysis:

- Again, the M2 doesn't show up here as there are no benchmark numbers available for this model and device.

- With the massive Llama 3 70B, the M2 Ultra still shows its might! It's able to run the model, although the token speed drops considerably compared to the smaller models.

- Here, the power of quantization becomes even more apparent. The Q4_K_M quantization level lifts token speed from 4.71 to 12.13 tokens/second, roughly 2.6 times faster, making the model more manageable on the M2 Ultra.
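
For a side-by-side view of the two Llama 3 models, the sketch below works out how much 4-bit quantization helps each one and how much speed is lost going from 8B to 70B. It uses only the figures from the two Llama 3 tables above. The function names are illustrative.

```python
# Tokens/second on the Apple M2 Ultra, from the two Llama 3 tables above.
m2_ultra = {
    "Llama 3 8B":  {"F16": 36.25, "Q4_K_M": 76.28},
    "Llama 3 70B": {"F16": 4.71,  "Q4_K_M": 12.13},
}


def quant_gain(model: str) -> float:
    """Speedup of the 4-bit variant over F16 for one model."""
    return m2_ultra[model]["Q4_K_M"] / m2_ultra[model]["F16"]


def size_slowdown(quant: str) -> float:
    """How many times slower the 70B model is than the 8B model."""
    return m2_ultra["Llama 3 8B"][quant] / m2_ultra["Llama 3 70B"][quant]


for model in m2_ultra:
    print(f"{model}: 4-bit is {quant_gain(model):.2f}x F16")
for quant in ("F16", "Q4_K_M"):
    print(f"{quant}: 70B is {size_slowdown(quant):.2f}x slower than 8B")
```

The 4-bit gain is about 2.1x for the 8B model and about 2.6x for the 70B model, and the 70B model is roughly 6 to 8 times slower than the 8B model depending on quantization.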

Apple M2 vs. M2 Ultra: Performance Breakdown and Recommendations

So, which device reigns supreme for local LLM development? The answer, as it often is, depends on your specific needs.

Apple M2: The Budget-Friendly Choice

The Apple M2 emerges as a budget-friendly option for LLM development. It handles smaller models like Llama 2 7B at usable speeds (about 22 tokens/second at Q4_0) at a more affordable price point. If you are just starting out with LLMs or developing smaller-scale projects, the M2 can provide a great starting point.

Use Cases:

- Learning about LLMs and experimenting with smaller models

- Developing basic chatbot applications

- Running smaller tasks that don't demand high token speed

Apple M2 Ultra: The Powerhouse for Heavyweight LLMs

The Apple M2 Ultra truly shines when you need to work with larger models. Its massive core count, memory, and bandwidth empower it to tackle models like Llama 3 8B and Llama 3 70B, generating tokens much faster.

Use Cases:

- Researching and developing advanced LLM applications

- Building complex AI systems that require high token speed

- Working with large datasets and models

Quantization: A Practical Guide for Speed Optimization

Remember those quantization numbers we saw? Quantization plays a crucial role in optimizing LLM performance, especially when dealing with larger models.

What is Quantization?

Think of quantization as a way to compress your LLM model. Instead of using a lot of bits (like 16 bits for float16), you use fewer bits (8 bits or even 4 bits) to represent the weights in the model. It's like rounding measurements to fewer decimal places: each number takes less space, at the cost of a little precision.

This compression can significantly reduce the memory footprint required to run the model and potentially increase inference speed, making it a valuable tool for developers.
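
The memory savings are easy to estimate: the weights take roughly the number of parameters times the bits per weight, divided by 8 to get bytes. The sketch below applies that arithmetic to a 70-billion-parameter model. It uses nominal bit widths and ignores everything except the weights themselves, so treat the results as rough lower bounds.

```python
def weight_footprint_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes."""
    # Billions of parameters times bytes per parameter gives gigabytes.
    return params_billions * bits_per_weight / 8


# Nominal bit widths; real quantized files also store small per-block scales.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"70B at {label}: ~{weight_footprint_gb(70, bits):.0f} GB")
```

At 16 bits the weights alone come to about 140 GB, dropping to about 70 GB at 8 bits and about 35 GB at 4 bits.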

Types of Quantization: F16, Q8_0, Q4_0, Q4_K_M

Each quantization level has its trade-offs:

- F16 (Float16): This is the standard 16-bit floating-point precision. While accurate, it can lead to larger models and potentially slower inference.

- Q8_0 (8-bit Integer): This reduces the memory footprint but can slightly impact accuracy.

- Q4_0 (4-bit Integer): This provides even greater compression but can further reduce accuracy.

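- Q4_K_M (4-bit k-quant, medium variant): One of the "k-quant" formats from llama.cpp. It also stores weights at roughly 4 bits, but it uses a more elaborate block structure and keeps some tensors at higher precision, which generally preserves more accuracy than Q4_0 at a slightly larger size.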
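
To make the integer formats less abstract, here is a simplified sketch of block-wise 8-bit quantization in the spirit of Q8_0: weights are split into blocks, and each block stores one scale factor plus one small integer per weight. The block size of 32 matches Q8_0 as I understand it, but the code is only an illustration in plain Python. It is not llama.cpp's actual implementation, which stores the scale as a 16-bit float and packs the data very differently.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block size, following the Q8_0 layout


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map one block of floats to a shared scale plus signed 8-bit integers."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate floats from the scale and the integers."""
    return [q * scale for q in quants]


block = [0.01 * (i - 16) for i in range(BLOCK_SIZE)]  # toy weights
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.6f}, worst-case error={max_error:.6f}")
```

The worst-case rounding error is about half the scale, which is why blocks with a small value range lose very little precision. A 4-bit format works the same way with a much coarser integer grid, so the error per weight is larger.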

Choosing the Right Quantization Level

The right quantization level depends on your specific needs and the trade-off between accuracy and speed.

- For smaller models: You may find that F16 is fast enough, and it keeps the model's full precision.

- For larger models: To make them more manageable and faster, you'll likely need to explore Q8_0 or even Q4_K_M.
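
One simple way to turn this advice into a rule is to pick the highest-precision format whose weights fit in the memory you can spare. The helper below is a hypothetical sketch of that idea. The bits-per-weight figures are approximations that include per-block scale overhead (8.5 for Q8_0, 4.5 for Q4_0), and the budget should leave headroom for the context cache and the rest of the system, which this sketch does not model.

```python
from typing import Optional

# Approximate bits per weight, ordered from highest to lowest precision.
# These figures are assumptions for illustration.
CANDIDATES = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_0", 4.5)]


def pick_quantization(params_billions: float,
                      memory_budget_gb: float) -> Optional[str]:
    """Return the most precise format whose weights fit, or None if none do."""
    for name, bits in CANDIDATES:
        if params_billions * bits / 8 <= memory_budget_gb:
            return name
    return None


print(pick_quantization(7, 16))    # a 7B model with 16 GB to spare
print(pick_quantization(70, 100))  # a 70B model with 100 GB to spare
print(pick_quantization(70, 16))   # a 70B model with 16 GB to spare
```

A rule like this only covers whether the model fits. Whether the resulting speed and accuracy are acceptable still has to be checked against benchmarks like the ones in this article.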

Conclusion

In the battle of the Apple M2 vs. M2 Ultra for local LLM development, the winner depends on your specific needs.

- The Apple M2 is a fantastic entry-point for LLM development, providing affordable performance for smaller models.

- The Apple M2 Ultra is a true powerhouse, designed to handle the most demanding LLM workloads, especially when working with large models.

Understanding the different levels of quantization can also play a huge role in maximizing performance and efficiency. By choosing the right device and quantization level, you'll be equipped to navigate the exciting world of LLM development with confidence and speed!

FAQ

What is an LLM?

An LLM (Large Language Model) is a type of artificial intelligence that is specifically trained to understand and generate human-like text. These models are trained on massive datasets of text and code, allowing them to learn complex patterns and relationships in language.

How do I choose the right device for LLM development?

The right device for LLM development depends on your needs. If you are working with smaller models or just starting out, the Apple M2 is a good option. For larger models and more demanding workloads, the Apple M2 Ultra is the way to go.

What is quantization and how does it impact LLM speed?

Quantization is a technique used to compress LLM models by reducing the number of bits used to represent their weights. This compression can significantly reduce memory consumption and potentially improve inference speed, but it may also slightly affect the model's accuracy.

What other considerations should I keep in mind for LLM development?

Besides hardware, other crucial factors include:

- Software libraries: Choose efficient software libraries that complement your chosen device and LLM model.

- Model optimization: Techniques like quantization, pruning, and knowledge distillation can further improve performance.

- Data pre-processing: Pre-processing your text data efficiently can significantly impact LLM speed and accuracy.

Keywords

Apple M2, Apple M2 Ultra, LLM, Large Language Model, Token Speed, Inference, Local Development, Benchmark, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance, AI Development, Llama 2, Llama 3, Llama 2 7B, Llama 3 8B, Llama 3 70B, GPU Cores, Bandwidth, Memory, Software Libraries, Model Optimization, Data Preprocessing.