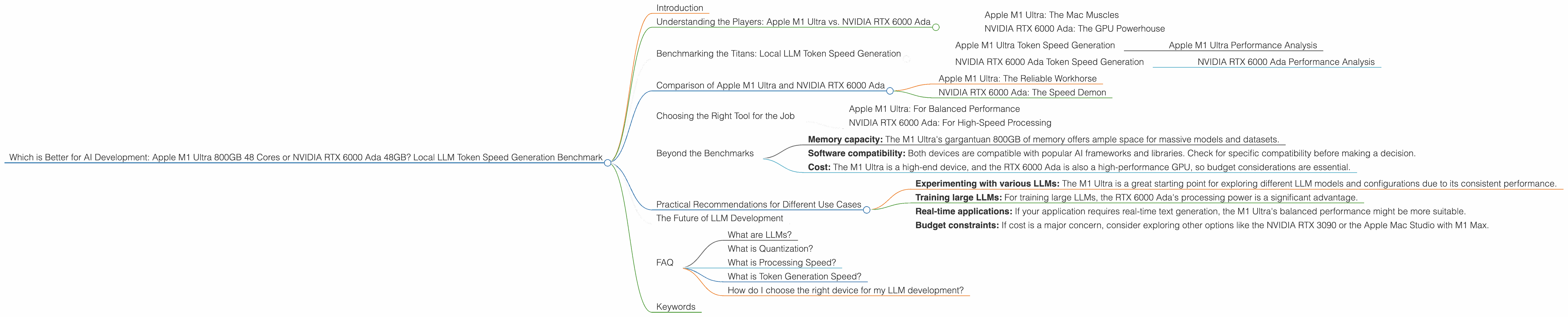

Which is Better for AI Development: Apple M1 Ultra (800GB/s, 48-Core GPU) or NVIDIA RTX 6000 Ada 48GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. Imagine a computer that can understand and generate human-like text – that's the power of LLMs. But to unleash this potential, you need a powerful machine.

This article focuses on two heavyweights in the AI development world: the Apple M1 Ultra, with 800GB/s of memory bandwidth and a 48-core GPU, and the NVIDIA RTX 6000 Ada, with 48GB of memory. We will pit them against each other in a token generation speed benchmark for local LLMs. This comparison will give you the information you need to choose the right device for your AI development journey.

Understanding the Players: Apple M1 Ultra vs. NVIDIA RTX 6000 Ada

Apple M1 Ultra: The Mac Muscles

Apple's M1 Ultra is a beast of a processor, combining a 20-core CPU, a 48-core GPU (a 64-core GPU version also exists), and up to 128GB of unified memory with 800GB/s of bandwidth. Because the CPU and GPU share that memory, it is a formidable machine for loading large models and datasets. Think of the M1 Ultra as a marathon runner, excelling in sustained performance over long periods.

NVIDIA RTX 6000 Ada: The GPU Powerhouse

On the other side of the ring, we have the NVIDIA RTX 6000 Ada, a graphics processing unit (GPU) designed for high-performance computing. Its 48GB of GDDR6 memory and dedicated CUDA cores make it a champion in parallel processing, particularly for tasks like machine learning. This GPU is like a sprinter, delivering explosive speed and efficiency for specific, intensive tasks.

Benchmarking the Titans: Local LLM Token Generation Speed

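We'll analyze token throughput for Llama models on both devices: Llama 2 7B on the M1 Ultra, and Llama 3 8B and Llama 3 70B on the RTX 6000 Ada. Because the two devices were tested on different models, the comparison is indicative rather than like-for-like. Tokens are the units of text an LLM reads and writes, usually whole words or word fragments. Each table reports two speeds: processing, how quickly the device ingests the input prompt, and generation, how quickly it produces new output tokens. The faster a device does both, the sooner your model returns a response.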

Apple M1 Ultra Token Generation Speed

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 875.81 | 33.92 |
| Llama 2 7B | Q8_0 | 783.45 | 55.69 |
| Llama 2 7B | Q4_0 | 772.24 | 74.93 |

Apple M1 Ultra Performance Analysis

Looking at the numbers, the M1 Ultra's processing speed stays in a narrow band (772 to 876 tokens/second) across the three Llama 2 7B quantization levels, dropping slightly as precision decreases. Generation speed moves the other way: it more than doubles, from 33.92 tokens/second at F16 to 74.93 tokens/second at Q4_0. The M1 Ultra is therefore a solid option when you want steady, predictable performance, although this table covers only one model.
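Why does generation speed up with quantization while processing barely moves? A common rule of thumb, which is an assumption here and was not measured by this benchmark, is that generating one token at a time is limited by memory bandwidth, because each new token requires reading roughly all of the model's weights. The minimal sketch below applies that rule to the M1 Ultra's 800GB/s, assuming about 6.74 billion parameters for Llama 2 7B and approximate storage costs of 16, 8.5, and 4.5 bits per weight for F16, Q8_0, and Q4_0 (the 8-bit and 4-bit figures include per-block scales).

```python
# Rough ceiling on generation speed, ASSUMING generation is memory-bandwidth-bound
# (each new token reads every weight once). Parameter count and bits-per-weight
# values are approximations, not measurements.

BANDWIDTH_GB_S = 800.0  # M1 Ultra unified memory bandwidth
PARAMS = 6.74e9         # approximate Llama 2 7B parameter count

# Approximate storage cost per weight; Q8_0/Q4_0 include a 16-bit scale per 32 weights
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/second) from the M1 Ultra table
MEASURED_TG = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_bound_tps(bandwidth_gb_s: float, size_gb: float) -> float:
    """Tokens/second ceiling if every token streams all weights from memory."""
    return bandwidth_gb_s / size_gb


for fmt, bpw in BITS_PER_WEIGHT.items():
    size = model_size_gb(PARAMS, bpw)
    ceiling = bandwidth_bound_tps(BANDWIDTH_GB_S, size)
    measured = MEASURED_TG[fmt]
    print(f"{fmt:5s} size ~{size:5.2f} GB, ceiling ~{ceiling:6.1f} tok/s, "
          f"measured {measured:6.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured speed sits well below its ceiling, and the gap widens as precision drops, which hints that costs other than reading weights matter more once the model is small. Treat the ceiling as an order-of-magnitude check, not a performance model.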

NVIDIA RTX 6000 Ada Token Generation Speed

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 3 8B | F16 | 6205.44 | 51.97 |
| Llama 3 8B | Q4_K_M | 5560.94 | 130.99 |
| Llama 3 70B | Q4_K_M | 547.03 | 18.36 |

NVIDIA RTX 6000 Ada Performance Analysis

The RTX 6000 Ada shines in processing: 6205.44 tokens/second for Llama 3 8B at F16 and 5560.94 at Q4_K_M, roughly seven times the M1 Ultra's figures for the smaller Llama 2 7B. It also leads in generation, at 51.97 tokens/second (F16) and 130.99 tokens/second (Q4_K_M) versus the M1 Ultra's 33.92 and 74.93. The Llama 3 70B row shows what happens when a much larger model is squeezed into 48GB with 4-bit quantization: it still runs, but generation drops to 18.36 tokens/second. Note that the M1 Ultra was not benchmarked on Llama 3, so these comparisons cross model versions.
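To put the two tables side by side, the short sketch below divides the RTX 6000 Ada's speeds by the M1 Ultra's at matching precision. Treat the ratios as indicative only: the devices ran different models (Llama 3 8B versus Llama 2 7B), and Q4_K_M and Q4_0 are different 4-bit formats, so grouping them as "4-bit" is an assumption made for illustration.

```python
# Indicative speed ratios between the two benchmark tables.
# Caveat: M1 Ultra rows are Llama 2 7B, RTX rows are Llama 3 8B, and
# Q4_0 vs Q4_K_M are different 4-bit formats, so this is not like-for-like.

M1_ULTRA = {  # Llama 2 7B: (processing tok/s, generation tok/s)
    "F16": (875.81, 33.92),
    "4-bit": (772.24, 74.93),     # Q4_0
}
RTX_6000_ADA = {  # Llama 3 8B: (processing tok/s, generation tok/s)
    "F16": (6205.44, 51.97),
    "4-bit": (5560.94, 130.99),   # Q4_K_M
}


def speed_ratios(fast: dict, slow: dict) -> dict:
    """Return {precision: (processing ratio, generation ratio)} for shared keys."""
    return {
        key: (fast[key][0] / slow[key][0], fast[key][1] / slow[key][1])
        for key in fast.keys() & slow.keys()
    }


for precision, (pp_ratio, tg_ratio) in sorted(speed_ratios(RTX_6000_ADA, M1_ULTRA).items()):
    print(f"{precision:6s} processing x{pp_ratio:.2f}  generation x{tg_ratio:.2f}")
```

On these numbers the RTX 6000 Ada processes prompts roughly seven times faster and generates tokens roughly 1.5 to 1.75 times faster, despite running the slightly larger model.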

Comparison of Apple M1 Ultra and NVIDIA RTX 6000 Ada

Apple M1 Ultra: The Reliable Workhorse

The M1 Ultra's strength is consistency: its processing speed barely changes across quantization schemes, and its unified memory (up to 128GB) leaves room for models that would not fit on a 48GB card. It's like a reliable workhorse, delivering results without major hiccups, even though it is not the fastest device in these benchmarks.

NVIDIA RTX 6000 Ada: The Speed Demon

The RTX 6000 Ada is a speed demon: in these benchmarks it leads in both processing and generation. Its main constraint is memory. With 48GB of VRAM, a 70B-parameter model fits only when heavily quantized, and generation speed drops sharply at that size. This device is ideal for users who want maximum speed on models that fit within 48GB.

Choosing the Right Tool for the Job

So, which device reigns supreme? It depends on your specific needs and priorities.

Apple M1 Ultra: For Balanced Performance

If you need to load a wide range of model sizes, the M1 Ultra is a strong choice. It is slower than the RTX 6000 Ada on both processing and generation in these benchmarks, but its performance is steady across quantization levels and its unified memory (up to 128GB) can hold models that exceed 48GB.

NVIDIA RTX 6000 Ada: For High-Speed Processing

For tasks that demand fast processing and generation, the RTX 6000 Ada is the clear winner in these benchmarks. The trade-off is capacity: models must fit within its 48GB of memory, which limits 70B-class models to aggressive quantization.

Beyond the Benchmarks

While token generation speed is a crucial metric, remember that it's not the only factor influencing your AI development experience. Consider other factors:

- Memory capacity: The M1 Ultra supports up to 128GB of unified memory shared by the CPU and GPU, compared with 48GB on the RTX 6000 Ada. That extra capacity leaves room for larger models and datasets.

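- Software compatibility: The RTX 6000 Ada uses NVIDIA's CUDA stack, which most AI frameworks target first, while the M1 Ultra relies on Apple's Metal, supported by tools such as PyTorch's MPS backend and llama.cpp. Check that your specific framework and models run well on each platform before making a decision.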

- Cost: Both are high-end options, so budget matters. Keep in mind that the M1 Ultra comes as part of a complete computer (the Mac Studio), while the RTX 6000 Ada is a GPU that still needs a host workstation.
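Memory capacity decides which models you can load at all. The minimal sketch below estimates whether a model fits, assuming weight size equals parameter count times bits per weight divided by 8, plus a hypothetical 10% allowance for the KV cache and runtime buffers. It treats Llama 3 70B as a nominal 70 billion parameters, uses approximate bits-per-weight values (about 4.8 for Q4_K_M and 8.5 for Q8_0), ignores memory reserved by the operating system, and assumes the 128GB M1 Ultra configuration.

```python
# Rough check of whether a model fits in device memory. ASSUMPTIONS: weight size
# = parameters x bits-per-weight / 8, plus a hypothetical 10% allowance for the
# KV cache and runtime buffers; OS-reserved memory is ignored.

DEVICE_MEMORY_GB = {
    "M1 Ultra (128GB)": 128.0,
    "RTX 6000 Ada (48GB)": 48.0,
}

OVERHEAD = 1.10  # hypothetical allowance, not a measured value


def required_gb(params_billion: float, bits_per_weight: float) -> float:
    """Estimated memory (GB) for the weights plus the overhead allowance."""
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * OVERHEAD


def fits(params_billion: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the estimated requirement fits in the given memory."""
    return required_gb(params_billion, bits_per_weight) <= memory_gb


MODELS = [
    # (label, parameters in billions, approximate bits per weight)
    ("Llama 3 8B F16", 8.0, 16.0),
    ("Llama 3 70B Q4_K_M", 70.0, 4.8),
    ("Llama 3 70B Q8_0", 70.0, 8.5),
    ("Llama 3 70B F16", 70.0, 16.0),
]

for label, params_b, bpw in MODELS:
    verdicts = ", ".join(
        f"{device}: {'fits' if fits(params_b, bpw, mem) else 'too large'}"
        for device, mem in DEVICE_MEMORY_GB.items()
    )
    print(f"{label} (~{required_gb(params_b, bpw):.0f} GB): {verdicts}")
```

With these assumptions, a 4-bit 70B model just fits in 48GB (consistent with the RTX 6000 Ada's Llama 3 70B result), an 8-bit 70B model needs the M1 Ultra's larger memory, and an unquantized 70B model exceeds both.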

Practical Recommendations for Different Use Cases

Experimenting with various LLMs: The M1 Ultra is a great starting point for exploring different models and configurations, thanks to its steady performance and large unified memory.

Training large LLMs: These benchmarks measure inference only, but for training or fine-tuning, the RTX 6000 Ada's processing power and CUDA support are a significant advantage.

Real-time applications: If your application requires real-time text generation, the RTX 6000 Ada's higher generation speed (up to 130.99 tokens/second in these tests) makes it the better fit, provided your model fits in 48GB.

Budget constraints: If cost is a major concern, consider exploring other options like the NVIDIA RTX 3090 or the Apple Mac Studio with M1 Max.

The Future of LLM Development

The world of LLMs is evolving rapidly. Expect new models and architectures to emerge alongside advancements in hardware and software. As these advancements progress, the choice of devices will become even more critical. This article provides a starting point for navigating the complexities of local LLM development, empowering you to make informed decisions based on your specific needs and priorities.

FAQ

What are LLMs?

LLMs are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive amounts of data and can perform various language-related tasks, including translation, summarization, and question answering.

What is Quantization?

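Quantization is a technique used to reduce the size of LLMs while maintaining acceptable output quality. It stores model weights with fewer bits (for example, 8 or 4 bits instead of 16), which reduces memory usage. In the benchmarks above, lower precision mainly speeds up token generation, while processing speed stays similar or drops slightly.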
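To make the idea concrete, here is a simplified sketch of symmetric 8-bit block quantization, similar in spirit to the Q8_0 format: weights are split into blocks of 32, each block stores one scale (its largest absolute value divided by 127), and each weight is stored as a rounded integer between -127 and 127. This is an illustration, not llama.cpp's implementation; for example, the real format stores the scale as a 16-bit float, while this sketch keeps it as a Python float.

```python
import math

# Simplified symmetric 8-bit block quantization in the spirit of Q8_0:
# one scale per block of 32 weights. Illustration only; the real format
# stores the scale as a 16-bit float, this sketch keeps a Python float.

BLOCK_SIZE = 32


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to integers in [-127, 127] plus one shared scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 1.0  # avoid dividing by zero
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the block scale."""
    return [scale * v for v in q]


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Quantize a flat weight list block by block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


weights = [0.05 * math.sin(0.37 * i) for i in range(64)]  # toy weights
blocks = quantize(weights)
restored = [x for scale, q in blocks for x in dequantize_block(scale, q)]
max_error = max(abs(a - b) for a, b in zip(weights, restored))

# 8 bits per weight plus a 16-bit scale shared by 32 weights
bits_per_weight = (BLOCK_SIZE * 8 + 16) / BLOCK_SIZE
print(f"{len(blocks)} blocks, max error {max_error:.6f}, "
      f"~{bits_per_weight} bits/weight (vs 16 for F16)")
```

Each weight now costs 8 bits plus a share of its block's 16-bit scale, about 8.5 bits in total, roughly half of F16, at the price of a small rounding error.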

What is Processing Speed?

Processing speed (also called prompt processing) is the rate at which a device ingests the input tokens of a prompt before it starts responding. Higher processing speed shortens the wait before the first output token, which matters most for long prompts.

What is Token Generation Speed?

Token generation speed is the rate at which a device can generate new tokens, essentially creating new text. It's crucial for applications that require real-time text generation or interactive AI.
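The two metrics combine into a rough end-to-end response time: the prompt is processed first, then the reply is generated one token at a time. The sketch below assumes the two phases simply add up and ignores model loading and other overheads; the 2,000-token prompt and 500-token reply are hypothetical, while the speeds come from the benchmark tables.

```python
# Rough response-time estimate from the benchmark's two throughput numbers.
# Assumes prompt processing and generation run back to back and ignores
# model loading, sampling, and other overheads.

def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Seconds to ingest the prompt and then generate the reply."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical workload: a 2,000-token prompt and a 500-token reply.
PROMPT, OUTPUT = 2000, 500

configs = {  # (processing tok/s, generation tok/s) from the tables
    "M1 Ultra, Llama 2 7B Q4_0": (772.24, 74.93),
    "RTX 6000 Ada, Llama 3 8B Q4_K_M": (5560.94, 130.99),
    "RTX 6000 Ada, Llama 3 70B Q4_K_M": (547.03, 18.36),
}

for name, (pp, tg) in configs.items():
    print(f"{name}: ~{response_time_s(PROMPT, OUTPUT, pp, tg):.1f} s")
```

For this hypothetical workload, generation accounts for most of the time on every configuration, so the generation column is usually the better guide to how responsive an interactive application will feel.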

How do I choose the right device for my LLM development?

The best device depends on your specific needs and priorities. Consider your budget, the size of your LLM models, the type of tasks you'll be performing, and the performance demands of your application.

Keywords

Apple M1 Ultra, NVIDIA RTX 6000 Ada, LLM, Llama 2, Llama 3, Token Generation Speed, Benchmark, AI Development, Processing Speed, Generation Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance Analysis, GPU, CPU, Memory, Software Compatibility, Cost, Use Cases.