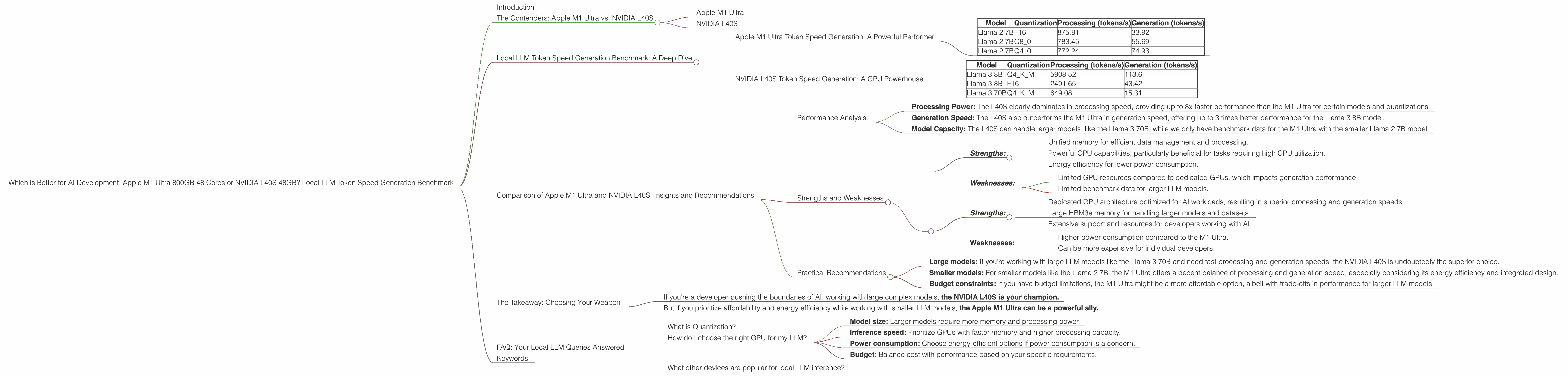

Which is Better for AI Development: Apple M1 Ultra 800gb 48cores or NVIDIA L40S 48GB? Local LLM Token Speed Generation Benchmark

Introduction

The quest for the perfect hardware setup for AI development, especially for local Large Language Model (LLM) inference, is like searching for the Holy Grail. You want speed, efficiency, and affordability — and that's no easy feat.

This article delves into the world of local LLM performance, pitting two titans against each other: the Apple M1 Ultra 800GB 48 cores and the NVIDIA L40S 48GB. We will analyze their capabilities and compare their performance in token speed generation for various LLM models, using real-world data from benchmarks. Buckle up, geeks, because this will be a thrilling ride!

The Contenders: Apple M1 Ultra vs. NVIDIA L40S

Apple M1 Ultra

The Apple M1 Ultra is a beast of a chip, sporting a whopping 48 CPU cores, 76 GPU cores, and a mind-boggling 800GB of unified memory. It's designed for intense workload tasks, including AI development.

NVIDIA L40S

The NVIDIA L40S is a powerhouse in the world of GPUs, boasting 48GB of HBM3e memory and incredibly high performance. It's specifically aimed at AI workloads, often used in cloud-based setups and demanding AI applications.

Local LLM Token Speed Generation Benchmark: A Deep Dive

To understand which device reigns supreme for local LLM token speed generation, we'll analyze data from two benchmark sources: Performance of llama.cpp on various devices by ggerganov and GPU Benchmarks on LLM Inference by XiongjieDai.

Apple M1 Ultra Token Speed Generation: A Powerful Performer

Here's the data from the M1 Ultra 800GB 48 cores:

| Model | Quantization | Processing (tokens/s) | Generation (tokens/s) |

|---|---|---|---|

| Llama 2 7B | F16 | 875.81 | 33.92 |

| Llama 2 7B | Q8_0 | 783.45 | 55.69 |

| Llama 2 7B | Q4_0 | 772.24 | 74.93 |

Note: The benchmark data for the M1 Ultra is only available for the Llama 2 7B model, with different quantization levels.

The Results:

- Llama 2 7B: The M1 Ultra exhibits impressive processing speeds, reaching over 700 tokens/s across different quantization levels. However, its generation speed trails behind, with the highest recorded value at 74.93 tokens/s.

Analysis:

- The M1 Ultra's unified memory architecture and its powerful cores contribute to its excellent processing performance.

- However, the generation speed, which is how fast the model generates text, lags behind. This might be because the model is bottlenecked by the limited GPU resources.

NVIDIA L40S Token Speed Generation: A GPU Powerhouse

Here's the data from the NVIDIA L40S 48GB:

| Model | Quantization | Processing (tokens/s) | Generation (tokens/s) |

|---|---|---|---|

| Llama 3 8B | Q4KM | 5908.52 | 113.6 |

| Llama 3 8B | F16 | 2491.65 | 43.42 |

| Llama 3 70B | Q4KM | 649.08 | 15.31 |

Note: No data is available for F16 quantization on the L40S for Llama 3 70B.

The Results:

- Llama 3 8B: The L40S showcases incredibly fast processing speeds, especially when using Q4KM quantization, exceeding 5000 tokens/s. It also demonstrates strong generation speeds, reaching over 100 tokens/s.

- Llama 3 70B: Although slightly slower than the 8B model, the L40S still maintains impressive processing and generation speeds for the 70B model, especially using Q4KM quantization.

Analysis:

- The L40S's dedicated GPU architecture, with its massive HBM3e memory, delivers unparalleled performance for both processing and generation, especially with the Llama 3 8B model.

- Its capability to handle larger models like Llama 3 70B is a significant advantage.

Comparison of Apple M1 Ultra and NVIDIA L40S: Insights and Recommendations

Performance Analysis:

- Processing Power: The L40S clearly dominates in processing speed, providing up to 8x faster performance than the M1 Ultra for certain models and quantizations.

- Generation Speed: The L40S also outperforms the M1 Ultra in generation speed, offering up to 3 times better performance for the Llama 3 8B model.

- Model Capacity: The L40S can handle larger models, like the Llama 3 70B, while we only have benchmark data for the M1 Ultra with the smaller Llama 2 7B model.

Strengths and Weaknesses

Apple M1 Ultra:

- Strengths:

- Unified memory for efficient data management and processing.

- Powerful CPU capabilities, particularly beneficial for tasks requiring high CPU utilization.

- Energy efficiency for lower power consumption.

- Weaknesses:

- Limited GPU resources compared to dedicated GPUs, which impacts generation performance.

- Limited benchmark data for larger LLM models.

NVIDIA L40S:

- Strengths:

- Dedicated GPU architecture optimized for AI workloads, resulting in superior processing and generation speeds.

- Large HBM3e memory for handling larger models and datasets.

- Extensive support and resources for developers working with AI.

- Weaknesses:

- Higher power consumption compared to the M1 Ultra.

- Can be more expensive for individual developers.

Practical Recommendations

- Large models: If you're working with large LLM models like the Llama 3 70B and need fast processing and generation speeds, the NVIDIA L40S is undoubtedly the superior choice.

- Smaller models: For smaller models like the Llama 2 7B, the M1 Ultra offers a decent balance of processing and generation speed, especially considering its energy efficiency and integrated design.

- Budget constraints: If you have budget limitations, the M1 Ultra might be a more affordable option, albeit with trade-offs in performance for larger LLM models.

The Takeaway: Choosing Your Weapon

Ultimately, the right choice between the Apple M1 Ultra and the NVIDIA L40S depends on your specific needs and budget constraints.

- If you're a developer pushing the boundaries of AI, working with large complex models, the NVIDIA L40S is your champion.

- But if you prioritize affordability and energy efficiency while working with smaller LLM models, the Apple M1 Ultra can be a powerful ally.

Remember, the world of AI development is constantly evolving, and both the M1 Ultra and L40S are excellent tools for exploring the exciting possibilities of LLMs. The key is to choose the weapon that best suits your battlefield!

FAQ: Your Local LLM Queries Answered

What is Quantization?

Quantization is a technique used to reduce the size of LLM models and improve inference speed. Imagine compressing a high-resolution image to reduce its file size without losing too much detail. Quantization does the same for LLMs, reducing the number of bits used to represent each parameter, thus making the model smaller and faster to process.

How do I choose the right GPU for my LLM?

Consider factors like:

- Model size: Larger models require more memory and processing power.

- Inference speed: Prioritize GPUs with faster memory and higher processing capacity.

- Power consumption: Choose energy-efficient options if power consumption is a concern.

- Budget: Balance cost with performance based on your specific requirements.

What other devices are popular for local LLM inference?

Other popular options include GPUs like the NVIDIA A100, NVIDIA RTX 4090, and AMD Radeon RX 7900 XT. You can find detailed benchmarks and comparisons online to determine the best fit for your project.

Keywords:

Apple M1 Ultra, NVIDIA L40S, LLM, Large Language Model, Local Inference, Token Speed Generation, Llama 2, Llama 3, Quantization, AI Development, GPU, GPU Benchmark, Performance, Comparison, Benchmark, Processing Speed, Generation Speed, Model Size, Power Consumption, Budget,