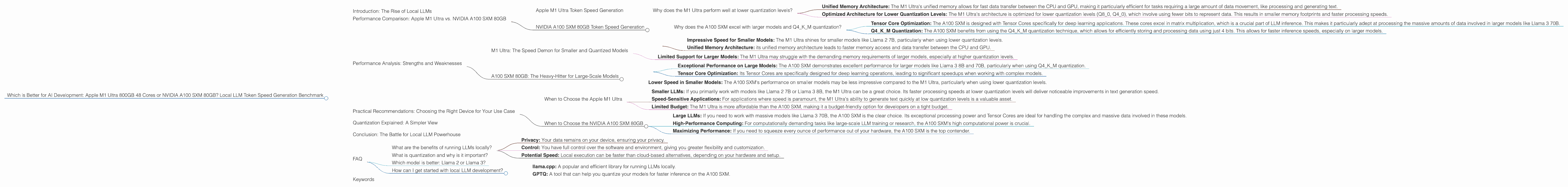

Which is Better for AI Development: Apple M1 Ultra (800 GB/s, 48-Core GPU) or NVIDIA A100 SXM 80GB? Local LLM Token Generation Speed Benchmark

Introduction: The Rise of Local LLMs

Large language models (LLMs) are revolutionizing how we interact with technology. From generating creative text to translating languages and writing code, LLMs are becoming increasingly powerful and accessible. While cloud-based LLMs dominate the landscape, running these models locally on your own hardware offers a new level of control, privacy, and potential speed.

This article compares two powerful hardware contenders, the Apple M1 Ultra (800 GB/s memory bandwidth, 48-core GPU) and the NVIDIA A100 SXM 80GB, focusing on their token generation speed for popular LLMs such as Llama 2 and Llama 3.

Performance Comparison: Apple M1 Ultra vs. NVIDIA A100 SXM 80GB

To understand the performance difference, we'll compare their token speed generation (tokens/second) for various LLM models and quantization levels, ultimately seeking to determine which device excels for specific use cases.
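"Generation speed" here means tokens produced per second once the prompt has been processed. As a minimal sketch of that measurement, assuming a hypothetical token stream from whatever runtime you use, the Python helper below starts its timer only after the first token arrives, so prompt processing is excluded. The stream and clock in the usage lines are fakes for illustration.

```python
import time
from typing import Callable, Iterable


def generation_tokens_per_second(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Tokens/second measured between receiving the first token and the last.

    The first token is consumed before the timer starts because its latency
    mostly reflects prompt processing, not steady-state generation.
    """
    stream = iter(tokens)
    if next(stream, None) is None:
        raise ValueError("the stream produced no tokens")
    start = clock()
    count = sum(1 for _ in stream)  # tokens generated after the first one
    elapsed = clock() - start
    if count == 0 or elapsed <= 0:
        raise ValueError("need at least two tokens and a positive elapsed time")
    return count / elapsed


# Usage with a fake stream and a fake clock (swap in a real model's token stream).
fake_clock = iter([10.0, 12.0]).__next__
print(generation_tokens_per_second(["tok"] * 11, clock=fake_clock))  # 10 tokens in 2 s -> 5.0
```

Dedicated tools such as llama.cpp's `llama-bench` follow the same idea and report prompt processing and generation rates separately.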

Apple M1 Ultra Token Generation Speed

The Apple M1 Ultra tested here pairs a 48-core GPU with 800 GB/s of memory bandwidth, shared by the CPU and GPU through its unified memory architecture. Let's analyze its performance:

| Model (Quantization) | Tokens/Second (Generation) |
|---|---|
| Llama 2 7B (F16) | 33.92 |
| Llama 2 7B (Q8_0) | 55.69 |
| Llama 2 7B (Q4_0) | 74.93 |

As the table shows, the M1 Ultra gets markedly faster as quantization becomes more aggressive: Llama 2 7B runs at 55.69 tokens/s with Q8_0 and 74.93 tokens/s with Q4_0, versus 33.92 tokens/s at F16, speedups of roughly 1.6x and 2.2x. Because the A100 SXM was not benchmarked on Llama 2 7B, these numbers cannot be compared with it directly.

Why does the M1 Ultra perform well at lower quantization levels?

The M1 Ultra excels in this setup for two main reasons:

Unified Memory Architecture: The CPU and GPU share a single pool of memory with 800 GB/s of bandwidth, so the GPU reads model weights directly instead of copying them across a PCIe bus. Text generation is dominated by exactly this kind of data movement.

Fewer Bytes per Weight: Generating each token requires reading essentially all of the model's weights from memory, so single-stream generation speed is limited mainly by memory bandwidth rather than compute. Lower-bit formats such as Q8_0 and Q4_0 shrink the model, so less data has to be read per token; that is why the M1 Ultra speeds up from F16 to Q8_0 to Q4_0.
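If token generation is limited by how fast weights can be read, then dividing memory bandwidth by the model's size gives a rough ceiling on tokens per second. The Python sketch below applies that to the M1 Ultra table. Its inputs are assumptions, not benchmark data: about 6.74 billion parameters for Llama 2 7B, the spec-sheet 800 GB/s, and bits-per-weight values derived from the Q8_0 and Q4_0 block layouts (32 values plus one 16-bit scale). It ignores the KV cache and any tensors stored in a different format.

```python
# Rough, bandwidth-bound ceiling for single-stream generation on the M1 Ultra.
# Assumptions: each token reads every weight once, ~6.74e9 parameters,
# and the full 800 GB/s is available. KV cache and overheads are ignored.

BANDWIDTH_GB_S = 800.0  # M1 Ultra unified memory bandwidth (spec sheet)
PARAMS = 6.74e9         # approximate Llama 2 7B parameter count (assumed)


def bits_per_weight(block_size: int, bits_per_value: int, scale_bytes: int) -> float:
    """Average storage cost of a block format: packed values plus one per-block scale."""
    return (block_size * bits_per_value + scale_bytes * 8) / block_size


FORMATS = {
    "F16": 16.0,
    "Q8_0": bits_per_weight(32, 8, 2),  # 32 int8 values + fp16 scale -> 8.5 bits
    "Q4_0": bits_per_weight(32, 4, 2),  # 32 4-bit values + fp16 scale -> 4.5 bits
}

MEASURED = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}  # tokens/s from the table


def ceiling_tokens_per_second(bpw: float) -> float:
    """Bandwidth divided by the size of the weights in GB."""
    model_gb = PARAMS * bpw / 8 / 1e9
    return BANDWIDTH_GB_S / model_gb


for name, bpw in FORMATS.items():
    ceiling = ceiling_tokens_per_second(bpw)
    measured = MEASURED[name]
    print(f"{name:5s} {bpw:4.1f} bits/weight  ceiling ~{ceiling:6.1f} tok/s  "
          f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured speed lands below its ceiling, and the gap widens at Q4_0, which hints that costs other than reading weights (dequantization, kernel overheads) start to matter once the model gets small. Treat the percentages as rough estimates.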

NVIDIA A100 SXM 80GB Token Generation Speed

The NVIDIA A100 SXM is a powerhouse in the world of GPUs, known for its Tensor Cores and exceptional performance in deep learning applications. Its performance numbers for local LLM execution are presented below:

| Model (Quantization) | Tokens/Second (Generation) |
|---|---|
| Llama 3 8B (Q4_K_M) | 133.38 |
| Llama 3 8B (F16) | 53.18 |
| Llama 3 70B (Q4_K_M) | 24.33 |

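We don't have A100 SXM results for Llama 2 7B, so the two tables cannot be compared model for model. The closest pair is Llama 3 8B at Q4_K_M on the A100 (133.38 tokens/s) versus Llama 2 7B at Q4_0 on the M1 Ultra (74.93 tokens/s): similar model sizes and 4-bit quantization, with the A100 roughly 1.8x faster. The A100 also runs Llama 3 70B at Q4_K_M at 24.33 tokens/s; there is no M1 Ultra result for that model here.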

Why does the A100 SXM excel with larger models and Q4_K_M quantization?

Tensor Cores and Memory Bandwidth: The A100 SXM's Tensor Cores accelerate the matrix multiplications at the heart of LLM inference, and its HBM2e memory delivers roughly 2 TB/s, about 2.5 times the M1 Ultra's 800 GB/s. Because generating each token requires reading the model's weights from memory, that bandwidth matters as much as raw compute, especially for large models like Llama 3 70B.

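Q4_K_M Quantization: Q4_K_M is one of llama.cpp's "k-quant" formats. It stores most weights in 4-bit blocks and keeps some more sensitive tensors at higher precision, averaging just under 5 bits per weight. Fewer bytes per weight means less data to read per token, which speeds up inference on any hardware, and it keeps Llama 3 70B well within the A100's 80 GB, which F16 weights (about 140 GB) would exceed.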
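The same back-of-envelope reasoning shows why the quantization format matters for the A100 results. The Python sketch below checks whether the weights fit in 80 GB and, if they do, computes a bandwidth-bound ceiling. Its inputs are approximations, not benchmark data: about 8.03 and 70.6 billion parameters for Llama 3 8B and 70B, an assumed average of 4.85 bits per weight for the mixed Q4_K_M format, and roughly 2,039 GB/s of A100 SXM 80GB bandwidth. It counts weights only.

```python
# Back-of-envelope fit and speed-ceiling check for the A100 SXM 80GB.
# Assumed figures (approximate): parameter counts, ~4.85 average bits/weight
# for Q4_K_M, ~2,039 GB/s bandwidth. KV cache and activations are ignored.

A100_MEMORY_GB = 80.0
A100_BANDWIDTH_GB_S = 2039.0

PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Measured generation speeds (tokens/s) from the A100 table.
MEASURED = {
    ("Llama 3 8B", "Q4_K_M"): 133.38,
    ("Llama 3 8B", "F16"): 53.18,
    ("Llama 3 70B", "Q4_K_M"): 24.33,
}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights alone in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_memory(size_gb: float, memory_gb: float = A100_MEMORY_GB) -> bool:
    """True if the weights alone fit in GPU memory (no headroom for the KV cache)."""
    return size_gb < memory_gb


for model, params in PARAMS.items():
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bpw)
        line = f"{model:12s} {fmt:7s} {size:6.1f} GB"
        if not fits_in_memory(size):
            print(line + "  does not fit in 80 GB")
            continue
        line += f"  ceiling ~{A100_BANDWIDTH_GB_S / size:5.1f} tok/s"
        measured = MEASURED.get((model, fmt))
        if measured is not None:
            line += f"  measured {measured:6.2f}"
        print(line)
```

Under these assumptions Llama 3 70B needs about 141 GB at F16 but roughly 43 GB at Q4_K_M, and each measured speed sits below its bandwidth ceiling.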

Performance Analysis: Strengths and Weaknesses

M1 Ultra: Strong on Smaller, Quantized Models

Strengths:

- Solid Speed on Smaller Models: On Llama 2 7B the M1 Ultra reaches 74.93 tokens/s at Q4_0, more than double its F16 speed.

- Unified Memory Architecture: The CPU and GPU share one high-bandwidth memory pool (800 GB/s), so model weights never need to be copied between separate CPU and GPU memory.

Weaknesses:

- Limited Support for Larger Models: These benchmarks include no M1 Ultra results beyond Llama 2 7B, and with at most 128 GB of unified memory it cannot hold very large models at high precision (Llama 3 70B at F16 needs roughly 140 GB for its weights alone).

A100 SXM 80GB: The Heavy-Hitter for Large-Scale Models

Strengths:

- Exceptional Performance on Large Models: The A100 SXM posts strong results on Llama 3 8B (133.38 tokens/s) and Llama 3 70B (24.33 tokens/s) with Q4_K_M quantization.

- Tensor Core Optimization: Its Tensor Cores are specifically designed for deep learning operations, leading to significant speedups when working with complex models.

Weaknesses:

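- Expensive for Smaller Models: These benchmarks show no speed weakness on smaller models (Llama 3 8B reaches 133.38 tokens/s at Q4_K_M, and there is no Llama 2 7B run to compare directly), but the A100 SXM costs far more than the M1 Ultra, which matters if small models are all you need.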

Practical Recommendations: Choosing the Right Device for Your Use Case

When to Choose the Apple M1 Ultra

- Smaller LLMs: If you primarily work with 7B-to-8B models like Llama 2 7B or Llama 3 8B (only Llama 2 7B was measured on it here), the M1 Ultra can be a good choice. It reaches about 75 tokens/s on Llama 2 7B at Q4_0, and its speed improves noticeably at lower quantization levels.

- Speed-Sensitive Applications: If your application tolerates low-bit quantization, the M1 Ultra more than doubles its Llama 2 7B generation speed, from 33.92 tokens/s at F16 to 74.93 tokens/s at Q4_0.

- Limited Budget: The M1 Ultra is far more affordable than the A100 SXM, which is generally sold as part of multi-GPU server systems rather than as a standalone card. That makes the M1 Ultra the budget-friendly option.

When to Choose the NVIDIA A100 SXM 80GB

- Large LLMs: If you need to work with large models like Llama 3 70B, the A100 SXM is the stronger choice: it runs Llama 3 70B at 24.33 tokens/s with Q4_K_M in these benchmarks, and its Tensor Cores and roughly 2 TB/s of memory bandwidth suit the heavy data movement these models involve.

- High-Performance Computing: For computationally demanding tasks like large-scale LLM training or research, the A100 SXM's high computational power is crucial.

- Maximizing Performance: If you need to squeeze every ounce of performance out of your hardware, the A100 SXM is the top contender.

Quantization Explained: A Simpler View

Think of quantization as a way of compressing the data used by an LLM. By using fewer bits to represent the information, LLMs can run faster and use less memory.

Imagine you're describing a color. With full precision, you might use 24 bits to represent its shades. Now, imagine you only have 8 bits. You'll still be able to describe the color, but the detail will be less precise. This is analogous to how quantization works with LLMs - it reduces the amount of information used, leading to faster processing and smaller model sizes.
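Here is the same idea in code: a simplified Python sketch of block-wise 4-bit quantization, loosely in the spirit of llama.cpp's Q4_0 (32 values sharing one scale). It is illustrative only; the rounding rule and storage layout are assumptions and differ from llama.cpp's actual implementation. Each weight becomes a small integer code plus a shared scale, and rounding keeps the per-weight reconstruction error at or below half the scale.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # number of values that share one scale factor


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization: one float scale plus a code in [-8, 7] per value."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to code +/-7
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate values from the shared scale and the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.1 * math.sin(i) for i in range(BLOCK_SIZE)]  # stand-in for real weights
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale={scale:.5f}  first codes={codes[:8]}  worst error={worst_error:.5f}")
```

Storing 32 four-bit codes plus a 16-bit scale takes 18 bytes instead of the 64 bytes needed at F16, which is where the memory and speed savings come from.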

Conclusion: The Battle for Local LLM Powerhouse

The choice between the Apple M1 Ultra and the NVIDIA A100 SXM depends on your specific needs. The M1 Ultra is a capable, more affordable option for smaller, quantized models, while the A100 SXM delivers higher throughput in these benchmarks and is the device shown handling large-scale models such as Llama 3 70B. Choosing the right device will help you get the most out of local LLM development.

FAQ

What are the benefits of running LLMs locally?

- Privacy: Your data remains on your device, ensuring your privacy.

- Control: You have full control over the software and environment, giving you greater flexibility and customization.

- Potential Speed: Local execution can be faster than cloud-based alternatives, depending on your hardware and setup.

What is quantization and why is it important?

Quantization is a technique that reduces the precision of the numbers used by an LLM. This can lead to faster processing, smaller model sizes, and lower memory requirements.

Which model is better: Llama 2 or Llama 3?

Both are capable model families. Llama 3 is the newer generation and generally scores higher on benchmarks and more complex tasks, while Llama 2 is older and has a large ecosystem of existing fine-tunes. The best choice depends on your specific use case.

How can I get started with local LLM development?

There are many open-source projects available to help you get started:

- llama.cpp: A popular, efficient C/C++ project for running LLMs locally; the quantization formats named in this article (Q8_0, Q4_0, Q4_K_M) come from it.

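- GPTQ: A post-training quantization method for GPU inference, available through libraries such as AutoGPTQ. It is an alternative to llama.cpp's formats, not something specific to the A100 SXM.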

Keywords

LLM, Llama 2, Llama 3, Apple M1 Ultra, NVIDIA A100 SXM 80GB, token speed generation, quantization, local AI development, GPU, GPU benchmarks, performance comparison, AI tools, deep learning, AI hardware, AI inference.