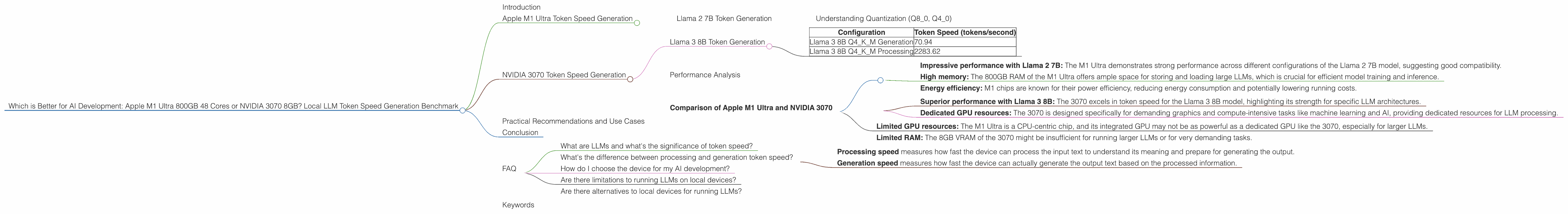

Which is Better for AI Development: Apple M1 Ultra (800 GB/s, 48 GPU Cores) or NVIDIA RTX 3070 8GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of AI development is buzzing with the potential of large language models (LLMs), and choosing the right hardware for running these models can make all the difference in speed, efficiency, and ultimately, the success of your projects.

This article is a head-to-head comparison of two contenders: the Apple M1 Ultra with 800 GB/s of memory bandwidth and a 48-core GPU, and the NVIDIA GeForce RTX 3070 with 8 GB of VRAM. We'll look at their prompt processing and token generation speed on the LLMs for which benchmark data is available, giving you the insights needed to decide which machine best suits your AI development needs.

Think of LLMs as AI brains that can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. They're like those amazing friends who always know the answer, but instead of just talking, they can actually write, code, and create.

These models are getting larger and more complex, demanding more computing power to run. This is where the battle between the Apple M1 Ultra and the NVIDIA 3070 comes in. Both are capable machines, but they have different strengths and weaknesses, which we'll explore in this article.

Apple M1 Ultra Token Speed Generation

The Apple M1 Ultra is a powerhouse of a chip, known for its incredible performance and efficiency. Let's see how it fares in generating tokens, the building blocks of text, for various LLM models.

Llama 2 7B Token Generation

The M1 Ultra delivers impressive results with the Llama 2 7B model:

| Configuration | Token Speed (tokens/second) |
|---|---|
| Llama 2 7B F16 Processing | 875.81 |
| Llama 2 7B F16 Generation | 33.92 |
| Llama 2 7B Q8_0 Processing | 783.45 |
| Llama 2 7B Q8_0 Generation | 55.69 |
| Llama 2 7B Q4_0 Processing | 772.24 |
| Llama 2 7B Q4_0 Generation | 74.93 |

Prompt processing runs at roughly 770 to 880 tokens/second across all three formats, while generation ranges from about 34 tokens/second at F16 to about 75 tokens/second at Q4_0. The M1 Ultra handles a 7B model comfortably at every precision tested.

Understanding Quantization (Q8_0, Q4_0)

Quantization is a technique used to reduce the size of the model and improve performance. It's like compressing a video file: you lose some quality, but the file gets much smaller and easier to share or stream.

Q8_0 stores each weight in roughly 8 bits, while Q4_0 uses roughly 4 bits. The fewer the bits, the smaller the model, usually at the cost of some accuracy.
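
To make the trade-off concrete, here is a minimal sketch of block-wise quantization in Python. It is a simplified symmetric round-to-nearest scheme, not the exact Q8_0 or Q4_0 layout used in the benchmark; the function names and the sample weights are made up for illustration.

```python
def quantize_block(values, bits):
    """Quantize one block of floats to signed integers sharing a single scale."""
    qmax = 2 ** (bits - 1) - 1                      # 127 for 8 bits, 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, codes = quantize_block(values, bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only to show the effect.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
error_8bit = max_error(weights, 8)
error_4bit = max_error(weights, 4)
print(f"8-bit max error: {error_8bit:.4f}")
print(f"4-bit max error: {error_4bit:.4f}")
```

With fewer bits there are fewer representable levels per block, so the rounding error grows; that is the "quality loss" in the video-compression analogy.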

For the M1 Ultra, generation speed improves noticeably with lower-bit quantization: Q4_0 reaches 74.93 tokens/second versus 33.92 at F16 (half-precision floating point). Prompt processing, by contrast, is slightly slower at Q4_0 than at F16.
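
The ratios behind that observation can be computed directly from the Llama 2 7B table above. This is a small sketch; the variable and function names are illustrative, and the numbers are the benchmark figures as listed.

```python
# Llama 2 7B on the M1 Ultra, tokens/second, copied from the table above.
processing = {"F16": 875.81, "Q8_0": 783.45, "Q4_0": 772.24}
generation = {"F16": 33.92, "Q8_0": 55.69, "Q4_0": 74.93}


def relative_to_f16(speeds):
    """Return each format's speed as a multiple of the F16 speed."""
    return {fmt: tps / speeds["F16"] for fmt, tps in speeds.items()}


generation_ratio = relative_to_f16(generation)
processing_ratio = relative_to_f16(processing)

for fmt in generation:
    print(f"{fmt}: generation x{generation_ratio[fmt]:.2f}, "
          f"processing x{processing_ratio[fmt]:.2f}")
```

On this data, Q4_0 generates a little over twice as fast as F16, while its prompt processing is about 12% slower.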

NVIDIA 3070 Token Speed Generation

The NVIDIA 3070 is a popular choice for gaming and other demanding tasks, and it also holds its own in the LLM arena.

Llama 3 8B Token Generation

The NVIDIA 3070 was tested with the Llama 3 8B model:

| Configuration | Token Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 70.94 |
| Llama 3 8B Q4_K_M Processing | 2283.62 |

Note: The benchmark data does not include the 3070's performance with F16 for Llama 3 8B or any results with Llama 3 70B. This means we cannot directly compare the 3070 with the M1 Ultra on these models.

Performance Analysis

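The two devices were benchmarked on different models, so any comparison is indirect. With that caveat, the 3070's prompt processing on Llama 3 8B Q4_K_M (2283.62 tokens/second) is roughly three times the M1 Ultra's on Llama 2 7B Q4_0 (772.24 tokens/second), while generation speed is close: 70.94 versus 74.93 tokens/second.

However, this is just a snapshot of one model per device. Notably, the M1 Ultra results cover three precision formats of Llama 2 7B, including F16, for which the 3070 has no data.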
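
As a rough illustration, the cross-device ratios can be computed from the two tables. Because the rows pair Llama 2 7B Q4_0 with Llama 3 8B Q4_K_M, the result is indicative only; the dictionary names are made up for this sketch.

```python
# Tokens/second from the two tables above. The models differ, so these
# ratios are an indirect comparison, not a like-for-like measurement.
m1_ultra = {"processing": 772.24, "generation": 74.93}    # Llama 2 7B Q4_0
rtx_3070 = {"processing": 2283.62, "generation": 70.94}   # Llama 3 8B Q4_K_M

ratios = {phase: rtx_3070[phase] / m1_ultra[phase] for phase in m1_ultra}

for phase, ratio in ratios.items():
    print(f"{phase}: 3070 runs at x{ratio:.2f} the M1 Ultra's speed")
```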

Comparison of Apple M1 Ultra and NVIDIA 3070

Strengths of the Apple M1 Ultra:

- Impressive performance with Llama 2 7B: The M1 Ultra delivers strong results across the F16, Q8_0, and Q4_0 configurations of the Llama 2 7B model.

- High memory bandwidth and capacity: The M1 Ultra pairs 800 GB/s of memory bandwidth with up to 128 GB of unified memory shared by the CPU and GPU, offering ample space for loading large LLMs, which is crucial for efficient model training and inference.

- Energy efficiency: M1 chips are known for their power efficiency, reducing energy consumption and potentially lowering running costs.

Strengths of the NVIDIA 3070:

- Fast prompt processing with Llama 3 8B: The 3070 reaches 2283.62 tokens/second when processing prompts with Llama 3 8B Q4_K_M, the highest processing figure in this benchmark.

- Dedicated GPU resources: The 3070 is designed specifically for demanding graphics and compute-intensive tasks like machine learning and AI, providing dedicated resources for LLM processing.

Weaknesses of the Apple M1 Ultra:

- Limited GPU resources: The M1 Ultra is a system-on-chip with an integrated GPU, which may not match a dedicated GPU like the 3070 in raw compute. Its prompt processing figures here are well below the 3070's, although the models tested differ.

Weaknesses of the NVIDIA 3070:

- Limited VRAM: The 3070's 8 GB of VRAM is insufficient for larger LLMs and for higher-precision formats of mid-sized ones, which is consistent with the benchmark including only a 4-bit result for this card.
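
A back-of-the-envelope check shows why memory matters here. The sketch below estimates weight size from parameter count and bits per weight. The bits-per-weight values are approximations (the Q4_K_M figure in particular is an assumed average), the parameter count is the nominal 8 billion, and the 1.5 GB headroom for the KV cache and runtime buffers is a placeholder, not a measured value.

```python
# Approximate bits per weight. F16, Q8_0 and Q4_0 follow from their block
# layouts; the Q4_K_M value is an assumed average, not an exact figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}


def weights_gb(params_billion, fmt):
    """Estimated size of the model weights in GB (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion, fmt, memory_gb, headroom_gb=1.5):
    """True if the weights plus a hypothetical headroom fit in memory_gb."""
    return weights_gb(params_billion, fmt) + headroom_gb <= memory_gb


for fmt in ("F16", "Q4_K_M"):
    size = weights_gb(8.0, fmt)  # nominal parameter count for Llama 3 8B
    print(f"Llama 3 8B {fmt}: ~{size:.1f} GB, fits in 8 GB: {fits(8.0, fmt, 8.0)}")
```

Under these assumptions, an 8B model at F16 needs about 16 GB for weights alone, twice the 3070's VRAM, while a 4-bit version fits with room to spare.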

Practical Recommendations and Use Cases

For developers working with models like Llama 2 7B, the Apple M1 Ultra offers a compelling combination of speed and efficiency, and it is the only one of the two devices with F16 and Q8_0 results in this benchmark. Its large unified memory is also a big advantage when a model or its context does not fit in 8 GB.

However, if your models fit in 8 GB of VRAM, as Llama 3 8B does at Q4_K_M, and your workload is dominated by prompt processing, the NVIDIA 3070 might be a better fit, since its dedicated GPU posts the highest processing speed in these results.

It's worth noting that the data might not be comprehensive, and there are many other factors to consider when choosing hardware for local LLM development, such as the specific needs of your project, your budget, and the availability of software and libraries for your chosen device.

Conclusion

The choice between the Apple M1 Ultra and the NVIDIA 3070 for local LLM development depends on your specific requirements. If you value energy efficiency, higher-precision formats, or memory headroom for models beyond 8 GB, the M1 Ultra is a solid contender. If your models fit in 8 GB of VRAM and you want the fast prompt processing of a dedicated GPU, the NVIDIA 3070 is a strong option.

Ultimately, understanding the strengths and weaknesses of each device, along with your specific project needs, is the key to making the right decision for your AI development journey.

FAQ

What are LLMs and what's the significance of token speed?

LLMs are AI models that can understand and generate human-like text. Think of them as powerful AI assistants that can write, translate, summarize, and even create code. Token speed is a measure of how quickly a device can process and generate tokens, the building blocks of text, which directly impacts the speed of tasks like text generation, chatbots, and code completion. Higher token speed means faster LLM operations.

What's the difference between processing and generation token speed?

- Processing speed measures how fast the device can process the input text to understand its meaning and prepare for generating the output.

- Generation speed measures how fast the device can actually generate the output text based on the processed information.
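
The two speeds combine into a rough response-time estimate: time to read the prompt plus time to write the answer. The sketch below assumes both rates stay constant and ignores model loading and other overhead; the function name and the workload sizes are hypothetical.

```python
def response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough end-to-end time: process the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Benchmark figures from this article (a different model on each device).
m1_ultra = {"processing_tps": 772.24, "generation_tps": 74.93}    # Llama 2 7B Q4_0
rtx_3070 = {"processing_tps": 2283.62, "generation_tps": 70.94}   # Llama 3 8B Q4_K_M

# Hypothetical workload: a 1000-token prompt and a 200-token answer.
for name, speeds in (("M1 Ultra", m1_ultra), ("RTX 3070", rtx_3070)):
    seconds = response_seconds(1000, 200, **speeds)
    print(f"{name}: about {seconds:.1f} s")
```

For long prompts with short answers the processing term dominates; for short prompts with long answers the generation term does, so the faster device can change with the workload.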

How do I choose the device for my AI development?

Consider the size of the LLM you'll be working with, your budget, the specific tasks you'll be performing, and the availability of software and libraries for your chosen device. The M1 Ultra is a good choice when you need memory headroom, higher-precision formats, or energy efficiency. The NVIDIA 3070 is better suited to quantized models that fit in 8 GB of VRAM and to prompt-heavy tasks that benefit from a dedicated GPU.

Are there limitations to running LLMs on local devices?

Yes, local devices may not have enough processing power, memory, or resources to handle larger and more complex LLMs efficiently. You might need to compromise on model size, features, and speed when working locally.

Are there alternatives to local devices for running LLMs?

Yes, cloud-based platforms like Google Colab, Amazon SageMaker, and Hugging Face Spaces offer powerful infrastructure and resources for running LLMs without the need for expensive local hardware.

Keywords

LLM, Apple M1 Ultra, NVIDIA 3070, token speed, AI development, Llama 2, Llama 3, GPU, RAM, processing speed, generation speed, quantization, F16, Q8_0, Q4_0, benchmark, performance, recommendations, use cases, cloud computing, Google Colab, Amazon SageMaker, Hugging Face Spaces.