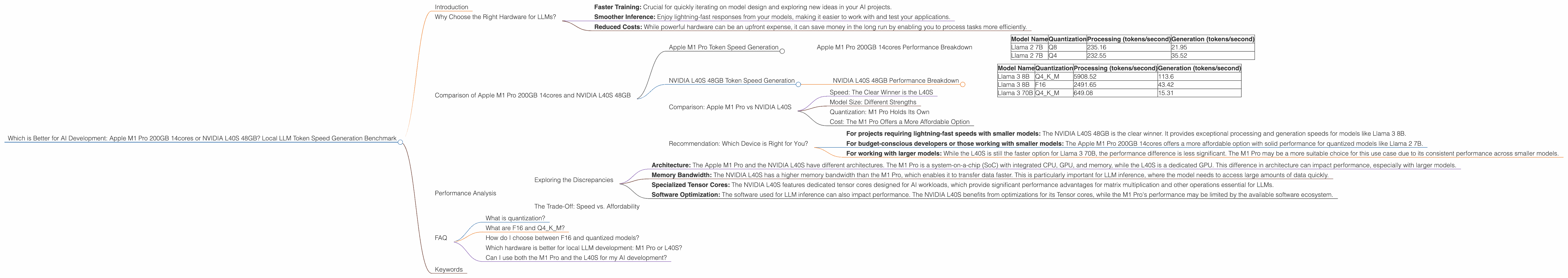

Which is Better for AI Development: Apple M1 Pro (200 GB/s, 14 GPU Cores) or NVIDIA L40S 48GB? Local LLM Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is exploding, and with it the demand for hardware that can run these computationally intensive models. But which hardware is best? Whether you're a developer building AI applications or a data scientist crunching numbers, the device you choose makes a significant difference to your workflow. This article compares two popular choices, the Apple M1 Pro (200 GB/s memory bandwidth, 14 GPU cores) and the NVIDIA L40S 48GB, focusing on local LLM token generation speed. We'll walk through the benchmark results and highlight strengths and weaknesses to help you make the right decision for your needs.

Why Choose the Right Hardware for LLMs?

Think of it this way: loading a video game on a low-end computer is like trying to run a marathon with a broken leg. You'll get there eventually, but it'll be a painful, slow, and frustrating experience. The same applies to LLMs. The right hardware can mean:

- Faster Training: Crucial for quickly iterating on model design and exploring new ideas in your AI projects.

- Smoother Inference: Enjoy lightning-fast responses from your models, making it easier to work with and test your applications.

- Reduced Costs: While powerful hardware can be an upfront expense, it can save money in the long run by enabling you to process tasks more efficiently.

Comparison of Apple M1 Pro (200 GB/s, 14 GPU Cores) and NVIDIA L40S 48GB

Apple M1 Pro Token Speed Generation

The Apple M1 Pro benchmarked here is the variant with 200 GB/s of memory bandwidth and 14 GPU cores, a capable chip for local LLM development. Let's see how it fares with the Llama 2 series:

Apple M1 Pro (200 GB/s, 14 GPU Cores) Performance Breakdown

| Model Name | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8 | 235.16 | 21.95 |
| Llama 2 7B | Q4 | 232.55 | 35.52 |

Observations:

- Solid Performance with Quantization: The M1 Pro shows good performance with quantized Llama 2 7B models using Q8 and Q4 quantization, which are methods of reducing the size of the model without sacrificing too much accuracy.

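- Generation Slower Than Processing: Prompt processing runs at roughly 230 tokens/second, while generation reaches 22 to 36 tokens/second. This gap is typical: a prompt can be processed in parallel as a batch, whereas generation produces one token at a time and must read the model weights for every token, so it tends to be limited by memory bandwidth rather than compute.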

- No F16 Results: No results are listed for Llama 2 7B in F16 on the M1 Pro. This could be due to memory limits (a 7B model in F16 needs roughly 13 to 14 GB for the weights alone) or simply a lack of benchmark data.
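To see how the two speeds combine in practice, here is a minimal sketch that estimates end-to-end response time from the M1 Pro Q4 figures in the table above. The 512-token prompt and 256-token answer are hypothetical workload sizes chosen for illustration, and the function name is an assumption, not part of any benchmark tool.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate latency: the prompt is processed in bulk, then output tokens are generated one by one."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# M1 Pro, Llama 2 7B Q4 figures from the table above (tokens/second).
M1_PRO_Q4 = {"processing_tps": 232.55, "generation_tps": 35.52}

# Hypothetical workload: 512-token prompt, 256-token answer.
total = response_time_seconds(512, 256, **M1_PRO_Q4)
print(f"Estimated response time: {total:.1f} s")
```

Under these assumptions the generation phase accounts for most of the wait, which is why the generation column usually matters more than the processing column for chat-style use.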

NVIDIA L40S 48GB Token Speed Generation

The NVIDIA L40S 48GB is a high-end GPU designed for demanding workloads, including AI development. Let's examine its performance with the Llama 3 series:

NVIDIA L40S 48GB Performance Breakdown

| Model Name | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4KM | 5908.52 | 113.6 |
| Llama 3 8B | F16 | 2491.65 | 43.42 |
| Llama 3 70B | Q4KM | 649.08 | 15.31 |

Observations:

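- Much Faster, With a Caveat: The L40S is significantly faster than the M1 Pro in both processing and generation. Comparing 4-bit results, it processes prompts about 25 times faster (5908.52 vs 232.55 tokens/second) and generates about 3 times faster (113.6 vs 35.52 tokens/second). The comparison is not like-for-like, since the L40S was measured on Llama 3 8B and the M1 Pro on Llama 2 7B, so treat these ratios as rough indicators.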

- Strong F16 Performance: The L40S demonstrates impressive performance with F16 models, indicating its ability to handle higher-precision computations.

- Lower Speed for Larger Models: While the L40S excels with smaller models, performance drops with larger models like Llama 3 70B. This is expected as larger models require more memory and computation.

- No F16 Results for Llama 3 70B: No F16 results are available for Llama 3 70B. The likely reason is memory: 70 billion parameters at 2 bytes each need about 140 GB for the weights alone, well beyond the card's 48 GB.
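The gap between the two devices can be put into numbers directly from the two tables. The sketch below simply divides the L40S figures by the M1 Pro figures for the 4-bit rows; the dictionary layout and the `speedup` helper are illustrative choices, and the models differ (Llama 3 8B vs Llama 2 7B), so the ratios are only a rough guide.

```python
# Tokens/second from the two tables above: (processing, generation).
M1_PRO = {
    ("Llama 2 7B", "Q8"): (235.16, 21.95),
    ("Llama 2 7B", "Q4"): (232.55, 35.52),
}
L40S = {
    ("Llama 3 8B", "Q4KM"): (5908.52, 113.6),
    ("Llama 3 8B", "F16"): (2491.65, 43.42),
    ("Llama 3 70B", "Q4KM"): (649.08, 15.31),
}


def speedup(fast, slow):
    """Return (processing ratio, generation ratio) of `fast` over `slow`."""
    return fast[0] / slow[0], fast[1] / slow[1]


pp_ratio, tg_ratio = speedup(L40S[("Llama 3 8B", "Q4KM")], M1_PRO[("Llama 2 7B", "Q4")])
print(f"processing: {pp_ratio:.1f}x, generation: {tg_ratio:.1f}x")
```

The processing ratio is far larger than the generation ratio, which suggests the L40S's advantage is greatest on long prompts and narrower for token-by-token output.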

Comparison: Apple M1 Pro vs NVIDIA L40S

Speed: The Clear Winner is the L40S

The L40S dominates the speed race. Against the M1 Pro's 4-bit Llama 2 7B results, its Llama 3 8B results are roughly 25 times faster in prompt processing and 3 times faster in generation. These benchmarks measure inference only, not training, but the gap still means noticeably shorter waits for long prompts and long outputs.

Model Size: Different Strengths

The L40S is fastest with smaller models like Llama 3 8B, and its generation speed falls to 15.31 tokens/second on Llama 3 70B. The M1 Pro was only benchmarked on Llama 2 7B, so the data says nothing about how it handles larger models. With at most 32 GB of unified memory, it cannot hold a 4-bit 70B model, which needs roughly 40 GB.

Quantization: M1 Pro Holds Its Own

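The M1 Pro demonstrates solid performance with Q8 and Q4 quantized Llama 2 7B models, and Q4 generates about 60% faster than Q8 (35.52 vs 21.95 tokens/second). The L40S also benefits from quantization: Llama 3 8B generates at 113.6 tokens/second in Q4KM versus 43.42 in F16. The choice between quantized and F16 models comes down to a trade-off between speed and memory on one side and accuracy on the other. For applications where speed is paramount, quantized models are a good choice.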

Cost: The M1 Pro Offers a More Affordable Option

The Apple M1 Pro (200 GB/s, 14 GPU cores) is typically more affordable than the NVIDIA L40S 48GB. This makes the M1 Pro an attractive option for budget-conscious developers or those working with smaller models.

Recommendation: Which Device is Right for You?

Here's a breakdown to guide your decision:

- For projects requiring lightning-fast speeds with smaller models: The NVIDIA L40S 48GB is the clear winner. It provides exceptional processing and generation speeds for models like Llama 3 8B.

- For budget-conscious developers or those working with smaller models: The Apple M1 Pro (200 GB/s, 14 GPU cores) offers a more affordable option with solid performance for quantized models like Llama 2 7B.

- For working with larger models: The L40S is the only one of the two with benchmark results for Llama 3 70B (15.31 tokens/second with Q4KM), and its 48 GB of memory is what makes that possible. The M1 Pro tops out at 32 GB of unified memory, so it is better kept for smaller models such as Llama 2 7B.

Performance Analysis

Exploring the Discrepancies

The differences in performance between the M1 Pro and L40S can be attributed to several factors:

- Architecture: The Apple M1 Pro and the NVIDIA L40S have different architectures. The M1 Pro is a system-on-a-chip (SoC) with integrated CPU, GPU, and memory, while the L40S is a dedicated GPU. This difference in architecture can impact performance, especially with larger models.

- Memory Bandwidth: The NVIDIA L40S has much higher memory bandwidth than the M1 Pro (about 864 GB/s versus 200 GB/s, per the vendors' specifications), which enables it to move data faster. This is particularly important for token generation, where the model weights must be read from memory for every token.

- Specialized Tensor Cores: The NVIDIA L40S features dedicated tensor cores designed for AI workloads, which provide significant performance advantages for matrix multiplication and other operations essential for LLMs.

- Software Optimization: The software used for LLM inference can also impact performance. The NVIDIA L40S benefits from optimizations for its Tensor cores, while the M1 Pro's performance may be limited by the available software ecosystem.
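The memory bandwidth point can be turned into a rough back-of-the-envelope check. If every generated token requires reading all the weights once, generation speed cannot exceed bandwidth divided by model size. The sketch below applies that rule of thumb; the bandwidth figures are vendor specifications and the model file sizes are approximate assumptions, not values from the benchmark.

```python
def generation_upper_bound_tps(bandwidth_gb_per_s, model_size_gb):
    """Rule of thumb: each token reads all weights once, so tokens/s <= bandwidth / model size."""
    return bandwidth_gb_per_s / model_size_gb


# Assumed inputs: vendor bandwidth specs and approximate 4-bit weight sizes in GB.
# "measured" is the generation speed from the benchmark tables.
devices = {
    "M1 Pro (Llama 2 7B Q4)": {"bandwidth": 200.0, "model_gb": 3.8, "measured": 35.52},
    "L40S (Llama 3 8B Q4KM)": {"bandwidth": 864.0, "model_gb": 4.9, "measured": 113.6},
}

for name, d in devices.items():
    bound = generation_upper_bound_tps(d["bandwidth"], d["model_gb"])
    share = d["measured"] / bound
    print(f"{name}: bound {bound:.0f} tok/s, measured {d['measured']} tok/s ({share:.0%} of bound)")
```

Both measured speeds land below their estimated ceilings, which is consistent with generation being largely bandwidth-limited on both devices. The estimate ignores the KV cache and other overheads, so it is only a ceiling, not a prediction.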

The Trade-Off: Speed vs. Affordability

The speed of the L40S is undeniable, but it comes at a higher cost. The M1 Pro, while not as fast, provides a more affordable option for budget-conscious developers. Deciding between the two often comes down to the specific needs of your project and your budget constraints.

Analogy: Imagine a race between a high-performance sports car and a well-maintained family sedan. The sports car might be faster on the track, but it's also more expensive, requires specialized maintenance, and may not be as practical for everyday commutes. The family sedan offers a more affordable, reliable, and practical option for most daily needs.

FAQ

What is quantization?

Quantization is a technique used to reduce the size of an LLM without sacrificing too much accuracy. It's like compressing an image file – you lose some detail, but the overall image is still recognizable.
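As a toy illustration of the idea, the sketch below quantizes a handful of made-up weights with a single shared scale (symmetric "absmax" quantization) and measures the round-trip error at 8 and 4 bits. This is a deliberately simplified scheme for illustration; real formats such as the ones benchmarked here work on blocks of weights and are more elaborate.

```python
def quantize(values, bits=8):
    """Symmetric absmax quantization: map floats to signed integers with one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # fall back to 1.0 if all values are zero
    return [round(v / scale) for v in values], scale


def dequantize(q_values, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in q_values]


def max_error(values, bits):
    """Largest absolute difference between the original and round-tripped values."""
    q_values, scale = quantize(values, bits)
    restored = dequantize(q_values, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example weights
for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid and a larger error, which is the accuracy cost paid for the smaller size.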

What are F16 and Q4KM?

These are different precision formats used for storing and processing LLMs. F16 (half-precision floating point) is less precise than F32 (single-precision floating point) but uses half the memory. Q4KM (written Q4_K_M in llama.cpp) is a quantization format that stores weights in roughly 4 to 5 bits each on average. The choice of precision affects speed, memory use, and accuracy.
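The memory effect of these formats is easy to estimate: parameters times bits per weight, divided by 8, gives bytes. The sketch below applies that to the Llama 3 models against the L40S's 48 GB. The 4.5 bits-per-weight figure for the 4-bit format is an assumed average for illustration, and the estimate covers weights only, not the KV cache or activations.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


L40S_VRAM_GB = 48

# (label, parameters in billions, bits per weight); 4.5 bits is an assumed average.
configs = [
    ("Llama 3 8B F16", 8, 16),
    ("Llama 3 70B F16", 70, 16),
    ("Llama 3 70B 4-bit (assumed 4.5 bits)", 70, 4.5),
]

for label, params, bits in configs:
    need = weight_memory_gb(params, bits)
    verdict = "fits" if need < L40S_VRAM_GB else "does not fit"
    print(f"{label}: about {need:.0f} GB, {verdict} in {L40S_VRAM_GB} GB")
```

This lines up with the benchmark tables: the 70B model appears only in its 4-bit form, while the 8B model has both F16 and 4-bit results.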

How do I choose between F16 and quantized models?

The choice between F16 and quantization depends on the specific trade-off between speed and accuracy. If speed is more important, then quantized models are a good choice. If accuracy is more crucial, then F16 models might be preferred.

Which hardware is better for local LLM development: M1 Pro or L40S?

It depends on your needs. The L40S is faster across the board and can run models as large as Llama 3 70B (Q4KM), but it is more expensive. The M1 Pro is more affordable and offers solid performance with smaller quantized models.

Can I use both the M1 Pro and the L40S for my AI development?

Absolutely! You can combine the strengths of both by using the M1 Pro for prototyping and development and then deploying your models on the L40S for faster inference.

Keywords

Apple M1 Pro, NVIDIA L40S, LLM, token speed generation, Llama 2, Llama 3, AI development, performance benchmark, hardware comparison, GPU, processing, generation, quantization, F16, Q8, Q4, cost, speed, accuracy, budget, data science, developer, AI, machine learning, deep learning.