Which is Better for AI Development: Apple M1 Pro (200 GB/s, 14 GPU Cores) or Apple M2 Max (400 GB/s, 30 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of artificial intelligence is buzzing with excitement about large language models (LLMs). These AI powerhouses are capable of generating human-quality text, translating languages, and even writing code. But to unlock the full potential of LLMs, you need the right hardware.

This article compares two popular Apple chips, the M1 Pro and the M2 Max, when running LLMs locally. We'll benchmark how fast each chip processes input tokens and generates new ones at several quantization levels, and see which one comes out ahead.

Comparison of Apple M1 Pro and Apple M2 Max for LLM Token Generation Speed

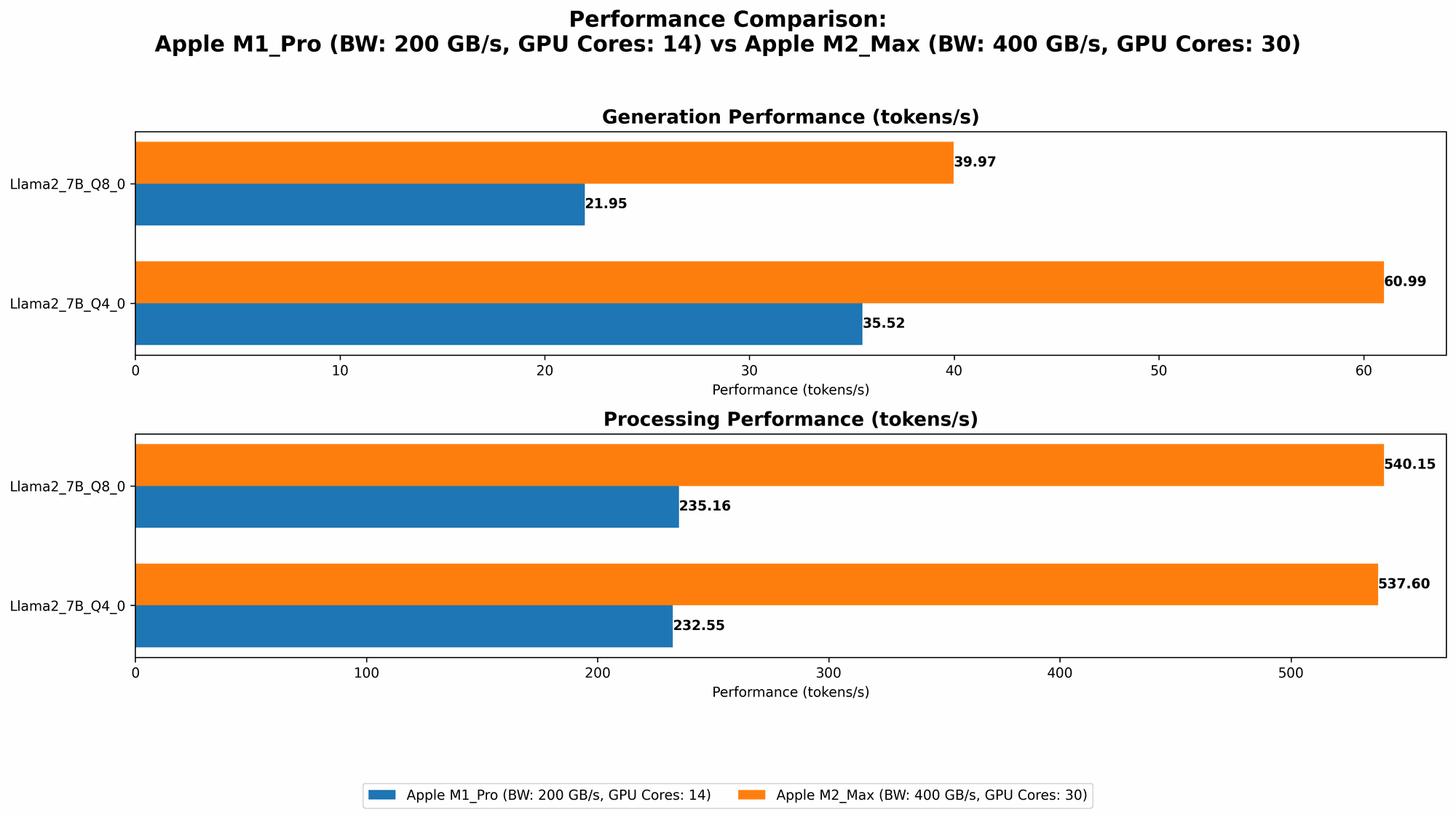

Let's get our hands dirty! We'll compare the token generation speed of the Apple M1 Pro and M2 Max chips using the Llama 2 7B model. Our goal is to identify the best chip for different scenarios and provide concrete recommendations.

Apple M1 Pro (14 GPU Cores) Token Generation Speed

The Apple M1 Pro is a powerful chip, but how does it fare against the newer M2 Max at LLM token generation? We'll look at its performance across several quantization levels, which shrink the model by storing weights at lower precision, at some cost in accuracy.

Data points:

- 200 GB/s Memory Bandwidth: This determines how quickly model weights can be read from the unified memory shared by the CPU and GPU.

- 14 GPU Cores: Each core contributes to parallel processing, which is essential for accelerating LLM workloads.

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q8_0 | 235.16 | 21.95 |
| Q4_0 | 232.55 | 35.52 |
| F16 | Data not available | Data not available |

Key Takeaways:

- Processing Is Nearly Identical Across Quantization Levels: The M1 Pro processes 235.16 tokens per second with Q8_0 and 232.55 with Q4_0, so the quantization level makes little difference here.

- Generation Lags Behind Processing: Generation is far slower than processing, and the quantization level matters much more. Q4_0 generates 35.52 tokens per second, compared with 21.95 for Q8_0.

Apple M1 Pro (16 GPU Cores) Token Generation Speed

The M1 Pro chip can also be configured with 16 GPU cores. Let's see how the two extra cores affect LLM token generation.

Data points:

- 200 GB/s Memory Bandwidth: The same as the 14-core configuration.

- 16 GPU Cores: The additional cores provide more parallel processing power.

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q8_0 | 270.37 | 22.34 |
| Q4_0 | 266.25 | 36.41 |
| F16 | 302.14 | 12.75 |

Key Takeaways:

- Processing Power Up: With the added GPU cores, processing speed rises by about 15% (270.37 versus 235.16 tokens per second with Q8_0).

- Generation Bottleneck: Generation speed barely changes: 22.34 versus 21.95 tokens per second with Q8_0, and 36.41 versus 35.52 with Q4_0. F16, which is unquantized 16-bit floating point, has the highest processing speed (302.14) but the lowest generation speed (12.75).

Apple M2 Max (30 GPU Cores) Token Generation Speed

The Apple M2 Max is a newer, higher-end chip with twice the memory bandwidth of the M1 Pro and many more GPU cores. Let's see how it stacks up against the M1 Pro in LLM token generation speed.

Data points:

- 400 GB/s Memory Bandwidth: Double the bandwidth of the M1 Pro, allowing for faster data transfer.

- 30 GPU Cores: Substantially more cores than the M1 Pro, providing more parallel processing power.

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q8_0 | 540.15 | 39.97 |
| Q4_0 | 537.6 | 60.99 |
| F16 | 600.46 | 24.16 |

Key Takeaways:

- Massive Processing Power: The M2 Max processes 540.15 tokens per second with Q8_0, roughly 2.3 times the 14-core M1 Pro's 235.16.

- Generation Speed Leap: The M2 Max also delivers a large boost in generation speed, reaching almost 40 tokens per second with Q8_0 and 60.99 with Q4_0.

- F16 Performance: With F16, the M2 Max generates 24.16 tokens per second, close to double the 16-core M1 Pro's 12.75, which makes running the model at full 16-bit precision more practical.

Apple M2 Max (38 GPU Cores) Token Generation Speed

The M2 Max chip can also be configured with 38 GPU cores. Let's explore how this configuration fares.

Data points:

- 400 GB/s Memory Bandwidth: The same as the 30-core configuration.

- 38 GPU Cores: The additional cores provide even more parallel processing power.

| Quantization Level | Processing Speed (tokens/s) | Generation Speed (tokens/s) |
|---|---|---|
| Q8_0 | 677.91 | 41.83 |
| Q4_0 | 671.31 | 65.95 |
| F16 | 755.67 | 24.65 |

Key Takeaways:

- Even Faster Processing: The additional cores raise processing speed by about 25% (677.91 versus 540.15 tokens per second with Q8_0).

- Modest Generation Speed Gain: Generation speed benefits less from the extra cores, rising from 60.99 to 65.95 tokens per second with Q4_0 (about 8%).

- F16 Trade-Off: F16 again has the highest processing speed (755.67) and the lowest generation speed (24.65), almost unchanged from the 30-core configuration.

Performance Analysis: Apple M1 Pro vs Apple M2 Max

Now that we've examined the benchmark results, let's dive into a more detailed analysis to understand the performance differences and pinpoint the strengths and weaknesses of each chip.
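As a quick way to quantify the gap, the figures from the tables above can be turned into speedup ratios. The following is a minimal sketch: the dictionary names and the `speedups` helper are illustrative, and only the numbers come from the benchmark tables.

```python
# Benchmark figures copied from the tables above (Llama 2 7B, tokens/second).
# Each entry is (processing speed, generation speed).
M1_PRO_14 = {"Q8_0": (235.16, 21.95), "Q4_0": (232.55, 35.52)}
M2_MAX_30 = {"Q8_0": (540.15, 39.97), "Q4_0": (537.6, 60.99), "F16": (600.46, 24.16)}


def speedups(baseline, candidate):
    """Return {quant: (processing ratio, generation ratio)} for shared quant levels."""
    result = {}
    for quant, (base_pp, base_tg) in baseline.items():
        if quant in candidate:
            cand_pp, cand_tg = candidate[quant]
            result[quant] = (cand_pp / base_pp, cand_tg / base_tg)
    return result


if __name__ == "__main__":
    for quant, (pp, tg) in speedups(M1_PRO_14, M2_MAX_30).items():
        print(f"{quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

F16 is skipped automatically because no F16 data is available for the 14-core M1 Pro.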

Processing Speed: M2 Max Takes the Lead

The M2 Max consistently outperforms the M1 Pro in processing speed, at more than twice the tokens per second for every quantization level. This is due to the combination of its higher memory bandwidth and greater number of GPU cores. Within each chip family, processing speed also rises almost in proportion to the number of GPU cores.

Analogy: Imagine comparing a two-lane road to a wide multi-lane highway with a higher speed limit. The two-lane road represents the M1 Pro, while the highway represents the M2 Max. The extra lanes (GPU cores) let more cars (data) travel side by side, and the higher speed limit (memory bandwidth) gets each car to its destination sooner, resulting in faster overall traffic flow (processing speed).
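To check how closely speed follows core count, the two configurations of each chip can be compared directly. This is a small sketch using the Q8_0 rows from the tables; the `core_scaling` helper is an illustrative name, not part of any benchmark tool.

```python
# Q8_0 rows from the tables above: (GPU cores, processing tok/s, generation tok/s).
M1_PRO = [(14, 235.16, 21.95), (16, 270.37, 22.34)]
M2_MAX = [(30, 540.15, 39.97), (38, 677.91, 41.83)]


def core_scaling(small, large):
    """Compare the core-count ratio with the observed speed ratios."""
    cores_s, pp_s, tg_s = small
    cores_l, pp_l, tg_l = large
    return {
        "cores": cores_l / cores_s,
        "processing": pp_l / pp_s,
        "generation": tg_l / tg_s,
    }


if __name__ == "__main__":
    for name, (small, large) in {"M1 Pro": M1_PRO, "M2 Max": M2_MAX}.items():
        ratios = core_scaling(small, large)
        print(name, {key: round(value, 2) for key, value in ratios.items()})
```

On both chips the processing ratio lands within about 1% of the core ratio, while the generation ratio stays close to 1, which suggests that generation is limited by something other than GPU core count.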

Generation Speed: M2 Max Demonstrates Superior Performance

The M2 Max also outperforms the M1 Pro in generation speed, producing roughly 1.7 to 1.9 times as many tokens per second at each quantization level. Generation appears to be limited mainly by memory bandwidth rather than GPU core count: going from 200 GB/s to 400 GB/s comes close to doubling generation speed, whereas going from 30 to 38 GPU cores on the M2 Max raises Q8_0 generation only from 39.97 to 41.83 tokens per second.

Analogy: Imagine a chef preparing a gourmet meal. The M1 Pro is like a novice chef with a smaller kitchen, whereas the M2 Max is like an experienced chef with a larger kitchen equipped with state-of-the-art appliances. Both chefs can prepare a meal, but the experienced chef in the larger kitchen can cook more dishes and serve them faster, just like the M2 Max can generate more tokens at a quicker pace.
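One rough way to reason about generation speed is a bandwidth bound: if every generated token requires reading all model weights from memory once, then tokens per second cannot exceed memory bandwidth divided by model size. The sketch below applies that simplification. The model file sizes are approximate values assumed for Llama 2 7B and do not come from this article, and the bound ignores caches, the KV cache, and compute time.

```python
GIB = 1024 ** 3

# Assumed approximate file sizes for Llama 2 7B in GiB (not from this article).
MODEL_SIZE_GIB = {"Q4_0": 3.56, "Q8_0": 6.67, "F16": 12.55}


def generation_upper_bound(bandwidth_gb_s, model_size_gib):
    """Tokens/second if each token required reading all weights exactly once."""
    model_bytes = model_size_gib * GIB
    return bandwidth_gb_s * 1e9 / model_bytes


# Measured generation speeds from the tables above (tokens/second).
MEASURED = {
    ("M1 Pro 16-core", 200): {"Q4_0": 36.41, "Q8_0": 22.34, "F16": 12.75},
    ("M2 Max 30-core", 400): {"Q4_0": 60.99, "Q8_0": 39.97, "F16": 24.16},
}

if __name__ == "__main__":
    for (chip, bandwidth), speeds in MEASURED.items():
        for quant, measured in speeds.items():
            bound = generation_upper_bound(bandwidth, MODEL_SIZE_GIB[quant])
            print(f"{chip} {quant}: measured {measured:.2f}, bound {bound:.1f} "
                  f"({measured / bound:.0%} of bound)")
```

Under these assumptions every measured figure sits below its bound, which is consistent with generation being bandwidth-limited.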

Quantization Levels: A Trade-Off Between Speed and Accuracy

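The different quantization levels trade memory footprint and generation speed against accuracy.

- Q8_0 and Q4_0: These levels store weights in roughly 8 and 4 bits respectively, which shrinks the model and speeds up generation but sacrifices some accuracy compared to F16. Think of it like compressing a high-resolution image. You get a smaller file (less data to read for each token), but you lose some detail (accuracy).

- F16: This 16-bit floating-point format is not quantized. It preserves the most accuracy but needs the most memory and generates tokens the slowest in these benchmarks, even though its processing speed is the highest.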
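To make the trade-off concrete, here is a simplified sketch of block-wise 8-bit quantization in the spirit of Q8_0: a block of weights is stored as one scale plus small integers. It is an illustration only, not the llama.cpp implementation; the function names and the block size of 32 are assumptions made for this example.

```python
def quantize_block(weights):
    """Symmetric 8-bit quantization of one block: one float scale plus integers."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 127; an all-zero block gets a dummy scale.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the stored integers."""
    return [scale * q for q in quants]


if __name__ == "__main__":
    # Hypothetical block of 32 weights, chosen only for illustration.
    block = [((i * 37) % 64 - 32) / 100 for i in range(32)]
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"scale={scale:.6f}, worst-case error={worst:.6f}")
```

Each restored weight differs from the original by at most half a quantization step (`scale / 2`), which is the accuracy given up in exchange for the smaller representation.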

Which Chip is Right for You: Making the Decision

The choice between the M1 Pro and M2 Max depends on your specific needs and priorities:

- For budget-conscious developers: The M1 Pro is a solid choice for those on a limited budget. It provides relatively good performance for LLM token generation, especially with Q4_0 quantization, where it generates about 35 tokens per second.

- For power users and high-performance LLM workloads: The M2 Max is an excellent choice if you prioritize maximum token generation speed or want to run higher-precision formats such as F16 at a usable pace. Its superior processing and generation speeds make it a better platform for demanding AI applications.

Conclusion

Choosing the right hardware is crucial for getting the most out of LLMs. In this head-to-head comparison of the Apple M1 Pro and M2 Max, the M2 Max emerges as the clear winner. It processes tokens more than twice as fast and generates them roughly 1.7 to 1.9 times as fast, with the highest generation speeds at Q4_0 quantization. While the M1 Pro is still a capable chip, the M2 Max provides the extra headroom needed for demanding LLM tasks.

FAQ

Common Questions and Answers about LLM Models and Devices

What are LLMs? LLMs, or large language models, are a type of artificial intelligence model that has been trained on a massive dataset of text and code. They can generate human-quality text, translate languages, and even write code.

What is quantization? Quantization is a technique used to reduce the size of LLMs. It stores the model's weights at lower numerical precision (for example, 8 or 4 bits instead of 16), which makes the model smaller and faster to run, with a slight trade-off in accuracy.
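As a back-of-the-envelope illustration, model size can be estimated as parameter count times bits per weight. The sketch below assumes a nominal 7 billion parameters and effective bit widths of 4.5 and 8.5 bits for Q4_0 and Q8_0 (the extra half bit accounts for per-block scale factors); these values are assumptions for the example, and real files differ somewhat.

```python
# Assumed effective bits per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "Q8_0": 8.5, "F16": 16.0}


def model_size_gb(n_params, bits_per_weight):
    """Estimated size in gigabytes (10^9 bytes) for the weights alone."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n_params = 7e9  # nominal parameter count for a "7B" model (assumption)
    for quant, bits in BITS_PER_WEIGHT.items():
        print(f"{quant}: about {model_size_gb(n_params, bits):.1f} GB")
```

Under these assumptions, Q4_0 needs a bit more than a quarter of the memory that F16 does, which is why it fits comfortably on machines with less unified memory.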

What is token generation speed? Token generation speed is the rate at which an LLM produces tokens, usually measured in tokens per second. Tokens are the basic units of text that the model reads and writes.
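Measuring this yourself comes down to counting tokens and dividing by elapsed time. The following is a minimal sketch: `fake_generate` is a hypothetical stand-in for a real model's streaming output, and `tokens_per_second` is an illustrative helper, not part of any library.

```python
import time


def tokens_per_second(generate, n_tokens, clock=time.perf_counter):
    """Time a token generator and return tokens produced per second."""
    start = clock()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = clock() - start
    return produced / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens, delay=0.001):
    """Hypothetical stand-in for a model: yields one token per `delay` seconds."""
    for i in range(n_tokens):
        time.sleep(delay)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_generate, 50)
    print(f"{rate:.1f} tokens/second")
```

With a real model, you would replace `fake_generate` with a function that yields tokens as they are produced. The `clock` parameter exists so the timing source can be swapped out, for example in tests.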

How do I choose the right LLM for my needs? The choice depends on the specific task you want to perform and the hardware you have. Smaller models like Llama 2 7B run faster and need less memory, which makes them a practical fit for everyday text generation, while larger models like Llama 2 70B generally produce better results on more complex tasks such as code generation but need far more memory and run more slowly.

Is it better to run LLMs locally or in the cloud? Running LLMs locally provides you with more control and privacy, while running them in the cloud offers more scalability and resources.

What are the best hardware options for running LLMs? Apple M1 Pro and M2 Max chips are good choices for running LLMs locally. Other powerful options include GPUs from NVIDIA and AMD.

Keywords

Apple M1 Pro, Apple M2 Max, LLM, large language model, token speed generation, quantization, inference, AI, machine learning, deep learning, GPU, CPU, memory bandwidth, Llama 2, 7B, 70B, tokenization, NLP, natural language processing, AI development, AI hardware, benchmark, performance, comparison.