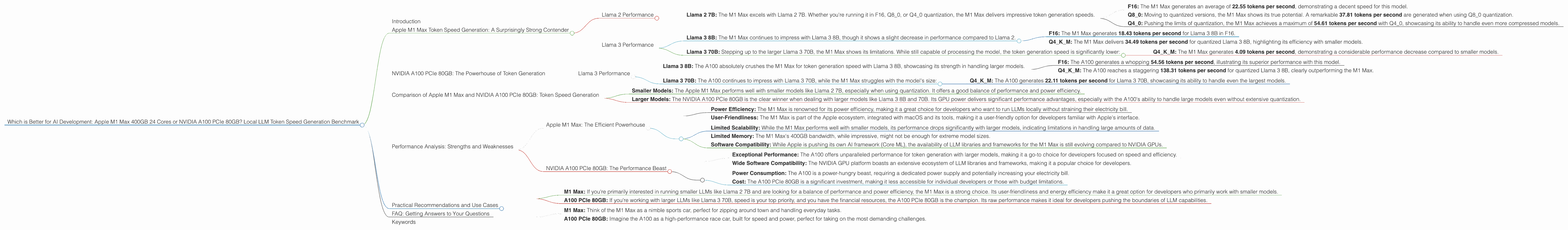

Which Is Better for AI Development: Apple M1 Max (24-Core GPU, 400 GB/s) or NVIDIA A100 PCIe 80GB? Local LLM Token Generation Speed Benchmark

Introduction

Large Language Models (LLMs) are advancing quickly, and so is the demand for hardware that can run them locally. Two options developers consider for local LLM work are the Apple M1 Max (the 24-core GPU variant, with 400 GB/s of memory bandwidth) and the NVIDIA A100 PCIe 80GB GPU.

This article compares the two devices on several Llama models and quantization formats. It focuses on token generation speed, measured in tokens per second, because that figure determines how responsive a model feels in interactive use. The goal is to help developers judge which device fits their use case.

Apple M1 Max Token Generation Speed: A Surprisingly Strong Contender

The Apple M1 Max tested here has 24 GPU cores and 400 GB/s of memory bandwidth. Here is how it performs across different models and quantization formats:

Llama 2 Performance

Llama 2 7B: The M1 Max handles Llama 2 7B well in all three formats tested (F16, Q8_0, and Q4_0), and throughput rises as precision drops.

- F16: 22.55 tokens per second on average.

- Q8_0: 37.81 tokens per second, about 1.7 times the F16 rate.

- Q4_0: 54.61 tokens per second, the fastest result for this model and about 2.4 times the F16 rate.
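The effect of quantization on throughput can be expressed as a ratio against the F16 result. The snippet below is a minimal sketch that uses only the three Llama 2 7B figures quoted above; the function and variable names are illustrative.

```python
# Tokens/second for Llama 2 7B on the M1 Max, copied from the figures above.
m1_max_llama2_7b = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}


def speedup_vs_baseline(results, baseline="F16"):
    """Return each format's throughput as a multiple of the baseline format."""
    base = results[baseline]
    return {fmt: round(tps / base, 2) for fmt, tps in results.items()}


speedups = speedup_vs_baseline(m1_max_llama2_7b)
for fmt, ratio in speedups.items():
    print(f"{fmt}: {ratio:.2f}x the F16 rate")
```

On these numbers, Q8_0 comes out at about 1.68x and Q4_0 at about 2.42x the F16 rate.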

Llama 3 Performance

Llama 3 8B: Throughput on Llama 3 8B is somewhat lower than on Llama 2 7B at the same precision.

- F16: 18.43 tokens per second, compared with 22.55 for Llama 2 7B.

- Q4_K_M: 34.49 tokens per second, nearly double the F16 rate.

Llama 3 70B: The larger Llama 3 70B exposes the M1 Max's limits. The model still runs, but token generation is far slower:

- Q4_K_M: 4.09 tokens per second, roughly one-eighth of the 34.49 tokens per second measured for Llama 3 8B in the same format.

NVIDIA A100 PCIe 80GB: The Powerhouse of Token Generation

The NVIDIA A100 PCIe 80GB is a data-center GPU, and it generates tokens considerably faster than the M1 Max on every Llama 3 configuration tested. No Llama 2 results are available for it in this benchmark.

Llama 3 Performance

Llama 3 8B: The A100 is about three to four times faster than the M1 Max on Llama 3 8B, depending on the format.

- F16: 54.56 tokens per second, versus 18.43 on the M1 Max.

- Q4_K_M: 138.31 tokens per second, versus 34.49 on the M1 Max.

Llama 3 70B: The gap widens on Llama 3 70B, where the M1 Max struggles with the model's size:

- Q4_K_M: 22.11 tokens per second, roughly 5.4 times the M1 Max's 4.09.

Comparison of Apple M1 Max and NVIDIA A100 PCIe 80GB: Token Generation Speed

| Model | Quantization | Apple M1 Max (tokens/second) | NVIDIA A100 PCIe 80GB (tokens/second) |
|---|---|---|---|
| Llama 2 7B | F16 | 22.55 | Not available |
| Llama 2 7B | Q8_0 | 37.81 | Not available |
| Llama 2 7B | Q4_0 | 54.61 | Not available |
| Llama 3 8B | F16 | 18.43 | 54.56 |
| Llama 3 8B | Q4_K_M | 34.49 | 138.31 |
| Llama 3 70B | Q4_K_M | 4.09 | 22.11 |
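To make the gap easier to read, the rows where both devices have results can be reduced to a single speedup factor (A100 throughput divided by M1 Max throughput). This is a small sketch over the table's own numbers; the dictionary layout is just one convenient way to hold them.

```python
# (model, quantization) -> (M1 Max tok/s, A100 tok/s), copied from the table above.
# Llama 2 7B rows are omitted because no A100 results are available for them.
benchmarks = {
    ("Llama 3 8B", "F16"): (18.43, 54.56),
    ("Llama 3 8B", "Q4_K_M"): (34.49, 138.31),
    ("Llama 3 70B", "Q4_K_M"): (4.09, 22.11),
}

# Speedup factor of the A100 over the M1 Max for each configuration.
ratios = {key: round(a100 / m1, 2) for key, (m1, a100) in benchmarks.items()}

for (model, quant), ratio in ratios.items():
    print(f"{model} {quant}: A100 is {ratio:.2f}x faster")
```

The factors work out to roughly 2.96x, 4.01x, and 5.41x, so the A100's lead grows with model size in this data set.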

Key Takeaways:

- Smaller Models: The Apple M1 Max performs well with smaller models like Llama 2 7B, especially when they are quantized: Q4_0 reaches 54.61 tokens per second, about 2.4 times the F16 rate. Its low power draw adds to its appeal for this class of model.

- Larger Models: The NVIDIA A100 PCIe 80GB is faster on every Llama 3 configuration tested, and its lead grows with model size: about 3 times the M1 Max on 8B F16, 4 times on 8B Q4_K_M, and 5.4 times on 70B Q4_K_M.

Performance Analysis: Strengths and Weaknesses

Apple M1 Max: The Efficient Powerhouse

Strengths:

- Power Efficiency: The M1 Max is known for its power efficiency, making it a good choice for developers who want to run LLMs locally without a large increase in their electricity bill.

- User-Friendliness: The M1 Max is part of the Apple ecosystem and is integrated with macOS and its tools, which makes it a convenient option for developers already familiar with Apple's platform.

Weaknesses:

- Limited Scalability: The M1 Max performs well with smaller models, but throughput drops sharply as models grow, from 34.49 tokens per second on Llama 3 8B Q4_K_M to 4.09 on Llama 3 70B Q4_K_M.

- Limited Memory Bandwidth: The M1 Max's 400 GB/s of memory bandwidth is high for a laptop-class chip, but it is likely to become the bottleneck for very large models.

- Software Compatibility: While Apple is pushing its own AI framework (Core ML), the availability of LLM libraries and frameworks for the M1 Max is still evolving compared to NVIDIA GPUs.
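A rough way to see why bandwidth matters: when generating one token at a time, the hardware generally has to read every model weight once per token, so memory bandwidth divided by model size gives an approximate ceiling on tokens per second. The sketch below applies that rule of thumb to Llama 2 7B in F16. It assumes a nominal 7 billion parameters, 16 bits per weight, and the 400 GB/s peak figure; real models carry extra overhead, so treat the result as an estimate rather than a measurement.

```python
def bandwidth_bound_tps(bandwidth_gb_s, n_params_billion, bits_per_weight):
    """Approximate ceiling on tokens/s if every weight is read once per token."""
    # Billions of parameters times bytes per parameter gives the model size in GB.
    model_gb = n_params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


# Assumptions: 400 GB/s peak bandwidth, nominal 7B parameters, 16-bit weights.
bound = bandwidth_bound_tps(400, 7, 16)
measured = 22.55  # M1 Max, Llama 2 7B F16, from the benchmark above

print(f"estimated ceiling: {bound:.1f} tok/s, measured: {measured} tok/s")
```

Under these assumptions the ceiling is about 28.6 tokens per second, which is in the same range as the measured 22.55. The same formula shows that the ceiling falls in proportion to model size, which is consistent with the drop seen on the 70B model.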

NVIDIA A100 PCIe 80GB: The Performance Beast

Strengths:

- Exceptional Performance: The A100 delivered the highest token generation speed in every configuration where both devices were tested, and its advantage is largest on the 70B model. That makes it a natural choice for developers who prioritize speed.

- Wide Software Compatibility: The NVIDIA GPU platform has an extensive ecosystem of LLM libraries and frameworks, which is a large part of its popularity with developers.

Weaknesses:

- Power Consumption: The A100 is power-hungry. It requires a dedicated power supply and can noticeably increase your electricity bill.

- Cost: The A100 PCIe 80GB is a significant investment, making it less accessible for individual developers or those with budget limitations.

Practical Recommendations and Use Cases

Here are some practical recommendations based on the analysis:

- M1 Max: If you mainly run smaller LLMs like Llama 2 7B or Llama 3 8B and want a balance of performance and power efficiency, the M1 Max is a strong choice. It can also run Llama 3 70B in Q4_K_M, but at about 4 tokens per second.

- A100 PCIe 80GB: If you work with larger LLMs like Llama 3 70B, speed is your top priority, and you have the budget, the A100 PCIe 80GB is the better fit. At 22.11 tokens per second on Llama 3 70B Q4_K_M, it keeps large models usable for interactive work.

To put these recommendations in context, consider these analogies:

- M1 Max: Think of the M1 Max as a nimble sports car, perfect for zipping around town and handling everyday tasks.

- A100 PCIe 80GB: Imagine the A100 as a high-performance race car, built for speed and power, perfect for taking on the most demanding challenges.

FAQ: Getting Answers to Your Questions

Q: What are LLMs and why are they important?

A: LLMs are AI systems trained on massive datasets of text and code. They can understand and generate human-like text, perform tasks like translation and summarization, and create new content. They are changing industries from customer service to content creation.

Q: What is quantization and how does it affect performance?

A: Quantization is a technique used to reduce the size of an LLM by representing its numbers with fewer bits. This allows for faster processing and less memory usage. However, it can sometimes lead to a slight decrease in accuracy.
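As an illustration of the idea, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It uses a single scale for the whole list of weights, which is a simplification: the formats named in this article (such as Q8_0 and Q4_K_M) use more elaborate block-wise schemes, and the example weights are made up.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


weights = [0.12, -0.5, 0.33, 0.9, -0.75]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding step is where the small accuracy loss comes from.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now fits in one byte instead of two (F16), and the rounding error per weight is at most half the scale factor.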

Q: Can I run an LLM on my laptop or PC?

A: You can run smaller LLMs on your laptop or PC, especially if you have a powerful CPU and a decent amount of RAM. For larger models, a GPU or a unified-memory system with enough memory and memory bandwidth is highly recommended.
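Whether a model fits on your machine depends largely on how much memory its weights need. A back-of-the-envelope estimate is parameter count times bits per weight. The sketch below assumes nominal parameter counts and whole-number bit widths, and it ignores the extra memory needed for the context (KV cache) and runtime overhead, so real requirements will be somewhat higher.

```python
def weight_memory_gb(n_params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB."""
    return n_params_billion * bits_per_weight / 8


# (label, parameters in billions, bits per weight); nominal, illustrative values.
configs = [
    ("8B at 16-bit", 8, 16),
    ("8B at 4-bit", 8, 4),
    ("70B at 4-bit", 70, 4),
]

estimates = {label: weight_memory_gb(p, b) for label, p, b in configs}
for label, gb in estimates.items():
    print(f"{label}: about {gb:.0f} GB for weights")
```

By this estimate, an 8B model needs about 16 GB at 16-bit and about 4 GB at 4-bit, while a 70B model at 4-bit needs about 35 GB before any overhead.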

Q: What other popular LLMs are there besides Llama 2 and Llama 3?

A: There are many other popular LLMs, including OpenAI's GPT models (which power ChatGPT), Mistral, and Google's Gemma. Each has its own strengths and weaknesses.

Q: Where can I learn more about LLMs and AI development?

A: There are many excellent resources online and in libraries for learning about LLMs and AI development. Start with online courses and tutorials from platforms like Coursera, edX, or Google AI.

Keywords

LLM, Large Language Models, Apple M1 Max, NVIDIA A100 PCIe 80GB, Token Speed Generation, Llama 2, Llama 3, Quantization, Performance Benchmark, AI Development, GPU, CPU, Inference, Local LLM, AI, Deep Learning, Machine Learning, NLP, Natural Language Processing, Model Size, Power Consumption, Efficiency, Cost, Practical Recommendations, Use Cases, Tokenization, Computing Power, Software Compatibility, Hardware Comparison