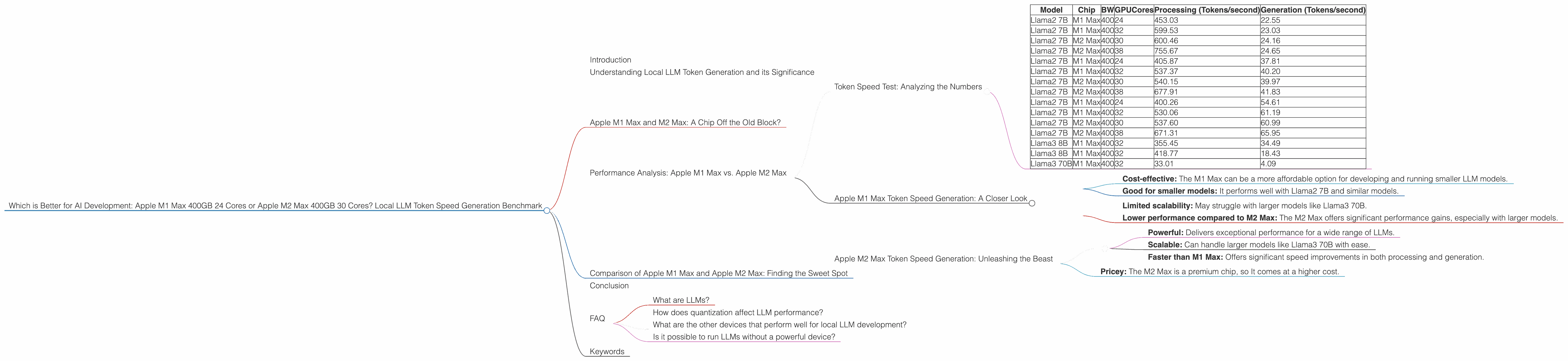

Which is Better for AI Development: Apple M1 Max (400 GB/s, 24 GPU Cores) or Apple M2 Max (400 GB/s, 30 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is heating up, and with it, the need for powerful hardware to run these models locally is surging. Whether you're a developer building innovative applications or a data scientist exploring new frontiers in AI, having a powerful machine is essential.

This article dives deep into the performance of two popular Apple Silicon chips, the Apple M1 Max and Apple M2 Max, when used for local LLM token generation. We'll benchmark the speed of token generation for various LLM models, explore the benefits of each chip, and help you decide which one is right for your specific needs.

Understanding Local LLM Token Generation and its Significance

Imagine LLMs as super-intelligent language wizards. They understand and generate text, translate languages, and even write code. To do their magic, they work with "tokens," which are small pieces of text: a whole word, part of a word, or a punctuation mark, depending on the model's tokenizer.

Generating tokens is essentially how LLMs "think" and "speak." The faster they can generate tokens, the faster they can process information, respond to queries, and perform various tasks.

Apple M1 Max and M2 Max: A Chip Off the Old Block?

Both Apple M1 Max and M2 Max are powerful chips designed for high-performance computing. They boast multiple cores, high memory bandwidth, and specialized neural engines optimized for AI tasks. But how do they stack up against each other?

Performance Analysis: Apple M1 Max vs. Apple M2 Max

Token Speed Test: Analyzing the Numbers

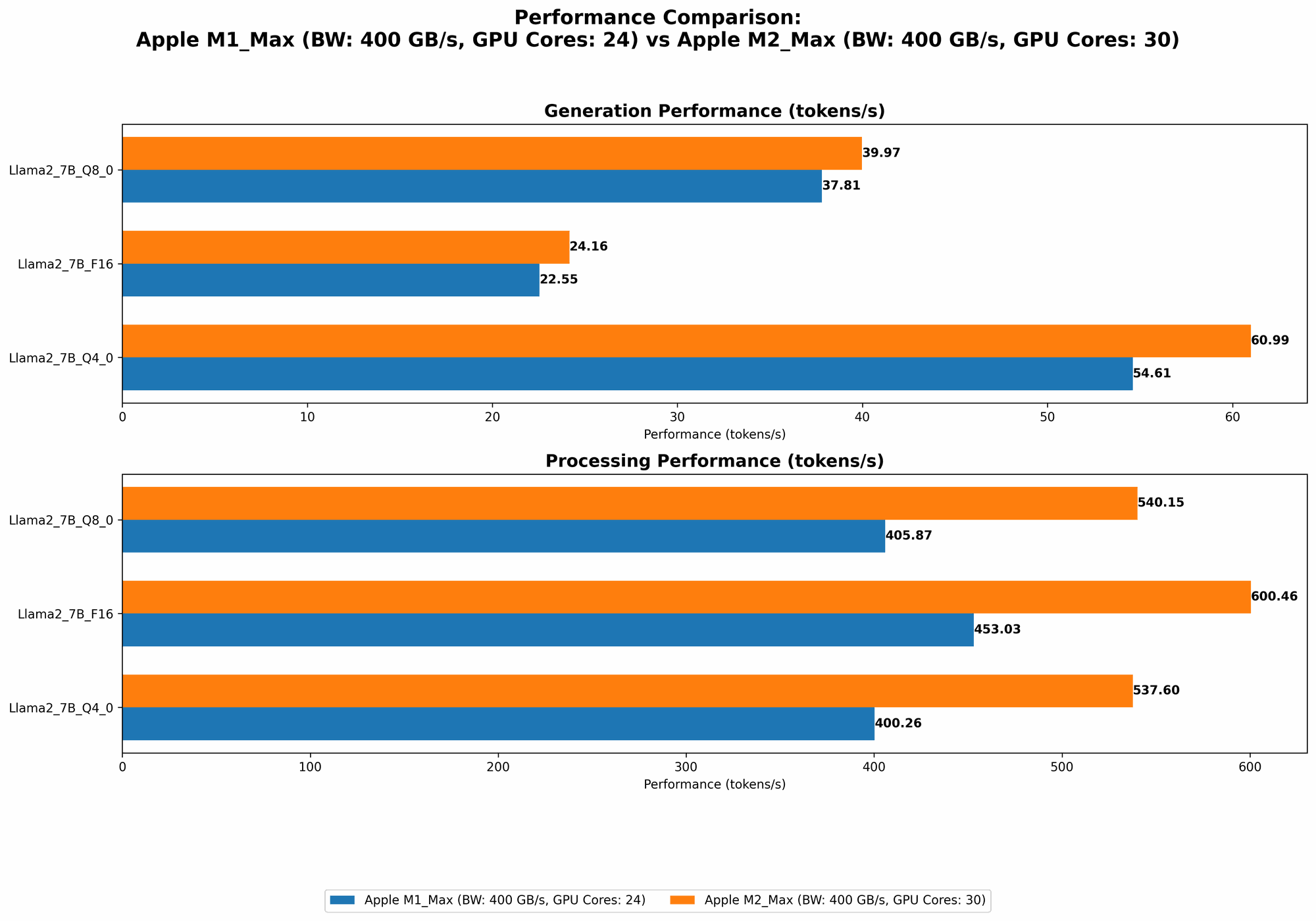

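Let's cut to the chase and see how these chips perform in the real world. The table below lists the measured token speeds for each chip configuration. BW is the memory bandwidth in GB/s, "Processing" is the speed at which the model reads the prompt, and "Generation" is the speed at which it produces new tokens.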

| Model | Quantization | Chip | BW (GB/s) | GPU Cores | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|---|---|---|
| Llama2 7B | F16 | M1 Max | 400 | 24 | 453.03 | 22.55 |
| Llama2 7B | F16 | M1 Max | 400 | 32 | 599.53 | 23.03 |
| Llama2 7B | F16 | M2 Max | 400 | 30 | 600.46 | 24.16 |
| Llama2 7B | F16 | M2 Max | 400 | 38 | 755.67 | 24.65 |
| Llama2 7B | Q8 | M1 Max | 400 | 24 | 405.87 | 37.81 |
| Llama2 7B | Q8 | M1 Max | 400 | 32 | 537.37 | 40.20 |
| Llama2 7B | Q8 | M2 Max | 400 | 30 | 540.15 | 39.97 |
| Llama2 7B | Q8 | M2 Max | 400 | 38 | 677.91 | 41.83 |
| Llama2 7B | Q4 | M1 Max | 400 | 24 | 400.26 | 54.61 |
| Llama2 7B | Q4 | M1 Max | 400 | 32 | 530.06 | 61.19 |
| Llama2 7B | Q4 | M2 Max | 400 | 30 | 537.60 | 60.99 |
| Llama2 7B | Q4 | M2 Max | 400 | 38 | 671.31 | 65.95 |
| Llama3 8B | Q4 | M1 Max | 400 | 32 | 355.45 | 34.49 |
| Llama3 8B | F16 | M1 Max | 400 | 32 | 418.77 | 18.43 |
| Llama3 70B | Q4 | M1 Max | 400 | 32 | 33.01 | 4.09 |

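Note that no Llama3 70B F16 result is available for the M1 Max, so that configuration is left out of the comparison. The table also contains no Llama3 results for the M2 Max, so the chip-to-chip comparison rests on Llama2 7B.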
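To put numbers on the comparison in the title (24-core M1 Max vs. 30-core M2 Max), the sketch below computes the relative gain for each pair of Llama2 7B rows. The figures are copied from the table; the quantization labels assume the three Llama2 7B groups appear in the order F16, Q8, Q4, and the helper names `percent_gain` and `compare` are illustrative.

```python
# (processing tok/s, generation tok/s) for Llama2 7B, copied from the table.
# Assumption: the three Llama2 7B row groups are F16, Q8 and Q4, in that order.
M1_MAX_24 = {"F16": (453.03, 22.55), "Q8": (405.87, 37.81), "Q4": (400.26, 54.61)}
M2_MAX_30 = {"F16": (600.46, 24.16), "Q8": (540.15, 39.97), "Q4": (537.60, 60.99)}


def percent_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


def compare(base: dict, other: dict) -> dict:
    """Map each quantization to (processing gain %, generation gain %)."""
    return {
        quant: (
            round(percent_gain(other[quant][0], base[quant][0]), 1),
            round(percent_gain(other[quant][1], base[quant][1]), 1),
        )
        for quant in base
    }


if __name__ == "__main__":
    for quant, (pp_gain, tg_gain) in compare(M1_MAX_24, M2_MAX_30).items():
        print(f"{quant}: processing +{pp_gain}%, generation +{tg_gain}%")
```

On these figures the 30-core M2 Max comes out roughly 33% ahead in processing and between about 6% and 12% ahead in generation.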

Key Observations:

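- M2 Max Outperforms M1 Max at the Compared Configurations: The 30-core M2 Max beats the 24-core M1 Max in every Llama2 7B row, by roughly a third in processing and by about 6 to 12% in generation. The 32-core M1 Max is a closer match: it roughly ties the 30-core M2 Max in processing and is marginally faster in Q8 and Q4 generation (40.20 vs. 39.97 and 61.19 vs. 60.99 tokens/second).

- Higher GPU Cores, Higher Speed: Within each chip, more GPU cores mean faster results. The effect is large for processing (about 25 to 32% more tokens/second) and much smaller for generation (about 2 to 12%), which suggests that generation depends more on memory bandwidth, identical at 400 GB/s on all four configurations, than on GPU compute.

- Quantization Matters: Token speeds vary across the quantization levels (F16, Q8, Q4).

  - F16: Offers the fastest processing speeds in the table (for example 453.03 tokens/second on the 24-core M1 Max, against 405.87 for Q8 and 400.26 for Q4), but the slowest generation.

  - Q8 and Q4: About 10 to 12% slower in processing, but much faster in generation; Q4 generates roughly 2.4 to 2.7 times as fast as F16. Quantization shrinks the model's weights, so fewer bytes have to be read from memory for each generated token.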

Example (for a better understanding): Think of a crew hauling bricks to a building site along a single road. F16 is like hauling full-size bricks: every trip moves a lot of weight, so the road limits how fast the wall goes up. Q8 and Q4 are like lighter, slightly rougher bricks: more of them arrive per trip, so the wall rises faster, at a small cost in precision.
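One way to see why smaller weights speed up generation is a back-of-the-envelope bandwidth bound: if every weight has to be read from memory once per generated token, tokens/second cannot exceed bandwidth divided by model size. The sketch below applies that idea to Llama2 7B at 400 GB/s. The parameter count (about 6.74 billion) and the bits-per-weight values (8.5 for Q8 and 4.5 for Q4, assuming llama.cpp-style block formats that store a scale factor alongside each block of weights) are assumptions, not figures from this benchmark.

```python
PARAMS_LLAMA2_7B = 6.74e9  # assumed approximate parameter count
BANDWIDTH_GB_S = 400.0     # memory bandwidth listed in the table, in GB/s

# Assumed storage cost per weight; the quantized values include per-block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8": 8.5, "Q4": 4.5}


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if every weight is read once per generated token."""
    return bandwidth_gb_s / model_size_gb(params, bits_per_weight)


if __name__ == "__main__":
    for quant, bits in BITS_PER_WEIGHT.items():
        size = model_size_gb(PARAMS_LLAMA2_7B, bits)
        ceiling = generation_ceiling(PARAMS_LLAMA2_7B, bits, BANDWIDTH_GB_S)
        print(f"{quant}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tokens/s")
```

Under these assumptions the ceilings are roughly 30, 56, and 106 tokens/second for F16, Q8, and Q4. The measured generation speeds in the table sit below each ceiling and rise in the same order.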

Apple M1 Max Token Speed Generation: A Closer Look

The M1 Max, despite being an older chip, still provides impressive performance. With its 24 or 32 GPU cores, it handles smaller models like Llama2 7B well: roughly 400 to 600 tokens/second in processing and 22 to 61 tokens/second in generation, depending on core count and quantization. For processing tasks, the F16 format delivers the fastest results, but for generation, Q4 provides the best performance.
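How much of the step from 24 to 32 GPU cores actually shows up as speed can be estimated with a simple scaling-efficiency ratio. The sketch below uses the M1 Max rows for Llama2 7B from the table; the quantization labels assume the row groups are F16, Q8, Q4 in that order, and `scaling_efficiency` is an illustrative helper, with 1.0 meaning the speedup matches the increase in cores.

```python
# ((cores, processing tok/s, generation tok/s), ...) for Llama2 7B on the M1 Max,
# copied from the table. Assumption: the row groups are F16, Q8 and Q4, in order.
M1_MAX = {
    "F16": ((24, 453.03, 22.55), (32, 599.53, 23.03)),
    "Q8": ((24, 405.87, 37.81), (32, 537.37, 40.20)),
    "Q4": ((24, 400.26, 54.61), (32, 530.06, 61.19)),
}


def scaling_efficiency(cores_a: int, speed_a: float, cores_b: int, speed_b: float) -> float:
    """Share of the relative core increase that appears as relative speed increase."""
    core_gain = cores_b / cores_a - 1.0
    speed_gain = speed_b / speed_a - 1.0
    return speed_gain / core_gain


def efficiencies(rows: dict) -> dict:
    """Return (processing efficiency, generation efficiency) per quantization."""
    result = {}
    for quant, ((c_a, pp_a, tg_a), (c_b, pp_b, tg_b)) in rows.items():
        result[quant] = (
            scaling_efficiency(c_a, pp_a, c_b, pp_b),
            scaling_efficiency(c_a, tg_a, c_b, tg_b),
        )
    return result


if __name__ == "__main__":
    for quant, (pp_eff, tg_eff) in efficiencies(M1_MAX).items():
        print(f"{quant}: processing {pp_eff:.2f}, generation {tg_eff:.2f}")
```

On these rows, processing scales almost linearly with core count (efficiency around 0.97), while generation picks up far less of the extra cores (roughly 0.06 to 0.36).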

Strengths:

- Cost-effective: The M1 Max can be a more affordable option for developing and running smaller LLM models.

- Good for smaller models: It performs well with Llama2 7B and similar models.

Weaknesses:

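- Limited scalability: Larger models slow it down sharply. Llama3 70B generates at only 4.09 tokens/second on the 32-core M1 Max, and no F16 result is available for that model.

- Lower performance compared to M2 Max: At the configurations compared here (24 vs. 30 GPU cores), the M2 Max is about a third faster in processing and about 6 to 12% faster in generation on Llama2 7B.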
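A rough memory estimate helps explain why larger models are a problem. The sketch below compares the approximate size of the weights with the memory of a 64 GB M1 Max, the largest configuration Apple offers for that chip. The 4.5 bits per weight for Q4 and the `usable_fraction` of 0.75 (the share of unified memory assumed to be available to the model) are illustrative assumptions, and the estimate ignores the KV cache and other runtime overhead.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the share of memory assumed to be usable."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb * usable_fraction


if __name__ == "__main__":
    MEMORY_GB = 64  # assumed machine: top M1 Max memory configuration
    candidates = [
        ("Llama3 8B F16", 8, 16.0),
        ("Llama3 70B Q4", 70, 4.5),   # 4.5 bits/weight is an assumption
        ("Llama3 70B F16", 70, 16.0),
    ]
    for name, params, bits in candidates:
        size = weights_gb(params, bits)
        verdict = "fits" if fits(params, bits, MEMORY_GB) else "does not fit"
        print(f"{name}: ~{size:.0f} GB, {verdict} in {MEMORY_GB} GB")
```

Under these assumptions, Llama3 70B needs about 140 GB at F16, far more than 64 GB, while a Q4 version (about 39 GB) fits. That would be consistent with the table having a 70B result on the M1 Max but no F16 one.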

Apple M2 Max Token Speed Generation: Unleashing the Beast

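The M2 Max raises performance further with its 30 or 38 GPU cores. On Llama2 7B, the 38-core version posts the highest numbers in the table: up to 755.67 tokens/second in processing and 65.95 tokens/second in generation. Its lead over the M1 Max is largest in processing; the generation gains are smaller, since both chips share the same 400 GB/s memory bandwidth. The table contains no M2 Max results for Llama3, so its behavior on larger models is not measured here.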

Strengths:

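- Powerful: Delivers the fastest Llama2 7B results in this benchmark at every quantization level.

- Scalable: Available with up to 38 GPU cores, which should help with larger models like Llama3 70B, although the table has no M2 Max data for them.

- Faster than M1 Max: About a third faster in processing and about 6 to 12% faster in generation when the 30-core M2 Max is compared with the 24-core M1 Max.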

Weaknesses:

- Pricey: The M2 Max is a premium chip, so it comes at a higher cost.

Comparison of Apple M1 Max and Apple M2 Max: Finding the Sweet Spot

So, which chip wins the battle? It depends on your specific needs. The M1 Max is a solid choice for developers who work with smaller LLMs and are on a budget. However, if you tackle complex AI projects or want the best possible performance, especially with long prompts where processing speed dominates, the M2 Max is the clear winner.
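To translate the table into something closer to day-to-day experience, the sketch below estimates the time for a single request as prompt tokens divided by processing speed plus output tokens divided by generation speed. The speeds are the Llama2 7B figures from the table for the two chips in the title (assumed to be the Q4 rows); the request size of 1,000 prompt tokens and 300 output tokens is a hypothetical workload, and the estimate ignores model load time and other overhead.

```python
# (processing tok/s, generation tok/s) for Llama2 7B, copied from the table.
# Assumption: these are the Q4 rows (the last Llama2 7B group in the table).
CHIPS = {
    "M1 Max (24 cores)": (400.26, 54.61),
    "M2 Max (30 cores)": (537.60, 60.99),
}


def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt and then generate the answer."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 300  # hypothetical request size
    for chip, (pp, tg) in CHIPS.items():
        seconds = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
        print(f"{chip}: ~{seconds:.1f} s")
```

For this hypothetical request, the estimate is about 8.0 seconds on the M1 Max and about 6.8 seconds on the M2 Max.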

Here’s an analogy: Imagine you are building a car. An M1 Max is like a reliable and efficient engine that can get you around town. An M2 Max is like a powerful V8 engine that can race around a track.

Conclusion

Both the M1 Max and M2 Max are impressive chips that offer excellent performance for local LLM development. The M2 Max emerges as the champion on raw speed, with its largest lead in prompt processing, while the M1 Max remains a cost-effective option for smaller projects. Ultimately, the best choice depends on your specific needs, budget, and the scale of your LLM projects.

FAQ

What are LLMs?

LLMs are large language models that are trained on massive datasets of text and code. They have the ability to understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

How does quantization affect LLM performance?

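Quantization is a technique that stores an LLM's weights at lower precision (for example 8 or 4 bits per weight instead of 16), which shrinks the model without sacrificing much accuracy. A smaller model needs less memory and less memory traffic per token, which significantly improves generation speed: in this benchmark, Q4 generates roughly 2.4 to 2.7 times as fast as F16 on Llama2 7B.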
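As a rough illustration of the idea, the sketch below quantizes one small block of weights to 8-bit integers that share a single scale factor, then restores them. This is a simplified scheme written for this article, not the exact format used by any particular runtime, and the sample weights are made up.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-127, 127] that share one scale factor."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    return scale, [round(w / scale) for w in weights]


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.91, 0.004, -0.27]  # made-up sample weights
    scale, quantized = quantize_block(block)
    restored = dequantize_block(scale, quantized)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(quantized)
    print(f"largest rounding error: {worst:.5f} (scale {scale:.5f})")
```

Each weight now takes one byte plus a share of the scale instead of two bytes, and the rounding error per weight is at most half the scale. Lower bit widths trade more of that precision for a smaller model.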

What are the other devices that perform well for local LLM development?

While this article focuses on Apple M1 Max and M2 Max, other devices like NVIDIA GPUs (e.g., RTX 4090, RTX 3090) and high-end CPUs (e.g., AMD Threadripper, Intel i9) are also popular choices for running LLMs locally.

Is it possible to run LLMs without a powerful device?

While having a powerful device is ideal, you can also run LLMs on cloud services like Google Colab or Amazon SageMaker instead of on your own machine. The model then no longer runs locally, and you may encounter limits on resources, session length, or cost.

Keywords

LLM, Apple M1 Max, Apple M2 Max, Token Speed, Token Generation, Local LLM, AI Development, Benchmark, Performance, GPU, Quantization, Llama2, Llama3, F16, Q8, Q4, Processing, Generation, Cost-Effective, Scalable, Powerful, Cloud Services, Google Colab, Amazon SageMaker