Which is Better for AI Development: Apple M1 Max (400 GB/s, 24 GPU Cores) or Apple M1 Ultra (800 GB/s, 48 GPU Cores)? Local LLM Token Generation Speed Benchmark

Introduction

The world of AI development is ablaze with excitement, and at the heart of this firestorm are Large Language Models (LLMs). These sophisticated algorithms are revolutionizing everything from text generation and translation to code writing and scientific research.

But for developers and researchers, the biggest question is: how do you run these LLMs efficiently?

This article dives deep into the performance of two powerful Apple silicon chips, the M1 Max and the M1 Ultra, measuring how fast each one processes prompts and generates tokens across several LLMs and quantization levels. We'll put these chips through their paces with Llama2 and Llama3 models (Llama3 results are available for the M1 Max only) and dissect the results to help you choose the best device for your AI development needs.

The Great Apple Showdown: M1 Max vs. M1 Ultra for LLM Token Generation

Imagine you're building a super-intelligent chatbot. You need a device that can churn out text predictions with the speed of a caffeinated hummingbird. That's where the Apple M1 Max and M1 Ultra come in.

Let's break down the numbers and see which of these chips is the reigning champion of local LLM token generation.

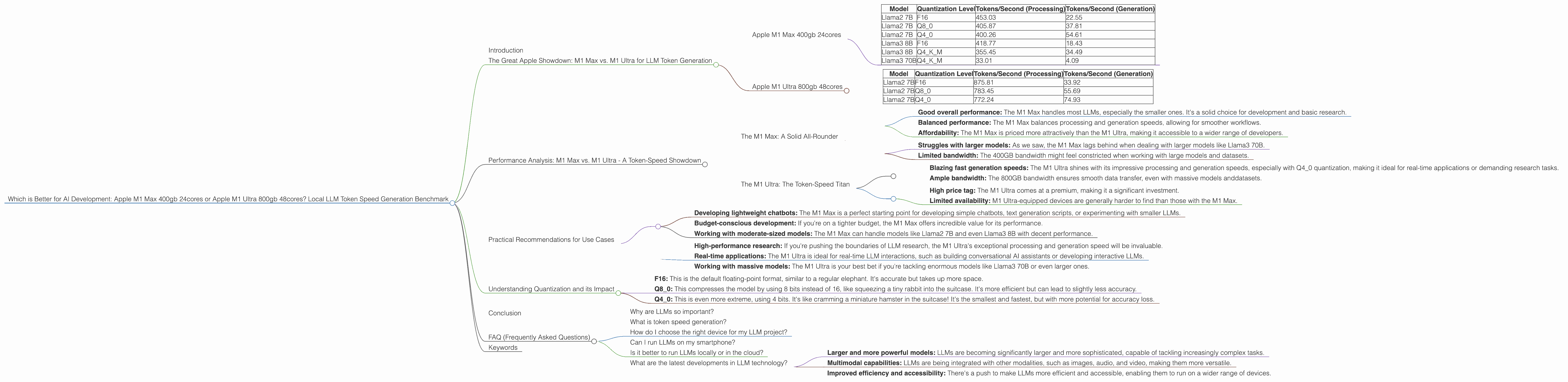

Apple M1 Max (400 GB/s, 24 GPU cores)

The M1 Max is a powerhouse, boasting a respectable 24 GPU cores and 400 GB/s of memory bandwidth. Let's see how it tackles different LLM models and quantization levels:

Table 1: Apple M1 Max (400 GB/s, 24 GPU Cores) Token Speed

| Model | Quantization | Prompt processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama2 7B | F16 | 453.03 | 22.55 |
| Llama2 7B | Q8_0 | 405.87 | 37.81 |
| Llama2 7B | Q4_0 | 400.26 | 54.61 |
| Llama3 8B | F16 | 418.77 | 18.43 |
| Llama3 8B | Q4_K_M | 355.45 | 34.49 |
| Llama3 70B | Q4_K_M | 33.01 | 4.09 |

Key Observations:

- Llama2 7B: Generation is fastest with Q4_0 quantization, at a respectable 54.61 tokens/second, about 2.4 times the F16 rate of 22.55. Prompt processing moves the other way: F16 is quickest at 453.03 tokens/second.

- Llama3 8B: While the prompt processing speed is commendable, generation lags behind at 18.43 tokens/second with F16. Q4_K_M quantization nearly doubles the generation speed to 34.49.

- Llama3 70B: This model is a beast, and even the powerful M1 Max struggles. Q4_K_M is the only configuration in the table: the F16 weights alone would need roughly 140 GB, far beyond the M1 Max's 64 GB maximum of unified memory. Generation crawls along at just 4.09 tokens/second.
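To make the trade-off in Table 1 explicit, the short Python sketch below divides each quantized result by the F16 result for the same model. The numbers are copied from Table 1; the dictionary layout and the `speedup_vs_f16` helper are illustrative names, not part of any benchmark tool.

```python
# Measured tokens/s on the M1 Max (24 GPU cores), copied from Table 1:
# each (model, quantization) maps to (prompt processing, generation).
M1_MAX = {
    ("Llama2 7B", "F16"): (453.03, 22.55),
    ("Llama2 7B", "Q8_0"): (405.87, 37.81),
    ("Llama2 7B", "Q4_0"): (400.26, 54.61),
    ("Llama3 8B", "F16"): (418.77, 18.43),
    ("Llama3 8B", "Q4_K_M"): (355.45, 34.49),
}


def speedup_vs_f16(results, model):
    """Return {quantization: (processing ratio, generation ratio)} relative to F16.

    A ratio above 1 means faster than F16; below 1 means slower.
    """
    base_pp, base_tg = results[(model, "F16")]
    return {
        quant: (pp / base_pp, tg / base_tg)
        for (name, quant), (pp, tg) in results.items()
        if name == model and quant != "F16"
    }


for model in ("Llama2 7B", "Llama3 8B"):
    for quant, (pp, tg) in speedup_vs_f16(M1_MAX, model).items():
        print(f"{model} {quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

Run as-is, it reports generation speedups of about 1.68 (Q8_0) and 2.42 (Q4_0) for Llama2 7B and 1.87 (Q4_K_M) for Llama3 8B, while every prompt processing ratio falls below 1 (0.85 to 0.90).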

Apple M1 Ultra (800 GB/s, 48 GPU cores)

The M1 Ultra is Apple's ultimate silicon behemoth, with 48 GPU cores and a whopping 800 GB/s of memory bandwidth. Let's see if this beast lives up to its name:

Table 2: Apple M1 Ultra 800gb 48cores Token Speed

| Model | Quantization Level | Tokens/Second (Processing) | Tokens/Second (Generation) |

|---|---|---|---|

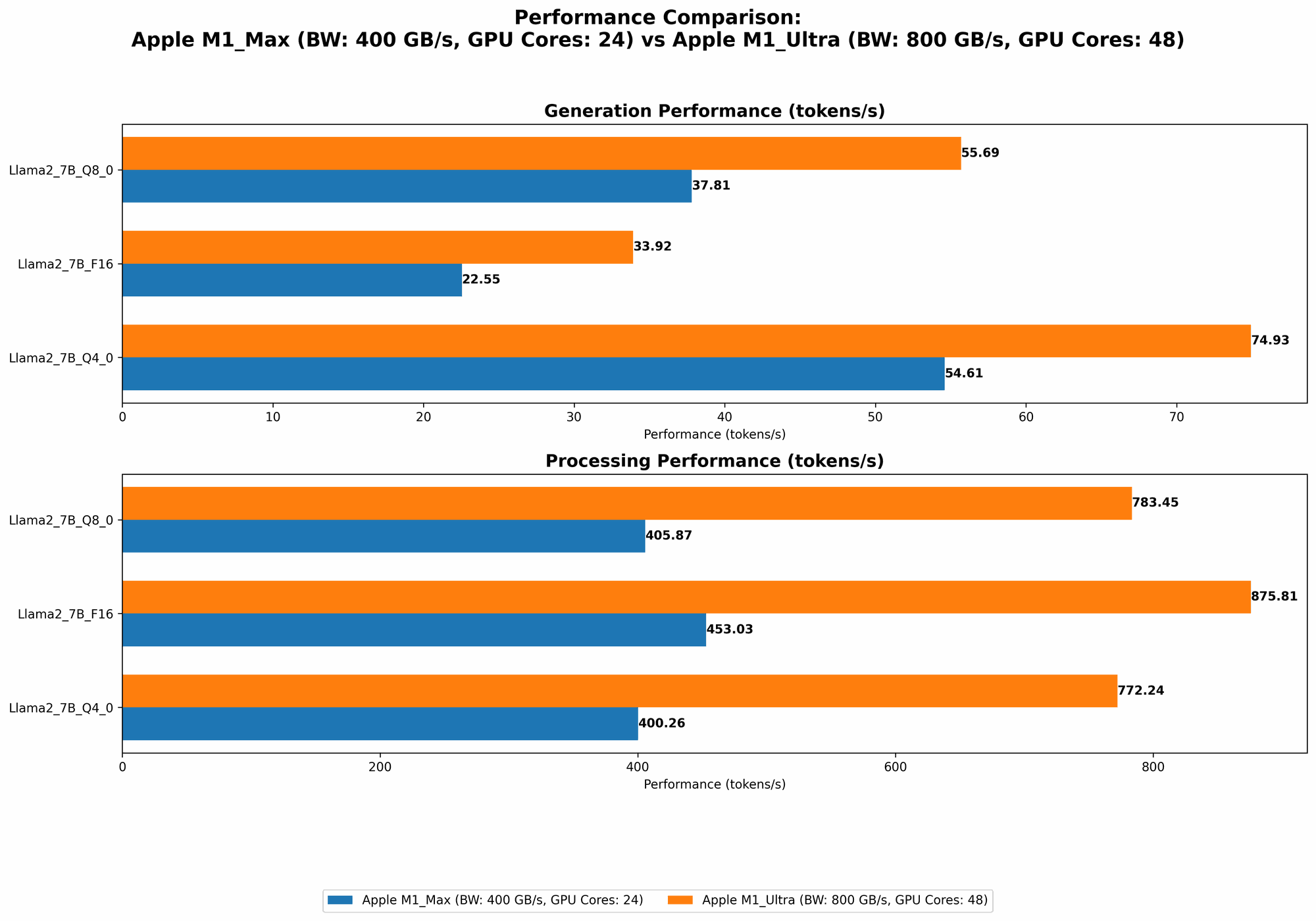

| Llama2 7B | F16 | 875.81 | 33.92 |

| Llama2 7B | Q8_0 | 783.45 | 55.69 |

| Llama2 7B | Q4_0 | 772.24 | 74.93 |

Key Observations:

- Llama2 7B: The M1 Ultra shines here. Q4_0 quantization delivers its fastest generation speed, 74.93 tokens/second, about 37% faster than the M1 Max's 54.61. Prompt processing nearly doubles at every quantization level.

- No Llama3 Data: Unfortunately, we don't have data for the M1 Ultra with Llama 3 at this time.

Performance Analysis: M1 Max vs. M1 Ultra - A Token-Speed Showdown

So, the M1 Ultra clearly outperforms the M1 Max in terms of token generation speed, at least for Llama2 7B. But let's take a deeper dive and analyze their strengths, weaknesses, and where they excel.
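Dividing the M1 Ultra's Llama2 7B numbers by the M1 Max's puts a figure on "clearly outperforms". A minimal Python sketch using only the values from Tables 1 and 2 (the variable and function names are illustrative):

```python
# Llama2 7B results from Tables 1 and 2: quantization -> (prompt processing, generation) tokens/s.
MAX_7B = {"F16": (453.03, 22.55), "Q8_0": (405.87, 37.81), "Q4_0": (400.26, 54.61)}
ULTRA_7B = {"F16": (875.81, 33.92), "Q8_0": (783.45, 55.69), "Q4_0": (772.24, 74.93)}


def ultra_over_max(quant):
    """Ratio of M1 Ultra to M1 Max throughput for one quantization level."""
    (max_pp, max_tg), (ultra_pp, ultra_tg) = MAX_7B[quant], ULTRA_7B[quant]
    return ultra_pp / max_pp, ultra_tg / max_tg


for quant in MAX_7B:
    pp, tg = ultra_over_max(quant)
    print(f"Llama2 7B {quant}: processing x{pp:.2f}, generation x{tg:.2f}")
```

Prompt processing comes out about 1.93 times faster for all three formats, in line with the doubled GPU core count, while generation gains only 1.37 to 1.50 times, less than the doubled bandwidth might suggest.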

The M1 Max: A Solid All-Rounder

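The M1 Max, despite its lower throughput (about half the M1 Ultra's prompt processing speed and two-thirds to three-quarters of its generation speed on Llama2 7B), is a great option if you prioritize versatility and affordability. It's a fantastic choice for working with smaller models or when budget is a concern.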

Strengths:

- Good overall performance: The M1 Max runs 7B and 8B models comfortably, generating 34 to 55 tokens/second with 4-bit quantization. It's a solid choice for development and basic research.

- Balanced performance: The M1 Max balances processing and generation speeds, allowing for smoother workflows.

- Affordability: The M1 Max is priced more attractively than the M1 Ultra, making it accessible to a wider range of developers.

Weaknesses:

- Struggles with larger models: With Llama3 70B, generation drops to 4.09 tokens/second even at Q4_K_M, and the 64 GB memory ceiling rules out higher-precision formats for a model that size.

- Limited bandwidth: 400 GB/s of memory bandwidth can feel constricting with large models, because generating each token means reading roughly all of the model's weights from memory.
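A common back-of-the-envelope model treats generation as memory-bound: producing one token streams (roughly) every weight from memory once, so tokens/s can be no higher than bandwidth divided by the size of the weights. The Python sketch below applies that idealized model to Llama2 7B. These are assumptions, not measurements: about 6.74 billion parameters; effective sizes of 16, 8.5 and 4.5 bits per weight for F16, Q8_0 and Q4_0 (the 8-bit and 4-bit formats store one 16-bit scale per block of 32 weights); and the advertised peak bandwidth, which real workloads do not fully reach.

```python
# Idealized, bandwidth-bound ceiling on generation speed (a model, not a benchmark).
# Assumption: each generated token streams every weight from memory once, so
#   tokens/s <= memory bandwidth / bytes of weights.
# KV-cache traffic, compute limits, and below-peak real bandwidth are ignored.

PARAMS_LLAMA2_7B = 6.74e9  # approximate parameter count (assumption)
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}  # includes per-block scales
PEAK_BANDWIDTH_GBPS = {"M1 Max": 400.0, "M1 Ultra": 800.0}  # advertised peak, GB/s

# Measured Llama2 7B generation speeds (tokens/s) from Tables 1 and 2.
MEASURED = {
    ("M1 Max", "F16"): 22.55, ("M1 Max", "Q8_0"): 37.81, ("M1 Max", "Q4_0"): 54.61,
    ("M1 Ultra", "F16"): 33.92, ("M1 Ultra", "Q8_0"): 55.69, ("M1 Ultra", "Q4_0"): 74.93,
}


def weight_gb(params, bits_per_weight):
    """Size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def ceiling_tokens_per_s(chip, quant):
    """Upper bound on generation speed if memory bandwidth were the only limit."""
    return PEAK_BANDWIDTH_GBPS[chip] / weight_gb(PARAMS_LLAMA2_7B, BITS_PER_WEIGHT[quant])


for (chip, quant), measured in MEASURED.items():
    ceiling = ceiling_tokens_per_s(chip, quant)
    print(f"{chip} {quant}: ceiling ~{ceiling:.0f} tok/s, measured {measured} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Every measured speed lands below its ceiling: about 52 to 76 percent of it on the M1 Max and about 36 to 57 percent on the M1 Ultra, which suggests the Ultra's extra bandwidth is not fully converted into generation speed in these runs.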

The M1 Ultra: The Token-Speed Titan

If you need the absolute fastest token generation speeds for your LLM projects, the M1 Ultra is the undisputed champion. It's a true powerhouse for researchers and developers who push the limits of AI.

Strengths:

- Blazing fast generation speeds: The M1 Ultra shines with its impressive processing and generation speeds, especially with Q4_0 quantization, making it ideal for real-time applications or demanding research tasks.

- Ample bandwidth: 800 GB/s of memory bandwidth keeps data flowing smoothly, even with massive models and datasets.

Weaknesses:

- High price tag: The M1 Ultra comes at a premium, making it a significant investment.

- Limited availability: M1 Ultra-equipped devices are generally harder to find than those with the M1 Max.

Practical Recommendations for Use Cases

To help you make the right decision, let's match the M1 Max and M1 Ultra with common use cases:

M1 Max:

- Developing lightweight chatbots: The M1 Max is a perfect starting point for developing simple chatbots, text generation scripts, or experimenting with smaller LLMs.

- Budget-conscious development: If you're on a tighter budget, the M1 Max offers incredible value for its performance.

- Working with moderate-sized models: The M1 Max can handle models like Llama2 7B and even Llama3 8B with decent performance.

M1 Ultra:

- High-performance research: If you're pushing the boundaries of LLM research, the M1 Ultra's exceptional processing and generation speed will be invaluable.

- Real-time applications: The M1 Ultra is ideal for real-time LLM interactions, such as building conversational AI assistants or developing interactive LLMs.

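- Working with massive models: The M1 Ultra is your best bet if you're tackling enormous models like Llama3 70B or even larger ones. No M1 Ultra results for Llama3 70B are shown here, but it offers up to 128 GB of unified memory and twice the M1 Max's bandwidth.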

Understanding Quantization and its Impact

Quantization compresses an LLM by storing its weights with fewer bits, making the model more compact and faster to run. Imagine you're trying to fit a giant elephant into a tiny suitcase. Quantization is like using a shrink ray to make the elephant smaller so it fits!

Here's a breakdown of the different quantization levels we've looked at:

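- F16: 16-bit half-precision floating point, the unquantized baseline in these benchmarks, like the full-size elephant. It's the most accurate but takes up the most space: 2 bytes per weight.

- Q8_0: Stores weights as 8-bit integers instead of 16-bit floats, with a shared scale factor for each block of weights, like shrinking the elephant down to a rabbit. It roughly halves the size but can lead to slightly less accuracy.

- Q4_0: This is even more extreme, using 4 bits per weight, like shrinking the elephant to a miniature hamster! It's the smallest of the three and the fastest at generation, but with more potential for accuracy loss. Q4_K_M, used for the Llama3 tests, is a related 4-bit "k-quant" format from llama.cpp that keeps some tensors at higher precision.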
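To see what "fewer bits" means mechanically, here is a simplified Python sketch of block quantization in the spirit of Q8_0 and Q4_0: weights are split into blocks of 32, each block stores one scale factor, and every weight is rounded to a small integer code. It uses a plain symmetric absmax scheme on random stand-in weights; llama.cpp's real formats differ in detail (for example, in how the 4-bit codes are offset and packed), so treat this as an illustration, not their implementation.

```python
import random

BLOCK_SIZE = 32  # Q8_0 and Q4_0 in llama.cpp also group weights in blocks of 32


def quantize_block(block, bits):
    """Symmetric absmax quantization of one block: returns (scale, integer codes)."""
    levels = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / levels if amax > 0 else 1.0  # one scale stored per block
    codes = [round(x / scale) for x in block]  # each code lies in [-levels, levels]
    return scale, codes


def dequantize_block(scale, codes):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def max_error(weights, bits):
    """Largest absolute reconstruction error over all blocks."""
    worst = 0.0
    for start in range(0, len(weights), BLOCK_SIZE):
        block = weights[start:start + BLOCK_SIZE]
        restored = dequantize_block(*quantize_block(block, bits))
        worst = max(worst, max(abs(a - b) for a, b in zip(block, restored)))
    return worst


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic stand-in weights
for bits in (8, 4):
    print(f"{bits}-bit codes: max abs error {max_error(weights, bits):.6f}")
```

The 4-bit run shows a much larger maximum error than the 8-bit run: each block has only 15 levels to choose from instead of 255, which is the accuracy cost of squeezing the elephant harder.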

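The fewer bits per weight, the smaller the model and the faster it generates tokens, but accuracy can suffer. The benchmarks also show a subtlety: lower precision speeds up generation yet slightly slows prompt processing (on the M1 Max, Llama2 7B processes 453.03 tokens/second at F16 but 400.26 at Q4_0), likely because generation is limited mainly by memory bandwidth while prompt processing is limited by compute, where unpacking quantized weights adds work. It's a trade-off between speed and precision, so choose the quantization level based on your specific project's requirements and priorities.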
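The size side of the trade-off is simple arithmetic: parameters times bits per weight, divided by 8, gives bytes. The Python sketch below applies it to Llama3 70B and checks the result against each chip's maximum unified memory (64 GB for the M1 Max, 128 GB for the M1 Ultra). The parameter counts are rounded approximations, the bit widths include the per-block scales of Q8_0 and Q4_0, and the check ignores the KV cache, the operating system, and any limit on how much memory the GPU may use, so it is optimistic.

```python
# Approximate parameter counts (rounded assumptions, not official figures).
PARAMS = {"Llama2 7B": 6.74e9, "Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
# Effective bits per weight, including the per-block scale for Q8_0 and Q4_0.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
MAX_UNIFIED_MEMORY_GB = {"M1 Max": 64, "M1 Ultra": 128}


def weights_gb(model, quant):
    """Approximate size of the model weights alone, in GB (1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits(model, quant, chip):
    """True if the weights alone fit in the chip's maximum unified memory.

    Optimistic: ignores the KV cache, the OS, and any GPU memory cap.
    """
    return weights_gb(model, quant) < MAX_UNIFIED_MEMORY_GB[chip]


for quant in BITS_PER_WEIGHT:
    size = weights_gb("Llama3 70B", quant)
    print(f"Llama3 70B {quant}: ~{size:.0f} GB, "
          f"fits M1 Max: {fits('Llama3 70B', quant, 'M1 Max')}, "
          f"fits M1 Ultra: {fits('Llama3 70B', quant, 'M1 Ultra')}")
```

It reports roughly 141 GB at F16, 75 GB at Q8_0 and 40 GB at Q4_0, so only a 4-bit format fits on an M1 Max, consistent with Table 1 listing Llama3 70B only at Q4_K_M, while an M1 Ultra could in principle also hold Q8_0.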

Conclusion

Both the Apple M1 Max and M1 Ultra are powerful machines capable of running LLMs locally with impressive speed. The M1 Ultra reigns supreme in raw speed: on Llama2 7B it processes prompts about 1.9 times faster and generates tokens 1.4 to 1.5 times faster than the M1 Max, and its larger memory capacity makes it the stronger candidate for bigger models. However, the M1 Max is a more versatile and budget-friendly option for developers and researchers working with smaller models or experimenting with LLM technologies.

Ultimately, the best choice depends on your specific use case, budget, and performance requirements. If you're building a super-fast, super-intelligent AI system, jump on the high-speed train with the M1 Ultra. But if you need a reliable and cost-effective workhorse, the M1 Max is a fantastic choice.

FAQ (Frequently Asked Questions)

Why are LLMs so important?

LLMs are like the brains of AI. They can understand and generate human-like text, making them useful for a wide range of applications, from chatbots and language translation to code generation and scientific research. Their ability to process and generate text is revolutionizing how we interact with computers and shaping the future of AI.

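What is token generation speed?

Token generation speed is the rate, measured in tokens per second, at which an LLM processes and produces tokens: the basic units of text, often whole words or word fragments. It's like a typist's words per minute. Benchmarks usually report two figures: prompt processing speed (how fast the model reads your input) and generation speed (how fast it writes its reply). The higher these numbers, the faster the LLM can process information and generate text.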
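Because a reply involves both phases, the two speeds combine: time is roughly prompt tokens divided by processing speed, plus output tokens divided by generation speed. A minimal Python sketch using the Llama2 7B Q4_0 figures from Tables 1 and 2; the 500-token prompt and 200-token reply are arbitrary example sizes, and real speeds shift as the context grows.

```python
def response_time_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated seconds to read a prompt and then generate a reply.

    Assumes both phases run at a constant speed; real speeds drift with context length.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama2 7B Q4_0 speeds (tokens/s) from Tables 1 and 2: (chip, processing, generation).
for chip, pp, tg in (("M1 Max", 400.26, 54.61), ("M1 Ultra", 772.24, 74.93)):
    print(f"{chip}: {response_time_s(500, 200, pp, tg):.2f} s")
```

This works out to about 4.91 seconds on the M1 Max and 3.32 seconds on the M1 Ultra. In both cases most of the time is spent generating, which is why, for prompts and replies of this shape, generation speed matters most in interactive use.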

How do I choose the right device for my LLM project?

Consider your budget, the size of the LLMs you'll be working with, and the performance demands of your project. If you need lightning-fast generation speeds for complex tasks, the M1 Ultra is your best bet. However, if you're working on smaller models or have a tighter budget, the M1 Max offers a solid balance of performance and affordability.

Can I run LLMs on my smartphone?

While some small LLMs can run on smartphones, the processing power and memory limitations of most smartphones make it difficult to handle larger and more complex LLMs.

Is it better to run LLMs locally or in the cloud?

It depends on factors like budget, security, latency, and the scale of your project. Cloud-based LLM solutions offer scalability and accessibility, but they require an internet connection. Local LLM solutions avoid network round trips and keep your data on your own machine, but they are limited by the processing power and memory of your hardware.

What are the latest developments in LLM technology?

The field of LLMs is constantly evolving. Some exciting advancements include:

- Larger and more powerful models: LLMs are becoming significantly larger and more sophisticated, capable of tackling increasingly complex tasks.

- Multimodal capabilities: LLMs are being integrated with other modalities, such as images, audio, and video, making them more versatile.

- Improved efficiency and accessibility: There's a push to make LLMs more efficient and accessible, enabling them to run on a wider range of devices.
