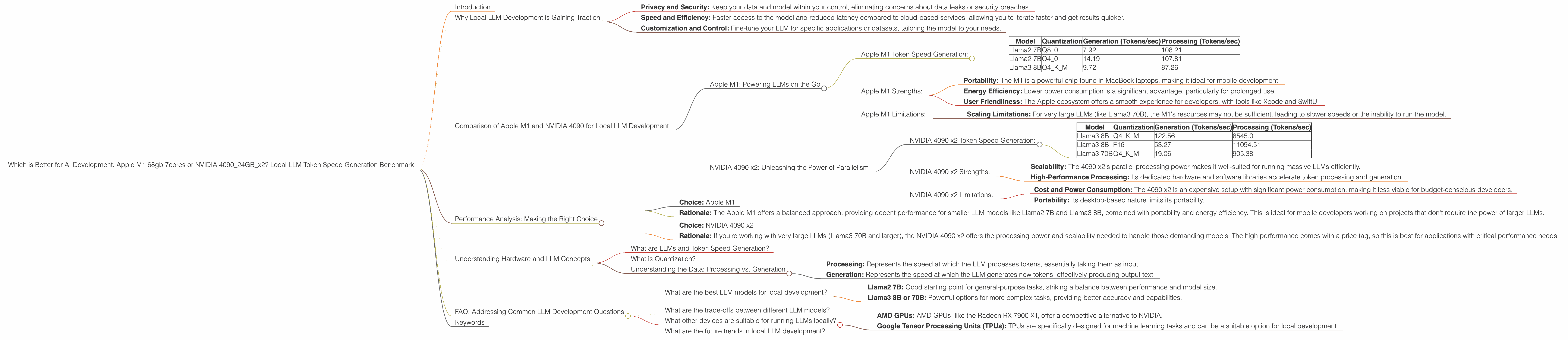

Which Is Better for AI Development: Apple M1 (68 GB/s, 7-Core GPU) or NVIDIA 4090 24GB x2? Local LLM Token Generation Speed Benchmark

Introduction

Large Language Models (LLMs) are becoming more capable and more accessible, and training and running them locally is a growing trend because it allows faster iteration and deployment of AI applications. Choosing the right hardware for the job can be daunting. This article compares two options for local LLM development: the Apple M1 with a 7-core GPU and 68 GB/s of memory bandwidth, and a pair of NVIDIA 4090 GPUs with 24GB of VRAM each. We compare them on token generation and prompt processing speed for Llama2 and Llama3 models, and discuss the strengths and weaknesses of each.

Why Local LLM Development is Gaining Traction

Think of LLMs as the brains of AI, capable of understanding and generating human-like text. Running these models locally offers several advantages:

- Privacy and Security: Keep your data and model within your control, eliminating concerns about data leaks or security breaches.

- Speed and Efficiency: Faster access to the model and reduced latency compared to cloud-based services, allowing you to iterate faster and get results quicker.

- Customization and Control: Fine-tune your LLM for specific applications or datasets, tailoring the model to your needs.

Comparison of Apple M1 and NVIDIA 4090 for Local LLM Development

This comparison focuses on the token generation performance of the Apple M1 (7-core GPU, 68 GB/s memory bandwidth) and a dual NVIDIA 4090 24GB setup across several LLMs. We consider both prompt processing and generation speeds. Data is based on publicly available benchmarks, and some combinations are missing because no benchmark data exists for them: there are no Llama2 figures for the 4090 x2, and no F16 or 70B figures for the M1.

Apple M1: Powering LLMs on the Go

The Apple M1 chip combines solid processing power with strong energy efficiency, making it a compelling option for LLM development, especially for those who value portability and ease of use.

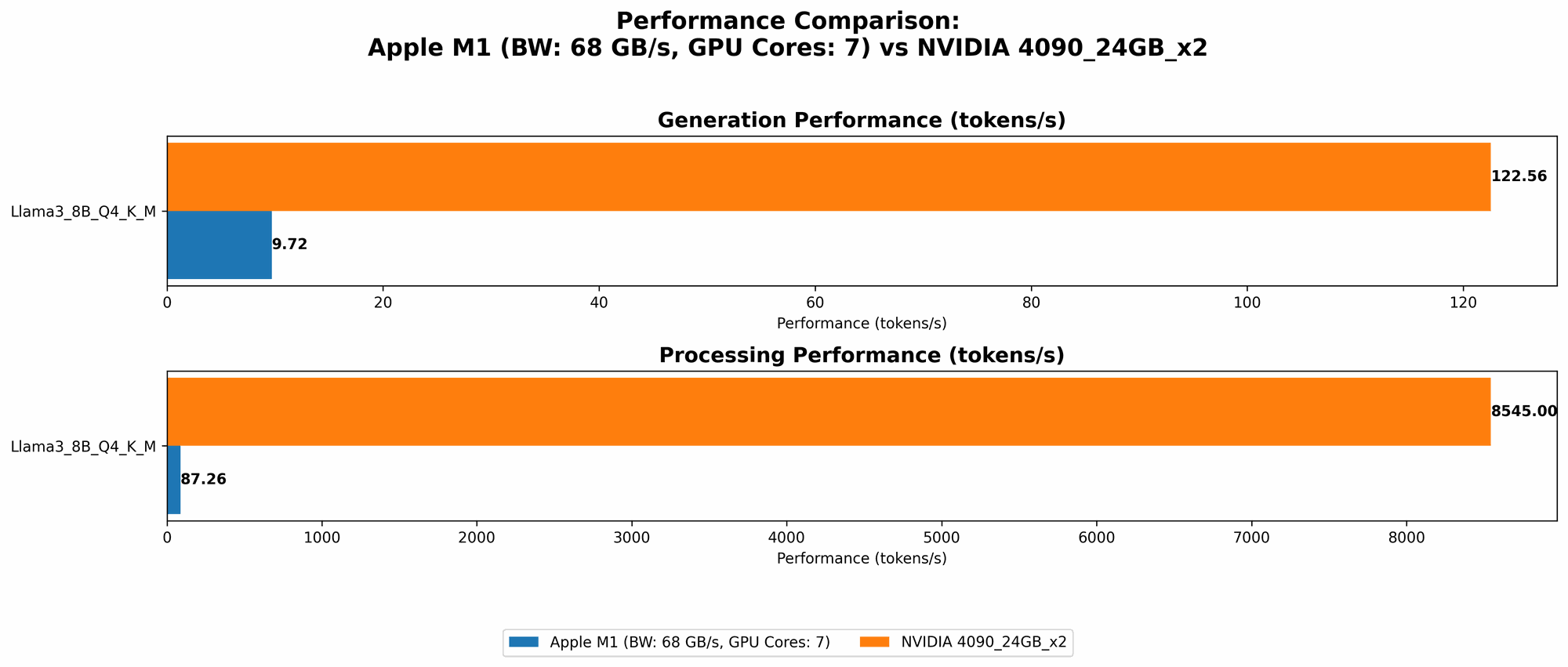

Apple M1 Token Speed Generation:

The Apple M1 runs smaller LLMs at usable speeds, especially with quantized models (Q8_0 and Q4_0):

| Model | Quantization | Generation (tokens/sec) | Processing (tokens/sec) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 7.92 | 108.21 |
| Llama2 7B | Q4_0 | 14.19 | 107.81 |
| Llama3 8B | Q4_K_M | 9.72 | 87.26 |

Key Observations:

- Smaller Model Proficiency: The M1 handles smaller LLMs like Llama2 7B and Llama3 8B at usable speeds, generating roughly 8 to 14 tokens per second depending on the model and quantization.

- Quantization Benefits: Quantization, a technique that shrinks model weights to reduce memory usage, significantly boosts generation speed on the Apple M1. Llama2 7B generates 14.19 tokens/sec at Q4_0 versus 7.92 at Q8_0, about 1.8x faster, while processing speed is essentially unchanged (107.81 vs. 108.21).
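The effect of quantization on the M1 can be checked directly from the table. The following is a minimal sketch that only divides the published Llama2 7B figures; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Figures copied from the Apple M1 table above (Llama2 7B, tokens/sec).
m1_llama2_7b = {
    "Q8_0": {"generation": 7.92, "processing": 108.21},
    "Q4_0": {"generation": 14.19, "processing": 107.81},
}


def speedup(metric: str, baseline: str = "Q8_0", candidate: str = "Q4_0") -> float:
    """Return how many times faster `candidate` is than `baseline` for `metric`."""
    return m1_llama2_7b[candidate][metric] / m1_llama2_7b[baseline][metric]


generation_speedup = speedup("generation")
processing_speedup = speedup("processing")

print(f"Generation: {generation_speedup:.2f}x")  # Generation: 1.79x
print(f"Processing: {processing_speedup:.2f}x")  # Processing: 1.00x
```

The 4-bit model nearly doubles generation speed while prompt processing stays flat, which suggests the gain comes from moving fewer bytes per generated token and not from doing less arithmetic.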

Apple M1 Strengths:

- Portability: The M1 is a powerful chip found in MacBook laptops, making it ideal for mobile development.

- Energy Efficiency: Lower power consumption is a significant advantage, particularly for prolonged use.

- User Friendliness: The Apple ecosystem offers a smooth experience for developers, with tools like Xcode and SwiftUI.

Apple M1 Limitations:

- Scaling Limitations: For very large LLMs (like Llama3 70B), the M1's resources may not be sufficient, leading to slower speeds or the inability to run the model.

NVIDIA 4090 x2: Unleashing the Power of Parallelism

The NVIDIA 4090 is a desktop GPU built for high throughput, and a pair of them provides 48GB of combined VRAM for tackling large-scale LLMs. It is not portable, but its raw performance makes it a top contender.

NVIDIA 4090 x2 Token Speed Generation:

The NVIDIA 4090 x2 delivers remarkable speeds for larger LLMs, showcasing its prowess in handling complex models:

| Model | Quantization | Generation (tokens/sec) | Processing (tokens/sec) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 | 8545.0 |
| Llama3 8B | F16 | 53.27 | 11094.51 |
| Llama3 70B | Q4_K_M | 19.06 | 905.38 |

Key Observations:

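- Larger Model Capability: The 4090 x2 runs Llama3 70B (Q4_K_M) at 19.06 tokens/sec, a model size for which no M1 figures are available. On Llama3 8B (Q4_K_M) it generates 122.56 tokens/sec versus 9.72 on the M1, roughly 12.6x faster.

- Faster Processing: The gap is even wider for prompt processing: 8545.0 tokens/sec versus 87.26 on the M1 for Llama3 8B (Q4_K_M), roughly 98x.

- F16 Performance: The 4090 x2 runs unquantized F16 Llama3 8B at 53.27 tokens/sec generation and 11094.51 tokens/sec processing, so skipping quantization remains practical at this model size.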
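Llama3 8B with 4-bit K-quantization is the only configuration that appears in both tables, so it is the one direct comparison the data supports. A small sketch of that comparison, using only the published figures (the variable and function names are illustrative):

```python
# Llama3 8B, Q4_K_M rows from the two tables above (tokens/sec).
llama3_8b_q4_k_m = {
    "Apple M1": {"generation": 9.72, "processing": 87.26},
    "NVIDIA 4090 x2": {"generation": 122.56, "processing": 8545.0},
}


def advantage(metric: str) -> float:
    """Return how many times faster the 4090 x2 is than the M1 for `metric`."""
    fast = llama3_8b_q4_k_m["NVIDIA 4090 x2"][metric]
    slow = llama3_8b_q4_k_m["Apple M1"][metric]
    return fast / slow


generation_advantage = advantage("generation")
processing_advantage = advantage("processing")

print(f"Generation: {generation_advantage:.1f}x")  # Generation: 12.6x
print(f"Processing: {processing_advantage:.1f}x")  # Processing: 97.9x
```

The processing gap is much larger than the generation gap, so the 4090 x2's advantage is most visible on workloads with long prompts.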

NVIDIA 4090 x2 Strengths:

- Scalability: The 4090 x2's parallel processing power makes it well-suited for running massive LLMs efficiently.

- High-Performance Processing: Its dedicated hardware and software libraries accelerate token processing and generation.

NVIDIA 4090 x2 Limitations:

- Cost and Power Consumption: The 4090 x2 is an expensive setup with significant power consumption, making it less viable for budget-conscious developers.

- Portability: Its desktop-based nature limits its portability.

Performance Analysis: Making the Right Choice

The choice between the Apple M1 and NVIDIA 4090 x2 hinges on your specific use case, budget, and priorities. Let's break down the scenarios:

Scenario 1: Mobile Development and Smaller Models

- Choice: Apple M1

- Rationale: The Apple M1 offers a balanced approach, providing decent performance for smaller LLM models like Llama2 7B and Llama3 8B, combined with portability and energy efficiency. This is ideal for mobile developers working on projects that don't require the power of larger LLMs.

Scenario 2: High-Performance Computing and Large Models

- Choice: NVIDIA 4090 x2

- Rationale: If you're working with very large LLMs (Llama3 70B and larger), the NVIDIA 4090 x2 offers the processing power and scalability needed to handle those demanding models. The high performance comes with a price tag, so this is best for applications with critical performance needs.

Understanding Hardware and LLM Concepts

What are LLMs and Token Speed Generation?

LLMs are AI models trained on massive datasets of text, allowing them to understand and generate human-like language. Token speed generation refers to the speed at which a model can process and generate tokens, which are the basic units of text. Higher token speeds mean faster responses and a more efficient LLM.
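To make the metric concrete, here is a minimal sketch of how tokens per second could be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The `fake_generate` function is a hypothetical stand-in for a real model's streaming output, and its token count and delay are arbitrary assumptions; the benchmarks cited in this article were not produced with this code.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token stream and return its throughput in tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001) -> Iterable[str]:
    """Hypothetical stand-in for a model: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/sec")
```

With a real model, the same wrapper would be applied around whatever streaming call the inference library exposes, and the result would vary with prompt length, batch size, and hardware load.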

What is Quantization?

Think of quantization as making an LLM smaller and more nimble. It reduces the number of bits used to represent each weight in the model, giving a smaller file size and usually faster generation, at the cost of some accuracy. This is especially beneficial on devices with limited memory like the Apple M1.
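A rough estimate of weight size shows why bit width matters. The sketch below assumes nominal parameter counts (7 billion for a "7B" model) and the bits-per-weight values implied by the llama.cpp block formats, where Q8_0 and Q4_0 store 32 weights plus one 16-bit scale per block (8.5 and 4.5 bits per weight respectively). Those values are assumptions brought in for illustration and are not stated in the benchmark data. The estimate covers weights only and ignores the KV cache and runtime overhead.

```python
# Assumed average bits per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def estimated_weight_size_gb(n_params: float, quant: str) -> float:
    """Estimate the size of the model weights in gigabytes (10^9 bytes)."""
    total_bits = n_params * BITS_PER_WEIGHT[quant]
    return total_bits / 8 / 1e9


nominal_7b = 7e9  # nominal parameter count for a "7B" model (assumption)
for quant in BITS_PER_WEIGHT:
    size = estimated_weight_size_gb(nominal_7b, quant)
    print(f"{quant}: {size:.2f} GB")
# F16: 14.00 GB
# Q8_0: 7.44 GB
# Q4_0: 3.94 GB
```

Under these assumptions, a 7B model shrinks from about 14 GB at F16 to under 4 GB at Q4_0, which is the difference between not fitting and fitting comfortably on a machine with limited memory.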

Understanding the Data: Processing vs. Generation

- Processing: Represents the speed at which the LLM ingests input tokens (the prompt) before it begins generating a response.

- Generation: Represents the speed at which the LLM generates new tokens, effectively producing output text.

Example: Imagine you have a model that processes 1000 tokens per second (tokens processed per second), and it generates 100 tokens per second (tokens generated per second). This means the model is quickly digesting the input text but takes longer to produce the output.
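The two speeds combine into a simple estimate of total response time: prompt length divided by processing speed, plus output length divided by generation speed. The sketch below applies that formula to the hypothetical model from the example; the function name and the 500-token prompt and 200-token reply are illustrative assumptions, and real runs add overhead such as model loading that this ignores.

```python
def estimated_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate total latency as prompt-processing time plus generation time."""
    processing_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return processing_time + generation_time


# Hypothetical model from the example: 1000 tokens/sec processing, 100 tokens/sec generation.
latency = estimated_response_seconds(
    prompt_tokens=500, output_tokens=200, processing_tps=1000.0, generation_tps=100.0
)
print(f"{latency:.1f} s")  # 2.5 s (0.5 s reading the prompt, 2.0 s writing the reply)
```

Because generation is usually the slower of the two, output length tends to dominate latency unless the prompt is very long.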

FAQ: Addressing Common LLM Development Questions

What are the best LLM models for local development?

There's no one-size-fits-all answer, as the best model depends on your specific needs:

- Llama2 7B: Good starting point for general-purpose tasks, striking a balance between performance and model size.

- Llama3 8B or 70B: Powerful options for more complex tasks, providing better accuracy and capabilities.

What are the trade-offs between different LLM models?

Smaller models are generally faster and require less memory or processing power. Larger models offer better accuracy and can handle more complex requests but come with higher computational requirements.

What other devices are suitable for running LLMs locally?

Besides the Apple M1 and NVIDIA 4090 x2, other options include:

- AMD GPUs: AMD GPUs, like the Radeon RX 7900 XT, offer a competitive alternative to NVIDIA.

- Google Tensor Processing Units (TPUs): TPUs are specifically designed for machine learning tasks, but they are offered mainly through Google's cloud services, so they are an alternative to local hardware more than a local option.

What are the future trends in local LLM development?

Expect continued improvements in hardware, software, and LLM optimization techniques. Researchers are exploring techniques like model compression and efficient architectures to make LLMs more accessible for local development.

Keywords

Apple M1, NVIDIA 4090, LLM, Llama2, Llama3, token speed, generation, processing, quantization, local development, AI, machine learning, GPU, TPU, performance, benchmark, comparison, development, hardware, software.