Which is Better for AI Development: Apple M1 (68 GB/s, 7-Core GPU) or Apple M1 Max (400 GB/s, 24-Core GPU)? Local LLM Token Generation Speed Benchmark

Introduction

As Large Language Models (LLMs) continue to reshape artificial intelligence, the hardware used to run them locally matters more and more. One key aspect of LLM performance is token generation speed, which determines how quickly a model produces its responses.

This article dives into a benchmark comparing two popular Apple silicon chips, the M1 with a 7-core GPU and 68 GB/s of memory bandwidth and the M1 Max with a 24-core GPU and 400 GB/s of memory bandwidth, and evaluates how well each runs LLMs locally. (The "68GB" and "400GB" figures often quoted for these configurations are memory bandwidth in GB/s, not memory capacity.) We'll analyze their token generation speeds for popular models, including Llama 2 and Llama 3, and explore how different quantization levels affect performance.

Buckle up, fellow AI enthusiasts, it's time to unravel the secrets of these silicon beasts!

Understanding LLM Token Speed Generation

Before we dive into the benchmark, let's clarify what we mean by "token speed generation" in the context of LLMs.

Imagine you're feeding text to an LLM. Instead of processing the entire text as one large piece, the model's tokenizer breaks it into smaller units called tokens. Tokens are chunks of text, usually whole words or pieces of words (sub-words).
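To make the idea of sub-word tokens concrete, here is a toy sketch of greedy longest-match tokenization. The vocabulary `VOCAB` and the function `tokenize` are hypothetical illustrations; real Llama tokenizers use byte-pair encoding with vocabularies of tens of thousands of entries, so their splits will differ.

```python
# Toy greedy longest-match subword tokenizer (illustration only).
# The vocabulary is hypothetical and far smaller than any real LLM vocabulary.
VOCAB = {"token", "iz", "ation", "speed", "s", " "}


def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Repeatedly take the longest vocabulary entry that matches at the
    current position; unknown characters become single-character tokens."""
    tokens = []
    i = 0
    max_len = max(len(v) for v in vocab)
    while i < len(text):
        # Try the longest candidate first, shrinking until something matches.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No vocabulary entry matched: fall back to one character.
            tokens.append(text[i])
            i += 1
    return tokens


print(tokenize("tokenization speeds", VOCAB))
# → ['token', 'iz', 'ation', ' ', 'speed', 's']
```

Two words became six tokens here, which is why tokens/s and words/s are not the same number.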

Token generation speed, measured in tokens per second (tokens/s), is the rate at which the model produces new output tokens, one after another. The higher the rate, the faster a response appears. (Reading your prompt is a separate phase, usually reported as prompt processing speed; the figures in this article are generation speeds.) Think of it like a high-speed train zipping through text, emitting meaningful chunks at lightning speed!
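As a rough sketch of how a tokens/s figure is obtained, the snippet below times a token stream and divides the token count by the elapsed time. `fake_token_stream` is a hypothetical stand-in for a model's decode loop (it just sleeps per token); in practice you would time the real generator of whatever runtime you use.

```python
import time
from typing import Iterable, Iterator


def fake_token_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for an LLM decode loop: yields n tokens,
    sleeping delay_s seconds per token to mimic generation latency."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


def measure_tokens_per_second(stream: Iterable[str]) -> tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


count, tps = measure_tokens_per_second(fake_token_stream(20, 0.01))
# Each token takes at least 10 ms here, so tps comes out at or below ~100.
print(f"{count} tokens at {tps:.1f} tokens/s")
```

This only times the generation loop, matching the distinction above between generation and prompt processing.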

Comparing Apple M1 and M1 Max for LLM Performance

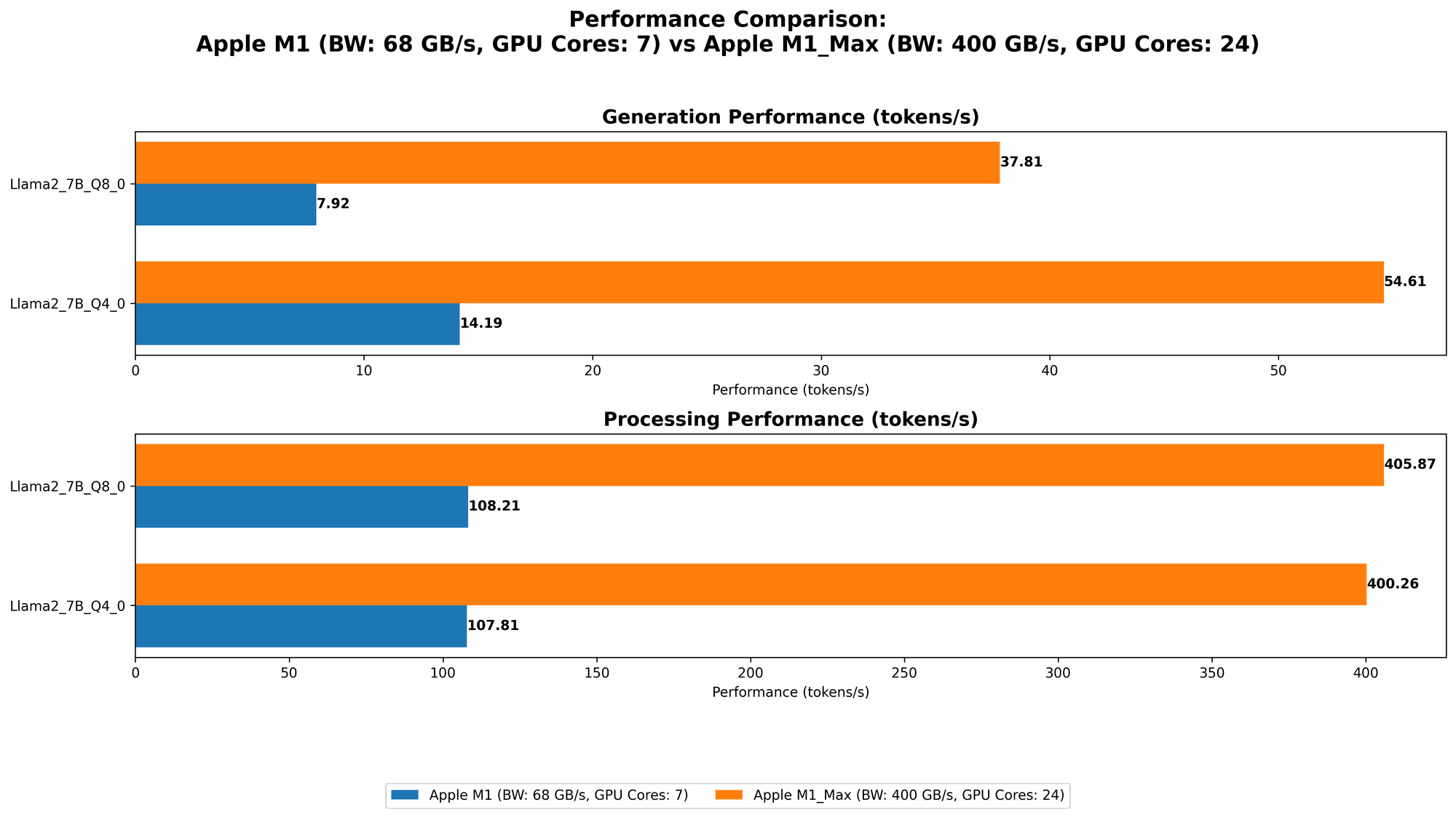

Now, let's get to the heart of the matter: comparing the Apple M1 (7-core GPU, 68 GB/s) and the Apple M1 Max (24-core GPU, 400 GB/s) for LLM token generation.

To make this comparison meaningful, we'll look at several LLM models, including Llama 2 and Llama 3, and test their performance at different quantization levels. Quantization compresses a model by storing its weights at lower numerical precision (for example, 8 or 4 bits instead of 16), which shrinks its memory footprint and lets it run faster, including on less powerful hardware.
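A quick way to see what quantization buys is to estimate the weight footprint at each precision. This is a back-of-envelope sketch, assuming about 6.74 billion parameters for Llama 2 7B and the bits-per-weight of llama.cpp's block formats (Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights, adding 0.5 bits per weight). It ignores the KV cache and runtime overhead.

```python
# Approximate weight-only footprint at different precisions.
# Assumed bits per weight: F16 = 16, Q8_0 = 8.5, Q4_0 = 4.5 (llama.cpp block formats).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


LLAMA2_7B_PARAMS = 6.74e9  # assumed parameter count for Llama 2 7B

for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt:5s} ~{weight_gb(LLAMA2_7B_PARAMS, bpw):.1f} GB")
# → F16   ~13.5 GB
# → Q8_0  ~7.2 GB
# → Q4_0  ~3.8 GB
```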

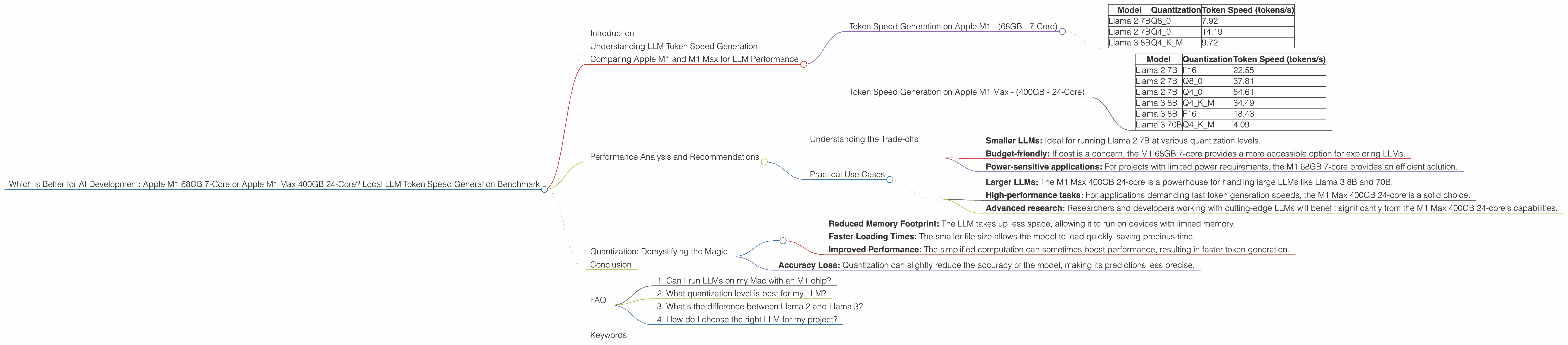

Token Generation Speed on Apple M1 (68 GB/s, 7-Core GPU)

The Apple M1 with a 7-core GPU offers a good balance of performance and power efficiency, but it is limited to smaller models. The benchmark shows reasonable token generation speeds for Llama 2 7B at both tested quantization levels (Q8_0 and Q4_0) and for Llama 3 8B at Q4_K_M. No result is reported for Llama 3 70B: the M1 can be configured with at most 16 GB of unified memory, far less than the roughly 40 GB a 70B model needs even at 4-bit quantization.

Here are the token generation speeds in tokens per second (tokens/s):

| Model | Quantization | Token Speed (tokens/s) |
|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 |
| Llama 2 7B | Q4_0 | 14.19 |
| Llama 3 8B | Q4_K_M | 9.72 |

Key Highlights:

- Strong performance for Llama 2 7B: The M1 reaches 14.19 tokens/s for Llama 2 7B at Q4_0, nearly double its Q8_0 speed.

- Limitations with larger models: Llama 3 8B runs at a usable 9.72 tokens/s at Q4_K_M, but Llama 3 70B does not fit in the M1's memory, so the chip is best suited to models in the 7B-8B range.

Token Generation Speed on Apple M1 Max (400 GB/s, 24-Core GPU)

The Apple M1 Max with a 24-core GPU is built for demanding workloads like LLM inference. Its larger GPU and, above all, its much higher memory bandwidth (400 GB/s versus 68 GB/s) deliver significantly faster token generation than the M1.

Here’s a breakdown of token generation speeds for various LLM models:

| Model | Quantization | Token Speed (tokens/s) |
|---|---|---|
| Llama 2 7B | F16 | 22.55 |
| Llama 2 7B | Q8_0 | 37.81 |
| Llama 2 7B | Q4_0 | 54.61 |
| Llama 3 8B | Q4_K_M | 34.49 |
| Llama 3 8B | F16 | 18.43 |
| Llama 3 70B | Q4_K_M | 4.09 |

Key Highlights:

- Superior performance across the board: The M1 Max beats the M1 on every configuration tested on both chips, generating tokens roughly 3.5 to 4.8 times faster.

- Capable with larger LLMs: The M1 Max runs Llama 3 8B at 34.49 tokens/s (Q4_K_M) and can even load Llama 3 70B, which it generates at a slow but usable 4.09 tokens/s.

- Impact of quantization: Quantization pays off substantially on the M1 Max. For Llama 2 7B, Q4_0 is about 2.4 times faster than F16 (54.61 vs 22.55 tokens/s).
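The speedups quoted in these highlights can be checked directly from the two tables. The sketch below copies the reported numbers into dictionaries (using llama.cpp's name Q4_K_M for the format) and computes the cross-chip and quantization ratios; no new measurements are involved.

```python
# Token generation speeds (tokens/s) copied from the two benchmark tables.
M1 = {
    ("Llama 2 7B", "Q8_0"): 7.92,
    ("Llama 2 7B", "Q4_0"): 14.19,
    ("Llama 3 8B", "Q4_K_M"): 9.72,
}
M1_MAX = {
    ("Llama 2 7B", "F16"): 22.55,
    ("Llama 2 7B", "Q8_0"): 37.81,
    ("Llama 2 7B", "Q4_0"): 54.61,
    ("Llama 3 8B", "Q4_K_M"): 34.49,
    ("Llama 3 8B", "F16"): 18.43,
    ("Llama 3 70B", "Q4_K_M"): 4.09,
}

# Speedup of the M1 Max over the M1 for configurations measured on both chips.
for key in sorted(M1.keys() & M1_MAX.keys()):
    print(key, f"{M1_MAX[key] / M1[key]:.2f}x")
# → ('Llama 2 7B', 'Q4_0') 3.85x
# → ('Llama 2 7B', 'Q8_0') 4.77x
# → ('Llama 3 8B', 'Q4_K_M') 3.55x

# Effect of quantization on the M1 Max for Llama 2 7B, relative to F16.
f16 = M1_MAX[("Llama 2 7B", "F16")]
for quant in ("Q8_0", "Q4_0"):
    print(quant, f"{M1_MAX[('Llama 2 7B', quant)] / f16:.2f}x vs F16")
# → Q8_0 1.68x vs F16
# → Q4_0 2.42x vs F16
```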

Performance Analysis and Recommendations

Understanding the Trade-offs

Cost vs. Performance: The M1 Max is the clear winner in terms of performance, but it comes at a higher cost. The M1 is a budget-friendly alternative, especially for those who focus mainly on smaller LLMs.

Power Consumption: The M1 draws less power than the M1 Max, making it suitable for tasks with limited power budgets.

Memory: The M1 Max has roughly six times the memory bandwidth of the M1 (400 GB/s vs 68 GB/s), and token generation is largely limited by how fast the weights can be read from memory. It can also be configured with up to 64 GB of unified memory versus 16 GB on the M1, which is what lets it load larger models such as Llama 3 70B.
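Why bandwidth matters can be shown with a common back-of-envelope ceiling: if generating each token requires streaming roughly all the weights from memory once, then tokens/s cannot exceed bandwidth divided by weight size. This sketch assumes 6.74 billion parameters for Llama 2 7B, llama.cpp bits-per-weight (Q8_0 = 8.5, Q4_0 = 4.5), and the peak bandwidths of 68 GB/s and 400 GB/s; it ignores KV-cache reads and compute limits, so it gives an upper bound, not a prediction.

```python
# Bandwidth-bound ceiling on token generation speed (rough upper bound).
# Assumptions: all weights are read once per generated token; KV cache ignored.
def max_tokens_per_second(bandwidth_gb_s: float, n_params: float,
                          bits_per_weight: float) -> float:
    weight_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / weight_bytes


LLAMA2_7B_PARAMS = 6.74e9  # assumed parameter count

for chip, bw in (("M1", 68.0), ("M1 Max", 400.0)):
    for fmt, bpw in (("Q8_0", 8.5), ("Q4_0", 4.5)):
        ceiling = max_tokens_per_second(bw, LLAMA2_7B_PARAMS, bpw)
        print(f"{chip:6s} {fmt}: <= {ceiling:.1f} tokens/s")
```

The measured figures (7.92 and 14.19 tokens/s on the M1, 37.81 and 54.61 tokens/s on the M1 Max) all sit below these ceilings, consistent with a workload limited mainly by memory bandwidth.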

Practical Use Cases

M1 68GB 7-core:

- Smaller LLMs: Ideal for running Llama 2 7B (Q8_0 or Q4_0) and Llama 3 8B (Q4_K_M).

- Budget-friendly: If cost is a concern, the M1 68GB 7-core provides a more accessible option for exploring LLMs.

- Power-sensitive applications: For projects with limited power budgets, the M1 68GB 7-core provides an efficient solution.

M1 Max 400GB 24-core:

- Larger LLMs: The M1 Max is the better choice for larger models, and the only one of the two that can run Llama 3 70B.

- High-performance tasks: For applications demanding fast token generation speeds, the M1 Max 400GB 24-core is a solid choice.

- Advanced research: Researchers and developers working with cutting-edge LLMs will benefit significantly from the M1 Max 400GB 24-core's capabilities.

Quantization: Demystifying the Magic

A common question developers ask is: "What's the deal with this quantization thing? Why should I care?"

Quantization is like a magical trick that shrinks a heavy LLM into a lightweight version, enabling it to run faster on less powerful hardware. Imagine fitting a whole orchestra into a tiny car!

Think of an LLM as a giant recipe book. Each measurement in a recipe is a numerical value, a weight the model uses to predict its output. Quantization is like rounding off those measurements so they can be written down with fewer digits.
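The "rounding off measurements" idea can be sketched in a few lines. This is a simplified symmetric 4-bit block quantizer, loosely modeled on the idea behind llama.cpp's Q4_0 (blocks of 32 weights sharing one scale); it is an illustration, not llama.cpp's exact encoding. The sample weights are arbitrary deterministic values chosen for the demo.

```python
# Simplified symmetric 4-bit block quantization (illustration, not llama.cpp's code).
BLOCK = 32  # weights per block, as in Q4_0


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Round each weight to an integer in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights (the "rounded measurements")."""
    return [scale * v for v in q]


weights = [((i * 37) % 64 - 32) / 100 for i in range(BLOCK)]  # arbitrary sample
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Storage: 32 four-bit integers plus one 16-bit scale per block.
bits_per_weight = (BLOCK * 4 + 16) / BLOCK  # 4.5 bits instead of 16 for F16
print(f"scale={scale:.4f}, max error={max_err:.4f}, bits/weight={bits_per_weight}")
```

Every weight is off by at most half a scale step, which is the small accuracy loss traded for a roughly 3.5x smaller footprint than F16.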

Here's the magic:

- Reduced Memory Footprint: The LLM takes up less space, allowing it to run on devices with limited memory.

- Faster Loading Times: The smaller file size allows the model to load quickly, saving precious time.

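- Improved Performance: Generating each token requires reading the model's weights from memory, so smaller weights mean less data to move. This is why Q4_0 generates tokens faster than Q8_0 and F16 in the benchmark tables.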

However, there's a tradeoff:

- Accuracy Loss: Quantization can slightly reduce the accuracy of the model, making its predictions less precise.

The key is to find the sweet spot between performance and accuracy.

Conclusion

Choosing the right hardware for LLM development is a critical decision. The benchmark clearly shows that the Apple M1 Max reigns supreme in token generation speed, especially when handling larger LLMs. The M1 presents a budget-friendly option for smaller LLMs, offering a balance between performance and power efficiency.

Ultimately, the best choice depends on your specific needs and priorities. Remember, the journey into the world of LLMs is all about finding the perfect balance between performance, cost, and power consumption!

FAQ

1. Can I run LLMs on my Mac with an M1 chip?

Absolutely! Both the Apple M1 and M1 Max chips are powerful enough to run various LLM models locally. The M1 is great for smaller models, while the M1 Max is perfect for larger ones.

2. What quantization level is best for my LLM?

The ideal quantization level depends on your specific LLM and hardware. Lower-bit formats (like Q4_0) typically generate tokens faster and use less memory but may sacrifice some accuracy. Higher-precision formats (like Q8_0, or the unquantized F16) retain more accuracy but are larger and slower. Experimentation is key!

3. What's the difference between Llama 2 and Llama 3?

Llama 2 and Llama 3 are popular openly available LLM families from Meta. Llama 3 is the newer generation: it was trained on substantially more data and uses a larger tokenizer vocabulary. In this benchmark, Llama 3 appears in 8B and 70B sizes, while Llama 2 appears as 7B.

4. How do I choose the right LLM for my project?

Choosing the right LLM depends on your project's requirements. Consider factors like model size, accuracy, and computational resources available.

Keywords

LLM, Large Language Model, Apple M1, Apple M1 Max, Token Speed Generation, Llama 2, Llama 3, Quantization, Model Inference, AI Development, Local LLMs, GPU, CPU, Tokenization, Performance Benchmark, Hardware Comparison, AI Enthusiasts, Developers, Machine Learning, Deep Learning, Natural Language Processing.