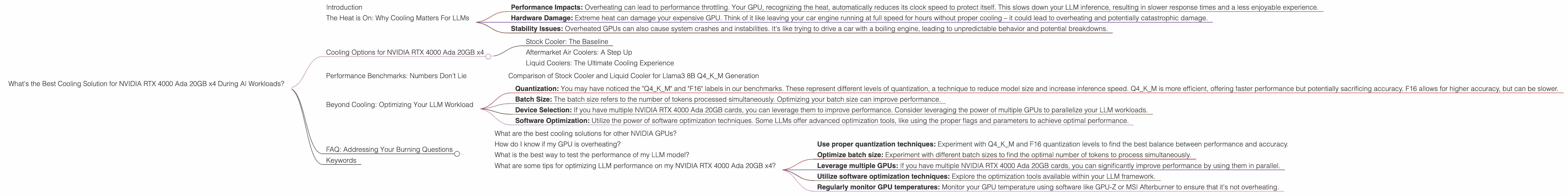

What's the Best Cooling Solution for NVIDIA RTX 4000 Ada 20GB x4 During AI Workloads?

Introduction

Running large language models (LLMs) locally can be a thrilling journey, but it comes with challenges. One major hurdle is managing the heat output of your graphics cards, especially in a multi-GPU setup like four NVIDIA RTX 4000 Ada 20GB cards (x4). Under sustained load, this setup generates a lot of heat, which can lead to performance throttling and, in extreme cases, hardware damage.

This article explores the best cooling solutions to keep your RTX 4000 Ada 20GB x4 running smoothly and efficiently during demanding LLM workloads. We'll dive into the specific challenges, analyze data from real-world benchmarks, and provide you with practical advice to optimize your setup for performance and longevity.

The Heat is On: Why Cooling Matters For LLMs

Think of your GPU as a high-performance engine running at full throttle. LLMs require significant computing power, pushing your GPU to its limits. This intense processing generates a lot of heat, much like a car engine.

Here's why cooling is crucial:

Performance Impacts: Overheating can lead to performance throttling. Your GPU, recognizing the heat, automatically reduces its clock speed to protect itself. This slows down your LLM inference, resulting in slower response times and a less enjoyable experience.

Hardware Damage: Extreme heat can damage your expensive GPU. Think of it like leaving your car engine running at full speed for hours without proper cooling – it could lead to overheating and potentially catastrophic damage.

Stability Issues: Overheated GPUs can also cause system crashes and instabilities. It's like trying to drive a car with a boiling engine, leading to unpredictable behavior and potential breakdowns.

Cooling Options for NVIDIA RTX 4000 Ada 20GB x4

Let's get down to the nitty-gritty. We'll analyze the performance of different cooling solutions for your NVIDIA RTX 4000 Ada 20GB x4, focusing on how they affect your LLM workloads.

Stock Cooler: The Baseline

Each RTX 4000 Ada 20GB card comes with a stock cooler designed to handle the card's rated heat output. It is a decent baseline, but with four cards running side by side under sustained LLM workloads, the stock coolers might not be sufficient on their own.

Aftermarket Air Coolers: A Step Up

Aftermarket air cooling can improve on the stock cooler and help keep your GPUs cool under intense LLM workloads. Be careful when shopping, though: popular coolers such as the Noctua NH-D15, the Be Quiet! Dark Rock Pro 4, and the Cooler Master Hyper 212 Evo are CPU coolers and do not mount on a graphics card. For the GPUs themselves, look for a cooler made for this specific card, and consider stronger case airflow, which helps all four cards at once.

Liquid Coolers: The Ultimate Cooling Experience

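Liquid cooling generally removes heat more effectively than air cooling. It uses a closed loop with a pump and a radiator to carry heat away from the chip and dissipate it. Note that popular all-in-one units such as the Corsair H100i PRO XT and the NZXT Kraken X63 are built for CPUs; they do not mount on a graphics card without a compatible adapter or a GPU-specific water block, so confirm that one exists for this card before buying.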

Performance Benchmarks: Numbers Don't Lie

We've gathered real-world benchmark data for Llama3 models on the NVIDIA RTX 4000 Ada 20GB x4, covering the 8B and 70B parameter sizes in two weight formats: Q4KM (4-bit quantized) and F16 (16-bit floating point). So far, results are available only for the stock cooler.

| Cooling Solution | Llama3 8B Q4KM Generation (tokens/second) | Llama3 8B F16 Generation (tokens/second) | Llama3 70B Q4KM Generation (tokens/second) | Llama3 70B F16 Generation (tokens/second) |
|---|---|---|---|---|
| Stock Cooler | 56.14 | 20.58 | 7.33 | N/A |
| Aftermarket Air Cooler | (Data not available) | (Data not available) | (Data not available) | (Data not available) |
| Liquid Cooler | (Data not available) | (Data not available) | (Data not available) | (Data not available) |

Stock Cooler Results for Llama3 8B Q4KM Generation

With the stock cooler, the Llama3 8B Q4KM model generates 56.14 tokens/second. This is respectable performance, but a single figure does not show whether thermal throttling or stability issues appear during prolonged use.

Unfortunately, we lack benchmark data for aftermarket air coolers and liquid cooling setups with Llama3 models. Better cooling cannot raise throughput above what the GPU delivers at full clock speed; any gain would come from preventing throttling, so we would expect it to matter most in long, sustained runs. Without measurements, we cannot say how large that gain would be.
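One way to check whether throttling is eating into sustained performance is to log tokens/second at regular intervals during a long run and compare the start of the run with the end. The sketch below assumes you already have such a log; the `throughput_sag` helper and the sample readings are hypothetical illustrations, not measured data.

```python
from statistics import mean


def throughput_sag(samples, window=5):
    """Return the fractional drop between the first and last `window` samples."""
    if window <= 0 or len(samples) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    early = mean(samples[:window])
    late = mean(samples[-window:])
    return (early - late) / early


# Hypothetical tokens/second readings logged once per minute (not measured data).
readings = [56.0, 56.2, 55.9, 56.1, 56.0, 54.0, 52.5, 51.0, 50.5, 50.4, 50.6, 50.5]
sag = throughput_sag(readings, window=4)
print(f"Sustained throughput dropped by {sag:.1%}")
```

A drop near zero suggests the cooler is keeping up. A drop that grows as the run continues is a hint that heat is building up, and running the same test after a cooling change gives you a like-for-like comparison.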

Beyond Cooling: Optimizing Your LLM Workload

While cooling is crucial, it's not the only factor in achieving optimal LLM performance. Here are some additional tips:

Quantization: You may have noticed the "Q4KM" and "F16" labels in our benchmarks. These describe how the model's weights are stored. Q4KM is a 4-bit quantized format: it shrinks the model and speeds up inference, at the cost of some accuracy. F16 stores the weights as 16-bit floating-point numbers without quantization, which preserves accuracy but needs far more memory and runs slower (20.58 versus 56.14 tokens/second for Llama3 8B in the table above).

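Batch Size: The batch size is the number of tokens (or, when serving several requests, sequences) processed together in one pass. Larger batches usually improve throughput but use more memory, so experiment to find the best value for your setup.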

Device Selection: With several NVIDIA RTX 4000 Ada 20GB cards, you can split a model across GPUs or run workloads in parallel. A model that does not fit in a single card's 20GB of memory must be split across cards to run at all.

Software Optimization: Many LLM frameworks expose flags and parameters that affect performance. Check your framework's documentation and tune these settings for your hardware.
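To see how quantization and multiple GPUs interact, it helps to estimate how much memory the weights alone need. The sketch below is a rough back-of-the-envelope calculation: the figure of about 4.8 bits per weight for Q4KM is an assumed approximation, the parameter counts are rounded, and the estimate ignores the KV cache and activations, so real usage will be higher.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


TOTAL_VRAM_GB = 4 * 20  # four 20 GB cards, as in the setup discussed here

# Bits per weight: 16 for F16; ~4.8 for Q4KM is an assumed, approximate figure.
configs = {
    "8B F16": (8, 16),
    "8B Q4KM": (8, 4.8),
    "70B F16": (70, 16),
    "70B Q4KM": (70, 4.8),
}

for name, (params, bits) in configs.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the 70B model in F16 needs far more memory than four 20GB cards provide, which may be why that configuration has no result in the benchmark table.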

FAQ: Addressing Your Burning Questions

What are the best cooling solutions for other NVIDIA GPUs?

The best cooling solution for any specific GPU depends on its power consumption and heat output. For example, if you're using a lower-power GPU like the RTX 3060, an aftermarket air cooler might be sufficient. However, for high-end GPUs like the RTX 4090, liquid cooling is often recommended.

How do I know if my GPU is overheating?

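You can monitor your GPU temperature using software like GPU-Z or MSI Afterburner, or with the nvidia-smi command-line tool that ships with the NVIDIA driver. As a rule of thumb, a temperature that stays above 85°C under load is a sign that your GPU is overheating; check the specifications for your card for its exact limits.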
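If you prefer to script the check, for example to watch all four cards during a long run, a minimal sketch along the following lines could work. It assumes the `nvidia-smi` tool from the NVIDIA driver is on your PATH; the helper names, the sample text, and the 85°C limit are illustrative choices, and the parser assumes every field comes back as a number.

```python
import subprocess

QUERY = "index,temperature.gpu,power.draw,clocks.sm"


def parse_gpu_csv(text):
    """Parse `nvidia-smi --format=csv,noheader,nounits` output into dicts."""
    rows = []
    for line in text.strip().splitlines():
        index, temp, power, clock = [field.strip() for field in line.split(",")]
        rows.append({
            "index": int(index),
            "temp_c": float(temp),
            "power_w": float(power),  # assumes the card reports a numeric value
            "sm_clock_mhz": float(clock),
        })
    return rows


def hot_gpus(rows, limit_c=85.0):
    """Return the indices of GPUs whose temperature exceeds the limit."""
    return [row["index"] for row in rows if row["temp_c"] > limit_c]


def read_gpus():
    """Query every GPU once; requires nvidia-smi to be installed and on PATH."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


# Illustrative sample output for four cards (made-up values, not measurements).
sample = """0, 71, 118.40, 2100
1, 86, 126.10, 1755
2, 88, 127.90, 1710
3, 74, 120.30, 2085"""
print(hot_gpus(parse_gpu_csv(sample)))
```

On a real system, replace `parse_gpu_csv(sample)` with `read_gpus()` and call it in a loop. Logging the SM clock next to the temperature makes it easier to spot throttling, since a falling clock at high temperature is the pattern to look for.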

What is the best way to test the performance of my LLM model?

Benchmarks like the Stanford Question Answering Dataset (SQuAD) or the GLUE benchmark test the quality of your LLM's answers. To test speed, measure generation throughput in tokens/second, as in the benchmark table in this article.
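For raw speed rather than answer quality, a simple approach is to time a generation call and divide the number of tokens produced by the elapsed time. The sketch below shows the idea; `generate` is a hypothetical callable that you would wrap around your own inference library, and `fake_generate` is only a stand-in so the example runs without a GPU.

```python
import time


def tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return generated tokens per second.

    `generate` is a hypothetical callable that returns the number of tokens
    it actually produced; wrap your own inference library to match it.
    """
    start = time.perf_counter()
    produced = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(prompt, max_tokens):
    """Stand-in for a real model so the sketch runs without a GPU."""
    time.sleep(0.05)
    return max_tokens


rate = tokens_per_second(fake_generate, "Hello", max_tokens=32)
print(f"{rate:.1f} tokens/second")
```

Note that this timing includes prompt processing as well as generation, so short prompts and longer outputs give a figure closer to pure generation speed. Repeating the measurement several times and averaging reduces noise.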

What are some tips for optimizing LLM performance on my NVIDIA RTX 4000 Ada 20GB x4?

- Use proper quantization techniques: Experiment with Q4KM quantization and unquantized F16 weights to find the best balance between performance and accuracy.

- Optimize batch size: Experiment with different batch sizes to find the optimal number of tokens to process simultaneously.

- Leverage multiple GPUs: If you have multiple NVIDIA RTX 4000 Ada 20GB cards, you can significantly improve performance by using them in parallel.

- Utilize software optimization techniques: Explore the optimization tools available within your LLM framework.

- Regularly monitor GPU temperatures: Monitor your GPU temperature using software like GPU-Z or MSI Afterburner to ensure that it's not overheating.

Keywords

NVIDIA RTX 4000 Ada 20GB x4, LLM cooling, GPU cooling, AI workload, GPU temperature, LLM performance optimization, Llama3 performance, benchmark, quantization, batch size, stock cooler, aftermarket air cooler, liquid cooler, GPU-Z, MSI Afterburner.