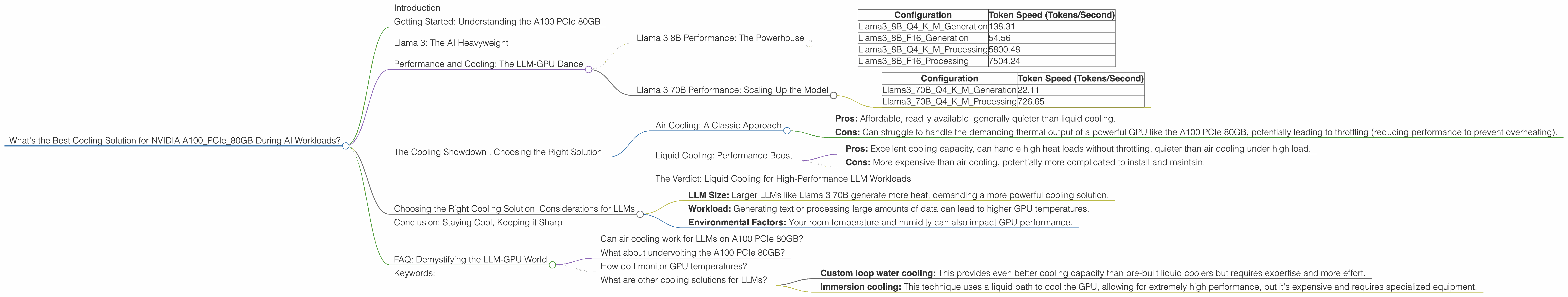

What's the Best Cooling Solution for NVIDIA A100 PCIe 80GB During AI Workloads?

Introduction

The world of large language models (LLMs) is exploding, and with it the need for powerful hardware to run these AI behemoths. One of the top contenders in the GPU arena is the NVIDIA A100, especially the PCIe 80GB version. But as you push its limits with demanding LLMs, the question of cooling becomes crucial. You don't want your AI engine to overheat and lose performance, right?

This article dives deep into the cooling requirements of the A100 PCIe 80GB when dealing with AI workloads, specifically those involving popular LLMs like Llama 3. We'll dissect the performance differences between different configurations and explore the best cooling solutions to keep your LLM running smoothly.

Getting Started: Understanding the A100 PCIe 80GB

The A100 PCIe 80GB is a beast of a GPU, designed for demanding AI workloads like training and inference. It is built on NVIDIA's Ampere architecture and carries 80GB of HBM2e memory, offering massive parallel processing power. But with this power comes heat, and that's where cooling solutions come into play.

Llama 3: The AI Heavyweight

Llama 3 is a family of powerful, openly available LLMs released by Meta in April 2024. It launched in 8B and 70B sizes, with the 8B model a popular choice for local inference. You can think of these numbers (the parameter count, in billions) as the "brain size" of the model, with larger models capable of understanding more complex language and generating richer responses.

Performance and Cooling: The LLM-GPU Dance

Let's get into the nitty-gritty. The A100 PCIe 80GB excels at processing LLMs like Llama 3. Here's a breakdown of how the performance changes with different LLM sizes and configurations:

Llama 3 8B Performance: The Powerhouse

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M, generation | 138.31 |
| Llama 3 8B F16, generation | 54.56 |
| Llama 3 8B Q4_K_M, processing | 5800.48 |
| Llama 3 8B F16, processing | 7504.24 |

- Q4_K_M: This configuration uses 4-bit quantization, a technique that compresses the model weights to reduce memory footprint and speed up generation. It's like shrinking the brain size without sacrificing too much intelligence.

- F16: This uses a 16-bit floating-point format, which is more precise than Q4_K_M but needs roughly three times the memory. In the numbers above it is slower for generation yet faster for prompt processing.

What are we seeing here? The A100 PCIe 80GB shines when running the Llama 3 8B model. Q4_K_M delivers the highest generation speed (138.31 vs. 54.56 tokens/second, about 2.5x faster than F16), while F16 is ahead for prompt processing (7504.24 vs. 5800.48 tokens/second).
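To make the comparison concrete, here is a small sketch that computes the speed ratios from the table. The dictionary layout and the function name `speed_ratio` are our own choices for illustration; only the four numbers come from the benchmark data above.

```python
# Token speeds (tokens/second) copied from the Llama 3 8B table above.
speeds = {
    ("Q4_K_M", "generation"): 138.31,
    ("F16", "generation"): 54.56,
    ("Q4_K_M", "processing"): 5800.48,
    ("F16", "processing"): 7504.24,
}


def speed_ratio(task: str, fast: str, slow: str) -> float:
    """Return how many times faster `fast` is than `slow` for the given task."""
    return speeds[(fast, task)] / speeds[(slow, task)]


gen_ratio = speed_ratio("generation", "Q4_K_M", "F16")
proc_ratio = speed_ratio("processing", "F16", "Q4_K_M")

print(f"Generation: Q4_K_M is {gen_ratio:.2f}x faster than F16")
print(f"Processing: F16 is {proc_ratio:.2f}x faster than Q4_K_M")
```

The two ratios point in opposite directions, which is why the "best" configuration depends on whether your workload is dominated by long prompts or long outputs.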

Llama 3 70B Performance: Scaling Up the Model

Unfortunately, we don't have performance data for the Llama 3 70B model running on the A100 PCIe 80GB in the F16 configuration. The most likely reason is memory: 70 billion parameters at 2 bytes each come to roughly 140 GB of weights, which exceeds the card's 80 GB, so the F16 model does not fit on a single card. The quantized version is a different story.
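A quick back-of-the-envelope check shows why memory matters here. This is a rough sketch, not a precise sizing tool: it counts only the weights (no KV cache or activations), uses nominal parameter counts, and assumes an average of about 4.8 bits per weight for the 4-bit quantized format, which is our approximation rather than a figure from the benchmark.

```python
A100_MEMORY_GB = 80  # memory capacity of the card discussed in this article


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


# Nominal parameter counts; 4.8 bits/weight for Q4_K_M is an assumed average.
cases = {
    "Llama 3 8B F16": (8e9, 16),
    "Llama 3 8B Q4_K_M": (8e9, 4.8),
    "Llama 3 70B F16": (70e9, 16),
    "Llama 3 70B Q4_K_M": (70e9, 4.8),
}

for name, (params, bits) in cases.items():
    size = weights_gb(params, bits)
    verdict = "fits" if size < A100_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {A100_MEMORY_GB} GB")
```

Under these assumptions, only the 70B model at 16-bit precision exceeds the card's memory, which would explain the missing benchmark row.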

Here's what we do know:

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M, generation | 22.11 |
| Llama 3 70B Q4_K_M, processing | 726.65 |

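What can we learn? The A100 PCIe 80GB can still handle the quantized 70B model, but token speed is far lower than with the 8B model: generation drops from 138.31 to 22.11 tokens/second (about 6.3x slower), and processing drops from 5800.48 to 726.65 tokens/second (about 8x slower).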
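What do these speeds mean for a single request? The sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. It is a simplification: it assumes the benchmark speeds hold at these lengths and ignores batching and startup overhead. The 1000-token prompt and 500-token reply are arbitrary example sizes.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimate seconds per request: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Speeds (tokens/second) for the quantized 4-bit configurations in the tables above.
t_8b = response_time_s(1000, 500, processing_tps=5800.48, generation_tps=138.31)
t_70b = response_time_s(1000, 500, processing_tps=726.65, generation_tps=22.11)

print(f"Llama 3 8B:  ~{t_8b:.1f} s per request")
print(f"Llama 3 70B: ~{t_70b:.1f} s per request")
```

Longer requests also mean the GPU stays under load for longer, which is where cooling starts to matter.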

The Cooling Showdown: Choosing the Right Solution

Air Cooling: A Classic Approach

Air cooling is the most common and often the most cost-effective solution. It relies on fans to draw cool air over the GPU, dissipating heat.

- Pros: Affordable, readily available, simple to install and maintain.

- Cons: Can struggle to handle the demanding thermal output of a powerful GPU like the A100 PCIe 80GB, potentially leading to throttling (reducing performance to prevent overheating).
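To get a feel for how much air that takes, here is a rough estimate based on the standard heat-transport relation: airflow = power / (air density × specific heat × temperature rise). Treat it as a sketch under stated assumptions. The 300 W board power is our assumption (check the spec sheet for your card), and the air properties are typical room-condition values.

```python
AIR_DENSITY = 1.2           # kg/m^3, assumed (near sea level, about 20 °C)
AIR_SPECIFIC_HEAT = 1005.0  # J/(kg*K), assumed
M3_PER_S_TO_CFM = 2118.88   # cubic metres per second to cubic feet per minute


def required_airflow_cfm(heat_w: float, air_temp_rise_c: float) -> float:
    """Airflow needed to carry `heat_w` watts away with the given air temperature rise."""
    m3_per_s = heat_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * air_temp_rise_c)
    return m3_per_s * M3_PER_S_TO_CFM


GPU_POWER_W = 300  # assumed board power, not a measured value

for rise_c in (5, 10, 15):
    cfm = required_airflow_cfm(GPU_POWER_W, rise_c)
    print(f"{rise_c} °C air temperature rise: ~{cfm:.0f} CFM through the card")
```

The smaller the temperature rise you allow across the card, the more air you have to move, which is why warm rooms and restricted airflow push air cooling toward throttling.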

Liquid Cooling: Performance Boost

Liquid cooling uses a closed loop system of water or other fluids to transfer heat away from the GPU. This solution can significantly improve cooling capacity compared to air cooling.

- Pros: Excellent cooling capacity, can handle high heat loads without throttling, quieter than air cooling under high load.

- Cons: More expensive than air cooling, potentially more complicated to install and maintain.

The Verdict: Liquid Cooling for High-Performance LLM Workloads

For running LLMs on the A100 PCIe 80GB, liquid cooling is the clear winner. It helps maintain optimal performance, especially for demanding models like Llama 3 70B, by preventing throttling and ensuring smooth operation.

Choosing the Right Cooling Solution: Considerations for LLMs

When deciding on a cooling solution, consider the following factors:

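- LLM Size: Larger LLMs like Llama 3 70B keep the GPU under sustained load for longer per response, so the cooling solution has to cope with continuous heat rather than short bursts.

- Workload: Long text generation runs or processing large amounts of data hold the GPU at high utilization, which leads to higher GPU temperatures.

- Environmental Factors: Your room (inlet air) temperature and, to a lesser extent, humidity affect how well any cooler sheds heat, and therefore GPU temperatures and performance.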

Conclusion: Staying Cool, Keeping it Sharp

Running LLMs on the A100 PCIe 80GB is an exciting adventure. But to keep your AI engine humming along without overheating, consider liquid cooling. It's the key to unlocking the full potential of your hardware, allowing you to tackle even the most demanding LLMs with confidence.

FAQ: Demystifying the LLM-GPU World

Can air cooling work for LLMs on A100 PCIe 80GB?

Yes, air cooling can work. Keep in mind that the A100 PCIe is a passively cooled card with no fan of its own, so it depends on strong, directed chassis airflow (as in a server) rather than an aftermarket air cooler. If you're running smaller LLMs or are comfortable with potential performance reduction, air cooling can be a practical option.

What about undervolting the A100 PCIe 80GB?

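Data-center GPUs like the A100 generally don't expose the voltage controls found on consumer cards, so the practical equivalent is lowering the power limit with nvidia-smi. This reduces power consumption and heat output, but it can also reduce performance. Experiment in small steps and monitor carefully to avoid unexpected performance issues.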
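A software-only way to cut heat is to cap board power through the `-pl` (power limit) option of `nvidia-smi`. The sketch below builds and runs that command. The helper names are hypothetical, the 250 W figure in the usage note is only an example, and setting a limit typically requires administrator rights and a value within the range the driver allows for your card.

```python
import subprocess


def power_limit_command(gpu_index: int, watts: int) -> list[str]:
    """Build the nvidia-smi command that sets a power limit on one GPU."""
    if watts <= 0:
        raise ValueError("power limit must be a positive number of watts")
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]


def apply_power_limit(gpu_index: int, watts: int) -> None:
    """Run the command; raises CalledProcessError if nvidia-smi rejects it."""
    subprocess.run(power_limit_command(gpu_index, watts), check=True)


# Show the command without executing it.
print(" ".join(power_limit_command(0, 250)))
```

Calling `apply_power_limit(0, 250)` would apply the cap to GPU 0. Re-run your benchmark afterwards to see how much token speed you trade for lower temperatures.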

How do I monitor GPU temperatures?

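Consumer tools like NVIDIA's GeForce Experience or MSI Afterburner are aimed at GeForce cards. For an A100, use nvidia-smi (included with the NVIDIA driver) or NVIDIA DCGM, which report GPU temperature, power draw, and whether the clocks are being throttled.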
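For scripted monitoring, one option is the query mode of `nvidia-smi`. The sketch below reads temperature, power draw, and utilization for each GPU and flags hot readings. The names `GpuSample`, `parse_sample`, `read_samples`, and `is_too_hot` are our own, and the 85 °C warning threshold is a placeholder, not a limit taken from NVIDIA's documentation. It also assumes every queried field returns a number on your system.

```python
import subprocess
from typing import NamedTuple

QUERY_FIELDS = "temperature.gpu,power.draw,utilization.gpu"
WARN_TEMP_C = 85  # hypothetical warning threshold; adjust for your hardware


class GpuSample(NamedTuple):
    temperature_c: float
    power_w: float
    utilization_pct: float


def parse_sample(line: str) -> GpuSample:
    """Parse one CSV line such as '65, 250.31, 98' into a GpuSample."""
    temp, power, util = (float(field.strip()) for field in line.split(","))
    return GpuSample(temp, power, util)


def read_samples() -> list[GpuSample]:
    """Query nvidia-smi once and return one sample per GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return [parse_sample(line) for line in result.stdout.splitlines() if line.strip()]


def is_too_hot(sample: GpuSample, limit_c: float = WARN_TEMP_C) -> bool:
    """Return True when the GPU temperature is at or above the warning limit."""
    return sample.temperature_c >= limit_c
```

Calling `read_samples()` in a loop during a long generation run, and logging each sample, makes it easy to see whether temperatures level off or keep climbing.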

What are other cooling solutions for LLMs?

Other cooling options include:

- Custom loop water cooling: This provides even better cooling capacity than pre-built liquid coolers but requires expertise and more effort.

- Immersion cooling: This technique uses a liquid bath to cool the GPU, allowing for extremely high performance, but it's expensive and requires specialized equipment.

Keywords:

A100 PCIe 80GB, NVIDIA, LLM, Llama 3, Cooling, Performance, Token Speed, Quantization, F16, Air Cooling, Liquid Cooling, Undervolting, GPU Temperatures, GPU Monitoring, Custom Loop Water Cooling, Immersion Cooling.