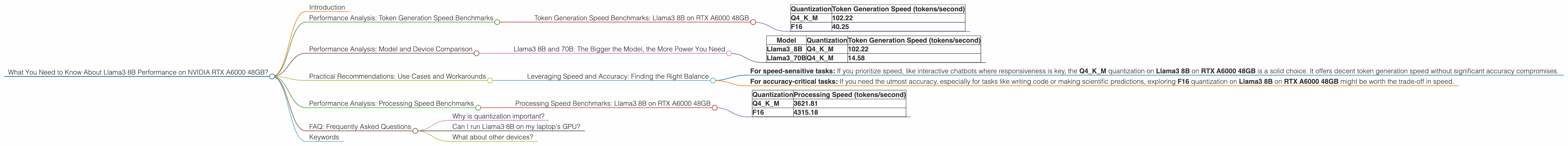

What You Need to Know About Llama3 8B Performance on NVIDIA RTX A6000 48GB?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI models are revolutionizing how we interact with technology, from generating creative content to answering complex questions. But with great power comes… well, you know the rest. One of the biggest challenges with LLMs is their computational demands, requiring robust hardware to handle their processing tasks.

Today, we're taking a deep dive into the performance of the Llama3 8B model on the NVIDIA RTX A6000 48GB graphics card, a powerhouse in the realm of AI hardware. We'll analyze how this combination performs, dissect the impact of different quantization levels, and explore practical use cases for your projects. Buckle up, it's going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is crucial for a smooth LLM experience, especially when responses are long. Think of tokens as the "building blocks" of text (words or pieces of words): the more of them the model produces per second, the quicker the response.

Token Generation Speed Benchmarks: Llama3 8B on RTX A6000 48GB

Let's start with the basics:

| Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 102.22 |
| F16 | 40.25 |

Data Source: Performance of llama.cpp on various devices by ggerganov

What does this tell us? On the RTX A6000 48GB, Llama3 8B generates about 102 tokens per second with Q4_K_M quantization, roughly 2.5 times the 40 tokens per second it reaches in F16. Q4_K_M stores the weights at roughly 4 to 5 bits each, which makes the model smaller and faster but can cost some accuracy. F16 is not really a quantization level: it keeps the weights as 16-bit floating-point values, so it generates tokens more slowly but stays closest to the original model.

Analogy: Think of it like this: Imagine driving a car. The higher your speed, the faster you reach your destination. The same applies to LLMs: The higher the token generation speed, the faster you can process your data and get your desired output.
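To make the gap concrete, here is a minimal sketch that turns the two speeds from the table into a speedup factor and a wait time. The 500-token response length is an arbitrary illustration, and the function names are made up for this example; it also assumes the benchmark rate stays constant over the whole response.

```python
# Token generation speeds from the table above (tokens/second).
# "Q4_K_M" is the 4-bit entry, "F16" the 16-bit one.
GENERATION_TPS = {"Q4_K_M": 102.22, "F16": 40.25}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


def speedup(fast: str, slow: str) -> float:
    """How many times faster one format generates tokens than another."""
    return GENERATION_TPS[fast] / GENERATION_TPS[slow]


if __name__ == "__main__":
    for name, tps in GENERATION_TPS.items():
        print(f"{name}: {seconds_to_generate(500, tps):.1f} s for 500 tokens")
    print(f"Speedup: {speedup('Q4_K_M', 'F16'):.2f}x")
```

With these numbers, a 500-token answer takes about 4.9 seconds in the 4-bit format and about 12.4 seconds in F16, a speedup of roughly 2.54x.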

Performance Analysis: Model and Device Comparison

Looking at one model on one device is useful, but it also helps to see how it compares with a larger model on the same card. The available data has no F16 result for Llama3 70B on the RTX A6000 48GB (at 16 bits per weight, a 70B model needs roughly 140 GB for the weights alone, far more than the card's 48 GB), so the comparison below uses Q4_K_M for both models.

Llama3 8B and 70B: The Bigger the Model, the More Power You Need

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3_8B | Q4_K_M | 102.22 |
| Llama3_70B | Q4_K_M | 14.58 |

Data Source: Performance of llama.cpp on various devices by ggerganov

Key Takeaways:

- With Q4_K_M quantization, Llama3 8B generates tokens about 7 times faster than Llama3 70B (102.22 vs. 14.58 tokens/second).

- This makes sense! Every generated token requires a pass through all of the model's weights, so a model with roughly nine times as many parameters has far more data to read and far more arithmetic to do per token.
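As a quick sanity check on these takeaways, the sketch below compares how much slower the 70B model is with how much larger it is. The parameter counts are the nominal 8 and 70 billion read off the model names, which is an approximation, and the helper names are invented for this example.

```python
# Figures from the table above (4-bit quantization, RTX A6000 48GB), in tokens/second.
GENERATION_TPS = {"Llama3_8B": 102.22, "Llama3_70B": 14.58}

# Nominal parameter counts in billions, read off the model names (an approximation).
NOMINAL_PARAMS_B = {"Llama3_8B": 8.0, "Llama3_70B": 70.0}


def slowdown(small: str, large: str) -> float:
    """How many times slower the larger model generates tokens."""
    return GENERATION_TPS[small] / GENERATION_TPS[large]


def size_ratio(small: str, large: str) -> float:
    """How many times more parameters the larger model has."""
    return NOMINAL_PARAMS_B[large] / NOMINAL_PARAMS_B[small]


if __name__ == "__main__":
    print(f"70B is {size_ratio('Llama3_8B', 'Llama3_70B'):.2f}x larger")
    print(f"70B is {slowdown('Llama3_8B', 'Llama3_70B'):.2f}x slower")
```

This gives a size ratio of 8.75 against a slowdown of about 7.01, so in this data the generation speed falls a little less than proportionally to model size.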

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance landscape, let's delve into some practical recommendations for using Llama3 8B on the RTX A6000 48GB.

Leveraging Speed and Accuracy: Finding the Right Balance

- For speed-sensitive tasks: If responsiveness is key, as in interactive chatbots, Q4_K_M quantization on Llama3 8B on RTX A6000 48GB is a solid choice. At about 102 tokens/second it generates roughly 2.5 times faster than F16, usually with only a small loss in accuracy.

- For accuracy-critical tasks: If you need the utmost accuracy, especially for tasks like writing code or making scientific predictions, running Llama3 8B in F16 on RTX A6000 48GB might be worth the trade-off in speed.

Performance Analysis: Processing Speed Benchmarks

Beyond token generation, we also need to look at processing speed: how fast the model reads your prompt before it starts answering. Think of it as the time the model needs to "understand" your input. The whole prompt can be handled in parallel, which is why these numbers are so much higher than the generation speeds above.

Processing Speed Benchmarks: Llama3 8B on RTX A6000 48GB

| Quantization | Processing Speed (tokens/second) |
|---|---|
| Q4_K_M | 3621.81 |
| F16 | 4315.18 |

Data Source: GPU Benchmarks on LLM Inference by XiongjieDai

Interesting Observation: F16 achieves a higher processing speed than Q4_K_M, the reverse of the generation results. This is a bit unexpected at first glance!

Explanation: Quantization is a form of "compression" for the model's weights. During token generation the bottleneck is usually reading the weights from memory, so the smaller Q4_K_M model wins. Prompt processing works on many tokens at once and is typically limited by arithmetic throughput instead; there, the extra work of unpacking 4-bit weights likely costs Q4_K_M some speed, while F16 weights can be fed to the GPU directly.
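To see which effect dominates in practice, the sketch below combines the processing and generation speeds from the two tables into a rough end-to-end response time. The prompt and response lengths are arbitrary illustrations, the class and function names are made up for this example, and the model assumes the benchmark rates hold at any length, which is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # prompt processing speed, tokens/second
    generation_tps: float  # token generation speed, tokens/second


# Llama3 8B on RTX A6000 48GB, taken from the two tables in this article.
A6000_LLAMA3_8B = {
    "Q4_K_M": Throughput(prompt_tps=3621.81, generation_tps=102.22),
    "F16": Throughput(prompt_tps=4315.18, generation_tps=40.25),
}


def response_latency(t: Throughput, prompt_tokens: int, output_tokens: int) -> float:
    """Seconds to read the prompt plus seconds to generate the answer."""
    return prompt_tokens / t.prompt_tps + output_tokens / t.generation_tps


if __name__ == "__main__":
    for name, t in A6000_LLAMA3_8B.items():
        total = response_latency(t, prompt_tokens=2000, output_tokens=300)
        print(f"{name}: {total:.2f} s")
```

For a 2000-token prompt and a 300-token answer this comes to about 3.49 seconds for the 4-bit format and about 7.92 seconds for F16. F16 saves under a tenth of a second on the prompt but loses several seconds on generation, so its processing advantage only pays off when prompts are very long and answers are very short.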

FAQ: Frequently Asked Questions

Why is quantization important?

Quantization is like a diet for LLMs. It helps reduce the model's size (think: fewer calories!), which makes it faster to load and run on devices. It's great for shrinking your model's footprint without sacrificing too much accuracy.
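The size reduction is easy to estimate with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below assumes a nominal 8 billion parameters and an average of 4.5 bits per weight for the 4-bit format; both are illustrative assumptions, and real model files will differ somewhat because they mix formats and carry extra metadata.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NOMINAL_8B_PARAMS = 8e9      # nominal count from the model name (assumption)
ASSUMED_4BIT_AVERAGE = 4.5   # assumed average bits per weight, for illustration only

if __name__ == "__main__":
    print(f"F16:   {weight_memory_gb(NOMINAL_8B_PARAMS, 16):.1f} GB")
    print(f"4-bit: {weight_memory_gb(NOMINAL_8B_PARAMS, ASSUMED_4BIT_AVERAGE):.1f} GB")
```

Under these assumptions the weights shrink from about 16 GB to about 4.5 GB. The estimate covers weights only; the context cache and runtime buffers need additional memory on top.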

Can I run Llama3 8B on my laptop's GPU?

It depends on your laptop's GPU, and above all on how much video memory it has. The RTX A6000 has 48 GB of VRAM plus Tensor Cores for accelerating AI workloads, making it ideal for LLMs. A laptop with a dedicated GPU can often run Llama3 8B in a quantized format such as Q4_K_M if the model fits in its memory, but you'll likely see lower performance than on a powerful desktop or workstation GPU.

What about other devices?

This article focused specifically on the RTX A6000 48GB. You can find benchmarks for other devices on the resources linked in this article.

Keywords

Llama3 8B, RTX A6000 48GB, LLM, Large Language Model, token generation speed, processing speed, quantization, Q4_K_M, F16, GPU, NVIDIA, performance benchmarks, AI, deep learning, natural language processing, NLP