What You Need to Know About Llama3 8B Performance on NVIDIA RTX 6000 Ada 48GB

Introduction

The world of large language models (LLMs) is booming, and with it comes the need for powerful hardware to run these complex models. That is especially true for local use: when you're not relying on the cloud, you need a beast of a machine to handle the heavy lifting. Today, we're diving deep into the performance of Meta's Llama3 8B model on the NVIDIA RTX 6000 Ada 48GB GPU.

This article is your guide to understanding the key benchmarks, comparing different model configurations, and exploring the practical implications for developers and enthusiasts looking to run LLMs locally. We'll also delve into the nitty-gritty details of quantization and its impact on performance.

Buckle up, because we're about to embark on a journey into the exciting world of LLMs and hardware performance.

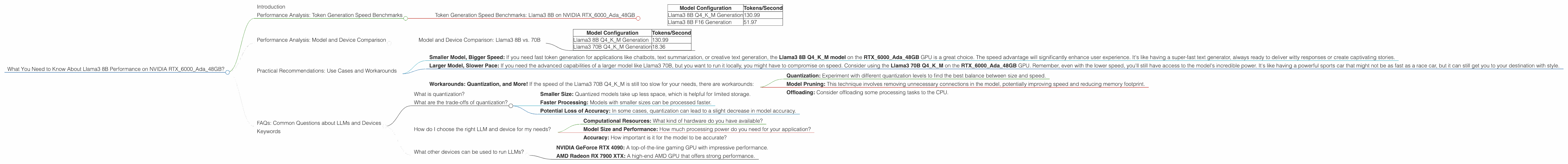

Performance Analysis: Token Generation Speed Benchmarks

The speed at which an LLM can generate tokens (words, characters, or sub-words) is a critical measure of its performance. Let's see how the Llama3 8B model fares on the RTX 6000 Ada 48GB GPU:

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA RTX 6000 Ada 48GB

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 130.99 |
| Llama3 8B F16 Generation | 51.97 |

What's the Deal with Q4_K_M and F16?

- Q4_K_M: A quantization format in which most model weights are stored at roughly 4 bits each instead of 16. This cuts the memory footprint to roughly a third of the F16 size, which speeds up token generation and lets larger models fit on a given GPU, at the cost of a small loss of precision. Think of it as squeezing the most juice out of your GPU!

- F16: The model weights are stored in 16-bit floating-point precision. This is a common format that preserves full weight precision, but at about 2 bytes per parameter it needs far more memory and memory bandwidth than a 4-bit quantized model. It's like a full-resolution image versus a compressed version: the quantized model loses a little detail but gains efficiency.

Key Observations:

- The Llama3 8B Q4_K_M model generates tokens about 2.5 times faster (130.99 tokens/second) than the F16 version (51.97 tokens/second). The most likely reason is that generating each token requires reading the model weights from GPU memory, so a model with a smaller memory footprint has less data to move per token. Think of it like this: Imagine you're driving a car. If your car is lighter (quantized model), it can go faster with the same engine power.
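As a quick sanity check on that gap, the sketch below computes the speedup from the two table values and compares the F16 figure with a rough memory-bandwidth ceiling. The bandwidth (960 GB/s) and the 16 GB F16 weight size are assumptions brought in for illustration, not values from the benchmark, and the ceiling formula is a simplification that ignores the KV cache and compute time.

```python
# Throughput figures taken from the benchmark table above (tokens/second).
Q4_K_M_TPS = 130.99
F16_TPS = 51.97

# Assumptions (not from the benchmark): ~960 GB/s GPU memory bandwidth and
# ~16 GB of F16 weights for an 8B-parameter model (2 bytes per parameter).
ASSUMED_BANDWIDTH_GB_S = 960.0
ASSUMED_F16_WEIGHTS_GB = 16.0


def speedup(fast_tps: float, slow_tps: float) -> float:
    """Return how many times faster the first configuration generates tokens."""
    return fast_tps / slow_tps


def bandwidth_ceiling_tps(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Rough upper bound on tokens/second if each token reads all weights once."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    print(f"Q4_K_M vs F16 speedup: {speedup(Q4_K_M_TPS, F16_TPS):.2f}x")
    ceiling = bandwidth_ceiling_tps(ASSUMED_BANDWIDTH_GB_S, ASSUMED_F16_WEIGHTS_GB)
    print(f"F16 bandwidth ceiling: {ceiling:.0f} tokens/s (measured: {F16_TPS})")
```

Under these assumptions the ceiling works out to 60 tokens/second, close to the measured 51.97, which is consistent with generation being limited by memory traffic rather than raw compute.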

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs. 70B

We've seen how Llama3 8B performs on the RTX 6000 Ada 48GB GPU. But how does it stack up against larger models like Llama3 70B?

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 130.99 |
| Llama3 70B Q4_K_M Generation | 18.36 |

What We Can See:

The Llama3 70B Q4_K_M model generates tokens about seven times slower (18.36 tokens/second) than the Llama3 8B Q4_K_M (130.99 tokens/second). Both use the same quantization, so the gap comes from model size: with roughly nine times as many parameters, the 70B model has far more weights to read and compute for every token. Think of it like this: The Llama3 70B is like trying to carry 100 backpacks at once on a hike, whereas the Llama3 8B is like carrying only a few backpacks, so it's much faster.
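To make the difference concrete, here is a small sketch that turns the two throughput figures into waiting time for a single reply. The 300-token reply length is a hypothetical value chosen for illustration, and prompt processing time is ignored.

```python
# Throughput figures taken from the comparison table above (tokens/second).
LLAMA3_8B_Q4_K_M_TPS = 130.99
LLAMA3_70B_Q4_K_M_TPS = 18.36


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate, ignoring prompt processing."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    reply_tokens = 300  # hypothetical reply length
    for name, tps in [
        ("Llama3 8B Q4_K_M", LLAMA3_8B_Q4_K_M_TPS),
        ("Llama3 70B Q4_K_M", LLAMA3_70B_Q4_K_M_TPS),
    ]:
        print(f"{name}: {seconds_to_generate(reply_tokens, tps):.1f} s")
```

For a reply of that length, this comes to roughly 2.3 seconds on the 8B model versus about 16.3 seconds on the 70B model.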

We do not have data for the Llama3 70B F16 configuration on this device. The most likely reason is memory: at 2 bytes per parameter, the F16 weights of a 70B model take roughly 140 GB, far more than the GPU's 48 GB.
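A back-of-the-envelope check of which configurations fit in 48 GB can be sketched as follows. The bits-per-weight value for Q4_K_M (about 4.85) and the 2 GB overhead for the KV cache and runtime buffers are assumptions, and the parameter counts are rounded to 8 and 70 billion.

```python
GPU_VRAM_GB = 48.0  # from the card's name: RTX 6000 Ada 48GB

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8.0


def fits(n_params_billion: float, bits_per_weight: float,
         vram_gb: float = GPU_VRAM_GB, overhead_gb: float = 2.0) -> bool:
    """True if weights plus an assumed fixed overhead fit in VRAM."""
    return weights_gb(n_params_billion, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weights_gb(params, bits)
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"Llama3 {params}B {fmt}: ~{size:.1f} GB, {verdict}")
```

With these rough numbers, only the 70B F16 configuration fails to fit, which matches the gap in the benchmark data.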

Practical Recommendations: Use Cases and Workarounds

Understanding the performance characteristics of Llama3 8B on the RTX 6000 Ada 48GB GPU can help you make informed decisions about your LLM endeavors.

Here are some helpful insights:

Smaller Model, Bigger Speed: If you need fast token generation for applications like chatbots, text summarization, or creative text generation, the Llama3 8B Q4_K_M model on the RTX 6000 Ada 48GB GPU is a great choice. At about 131 tokens/second, responses arrive far faster than a person can read them, which noticeably improves the user experience. It's like having a super-fast text generator, always ready to deliver witty responses or create captivating stories.

Larger Model, Slower Pace: If you need the advanced capabilities of a larger model like Llama3 70B, but you want to run it locally, you will have to compromise on speed. Consider using the Llama3 70B Q4_K_M on the RTX 6000 Ada 48GB GPU. At 18.36 tokens/second it is much slower than the 8B model, but you still have access to the larger model's capabilities. It's like having a powerful sports car that might not be as fast as a race car, but it can still get you to your destination with style.

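Workarounds: Quantization, and More! If the Llama3 70B Q4_K_M is still too slow or too large for your needs, there are workarounds:

- Quantization: Experiment with different quantization levels to find the best balance between size, speed, and output quality. Lower-bit formats are smaller and usually faster, but they lose more accuracy.

- Model Pruning: This technique removes weights or connections that contribute little to the output, potentially improving speed and reducing memory footprint.

- Offloading: If a model does not fit in the GPU's 48 GB, some layers can be offloaded to the CPU and system RAM. This lets larger models run at all, but it lowers generation speed rather than raising it.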
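For the offloading option, a rough way to estimate how many layers can stay on the GPU is sketched below. It assumes all layers are the same size and ignores embeddings; the 80-layer count for Llama3 70B, the 70 GB size for a hypothetical 8-bit variant, and the 44 GB VRAM budget (leaving headroom for the KV cache) are illustrative assumptions, not benchmark values.

```python
import math


def layers_on_gpu(model_gb: float, n_layers: int, vram_budget_gb: float) -> int:
    """Estimate how many layers fit in the VRAM budget, assuming equal-sized layers."""
    per_layer_gb = model_gb / n_layers
    return min(n_layers, math.floor(vram_budget_gb / per_layer_gb))


if __name__ == "__main__":
    n_layers = 80          # assumed layer count for Llama3 70B
    vram_budget_gb = 44.0  # assumed budget out of 48 GB, leaving headroom

    # Hypothetical 8-bit 70B model at ~70 GB: only part of it fits on the GPU.
    print(layers_on_gpu(70.0, n_layers, vram_budget_gb))

    # ~42.4 GB 4-bit model: every layer fits, so nothing needs offloading.
    print(layers_on_gpu(42.4, n_layers, vram_budget_gb))
```

The remaining layers would run on the CPU. In llama.cpp, for example, the number of GPU-resident layers is set with the `--n-gpu-layers` option.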

FAQs: Common Questions about LLMs and Devices

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights with fewer bits. It's like compressing a movie file to make it smaller.
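The idea can be illustrated with a toy example: a block of weights is mapped to 4-bit integer codes plus one shared scale, then reconstructed. This is a minimal sketch of simple symmetric quantization, not the actual Q4_K_M scheme, which is more elaborate; the function names and sample weights are made up for illustration.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integer codes sharing one scale (symmetric quantization)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes in the range [-8, 7]
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from the codes and the shared scale."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.31, 0.07, -0.02, 0.44, -0.29, 0.50]  # made-up values
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"largest round-trip error: {worst:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the round-trip error is bounded by half the scale. That small error is the accuracy cost of the smaller size.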

What are the trade-offs of quantization?

The trade-offs of quantization are:

- Smaller Size: Quantized models take up less space, which is helpful for limited storage.

- Faster Processing: A smaller model means less data to read from GPU memory for each token, so generation is usually faster, as the Q4_K_M versus F16 results above show.

- Potential Loss of Accuracy: In some cases, quantization can lead to a slight decrease in model accuracy.

How do I choose the right LLM and device for my needs?

The best combination of LLM and device depends on your specific requirements:

- Computational Resources: What kind of hardware do you have available?

- Model Size and Performance: How much processing power do you need for your application?

- Accuracy: How important is it for the model to be accurate?

What other devices can be used to run LLMs?

Besides the NVIDIA RTX 6000 Ada 48GB, there are several other powerful GPUs suitable for local LLM inference, such as:

- NVIDIA GeForce RTX 4090: A top-of-the-line gaming GPU with 24 GB of VRAM, enough for smaller models such as a quantized Llama3 8B.

- AMD Radeon RX 7900 XTX: A high-end AMD GPU, also with 24 GB of VRAM.

Keywords

Llama3, 8B, 70B, NVIDIA, RTX 6000 Ada 48GB, GPU, performance, token generation speed, benchmarks, quantization, Q4_K_M, F16, model comparison, use cases, workarounds, practical recommendations, FAQs, LLM, large language model, local inference, deep dive.