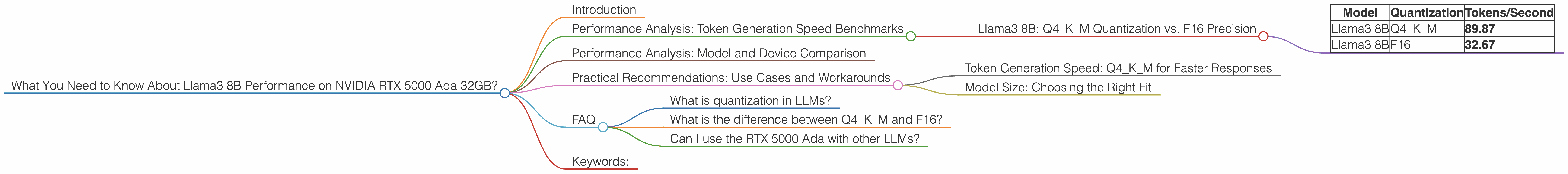

What You Need to Know About Llama3 8B Performance on the NVIDIA RTX 5000 Ada 32GB

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and rightfully so. These powerful AI models are transforming how we interact with information, automate tasks, and even create content. But running LLMs locally on your own hardware presents unique challenges. Performance is a critical factor, because it determines how fast and efficiently you can use these models. Today, we're diving deep into the performance of the Llama3 8B model on the NVIDIA RTX 5000 Ada 32GB, a professional workstation GPU that is a popular choice for AI development.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B: Q4_K_M Quantization vs. F16 Precision

Our first benchmark measures the token generation speed of Llama3 8B in two formats: Q4_K_M, the medium variant of the 4-bit "k-quant" quantization format used by llama.cpp and its GGUF model files, and F16, unquantized 16-bit half-precision floating point.

| Model | Format | Generation speed (tokens/s) |
|---|---|---|
| Llama3 8B | Q4_K_M | 89.87 |
| Llama3 8B | F16 | 32.67 |
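Tokens per second is the number of tokens generated divided by the time spent generating them. Benchmarks differ in details such as prompt length and whether prompt processing is counted, so treat the sketch below as one reasonable way to compute the metric around any streaming generator, not as the method behind the table. `measure_tokens_per_second` is a hypothetical helper, and the fake stream and fake clock stand in for a real model.

```python
import time
from typing import Callable, Iterable, Optional


def measure_tokens_per_second(
    token_stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Generation speed of a token stream, in tokens per second.

    Hypothetical helper: timing starts when the first token arrives, so the
    prompt-processing delay before it is excluded from the rate.
    """
    start: Optional[float] = None
    end: Optional[float] = None
    generated = 0  # tokens received after the first one
    for _ in token_stream:
        now = clock()
        if start is None:
            start = now  # first token: start the generation timer
        else:
            generated += 1
        end = now
    if start is None or end is None or generated == 0 or end <= start:
        raise ValueError("need at least two tokens spread over time")
    return generated / (end - start)


# Usage with a fake stream and a fake clock, so no model is needed.
fake_times = iter([0.0, 0.1, 0.2, 0.3, 0.4])
rate = measure_tokens_per_second(
    ["The", " quick", " brown", " fox", " jumps"], clock=lambda: next(fake_times)
)
print(f"{rate:.1f} tokens/s")  # → 10.0 tokens/s
```

Starting the timer at the first token separates steady-state generation speed from the one-off cost of processing the prompt, which otherwise skews results for short outputs.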

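As the table shows, Q4_K_M generates about 2.75 times as many tokens per second as F16 (89.87 vs. 32.67). The reason is memory bandwidth: when generating text one token at a time, the GPU must read essentially every model weight from VRAM for each new token, so speed is limited mainly by how many bytes it has to move. Q4_K_M stores each weight in roughly 4 to 5 bits instead of 16, cutting the data read per token by more than a factor of three.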
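The bandwidth argument can be made concrete with a back-of-the-envelope estimate: if each generated token reads every weight once, memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below assumes about 8.03 billion parameters for Llama3 8B, an average of about 4.9 bits per weight for Q4_K_M (an approximation; the exact figure depends on the tensor mix), and roughly 576 GB/s of memory bandwidth for the RTX 5000 Ada (our reading of NVIDIA's published specification). It ignores the KV cache and compute overhead, so it is a ceiling, not a prediction.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: billions of params x bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8


def max_tokens_per_second(bandwidth_gb_s: float, model_gb: float) -> float:
    """Bandwidth-bound ceiling: each generated token reads all weights once."""
    return bandwidth_gb_s / model_gb


# Assumed inputs: approximations, not measurements.
PARAMS_B = 8.03          # Llama3 8B parameter count, in billions
BANDWIDTH_GB_S = 576.0   # RTX 5000 Ada memory bandwidth, GB/s
BITS = {"Q4_K_M": 4.9, "F16": 16.0}         # average bits per weight
MEASURED = {"Q4_K_M": 89.87, "F16": 32.67}  # tokens/s from the benchmark table

for fmt, bits in BITS.items():
    size = weights_gb(PARAMS_B, bits)
    ceiling = max_tokens_per_second(BANDWIDTH_GB_S, size)
    share = MEASURED[fmt] / ceiling
    print(f"{fmt}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tokens/s, "
          f"measured {MEASURED[fmt]} tokens/s ({share:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 117 tokens/s for Q4_K_M and 36 tokens/s for F16. Both measured results sit below their ceilings, and the roughly 3.3x ratio between the ceilings is in the same range as the measured 2.75x gap; the shortfall reflects overheads this simple model ignores, such as reading the KV cache.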

Think of it like this: imagine you're building a LEGO tower, but every brick has to be carried over from a storage room before you can place it. With Q4_K_M, the bricks are smaller (fewer bits per weight), so each trip moves more of them and the tower goes up faster. With F16, the bricks are larger and more detailed, so more trips are needed and the building slows down.

Performance Analysis: Model and Device Comparison

Unfortunately, we don't have data for Llama3 70B on the RTX 5000 Ada 32GB, so we cannot compare the two model sizes directly. Larger LLMs generally need more memory and more memory bandwidth per generated token, so their performance depends heavily on the hardware and on optimizations such as quantization.

Practical Recommendations: Use Cases and Workarounds

Token Generation Speed: Q4_K_M for Faster Responses

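For faster token generation, Q4_K_M is the clear winner on the RTX 5000 Ada 32GB, delivering about 2.75 times the throughput of F16. That makes it well suited to applications that prioritize responsiveness, such as chatbots and other real-time text generation. The trade-off is a usually small loss of output quality from quantization; F16 remains the option when you need output closest to the original weights.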

Model Size: Choosing the Right Fit

Data for Llama3 70B on the RTX 5000 Ada 32GB is unavailable, but its performance would almost certainly be far lower than the 8B model's: a 70B model's weights do not fit entirely in 32 GB of VRAM even at 4-bit, so part of the model would have to run from slower system memory. Weigh the greater depth and capability of a larger model against the constraints of your device. For demanding tasks such as complex summarization, translation, or code generation, you may need more powerful hardware, or you can accept some loss in output quality and stay with a smaller model.
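A quick way to see why 70B is a different proposition on this card is to compare approximate weight sizes with its 32 GB of VRAM. The sketch below assumes about 8.03 billion parameters for Llama3 8B and about 70.6 billion for Llama3 70B, an average of roughly 4.9 bits per weight for Q4_K_M and 16 for F16, and a hypothetical 2 GB reserve for the KV cache and runtime buffers; real overhead depends on context length and the inference engine.

```python
VRAM_GB = 32.0
RESERVE_GB = 2.0  # hypothetical allowance for KV cache, activations and buffers


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: billions of params x bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB, reserve_gb: float = RESERVE_GB) -> bool:
    """True if the weights plus the reserve fit entirely in GPU memory."""
    return weights_gb(params_billion, bits_per_weight) + reserve_gb <= vram_gb


# Approximate parameter counts (billions) and average bits per weight.
MODELS = {"Llama3 8B": 8.03, "Llama3 70B": 70.6}
FORMATS = {"Q4_K_M": 4.9, "F16": 16.0}

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does NOT fit"
        print(f"{model} {fmt}: ~{size:.0f} GB of weights -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both Llama3 8B variants fit with room to spare, while Llama3 70B needs about 43 GB even at Q4_K_M. Running it on this card would mean keeping only some layers on the GPU (llama.cpp exposes this through its `--n-gpu-layers` option) and running the rest from system RAM, which typically reduces generation speed sharply.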

FAQ

What is quantization in LLMs?

Quantization is a technique that reduces the size of a model by storing its weights with fewer bits per value. Think of it like compressing a digital image: you can shrink the file considerably without losing too much detail. In LLMs, quantization lowers memory requirements and, because less data has to be moved for each generated token, speeds up inference.
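To make this concrete, the sketch below quantizes a block of weights to 4-bit signed integers that share one floating-point scale, then reconstructs them. It is a deliberately simplified symmetric scheme for illustration, not the actual Q4_K_M format, which uses 256-weight super-blocks with per-sub-block scales and minimums. The weight values are made up.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric quantization of one block to integers in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # one shared scale per block
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Reconstruct approximate weights from the integers and the block scale."""
    return [scale * x for x in q]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print("quantized:", q)
print(f"scale={scale:.4f}, max reconstruction error={max_err:.4f}")
```

Each value now needs only 4 bits plus its share of the block's scale, and the reconstruction error is at most half a quantization step (`scale / 2`). Real formats use blocks of 32 or 256 weights so the scale adds only a fraction of a bit per weight.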

What is the difference between Q4_K_M and F16?

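Strictly speaking, only Q4_K_M is a quantization scheme; F16 is the unquantized 16-bit half-precision format that serves as the baseline. Q4_K_M is one of llama.cpp's "k-quant" formats: weights are stored as 4-bit integers in blocks with their own scale factors, and the "M" (medium) variant keeps some of the most sensitive tensors at higher precision, for an average of a little under 5 bits per weight. F16 stores every weight in 16 bits, preserving more precision but requiring more than three times as much memory, and therefore memory bandwidth, per generated token.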
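The bits-per-weight figures behind this comparison can be worked out from the block layouts. The sketch below uses the super-block sizes of `block_q4_K` and `block_q6_K` as we read them in llama.cpp's ggml source (256 weights per super-block). The 20% Q6_K share in the mix is a hypothetical figure; the real share depends on the model's tensor shapes.

```python
QK_K = 256  # weights per k-quant super-block

# Bytes per super-block, based on our reading of llama.cpp's block structs.
BLOCK_BYTES = {
    # fp16 d + fp16 dmin, 12 bytes of packed 6-bit scales/mins, 4-bit quants
    "Q4_K": 2 + 2 + 12 + QK_K // 2,
    # low 4 bits, high 2 bits, 16 int8 sub-block scales, fp16 d
    "Q6_K": QK_K // 2 + QK_K // 4 + QK_K // 16 + 2,
}


def bits_per_weight(fmt: str) -> float:
    """Storage cost per weight, including the per-block scale overhead."""
    if fmt == "F16":
        return 16.0
    return BLOCK_BYTES[fmt] * 8 / QK_K


def mixed_bpw(frac_q6k: float) -> float:
    """Average bits per weight when a fraction of weights uses Q6_K instead of Q4_K."""
    return (1 - frac_q6k) * bits_per_weight("Q4_K") + frac_q6k * bits_per_weight("Q6_K")


for fmt in ("Q4_K", "Q6_K", "F16"):
    print(f"{fmt}: {bits_per_weight(fmt):.4f} bits/weight")
print(f"Q4_K_M-style mix with 20% Q6_K (hypothetical): {mixed_bpw(0.2):.2f} bits/weight")
```

Q4_K works out to 4.5 bits per weight and Q6_K to 6.5625, so a mix that is mostly Q4_K averages a little under 5 bits per weight, less than a third of F16's 16.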

Can I use the RTX 5000 Ada with other LLMs?

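Yes. The RTX 5000 Ada can run other LLMs, but performance depends on the model size, the quantization format, and the inference software. The key constraint is the 32 GB of VRAM: models whose weights and working memory fit entirely on the GPU run much faster than those that must be partially offloaded to system RAM.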

Keywords:

Llama3 8B, NVIDIA RTX 5000 Ada 32GB, LLM Performance, Token Generation Speed, Quantization, Q4_K_M, F16, Inference, GPU, Local Models, AI, Machine Learning, Performance Benchmarks, Use Cases, Practical Recommendations