What You Need to Know About Llama3 8B Performance on NVIDIA RTX 4000 Ada 20GB

Introduction: Diving Deep into Local LLMs

The world of Large Language Models (LLMs) is evolving rapidly, bringing powerful capabilities to our fingertips. For developers and tech enthusiasts, the allure of running these models locally is irresistible. It opens up a world of possibilities, from personalized AI assistants to creative text generation and beyond. But before you dive headfirst into the exciting world of local LLMs, it's crucial to understand the hardware you need to harness their full potential.

This article focuses on the performance of the Llama3 8B model on the NVIDIA RTX 4000 Ada 20GB GPU, a popular choice for many developers. We'll dive into its capabilities, analyze its performance in detail, and provide practical recommendations for maximizing your local LLM experience.

Performance Analysis: Token Generation Speed Benchmarks

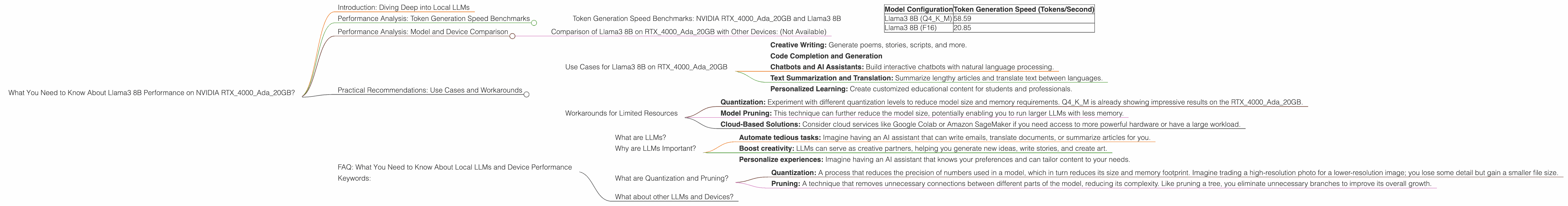

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Token generation speed is the heartbeat of any LLM. It determines how fast your model can generate text, respond to prompts, and complete tasks. Let's see how the RTX 4000 Ada 20GB handles the Llama3 8B model:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B (Q4_K_M) | 58.59 |
| Llama3 8B (F16) | 20.85 |

- Q4_K_M: A 4-bit quantization format. Quantization stores the model's weights at lower precision, which shrinks the model size and memory footprint at the cost of a small loss in accuracy.

- F16: The model's weights are kept in 16-bit floating-point precision, without quantization.

What do these numbers mean?

- Llama3 8B (Q4_K_M): This configuration generates text at a rate of 58.59 tokens per second.

- Llama3 8B (F16): This configuration generates text at a rate of 20.85 tokens per second.

These numbers show a clear advantage of using quantization for the Llama3 8B model on the RTX 4000 Ada 20GB. Think of it like this: if you're writing a story, the Q4_K_M version of the model can churn out words at about 2.8 times the speed of the F16 version.
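To make the gap concrete, here is a small sketch that turns the two benchmark figures into a speedup ratio and a wait time per response. The 500-token response length is an assumption chosen purely for illustration; real responses vary.

```python
# Throughput figures from the benchmark table (tokens per second).
SPEEDS = {"Q4_K_M": 58.59, "F16": 20.85}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` tokens at a steady rate."""
    return tokens / tokens_per_second


# How many times faster the quantized model generates text.
speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")

# Assumed response length, for illustration only.
RESPONSE_TOKENS = 500
for name, tps in SPEEDS.items():
    wait = seconds_for(RESPONSE_TOKENS, tps)
    print(f"{name}: {wait:.1f} s for {RESPONSE_TOKENS} tokens")
```

Under that assumption, the quantized model finishes a response in roughly 8.5 seconds, while the F16 model needs about 24 seconds for the same text.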

Performance Analysis: Model and Device Comparison

Comparison of Llama3 8B on RTX 4000 Ada 20GB with Other Devices: (Not Available)

Unfortunately, we don't have performance data for Llama3 8B on other devices to provide a direct comparison.
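If you have access to another device, you can collect a rough number of your own. The sketch below is one way to do it, not the method used for the figures in this article. It assumes a hypothetical `generate(prompt, max_tokens)` callable that returns the list of generated tokens; you would wrap whatever runtime you use so it matches that shape. A stand-in generator is included so the sketch runs without a model.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str, int], List[str]],
    prompt: str,
    max_tokens: int,
    runs: int = 3,
) -> float:
    """Average end-to-end throughput over several runs.

    `generate` is a hypothetical callable (prompt, token budget) -> tokens.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
        total_tokens += len(tokens)
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Stand-in for a real model: sleeps briefly, then returns dummy tokens."""
    time.sleep(0.01)
    return ["tok"] * max_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=64)
print(f"{rate:.1f} tokens/second")
```

Note that this times the whole call, so prompt processing is included. For a figure that is comparable to a pure token generation benchmark, keep the prompt short and the token budget large.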

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on RTX 4000 Ada 20GB

The RTX 4000 Ada 20GB GPU paired with the Llama3 8B model is a solid combination for a range of practical applications, including:

- Creative Writing: Generate poems, stories, scripts, and more.

- Code Completion and Generation: Autocomplete code and draft functions or snippets from a description.

- Chatbots and AI Assistants: Build interactive chatbots with natural language processing.

- Text Summarization and Translation: Summarize lengthy articles and translate text between languages.

- Personalized Learning: Create customized educational content for students and professionals.

Workarounds for Limited Resources

While the RTX 4000 Ada 20GB is a powerful card, it's not the ultimate solution for all LLM needs. Here are a few workarounds if you encounter resource limitations:

- Quantization: Experiment with different quantization levels to reduce model size and memory requirements. Q4_K_M already reaches 58.59 tokens per second on the RTX 4000 Ada 20GB.

- Model Pruning: This technique can further reduce the model size, potentially enabling you to run larger LLMs with less memory.

- Cloud-Based Solutions: Consider cloud services like Google Colab or Amazon SageMaker if you need access to more powerful hardware or have a large workload.
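To get a feel for when memory becomes the limit, here is a back-of-the-envelope estimate of how much space the weights alone take at each precision. Several inputs are assumptions: the parameter count is taken as a round 8 billion, the 4.85 bits per weight for Q4_K_M is an approximate average rather than an exact figure, and the card's 20 GB is treated as 20 GiB. The context cache, activations, and runtime overhead come on top of these numbers.

```python
GIB = 1024 ** 3


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


N_PARAMS = 8.0e9   # assumed: "8B" taken as roughly 8 billion parameters
VRAM_GIB = 20.0    # assumed: nominal card memory treated as GiB

# Bits per weight: 16 for F16; 4.85 is an assumed average for Q4_K_M.
BITS = {"F16": 16.0, "Q4_K_M": 4.85}

for name, bits in BITS.items():
    need = weight_memory_gib(N_PARAMS, bits)
    headroom = VRAM_GIB - need
    print(f"{name}: ~{need:.1f} GiB of weights, ~{headroom:.1f} GiB headroom")
```

The takeaway is the ratio rather than the exact values: at 16 bits the weights occupy most of the card, while the 4-bit format leaves plenty of room for longer contexts or a larger model.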

FAQ: What You Need to Know About Local LLMs and Device Performance

What are LLMs?

LLMs are a type of Artificial Intelligence (AI) model specifically designed to process and generate human-like text. They are trained on massive amounts of data, which allows them to understand language patterns, write coherent text, and even engage in conversations.

Why are LLMs Important?

LLMs have the potential to revolutionize how we interact with technology. They can help us:

- Automate tedious tasks: Imagine having an AI assistant that can write emails, translate documents, or summarize articles for you.

- Boost creativity: LLMs can serve as creative partners, helping you generate new ideas, write stories, and create art.

- Personalize experiences: Imagine having an AI assistant that knows your preferences and can tailor content to your needs.

What are Quantization and Pruning?

- Quantization: A process that reduces the precision of numbers used in a model, which in turn reduces its size and memory footprint. Imagine trading a high-resolution photo for a lower-resolution image; you lose some detail but gain a smaller file size.

- Pruning: A technique that removes unnecessary connections between different parts of the model, reducing its complexity. Like pruning a tree, you eliminate unnecessary branches to improve its overall growth.
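Both ideas can be shown on a handful of numbers. The sketch below is a toy illustration only: it uses simple symmetric round-to-nearest quantization with one shared scale, and magnitude-based pruning. Production formats, including the 4-bit format benchmarked in this article, are more elaborate, so treat this as a teaching aid rather than a description of any real implementation. The example weights are made up.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric round-to-nearest quantization to integers in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights: List[float], fraction: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_drop = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_drop])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Made-up weights, for illustration only.
weights = [0.70, -0.31, 0.04, 0.22, -0.02, 0.48]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
pruned = prune_by_magnitude(weights, fraction=0.5)

print("codes:   ", codes)
print("restored:", [round(w, 2) for w in restored])
print("pruned:  ", pruned)
```

Each quantized weight now needs only a small integer plus one shared scale, and the restored values land within half a quantization step of the originals. The pruned list keeps the three largest weights and zeroes the rest, which is the "remove unnecessary branches" idea in miniature.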

What about other LLMs and Devices?

This article focused on the performance of the Llama3 8B model on the RTX 4000 Ada 20GB GPU. There are many other LLMs and devices available, each with its own strengths and weaknesses. It's always a good idea to research and compare different options before making a decision.

Keywords:

LLMs, Llama3, Llama3 8B, NVIDIA RTX 4000 Ada 20GB, GPU, Token Generation Speed, Quantization, Model Pruning, Local LLMs, AI, Deep Learning, Natural Language Processing, Performance Benchmarks, DevOps, Cloud Computing, Text Generation, Chatbots, AI Assistants, Creative Writing, Code Completion, Text Summarization, Translation, Personalization, AI for Business, AI for Education.