What You Need to Know About Llama3 8B Performance on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of large language models (LLMs) is getting more exciting by the day! These AI models can generate text, translate languages, write creative content, and answer your questions. But have you ever wondered what it takes to run them locally? This article looks at the performance of Llama 3 8B on a formidable setup: four NVIDIA RTX 4000 Ada GPUs with 20GB of memory each.

Imagine a supercharged computer with four powerful RTX 4000 Ada GPUs, each boasting 20GB of memory. We're talking serious horsepower! This setup is perfect for running large language models like Llama 3 8B and exploring their capabilities without relying on cloud providers.

In this deep dive, we'll analyze the speed at which this beast can generate tokens, compare its performance against other models and configurations, and provide practical recommendations to help you choose the best setup for your needs. Let's dive in!

Performance Analysis: Token Generation Speed Benchmarks

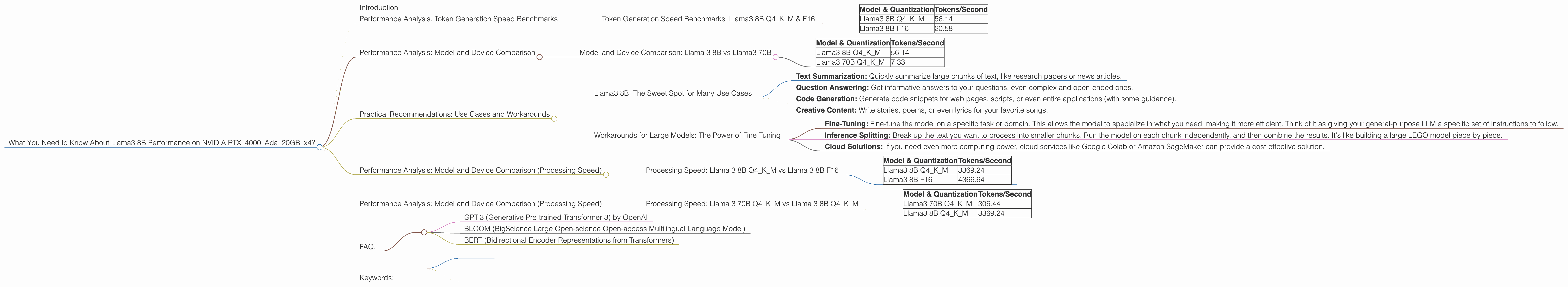

Token Generation Speed Benchmarks: Llama3 8B Q4KM & F16

The first thing you'll want to know is how fast this setup can generate tokens. A token is a small piece of text, such as a word, part of a word, or a punctuation mark. Think of it as a Lego block that makes up the final output of your LLM. The faster your LLM can generate tokens, the quicker you'll get your results. The results are measured in tokens per second.

| Model & Quantization | Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM | 56.14 |
| Llama3 8B F16 | 20.58 |

Key Takeaway: The Llama3 8B Q4KM configuration outperforms the F16 configuration in terms of token generation speed. It's roughly 2.7 times faster!
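To make the gap concrete, here is a minimal sketch that turns the two table values into a speedup factor and an estimated wait time. The 500-token response length is an arbitrary assumption chosen for illustration, and the estimate assumes the benchmark rate stays steady for the whole response.

```python
# Tokens/second figures copied from the generation table above.
GENERATION_TPS = {
    "Llama3 8B Q4KM": 56.14,
    "Llama3 8B F16": 20.58,
}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration is than the second."""
    return fast_tps / slow_tps


def seconds_to_generate(num_tokens: int, tps: float) -> float:
    """Seconds needed to emit num_tokens at a steady rate of tps tokens/second."""
    return num_tokens / tps


if __name__ == "__main__":
    ratio = speedup(GENERATION_TPS["Llama3 8B Q4KM"], GENERATION_TPS["Llama3 8B F16"])
    print(f"Q4KM vs F16 speedup: {ratio:.2f}x")
    for name, tps in GENERATION_TPS.items():
        # 500 tokens is a hypothetical response length, not a benchmark setting.
        print(f"{name}: {seconds_to_generate(500, tps):.1f} s for 500 tokens")
```

At these rates, a 500-token answer takes about 9 seconds with Q4KM and about 24 seconds with F16.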

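Let's break it down. "Q4KM" (written Q4_K_M in llama.cpp's GGUF format) names a quantization scheme, a technique used to reduce the size of the model and make it more efficient. In this case, the model's weights are compressed to roughly 4 bits each using the "k-quant" method, and the trailing M marks the medium variant. The F16 configuration stores every weight as a 16-bit half-precision floating-point number. Token generation is largely limited by how fast the GPU can read weights from memory, so the F16 model, with several times more data to read per token, generates more slowly.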

Think of it like this: Imagine a librarian who has to carry every book in the library to your desk for each word you ask for. With Q4KM, the books are pocket-sized paperbacks. With F16, they are heavy hardcovers. It's the same library, but there is far less to haul on every trip.
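The idea behind 4-bit quantization can be sketched in a few lines. This is a deliberately simplified toy (symmetric round-to-nearest with one shared scale per block), not the actual Q4KM algorithm, which is more elaborate. The function names are made up for this illustration.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map each weight to a signed 4-bit integer in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # One scale per block; fall back to 1.0 so an all-zero block does not divide by zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the 4-bit codes and the shared scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    original = [0.12, -0.70, 0.33, 0.21]  # made-up example weights
    scale, codes = quantize_block(original)
    restored = dequantize_block(scale, codes)
    print("scale:", scale)
    print("4-bit codes:", codes)
    print("restored:", restored)
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a small rounding error bounded by half the scale.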

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama 3 8B vs Llama3 70B

We've focused on Llama3 8B, but what about its larger sibling, Llama3 70B? Let's compare their performance on this same setup of four RTX 4000 Ada 20GB GPUs. The numbers below are tokens per second.

| Model & Quantization | Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM | 56.14 |
| Llama3 70B Q4KM | 7.33 |

Key Takeaway: The Llama3 8B is significantly faster than the Llama3 70B. We're talking about a 7.7x speed difference! This makes sense because generating each token means reading essentially all of the model's weights, and Llama3 70B has almost nine times as many parameters. Think of it as rereading a book cover to cover before writing each word. The thicker the book (the bigger the model), the longer each word takes (the slower the token generation).
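A rough back-of-the-envelope estimate shows how much weight data each model carries. This sketch makes several assumptions: the parameter counts are the nominal 8 and 70 billion from the model names, the 4.5 bits per weight for Q4KM is an assumed round figure rather than a measured one, and the calculation ignores the KV cache, activations, and runtime overhead.

```python
TOTAL_VRAM_GB = 4 * 20  # four 20GB cards, as described in this article

# Nominal parameter counts implied by the model names (assumption).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# 16 bits is exact for F16; 4.5 bits for Q4KM is an assumed round figure.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM (assumed)": 4.5}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits(num_params: float, bits_per_weight: float, vram_gb: float = TOTAL_VRAM_GB) -> bool:
    """True if the weights alone fit in the combined GPU memory."""
    return weight_memory_gb(num_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for model, params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gb(params, bits)
            verdict = "fits" if fits(params, bits) else "does not fit"
            print(f"{model} {fmt}: {size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, Llama3 70B in F16 needs about 140 GB for weights alone, well beyond the 80 GB available, while the quantized 70B model fits with room to spare.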

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: The Sweet Spot for Many Use Cases

Given its impressive performance, Llama3 8B is a great choice for a variety of use cases. Imagine using it for:

- Text Summarization: Quickly summarize large chunks of text, like research papers or news articles.

- Question Answering: Get informative answers to your questions, even complex and open-ended ones.

- Code Generation: Generate code snippets for web pages, scripts, or even entire applications (with some guidance).

- Creative Content: Write stories, poems, or even lyrics for your favorite songs.

Workarounds for Large Models: The Power of Fine-Tuning

If your project requires a larger model, like Llama3 70B, you can still get great results. Consider these strategies:

- Fine-Tuning: Fine-tune a smaller model, such as Llama3 8B, on your specific task or domain. A specialized small model can sometimes come close to a larger general-purpose model on that one task while keeping the smaller model's speed. Think of it as giving a generalist focused training for a single job.

- Inference Splitting: Break up the text you want to process into smaller chunks. Run the model on each chunk independently, and then combine the results. It's like building a large LEGO model piece by piece.

- Cloud Solutions: If you need even more computing power, cloud services like Google Colab or Amazon SageMaker can provide a cost-effective solution.
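The inference splitting strategy above can be sketched as follows. This is a minimal illustration that splits on whitespace and counts words rather than tokens. `run_model` is a hypothetical stand-in for whatever function calls your LLM, and both function names are invented for this example.

```python
from typing import Callable


def split_into_chunks(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words each."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def process_in_chunks(text: str, max_words: int, run_model: Callable[[str], str]) -> str:
    """Run the model on each chunk independently and join the outputs in order."""
    outputs = [run_model(chunk) for chunk in split_into_chunks(text, max_words)]
    return "\n".join(outputs)


if __name__ == "__main__":
    # str.upper stands in for a real model call so the sketch runs on its own.
    print(process_in_chunks("one two three four five", 2, str.upper))
```

Because each chunk is processed without knowledge of the others, this works best for tasks like summarization where chunks are fairly self-contained.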

Performance Analysis: Model and Device Comparison (Processing Speed)

Processing Speed: Llama 3 8B Q4KM vs Llama 3 8B F16

We've focused on token generation speed, but let's also look at how fast the model can process text, that is, how quickly it reads the input prompt before it starts generating. Processing speed is also measured in tokens per second.

| Model & Quantization | Processing Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM | 3369.24 |
| Llama3 8B F16 | 4366.64 |

Key Takeaway: Llama3 8B F16 is about 30% faster than Llama3 8B Q4KM in terms of processing speed, the reverse of the generation result.

Think of it like this: Reading a long prompt is a bulk job. The GPU works through many tokens at once, so raw arithmetic matters more than how compactly the weights are stored. Q4KM weights have to be unpacked before they can be used, a bit like unzipping files before opening them, while F16 weights are ready to go. That likely explains why F16 comes out ahead here even though it loses on generation.
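Since F16 wins on processing and Q4KM wins on generation, it's fair to ask which one finishes a whole request sooner. The sketch below simply adds the two phases together, which is a simplification that assumes the benchmark rates hold at any prompt or output length. The function names are invented for this illustration.

```python
# Tokens/second figures for Llama3 8B, copied from the tables in this article.
PROMPT_TPS = {"Q4KM": 3369.24, "F16": 4366.64}
GENERATION_TPS = {"Q4KM": 56.14, "F16": 20.58}


def request_seconds(config: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the output."""
    return prompt_tokens / PROMPT_TPS[config] + output_tokens / GENERATION_TPS[config]


def break_even_prompt_to_output_ratio() -> float:
    """Prompt/output token ratio above which F16 finishes a request sooner."""
    # Extra seconds F16 spends per generated token.
    generation_penalty = 1 / GENERATION_TPS["F16"] - 1 / GENERATION_TPS["Q4KM"]
    # Seconds F16 saves per prompt token.
    prompt_gain = 1 / PROMPT_TPS["Q4KM"] - 1 / PROMPT_TPS["F16"]
    return generation_penalty / prompt_gain


if __name__ == "__main__":
    print(f"Break-even prompt/output ratio: {break_even_prompt_to_output_ratio():.0f}")
    for config in PROMPT_TPS:
        # A hypothetical request: 1,000 prompt tokens in, 100 tokens out.
        print(f"{config}: {request_seconds(config, 1000, 100):.2f} s")
```

With these numbers, F16 only comes out ahead when the prompt is several hundred times longer than the output, so for typical chat-style use Q4KM is likely the faster choice overall.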

Processing Speed: Llama 3 70B Q4KM vs Llama 3 8B Q4KM

Let's compare the processing speed of Llama3 70B Q4KM with Llama3 8B Q4KM.

| Model & Quantization | Processing Speed (tokens/second) |
|---|---|
| Llama3 70B Q4KM | 306.44 |
| Llama3 8B Q4KM | 3369.24 |

Key Takeaway: As expected, Llama3 8B Q4KM is significantly faster than Llama3 70B Q4KM when it comes to processing speed, by a factor of about 11.

Think of it like this: Imagine two chefs preparing the same meal. Llama3 8B Q4KM follows a short recipe and has the ingredients ready in a flash. Llama 3 70B Q4KM follows a far longer recipe with many more steps, so the same prep takes more time, though the finished dish may be more refined.
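Processing speed matters most for how long you wait before the first word of the answer appears. Here is a minimal sketch of that wait, assuming it is dominated by reading the prompt at the benchmark rate. The 4,000-token prompt is an arbitrary example, and the function name is invented for this illustration.

```python
# Prompt processing speeds in tokens/second, copied from the table above.
PROMPT_TPS = {
    "Llama3 8B Q4KM": 3369.24,
    "Llama3 70B Q4KM": 306.44,
}


def seconds_before_first_token(prompt_tokens: int, prompt_tps: float) -> float:
    """Approximate wait before generation starts: time spent reading the prompt."""
    return prompt_tokens / prompt_tps


if __name__ == "__main__":
    prompt_tokens = 4000  # hypothetical long prompt, e.g. a document to summarize
    for name, tps in PROMPT_TPS.items():
        wait = seconds_before_first_token(prompt_tokens, tps)
        print(f"{name}: about {wait:.1f} s to read {prompt_tokens} prompt tokens")
    slowdown = PROMPT_TPS["Llama3 8B Q4KM"] / PROMPT_TPS["Llama3 70B Q4KM"]
    print(f"70B reads the prompt about {slowdown:.1f}x more slowly")
```

For that example prompt, the 8B model starts answering after roughly a second, while the 70B model takes around 13 seconds.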

FAQ:

Q: What does "quantization" mean?

A: Quantization is a technique used to reduce the size of a model by representing its weights using fewer bits. This makes the model more efficient and faster to run on less powerful devices. It's like taking a high-resolution image and compressing it to a smaller file size, without losing too much quality.

Q: What is a token?

A: A token is a basic unit of text, such as a word, part of a word, or a punctuation mark. Think of it as a LEGO block that makes up the final output of your LLM.

Q: What are the benefits of running LLMs locally?

A: Running LLMs locally gives you more control over the process, keeps your data on your own hardware, and avoids the network latency that can occur with cloud-based solutions. You can also customize your setup to match your specific needs.

Q: What are some other popular LLMs besides Llama 3?

A: Some other popular LLMs include:

- GPT-3 (Generative Pre-trained Transformer 3) by OpenAI

- BLOOM (BigScience Large Open-science Open-access Multilingual Language Model)

- BERT (Bidirectional Encoder Representations from Transformers)

Q: Where can I learn more about LLMs and their capabilities?

A: You can find a wealth of resources online.
