What You Need to Know About Llama3 8B Performance on the NVIDIA L40S 48GB

Introduction:

The world of Large Language Models (LLMs) is evolving rapidly, with new models and advancements happening all the time. This means keeping track of model performance across different devices is crucial for developers and researchers. Today, we're diving deep into the performance of the Llama3 8B model on the powerful NVIDIA L40S_48GB GPU. Buckle up, geeks! We're about to explore the fascinating world of tokens, quantization, and the magic of local LLMs.

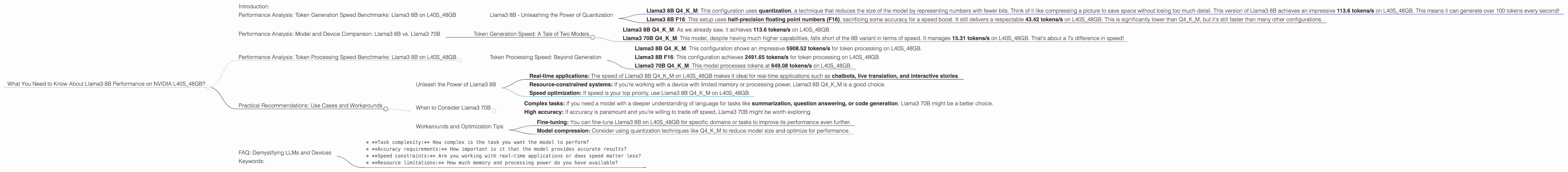

Performance Analysis: Token Generation Speed Benchmarks: Llama3 8B on L40S_48GB

First, let's talk about the raw speed of Llama3 8B on the L40S_48GB. We're looking at tokens per second (tokens/s), the metric that tells us how quickly the model can churn out text. This is crucial for real-time applications and latency-sensitive experiences.

Llama3 8B - Unleashing the Power of Quantization

Llama3 8B Q4_K_M: This configuration uses quantization, a technique that reduces the size of the model by representing numbers with fewer bits. Think of it like compressing a picture to save space without losing too much detail. This version of Llama3 8B achieves an impressive 113.6 tokens/s on the L40S_48GB, well over 100 tokens every second!

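Llama3 8B F16: This setup stores the weights as 16-bit half-precision floating-point numbers (F16). It stays closer to the original model than Q4_K_M, but its weights take roughly three times as much memory, and it delivers 43.42 tokens/s on the L40S_48GB, about 2.6x slower than Q4_K_M. Because generating each token means reading essentially all of the weights from GPU memory, larger weights translate largely into lower speed.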
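The link between weight size and generation speed can be checked with a little arithmetic. The sketch below is a rough estimate, not a measurement: it assumes about 8 billion parameters for Llama3 8B and an average of about 4.85 bits per weight for Q4_K_M (an approximation, since that format mixes quantization types). Only the two tokens/s figures come from the benchmark.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# ASSUMPTIONS: ~8e9 parameters for Llama3 8B, and ~4.85 average bits per
# weight for Q4_K_M (it mixes quantization types, so this is approximate).
PARAMS_8B = 8.0e9

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average, not an exact figure
}

# Measured generation speeds on the L40S_48GB from the benchmark (tokens/s).
GEN_TPS = {"F16": 43.42, "Q4_K_M": 113.6}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name:7s} ~{weight_gb(PARAMS_8B, bits):5.1f} GB of weights, "
          f"{GEN_TPS[name]:6.2f} tokens/s")

size_ratio = BITS_PER_WEIGHT["F16"] / BITS_PER_WEIGHT["Q4_K_M"]
speed_ratio = GEN_TPS["Q4_K_M"] / GEN_TPS["F16"]
print(f"F16 weights are ~{size_ratio:.1f}x larger; Q4_K_M generates ~{speed_ratio:.1f}x faster")
```

Under these assumptions F16 needs about 16 GB of weights against under 5 GB for Q4_K_M. The speed gain (~2.6x) is smaller than the size gap (~3.3x), which is plausible since dequantizing 4-bit weights adds some compute of its own.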

Think of it this way: imagine you're typing a message and you need to press one key for each token. At the Q4_K_M rate, you would be pressing about 113 keys every second, more than 6,800 keystrokes per minute, far beyond any human typist!
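Converting the per-second rate into per-minute figures shows how far beyond human typing speed it is. A small sketch, assuming a rough rule of thumb of 0.75 English words per token (the true ratio depends on the tokenizer and the text):

```python
# Converting the measured generation rate into per-minute figures.
# ASSUMPTION: ~0.75 English words per token, a common rule of thumb;
# the real ratio depends on the tokenizer and the text.
TOKENS_PER_SECOND = 113.6  # Llama3 8B Q4_K_M on L40S_48GB (from the benchmark)
WORDS_PER_TOKEN = 0.75     # hypothetical average

tokens_per_minute = TOKENS_PER_SECOND * 60
words_per_minute = tokens_per_minute * WORDS_PER_TOKEN
print(f"{tokens_per_minute:,.0f} tokens/min, roughly {words_per_minute:,.0f} words/min")
```

Even with a conservative words-per-token ratio, the result lands in the thousands of words per minute, compared with well under 100 for a fast human typist.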

Performance Analysis: Model Comparison: Llama3 8B vs. Llama3 70B

Now, let's compare the performance of Llama3 8B with the Llama3 70B model, which is significantly larger and more complex. We'll focus on the Q4_K_M configuration, which provides a good balance between speed and accuracy.

Token Generation Speed: A Tale of Two Models

Llama3 8B Q4_K_M: As we already saw, it achieves 113.6 tokens/s on the L40S_48GB.

Llama3 70B Q4_K_M: With almost nine times as many parameters, this model is more capable but much slower. It manages 15.31 tokens/s on the L40S_48GB, about 7.4x slower than the 8B variant!

The Takeaway: At the same quantization level, Llama3 8B generates text several times faster than Llama3 70B on the L40S_48GB, which is crucial for real-time applications where speed is paramount. The 70B model needs far more memory and takes longer to generate text, but the trade-off may be worth it for tasks that require a deeper understanding of language.
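If generation is mostly limited by reading the weights once per token, speed should drop roughly in proportion to model size at the same quantization. A quick sketch comparing the two gaps, treating the nominal 8B and 70B sizes as parameter counts (an assumption; the real counts differ slightly):

```python
# Comparing the generation-speed gap with the size gap between the two models.
# ASSUMPTION: parameter counts are the nominal sizes in the model names;
# the real counts differ slightly.
GEN_TPS = {"8B": 113.6, "70B": 15.31}  # Q4_K_M on L40S_48GB, tokens/s
PARAMS_B = {"8B": 8, "70B": 70}        # billions of parameters

speed_ratio = GEN_TPS["8B"] / GEN_TPS["70B"]
size_ratio = PARAMS_B["70B"] / PARAMS_B["8B"]

# Time spent on each generated token, in milliseconds.
ms_per_token = {name: 1000 / tps for name, tps in GEN_TPS.items()}

print(f"8B is ~{speed_ratio:.1f}x faster; 70B has ~{size_ratio:.2f}x the parameters")
for name, ms in ms_per_token.items():
    print(f"{name}: ~{ms:.1f} ms per token")
```

The ~7.4x speed gap is close to, but a little smaller than, the 8.75x size gap, which fits the rule of thumb; treat it as an approximation rather than a law.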

Performance Analysis: Token Processing Speed Benchmarks: Llama3 8B on L40S_48GB

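Another crucial aspect of LLM performance is token processing speed: how quickly the model reads the input (the prompt) before it starts generating. It is also measured in tokens per second, but because the prompt tokens can be processed in parallel rather than one at a time, the numbers are much higher than for generation.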

Token Processing Speed: Beyond Generation

Llama3 8B Q4_K_M: This configuration shows an impressive 5908.52 tokens/s for token processing on the L40S_48GB.

Llama3 8B F16: This configuration achieves 2491.65 tokens/s for token processing on the L40S_48GB.

Llama3 70B Q4_K_M: This model processes tokens at 649.08 tokens/s on the L40S_48GB.

Note: The Llama3 70B F16 configuration is not included in the benchmark data.

Why is this important? Token processing speed determines how long the model takes to read the input before the first output token appears, so it dominates response time for long prompts such as documents or chat histories. The L40S_48GB handles processing remarkably well, especially for Llama3 8B: the Q4_K_M version processes prompts about 9x faster than Llama3 70B Q4_K_M.
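Processing and generation speeds combine into the latency a user actually sees. A minimal sketch, assuming the benchmark rates hold at these lengths and ignoring model loading, batching, and the slowdown that comes with longer contexts; `estimate_latency` is a hypothetical helper, and the 2,000-token prompt and 300-token reply are illustrative values:

```python
# Rough single-request latency from the L40S_48GB benchmark figures:
#   time to first token ~ prompt_tokens / processing speed
#   total time          ~ time to first token + output_tokens / generation speed
# ASSUMPTION: benchmark rates stay constant for these prompt/reply lengths.
BENCH = {
    # config: (processing tokens/s, generation tokens/s)
    "Llama3 8B Q4_K_M": (5908.52, 113.6),
    "Llama3 8B F16": (2491.65, 43.42),
    "Llama3 70B Q4_K_M": (649.08, 15.31),
}


def estimate_latency(config: str, prompt_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Hypothetical helper: return (time to first token, total time) in seconds."""
    pp_tps, tg_tps = BENCH[config]
    ttft = prompt_tokens / pp_tps
    total = ttft + output_tokens / tg_tps
    return ttft, total


for config in BENCH:
    ttft, total = estimate_latency(config, prompt_tokens=2000, output_tokens=300)
    print(f"{config:18s} first token ~{ttft:.2f} s, full reply ~{total:.1f} s")
```

For the 8B Q4_K_M model the prompt costs about a third of a second and the reply about 2.6 s, while the 70B model spends about 3 s on the prompt alone before writing anything.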

Practical Recommendations: Use Cases and Workarounds

Now that we have a better understanding of Llama3 8B performance on L40S_48GB, let's explore some practical recommendations for choosing the right model and configuration for specific use cases.

Unleash the Power of Llama3 8B

- Real-time applications: At 113.6 tokens/s, Llama3 8B Q4_K_M on the L40S_48GB is well suited to real-time applications such as chatbots, live translation, and interactive stories.

- Resource-constrained systems: If you're working with a device with limited memory or processing power, Llama3 8B Q4_K_M is a good choice, since its 4-bit weights need roughly a third of the memory of F16.

- Speed optimization: If speed is your top priority, Llama3 8B Q4_K_M is the fastest of the configurations benchmarked here on the L40S_48GB, for both token generation and token processing.

When to Consider Llama3 70B

- Complex tasks: If you need a model with a deeper understanding of language for tasks like summarization, question answering, or code generation, Llama3 70B might be a better choice.

- High accuracy: If accuracy is paramount and you're willing to trade off speed, Llama3 70B might be worth exploring.

Workarounds and Optimization Tips

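- Fine-tuning: You can fine-tune Llama3 8B on the L40S_48GB for specific domains or tasks to improve its output quality there. Fine-tuning does not make generation faster, and full fine-tuning of an 8B model generally needs more than 48 GB of memory, so parameter-efficient methods such as LoRA are the practical route on a single card.

- Model compression: Consider quantization formats like Q4_K_M to reduce model size; in the benchmarks above, Q4_K_M outperformed F16 for both token generation and token processing.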
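To make "representing numbers with fewer bits" concrete, here is a toy symmetric 4-bit block quantizer. It is deliberately simplified and is NOT the actual Q4_K_M format used by llama.cpp, which is more elaborate (blocks grouped into super-blocks with quantized scales and minimums, and some tensors kept at higher precision). The block size and fake weights are hypothetical values chosen for illustration.

```python
# Toy symmetric 4-bit block quantization, to illustrate "fewer bits per number".
# NOTE: this is NOT the real Q4_K_M format; it only shows the basic idea of
# storing small integers plus a shared scale per block.
BLOCK_SIZE = 32  # hypothetical block size


def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit integers in [-8, 7] plus one shared float scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [scale * x for x in q]


# Deterministic fake "weights" in the range [-0.8, 0.8].
weights = [((i * 37) % 17 - 8) / 10 for i in range(BLOCK_SIZE)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Storage: 32 x 16-bit floats vs 32 x 4-bit ints plus one 16-bit scale.
f16_bits = BLOCK_SIZE * 16
q4_bits = BLOCK_SIZE * 4 + 16
print(f"{f16_bits} bits -> {q4_bits} bits ({q4_bits / BLOCK_SIZE} bits/weight), "
      f"max error {max_err:.3f}")
```

Each block shrinks from 512 to 144 bits, at the cost of a rounding error of at most half the scale per weight; real formats spend extra bits on smarter scales to keep that error small where it matters.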

FAQ: Demystifying LLMs and Devices

1. What is Llama3? Llama3 is a family of large language models developed by Meta AI, released in sizes that include the 8B and 70B models benchmarked here. It's known for its impressive language understanding and generation capabilities.

2. What are tokens? Tokens are the basic units of text that LLMs process. A token is often a whole word, but it can also be part of a word, a punctuation mark, or a special character.

3. What is Quantization? Quantization is a technique that reduces the size of a model by using fewer bits to represent numbers. It's like compressing a file to save space without losing too much information.

4. What is F16? F16 is a floating-point format that uses 16 bits per number instead of the 32 bits of standard single precision (F32), halving memory use at the cost of some precision. For LLM weights, F16 is usually treated as the full-quality baseline that quantized formats such as Q4_K_M are compared against.

5. What is NVIDIA L40S_48GB? It's a powerful GPU designed for high-performance computing tasks like machine learning. With 48 GB of GPU memory, it can hold Llama3 8B even at F16 and, as the benchmarks above show, a Q4_K_M build of Llama3 70B.

6. How do I choose the right LLM and configuration for my use case? Consider the following factors:

* **Task complexity:** How complex is the task you want the model to perform?

* **Accuracy requirements:** How important is it that the model provides accurate results?

* **Speed constraints:** Are you working with real-time applications or does speed matter less?

* **Resource limitations:** How much memory and processing power do you have available?
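Of these factors, speed and resource limits are the easiest to put numbers on. A hypothetical shortlisting helper might look like the sketch below: it uses this article's L40S_48GB generation speeds and rough, assumed weight sizes (parameters x bits per weight / 8, with ~4.85 bits assumed for Q4_K_M). Task complexity and accuracy still need judgment or an evaluation on your own data.

```python
from dataclasses import dataclass


@dataclass
class Config:
    name: str
    gen_tps: float          # measured generation speed on L40S_48GB, tokens/s
    params_b: float         # nominal parameter count, billions
    bits_per_weight: float  # ASSUMED average bits per weight

    @property
    def weights_gb(self) -> float:
        """Rough weight size in GB; excludes KV cache and runtime buffers."""
        return self.params_b * self.bits_per_weight / 8


CONFIGS = [
    Config("Llama3 8B Q4_K_M", 113.6, 8, 4.85),
    Config("Llama3 8B F16", 43.42, 8, 16.0),
    Config("Llama3 70B Q4_K_M", 15.31, 70, 4.85),
]


def shortlist(min_tps: float, memory_gb: float) -> list[str]:
    """Hypothetical helper: configs meeting both limits, heaviest first."""
    ok = [c for c in CONFIGS if c.gen_tps >= min_tps and c.weights_gb <= memory_gb]
    ok.sort(key=lambda c: c.weights_gb, reverse=True)
    return [c.name for c in ok]


print(shortlist(min_tps=30, memory_gb=40))
print(shortlist(min_tps=10, memory_gb=48))
```

Sorting heaviest first reflects the loose assumption that more parameters or more bits usually mean better output. In practice, leave memory headroom for the KV cache, which grows with context length.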

Keywords:

Llama3, Llama3 8B, Llama3 70B, NVIDIA L40S_48GB, GPU, LLM, Large Language Model, Token Generation Speed, Token Processing Speed, Quantization, Q4_K_M, F16, Performance Analysis, Benchmarks, Use Cases, Practical Recommendations, Model Compression, Real-time Applications, Resource-Constrained Systems, Fine-tuning, Developer, Geek, AI, Chatbots, Live Translation, Summarization, Question Answering, Code Generation, Latency-Sensitive.