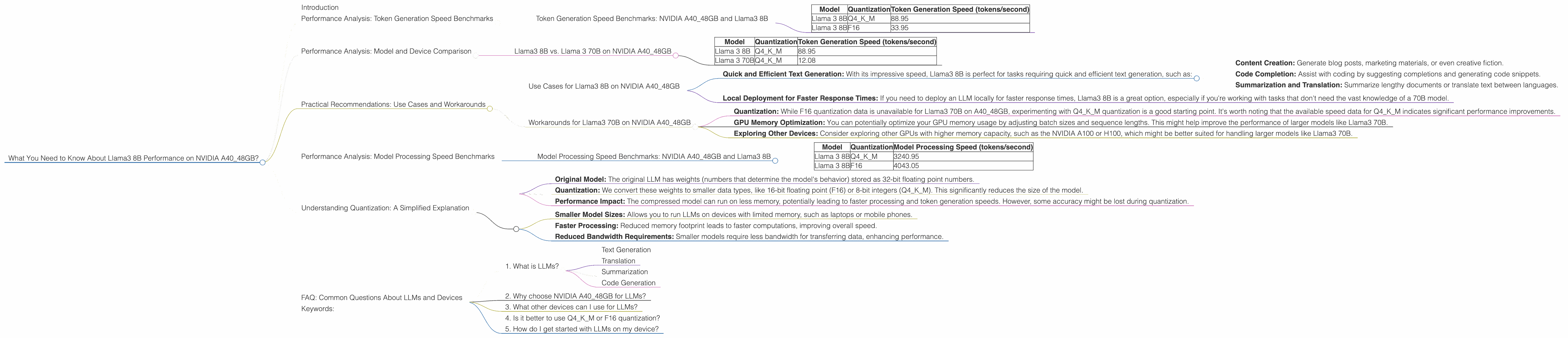

What You Need to Know About Llama3 8B Performance on the NVIDIA A40 48GB

Introduction

The world of Large Language Models (LLMs) is exploding, and with it the demand for hardware powerful enough to run these models locally. If you're a developer looking to run LLMs on your own system, the NVIDIA A40_48GB GPU is a top contender. But how does it perform with Meta's Llama3 8B running under llama.cpp? Let's dive into the benchmarks so you can make an informed decision.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A40_48GB and Llama3 8B

The benchmark data we'll be looking at comes from the llama.cpp community on GitHub. This data covers token generation speed for various model sizes and quantization techniques.

Here's a table summarizing the token generation speed in tokens per second for Llama3 8B on the NVIDIA A40_48GB GPU:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 88.95 |
| Llama 3 8B | F16 | 33.95 |

Key Observations:

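- Quantization Matters: Q4_K_M, a 4-bit "k-quant" format from llama.cpp (the "M" marks the medium-size variant), generates tokens about 2.6x faster than F16 (half-precision floating point). Token generation is largely limited by memory bandwidth, because every new token requires reading all of the model's weights from VRAM. Q4_K_M weights take up roughly a third of the space of F16 weights, so there is far less data to move per token.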

- Llama3 8B is Quick: Even at F16 (unquantized half precision), Llama3 8B on the A40_48GB delivers a respectable token generation speed of nearly 34 tokens per second.
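To see why bandwidth matters so much, here is a rough back-of-the-envelope sketch. It assumes every weight is read from VRAM once per generated token, so memory bandwidth divided by model size gives a ceiling on tokens per second. The 696 GB/s figure is the A40's published memory bandwidth; the ~8.03B parameter count and the ~4.85 bits per weight for Q4_K_M are approximations we are assuming, and the estimate ignores KV-cache reads and kernel overheads.

```python
# Rough ceiling on token-generation speed for a memory-bandwidth-bound decoder.
# Assumption: every weight is read from VRAM once per generated token; KV-cache
# reads, activations, and kernel overheads are ignored, so real speeds are lower.

A40_BANDWIDTH_GB_S = 696.0  # NVIDIA A40 published memory bandwidth (GB/s)
LLAMA3_8B_PARAMS = 8.03e9   # approximate parameter count


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(n_params: float, bits_per_weight: float,
                          bandwidth_gb_s: float = A40_BANDWIDTH_GB_S) -> float:
    """Upper bound: bandwidth divided by the bytes that must be read per token."""
    return bandwidth_gb_s / weights_gb(n_params, bits_per_weight)


# Q4_K_M mixes block formats, so ~4.85 bits/weight is an assumed average.
for name, bpw, measured in [("Q4_K_M", 4.85, 88.95), ("F16", 16.0, 33.95)]:
    ceiling = max_tokens_per_second(LLAMA3_8B_PARAMS, bpw)
    print(f"{name}: ~{weights_gb(LLAMA3_8B_PARAMS, bpw):.1f} GB of weights, "
          f"ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out to roughly 143 tokens/second for Q4_K_M and 43 for F16. Both measured results sit below their ceilings, and the ceilings differ by about 3.3x versus the measured 2.6x, which is at least consistent with generation being bandwidth-limited.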

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama 3 70B on NVIDIA A40_48GB

You might be wondering how Llama3 8B stacks up against its bigger brother, Llama3 70B. Here's a comparison of their token generation speeds:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 88.95 |
| Llama 3 70B | Q4_K_M | 12.08 |

Observations:

- Smaller is Faster: Unsurprisingly, Llama3 8B generates tokens about 7.4x faster than Llama3 70B at the same Q4_K_M quantization, since it has far fewer weights to read for each token.

- Trade-off: While the smaller model is faster, it also has a smaller capacity and might not perform as well on complex tasks requiring a larger knowledge base.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA A40_48GB

- Quick and Efficient Text Generation: With its impressive speed, Llama3 8B is well suited to tasks requiring quick and efficient text generation, such as:

  - Content Creation: Generate blog posts, marketing materials, or even creative fiction.

  - Code Completion: Assist with coding by suggesting completions and generating code snippets.

  - Summarization and Translation: Summarize lengthy documents or translate text between languages.

- Local Deployment for Faster Response Times: If you need to deploy an LLM locally for faster response times, Llama3 8B is a great option, especially if you're working with tasks that don't need the vast knowledge of a 70B model.

Workarounds for Llama3 70B on NVIDIA A40_48GB

- Quantization: No F16 results are available for Llama3 70B on the A40_48GB, and that is expected: at 2 bytes per parameter, 70B weights need roughly 140 GB, far more than 48 GB. Q4_K_M is the practical starting point, and the benchmark above shows it running at 12.08 tokens/second on this GPU.

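- GPU Memory Optimization: Reducing the context length (which shrinks the KV cache) and the batch size lowers VRAM usage. For a model like Llama3 70B, whose Q4_K_M weights already occupy most of the 48GB, this can be the difference between keeping every layer on the GPU and spilling some onto the much slower CPU.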

- Exploring Other Devices: GPUs with more memory, such as the 80GB versions of the NVIDIA A100 or H100, leave more headroom for larger models like Llama3 70B. Running it at F16 would still require splitting it across multiple GPUs.
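To put numbers on these memory workarounds, here is a rough VRAM budget sketch: weight size plus KV cache. The layer and KV-head counts follow Llama 3's published architecture (32 layers for 8B, 80 for 70B, 8 KV heads of dimension 128 in both). The ~70.55B parameter count, the ~4.85 bits per weight for Q4_K_M, the F16 KV cache, and ignoring llama.cpp's compute buffers are our simplifying assumptions, so read "fits" as "probably fits, with limited headroom".

```python
from dataclasses import dataclass

VRAM_GB = 48.0  # A40_48GB memory capacity


@dataclass
class ModelShape:
    name: str
    n_params: float  # approximate parameter count
    n_layers: int
    n_kv_heads: int  # grouped-query attention: fewer K/V heads than query heads
    head_dim: int


LLAMA3_8B = ModelShape("Llama3 8B", 8.03e9, 32, 8, 128)
LLAMA3_70B = ModelShape("Llama3 70B", 70.55e9, 80, 8, 128)


def weights_gb(m: ModelShape, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return m.n_params * bits_per_weight / 8 / 1e9


def kv_cache_gb(m: ModelShape, n_ctx: int, bytes_per_elem: int = 2) -> float:
    """K and V entries for every layer, KV head, and context position (F16 = 2 bytes)."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * n_ctx * bytes_per_elem / 1e9


def vram_needed_gb(m: ModelShape, bits_per_weight: float, n_ctx: int) -> float:
    # Ignores compute buffers and CUDA context overhead: keep a few GB spare.
    return weights_gb(m, bits_per_weight) + kv_cache_gb(m, n_ctx)


for model, quant, bpw, ctx in [
    (LLAMA3_70B, "F16", 16.0, 8192),
    (LLAMA3_70B, "Q4_K_M", 4.85, 8192),  # 4.85 bits/weight is an assumed average
    (LLAMA3_70B, "Q4_K_M", 4.85, 2048),
    (LLAMA3_8B, "F16", 16.0, 8192),
]:
    need = vram_needed_gb(model, bpw, ctx)
    verdict = "fits" if need <= VRAM_GB else "does not fit"
    print(f"{model.name} {quant}, {ctx}-token context: "
          f"~{need:.1f} GB ({verdict} in {VRAM_GB:.0f} GB)")
```

By this estimate, Llama3 70B at Q4_K_M needs about 45.5 GB with an 8,192-token context and about 43.4 GB at 2,048 tokens, so shrinking the context roughly doubles the spare memory on a 48GB card (from about 2.5 GB to about 4.6 GB). At F16 the same model needs roughly 144 GB.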

Performance Analysis: Model Processing Speed Benchmarks

Model Processing Speed Benchmarks: NVIDIA A40_48GB and Llama3 8B

Before generating anything, the GPU must first ingest your prompt. Here, "model processing" means prompt processing (prefill): how many input tokens per second the A40_48GB can process before generation begins. Here are the benchmarks for Llama3 8B:

| Model | Quantization | Model Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 3240.95 |
| Llama 3 8B | F16 | 4043.05 |

Key Observations:

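- Faster Model Processing with F16: Interestingly, F16 processes prompts about 25% faster than Q4_K_M (4043.05 vs 3240.95 tokens/second), the reverse of the token generation result. A likely explanation is that prompt processing handles many tokens at once and is limited by compute rather than memory bandwidth, so Q4_K_M's smaller footprint helps less while the extra work of dequantizing its weights still costs time.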

- Impressive Performance: Both formats exceed 3,000 tokens/second, so even a 2,000-token prompt is ingested in well under a second on the A40_48GB.
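Which format responds faster overall depends on the shape of the request. A simple estimate adds prompt time (prompt tokens divided by the model processing speed) to generation time (output tokens divided by the token generation speed), using the Llama3 8B numbers from the two tables in this article. It assumes both speeds stay constant, which real runs only approximate, since generation slows somewhat as the context fills.

```python
# Back-of-the-envelope request latency for Llama3 8B on the A40_48GB:
#   latency ~= prompt_tokens / prompt_speed + output_tokens / generation_speed
# Assumption: both speeds stay constant regardless of how full the context is.

SPEEDS = {  # tokens/second, taken from the benchmark tables in this article
    "Q4_K_M": {"prompt": 3240.95, "generate": 88.95},
    "F16": {"prompt": 4043.05, "generate": 33.95},
}


def estimated_latency_s(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    s = SPEEDS[quant]
    return prompt_tokens / s["prompt"] + output_tokens / s["generate"]


# Two request shapes: a typical chat turn, and a long document with a short answer.
for prompt_tokens, output_tokens in [(2000, 300), (8000, 20)]:
    for quant in SPEEDS:
        t = estimated_latency_s(quant, prompt_tokens, output_tokens)
        print(f"{quant}: {prompt_tokens} in / {output_tokens} out -> ~{t:.1f} s")
```

By this estimate, a 2,000-token prompt with a 300-token answer takes about 4.0 s with Q4_K_M versus 9.3 s with F16, while an 8,000-token prompt with a 20-token answer is slightly faster on F16 (about 2.6 s vs 2.7 s). F16 only comes out ahead when the prompt is roughly 300 times longer than the output.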

Understanding Quantization: A Simplified Explanation

Quantization is a technique for shrinking large language models (LLMs) without sacrificing too much output quality. Think of it like compressing a large video file to fit on your phone: you reduce the size while keeping the essence of the content.

Here's how it works:

- Original Model: An LLM's weights (the numbers that determine the model's behavior) are normally stored as 16-bit or 32-bit floating-point numbers; Meta released Llama3's weights in 16-bit BF16.

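- Quantization: The weights are converted to more compact formats. F16 keeps 16-bit floating point, while Q4_K_M stores most weights as 4-bit integers in small blocks that share scale factors, averaging roughly 4.5 to 5 bits per weight. Going from F16 to Q4_K_M cuts the model's size by about two-thirds.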

- Performance Impact: The compressed model needs less memory and, because token generation is bandwidth-bound, usually generates tokens faster. However, some accuracy can be lost in the conversion.
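The core idea of block quantization fits in a few lines of Python. This is a simplified illustration, not llama.cpp's actual Q4_K_M layout (which nests blocks inside larger super-blocks with their own quantized scales and minimums); it is closer in spirit to llama.cpp's simpler Q4_0 format. The 32-weight block size, the assumption that each scale would be stored in 16 bits, and the random synthetic weights are all illustrative choices.

```python
import random

BLOCK_SIZE = 32  # weights sharing one scale (illustrative choice)


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit ints in [-8, 7] using one scale per block."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights: small integer times the block's scale."""
    return [scale * v for v in q]


def quantize_roundtrip(weights: list[float]) -> list[float]:
    """Quantize then dequantize block by block, returning the approximations."""
    out: list[float] = []
    for i in range(0, len(weights), BLOCK_SIZE):
        scale, q = quantize_block(weights[i:i + BLOCK_SIZE])
        out.extend(dequantize_block(scale, q))
    return out


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # synthetic "weights"
restored = quantize_roundtrip(weights)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Storage, assuming a 16-bit scale: 4 bits per weight plus 16 bits per 32-weight block.
bits_per_weight = 4 + 16 / BLOCK_SIZE
print(f"~{bits_per_weight} bits/weight vs 16 for F16; max abs error {max_err:.5f}")
```

Each weight becomes a small integer, and one scale per block restores its approximate magnitude. The rounding error is at most half a scale step, which is the accuracy cost mentioned above.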

Why Quantization Matters:

- Smaller Model Sizes: Allows you to run LLMs on devices with limited memory, such as laptops or mobile phones.

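- Faster Token Generation: Fewer bytes per weight means less data to read from memory for each generated token, which is the main bottleneck during generation (88.95 vs 33.95 tokens/second for Q4_K_M vs F16 here). It does not automatically speed up compute-bound work such as prompt processing.

- Reduced Bandwidth Requirements: Smaller model files take less disk space and less time to download and load into GPU memory.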

FAQ: Common Questions About LLMs and Devices

1. What are LLMs?

LLMs are large language models, a type of artificial intelligence that can understand and generate human-like text. They are trained on vast amounts of text data and can perform tasks like:

- Text Generation

- Translation

- Summarization

- Code Generation

2. Why choose NVIDIA A40_48GB for LLMs?

The A40_48GB is a data center GPU built for demanding workloads, including machine learning and deep learning. Its 48GB of memory holds Llama3 8B even at F16, and Llama3 70B when quantized to a format like Q4_K_M.

3. What other devices can I use for LLMs?

Besides the A40_48GB, GPUs like the 80GB versions of the NVIDIA A100 or H100 offer more memory and higher memory bandwidth. Consider your model size, quantization format, and context length when choosing a device.

4. Is it better to use Q4KM or F16 quantization?

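It depends on your priorities. Q4_K_M uses roughly a third of the memory and, in these benchmarks, generates tokens about 2.6x faster. F16 preserves more accuracy and processed prompts about 25% faster on the A40_48GB, so it can make sense when output quality matters most and memory is not a constraint, or when your inference tool does not support Q4_K_M.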

5. How do I get started with LLMs on my device?

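There are several open-source and commercial tools for running LLMs locally. llama.cpp, the project these benchmarks come from, is open source on GitHub, supports NVIDIA GPUs through CUDA, and runs models in its GGUF format; its repository includes build and usage instructions.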
