What You Need to Know About Llama3 8B Performance on NVIDIA A100 SXM 80GB

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! LLMs are changing the way we interact with technology, opening up new possibilities in natural language processing, content creation, and even scientific research. But while the potential is vast, deploying these models can be a challenge, especially when it comes to performance. This guide looks at the performance of the Llama3 8B model on the NVIDIA A100 SXM 80GB GPU: token generation speed benchmarks, how it relates to other models and devices, and practical recommendations for use cases.

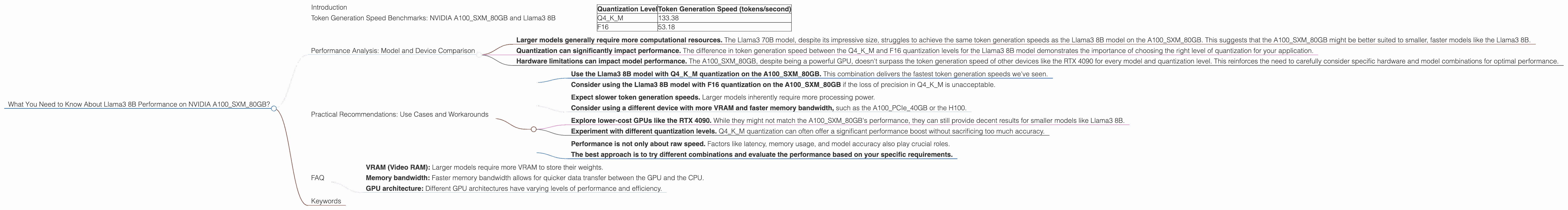

Token Generation Speed Benchmarks: NVIDIA A100 SXM 80GB and Llama3 8B

Think of token generation speed as the number of tokens a model can produce per second. A token is a chunk of text, often a word or part of a word. The faster the generation speed, the more responsive your LLM application becomes.

Let's look at the performance of the Llama3 8B model on the A100 SXM 80GB, focusing on two weight formats:

| Quantization Level | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 133.38 |
| F16 | 53.18 |

Q4_K_M is a 4-bit quantization format: it reduces the precision of model weights, leading to smaller model sizes and faster inference speeds. This can be compared to using a broader paintbrush for a painting: you lose some detail, but work more efficiently. F16 is not quantization in the same sense. It stores each weight as a 16-bit floating-point number, which preserves more precision at the cost of a larger model and slower token generation.
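To make "reducing the precision of model weights" concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified illustration only, not the actual Q4_K_M layout, which is more elaborate. The function names `quantize_block` and `dequantize_block` and the sample weights are made up for this example.

```python
def quantize_block(weights):
    """Map a block of floats to signed 4-bit integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Signed 4-bit integers cover -8..7; dividing by 7 keeps the mapping symmetric.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quants = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the 4-bit integers and the scale."""
    return [q * scale for q in quants]


# Hypothetical block of weights, chosen only for illustration.
block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("quantized:", quants)
print("max round-trip error:", max_error)
```

Each weight now needs 4 bits instead of 16, plus one scale per block. The price is a rounding error of up to half the scale per weight.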

These figures are significant. On the A100 SXM 80GB, Llama3 8B with Q4_K_M generates tokens about 2.5x faster than with F16 (133.38 vs. 53.18 tokens/second). We don't have figures for other devices such as the RTX 4090 measured under the same setup, so treat any cross-device comparison as something to verify yourself. Either way, both the hardware and the weight format matter for your LLM applications.
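The table's figures are easier to judge when turned into per-token latency and total response time. The short calculation below does that; the 500-token response length is an arbitrary value picked for illustration, not something taken from the benchmark.

```python
# Token generation speeds from the benchmark table (tokens/second).
speeds = {"Q4_K_M": 133.38, "F16": 53.18}

# Hypothetical response length, chosen only for illustration.
response_tokens = 500

speedup = speeds["Q4_K_M"] / speeds["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")

for name, tokens_per_second in speeds.items():
    ms_per_token = 1000 / tokens_per_second
    seconds = response_tokens / tokens_per_second
    print(f"{name}: {ms_per_token:.1f} ms/token, "
          f"{seconds:.1f} s for {response_tokens} tokens")
```

With these numbers, a 500-token answer takes roughly 3.7 seconds with Q4_K_M and roughly 9.4 seconds with F16.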

Performance Analysis: Model and Device Comparison

While the Llama3 8B model shows impressive performance on the A100 SXM 80GB, it's important to compare it to other models and devices to understand its overall performance profile and identify potential limitations.

Unfortunately, we lack data for other models on the A100 SXM 80GB. In particular, we don't have performance data for Llama3 70B on this specific GPU.

Here's what we can learn from the available data:

- Larger models generally require more computational resources. A 70B model has roughly nine times as many weights as the 8B model, and all of them are read for every generated token, so Llama3 70B should generate tokens considerably more slowly than Llama3 8B on the same GPU. We have no measured 70B figure for the A100 SXM 80GB to confirm by how much.

- Quantization can significantly impact performance. The difference in token generation speed between Q4_K_M and F16 for the Llama3 8B model demonstrates the importance of choosing the right level of quantization for your application.

- Hardware limitations can impact model performance. The A100 SXM 80GB is a powerful GPU, but that does not guarantee it beats every other device, such as the RTX 4090, for every model and quantization level. Benchmark the specific hardware and model combination you plan to deploy.

Practical Recommendations: Use Cases and Workarounds

The choice between different LLM models and devices ultimately depends on your specific use case and performance requirements. Here's how to leverage the information we discussed:

If you need high token generation speed:

- Use the Llama3 8B model with Q4_K_M quantization on the A100 SXM 80GB. This combination delivers the fastest token generation speed in our data.

- Consider using the Llama3 8B model with F16 weights on the A100 SXM 80GB if the loss of precision in Q4_K_M is unacceptable.

If you're working with a larger model (e.g., Llama3 70B):

- Expect slower token generation speeds. Larger models inherently require more processing power.

- Consider a device with higher memory bandwidth, such as the H100. The A100 PCIe 40GB is not a step up: it has half the VRAM of the A100 SXM 80GB and lower memory bandwidth.
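A quick way to see why model size matters on an 80 GB card is to estimate how much memory the weights alone need. The sketch below does this under stated assumptions: parameter counts are rounded to 8 and 70 billion, Q4_K_M is taken as roughly 4.8 bits per weight on average (an approximation, not an exact figure), and KV cache and activation memory are ignored, so real usage will be higher. The helper `weight_memory_gb` is a name invented for this example.

```python
def weight_memory_gb(n_params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 80  # A100 SXM 80GB

# Bits per weight: 16 for F16; 4.8 for Q4_K_M is an assumed rough average.
configs = [
    ("Llama3 8B", 8, "F16", 16),
    ("Llama3 8B", 8, "Q4_K_M", 4.8),
    ("Llama3 70B", 70, "F16", 16),
    ("Llama3 70B", 70, "Q4_K_M", 4.8),
]

for model, params_b, quant, bits in configs:
    size = weight_memory_gb(params_b, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{model} {quant}: ~{size:.1f} GB of weights, "
          f"{verdict} in {VRAM_GB} GB")
```

Under these assumptions, Llama3 70B at F16 needs about 140 GB for weights and cannot run on a single 80 GB GPU, while a 4-bit version leaves room to spare.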

If you're facing budget constraints:

- Explore lower-cost GPUs like the RTX 4090. While they might not match the A100 SXM 80GB's performance, they can still provide decent results for smaller models like Llama3 8B.

- Experiment with different quantization levels. Q4_K_M quantization can often offer a significant performance boost without sacrificing too much accuracy.

Remember:

- Performance is not only about raw speed. Factors like latency, memory usage, and model accuracy also play crucial roles.

- The best approach is to try different combinations and evaluate the performance based on your specific requirements.
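If you want to evaluate combinations yourself, a small timing harness is enough to get started. The sketch below is backend-agnostic: it assumes you can wrap your inference library in a callable that takes a prompt and a token budget and returns the number of tokens it generated. Both `measure_tokens_per_second` and `fake_generate` are hypothetical names for this example, and `fake_generate` only simulates work by sleeping.

```python
import time


def measure_tokens_per_second(generate, prompt, max_new_tokens, runs=3):
    """Call `generate` several times and return average tokens per second."""
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        total_tokens += generate(prompt, max_new_tokens)
        total_seconds += time.perf_counter() - start
    return total_tokens / total_seconds


def fake_generate(prompt, max_new_tokens):
    """Stand-in for a real backend: sleeps about 1 ms per token."""
    for _ in range(max_new_tokens):
        time.sleep(0.001)
    return max_new_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", max_new_tokens=50)
print(f"{rate:.1f} tokens/second")
```

Replace `fake_generate` with a wrapper around your own model, keep the prompt and token budget fixed across runs, and compare the resulting rates between quantization levels or devices.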

FAQ

Here are some common questions about LLMs and local model performance:

What are LLMs, and why are they important?

LLMs are artificial intelligence models trained on vast datasets of text and code. They're capable of understanding and generating human-like text, making them useful for tasks like language translation, text summarization, and chatbot development.

What is quantization, and how does it impact performance?

Quantization is a technique that reduces the precision of a model's weights, using fewer bits to represent them. This results in smaller model sizes and faster inference speeds, but can sometimes lead to a slight decrease in accuracy.

What are some considerations for choosing the right hardware for my LLM application?

Factors to consider include:

- VRAM (Video RAM): Larger models require more VRAM to store their weights.

- Memory bandwidth: Higher memory bandwidth lets the GPU read model weights from its own VRAM more quickly, which matters because the weights are read again for every generated token.

- GPU architecture: Different GPU architectures have varying levels of performance and efficiency.
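To connect VRAM and memory bandwidth to token speed, a common rule of thumb says that generating one token requires reading all model weights from VRAM once, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below applies it to Llama3 8B at F16. It assumes a bandwidth of 2,039 GB/s from NVIDIA's published A100 80GB SXM specification and a rounded 8 billion parameters, and it ignores KV cache reads and compute time; the function name is invented for this example.

```python
def bandwidth_bound_tokens_per_second(bandwidth_gb_s, n_params_billion,
                                      bits_per_weight):
    """Rough ceiling: one full read of the weights per generated token."""
    model_gb = n_params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_gb


# Assumed from NVIDIA's published A100 80GB SXM specification.
A100_SXM_80GB_BANDWIDTH_GB_S = 2039

ceiling_f16 = bandwidth_bound_tokens_per_second(
    A100_SXM_80GB_BANDWIDTH_GB_S, n_params_billion=8, bits_per_weight=16
)
measured_f16 = 53.18  # tokens/second, from the benchmark table

print(f"F16 ceiling:  ~{ceiling_f16:.0f} tokens/second")
print(f"F16 measured: {measured_f16} tokens/second "
      f"({measured_f16 / ceiling_f16:.0%} of the ceiling)")
```

The estimate is an upper bound, not a prediction: real runs land below it because of software overhead and other memory traffic. It is still useful for comparing GPUs on paper before you benchmark them.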

Where can I find more information about LLM performance and hardware choices?

You can find resources on GitHub, in research papers, and on community platforms like Hugging Face.

Keywords

Llama3 8B, NVIDIA A100 SXM 80GB, LLM, large language model, token generation speed, quantization, Q4_K_M, F16, GPU, performance, inference, benchmark, comparison, RTX 4090, Llama3 70B, hardware, limitations, use cases, recommendations, VRAM, memory bandwidth, latency, accuracy.