What You Need to Know About Llama3 8B Performance on NVIDIA A100 PCIe 80GB

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and advancements popping up faster than you can say "Transformer." But what good is a powerful LLM if you can't run it on your own hardware? That's where the NVIDIA A100 PCIe 80GB comes in, a beast of a GPU designed to handle the heavy lifting of local LLM inference.

This article dives deep into the performance of the Llama3 8B model on this specific GPU, exploring how different quantization levels affect speed, and providing practical insights for developers looking to harness the power of LLMs on their own machines. Whether you're a seasoned AI engineer or just starting to explore the world of LLMs, we've got you covered.

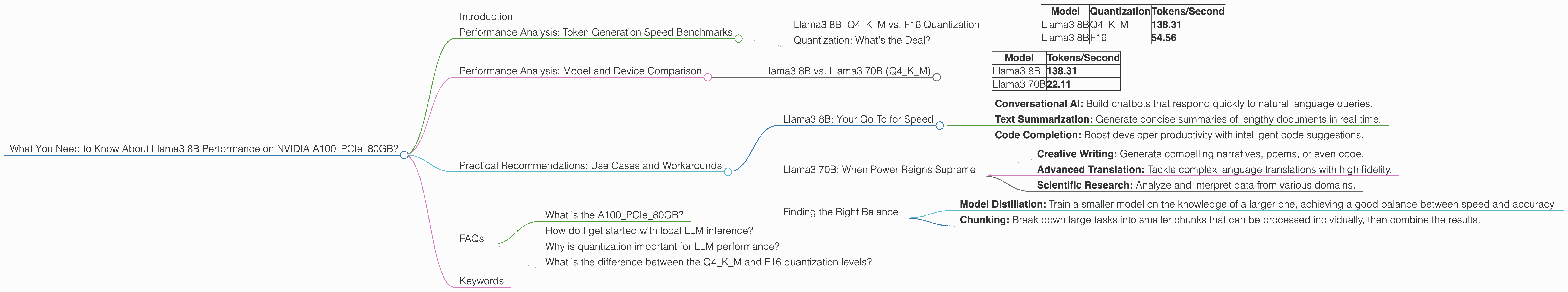

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B: Q4_K_M vs. F16 Quantization

Let's start with the heart of the matter: how fast can the Llama3 8B model generate text on an NVIDIA A100 PCIe 80GB? We'll focus on two different precision levels:

- Q4_K_M: A llama.cpp quantization format that stores weights in roughly 4 to 5 bits each on average, significantly reducing the memory footprint and speeding up token generation.

- F16: Standard 16-bit floating point. Strictly speaking this is the unquantized half-precision baseline rather than a quantization level, so it preserves the most accuracy at the largest size.

Take a peek at these numbers:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 138.31 |
| Llama3 8B | F16 | 54.56 |

Whoa! Q4_K_M quantization delivers about 2.5 times the throughput of the F16 version (138.31 vs. 54.56 tokens per second). That's like going from a scooter to a supersonic jet!
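The arithmetic behind that comparison is easy to check. The short sketch below recomputes the speedup from the two benchmark figures and converts each rate into an average time per token; the variable names are only illustrative.

```python
# Throughput figures copied from the benchmark table (tokens per second).
tokens_per_second = {"Q4_K_M": 138.31, "F16": 54.56}

# Relative throughput of the quantized model versus the F16 baseline.
speedup = tokens_per_second["Q4_K_M"] / tokens_per_second["F16"]
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")

# Average time spent on each generated token, in milliseconds.
ms_per_token = {name: 1000 / rate for name, rate in tokens_per_second.items()}
for name, ms in ms_per_token.items():
    print(f"{name}: {ms:.1f} ms per token")
```

Seen per token, the gap is roughly 7 ms versus 18 ms, which is the kind of difference a user notices in an interactive chat.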

Quantization: What's the Deal?

Think of quantization as a clever way to compress data. By mapping the model's weights onto a small set of representable values, we need fewer bits to store each one, which shrinks the model and saves precious memory. This allows faster processing, but may sacrifice a little precision. Imagine using a smaller suitcase for your trip: you have to be more selective about what you pack, but you can travel much faster!
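To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization for one block of weights. It is not the actual Q4_K_M scheme used by llama.cpp, which is more elaborate; the function names and the example weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] with one scale and offset per block."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels or 1.0  # fall back to 1.0 when all values are equal
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


weights = [0.12, -0.40, 0.33, 0.05, -0.27, 0.48, -0.01, 0.19]  # made-up example values
codes, scale, lo = quantize_block(weights)
restored = dequantize_block(codes, scale, lo)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print(f"max round-trip error: {max_error:.4f}")
```

Each weight is now a small integer plus a shared scale and offset, and the round-trip error is bounded by half the scale. That bounded error is the "tiny bit of precision" being traded away.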

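So, why the huge difference? Generating each token requires reading the model's weights from GPU memory, so generation speed tends to be limited by memory bandwidth rather than raw compute. The smaller Q4_K_M model means far fewer bytes to move per token, which lets the A100 chomp through tokens at a much faster rate.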
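A back-of-the-envelope estimate shows how much less data the quantized model has to move. The sketch below assumes roughly 8 billion parameters and an average of about 4.8 bits per weight for Q4_K_M; both numbers are approximations chosen for illustration, not measurements from this benchmark.

```python
PARAMS = 8.0e9  # assumed approximate parameter count for Llama3 8B

# Assumed average bits per weight; the Q4_K_M value is a rough estimate.
bits_per_weight = {"F16": 16.0, "Q4_K_M": 4.8}

# Approximate size of the weights in gigabytes (bits -> bytes -> GB).
size_gb = {name: PARAMS * bits / 8 / 1e9 for name, bits in bits_per_weight.items()}
for name, gb in size_gb.items():
    print(f"{name}: ~{gb:.1f} GB of weights")

size_ratio = size_gb["F16"] / size_gb["Q4_K_M"]
measured_speedup = 138.31 / 54.56  # from the benchmark table
print(f"size ratio: {size_ratio:.2f}x, measured speedup: {measured_speedup:.2f}x")
```

Under these assumptions the weights shrink by more than the measured speedup. That fits the idea that moving weights is the dominant cost, with some time also going to work that does not shrink with the model.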

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B (Q4_K_M)

Let's see how the Llama3 8B model stacks up against its bigger brother, the Llama3 70B model, both using Q4_K_M quantization.

| Model | Tokens/Second |
|---|---|
| Llama3 8B | 138.31 |
| Llama3 70B | 22.11 |

As expected, the smaller Llama3 8B model runs significantly faster, at about 6.3 times the throughput of Llama3 70B. Think of it like comparing a nimble sports car to a powerful, but lumbering, truck. Both get you where you need to go, but the sports car zips around corners with ease.
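To see what these rates mean for a user waiting on a response, the sketch below converts them into generation time for a single reply. The 300-token reply length is a hypothetical value chosen only for illustration, and prompt processing time is ignored.

```python
# Throughput in tokens per second, both with Q4_K_M, from the table above.
rates = {"Llama3 8B": 138.31, "Llama3 70B": 22.11}

ratio = rates["Llama3 8B"] / rates["Llama3 70B"]
print(f"Llama3 8B generates {ratio:.1f}x as many tokens per second")

REPLY_TOKENS = 300  # hypothetical reply length, not part of the benchmark
latency = {model: REPLY_TOKENS / rate for model, rate in rates.items()}
for model, seconds in latency.items():
    print(f"{model}: {seconds:.1f} s to generate {REPLY_TOKENS} tokens")
```

A couple of seconds versus well over ten is often the difference between a tool that feels interactive and one that feels like a batch job.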

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: Your Go-To for Speed

Looking for a fast and efficient LLM for your project? The Llama3 8B model with Q4_K_M quantization is the fastest configuration benchmarked here on the A100 PCIe 80GB, making it a strong choice for:

- Conversational AI: Build chatbots that respond quickly to natural language queries.

- Text Summarization: Generate concise summaries of lengthy documents in real time.

- Code Completion: Boost developer productivity with intelligent code suggestions.

Llama3 70B: When Power Reigns Supreme

While the Llama3 70B model might not be as speedy, it packs a punch in terms of accuracy and its ability to handle complex tasks. This makes it ideal for:

- Creative Writing: Generate compelling narratives, poems, or even code.

- Advanced Translation: Tackle complex language translations with high fidelity.

- Scientific Research: Analyze and interpret data from various domains.

Finding the Right Balance

If you need the speed of Llama3 8B but crave the power of Llama3 70B, consider these strategies:

- Model Distillation: Train a smaller model on the knowledge of a larger one, achieving a good balance between speed and accuracy.

- Chunking: Break down large tasks into smaller chunks that can be processed individually, then combine the results.
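The chunking strategy can be sketched in a few lines. The example below splits text into overlapping word-based chunks, applies a model function to each, and joins the results. The helper names, the chunk sizes, and the `fake_summarize` stand-in are all hypothetical; in practice `run_model` would wrap a call to whatever inference library you use.

```python
def chunk_words(text, max_words=200, overlap=20):
    """Split text into word-based chunks, repeating `overlap` words between neighbours."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def process_in_chunks(text, run_model, **kwargs):
    """Apply `run_model` (any callable str -> str) to each chunk and join the results."""
    return "\n".join(run_model(chunk) for chunk in chunk_words(text, **kwargs))


def fake_summarize(chunk):
    """Stand-in for a real model call; it only reports the chunk length."""
    return f"[summary of {len(chunk.split())} words]"


document = "word " * 450
print(process_in_chunks(document, fake_summarize, max_words=200, overlap=20))
```

The overlap keeps a little shared context between neighbouring chunks, at the cost of processing some words twice.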

FAQs

What is the A100 PCIe 80GB?

The A100 PCIe 80GB is a high-performance data center GPU designed for AI and machine learning workloads. It boasts impressive processing power, a massive 80 GB of high-bandwidth memory, and advanced features like Tensor Cores, making it a powerhouse for running LLMs locally.

How do I get started with local LLM inference?

There are several options for running LLMs locally. Popular libraries like llama.cpp and Hugging Face Transformers provide easy-to-use interfaces and support for various models and GPUs.
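Once a model is running, you can check throughput on your own hardware with a simple timing harness. The sketch below is library-agnostic: `generate` is a placeholder for whatever call your chosen library exposes, and `stub_generate` is a made-up stand-in so the example runs without a model.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return generated tokens per second.

    `generate` is any callable (prompt, max_tokens) -> list of tokens. It is a
    placeholder here, not the API of a specific library.
    """
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed if elapsed > 0 else float("inf")


def stub_generate(prompt, max_tokens):
    """Stub generator that sleeps briefly per token instead of running a model."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


rate = measure_tokens_per_second(stub_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/second (stub, not a real model)")
```

A careful benchmark would also separate prompt processing from generation and average several runs, so treat a single measurement like this as a rough indication only.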

Why is quantization important for LLM performance?

Quantization is a critical technique for optimizing LLM performance, especially on resource-constrained devices. By reducing the size of the model, it enables faster inference and lower memory consumption.

What is the difference between the Q4_K_M and F16 quantization levels?

Q4_K_M stores weights in roughly 4 to 5 bits each on average, resulting in a smaller model and faster processing. F16 uses standard 16-bit floating point: a larger, slower model that preserves the original precision.

Keywords

LLM, Large Language Model, Llama3, Llama3 8B, Llama3 70B, NVIDIA A100 PCIe 80GB, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmark, GPU, Inference, Local, AI, Machine Learning, Deep Learning, Conversational AI, Text Summarization, Code Completion, Creative Writing, Advanced Translation, Scientific Research, Model Distillation, Chunking.