What You Need to Know About Llama3 8B Performance on NVIDIA 4090 24GB

Introduction

The world of large language models (LLMs) is booming, with new models and advancements emerging at a breakneck pace. If you're a developer working with LLMs, you're likely always on the lookout for the best tools to help you push the boundaries of what's possible. One of the key factors in LLM performance is the hardware you choose. Today, we're diving deep into the NVIDIA 4090_24GB, a beast of a GPU, and its performance when serving up the Llama3 8B model.

Think of it like this: if LLMs are the brains of the operation, the GPU is the muscle, providing the power to make those brains hum. We'll explore the Llama3 8B's performance on the 4090_24GB, pinpoint the strengths and weaknesses, and provide you with actionable recommendations for use cases.

Performance Analysis: Token Generation Speed Benchmarks

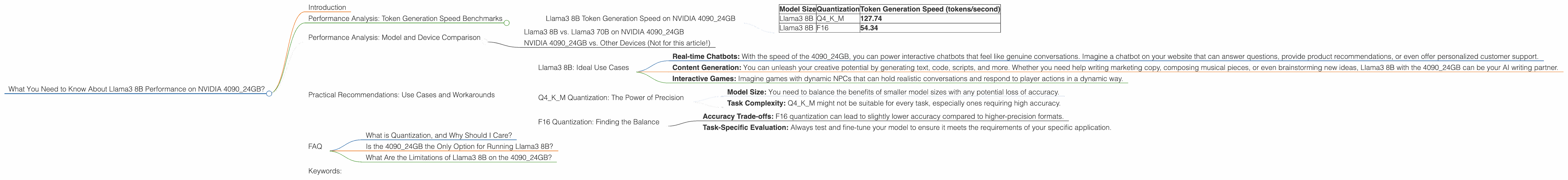

Llama3 8B Token Generation Speed on NVIDIA 4090_24GB

Let's start with the key performance metric: token generation speed. This tells us how quickly the model can generate text, which is crucial for real-time applications like chatbots and interactive content creation. The 4090_24GB proves to be a real powerhouse for Llama3 8B.
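
To make the metric concrete, here is a minimal sketch of how tokens/second can be computed: count the tokens a generator yields and divide by the elapsed wall-clock time. The article does not say which tool produced its figures, so `tokens_per_second` and `fake_generate` are hypothetical names used only for illustration, and the fake generator stands in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable, Tuple


def tokens_per_second(
    generate: Callable[[], Iterable[str]],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[int, float]:
    """Return (token count, tokens per second) for one generation run."""
    start = clock()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = clock() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a model: yields one "token" every 10 ms.
    for word in "the quick brown fox jumps".split():
        time.sleep(0.01)
        yield word


count, rate = tokens_per_second(fake_generate)
print(f"{count} tokens at {rate:.1f} tokens/second")
```

A real benchmark would also separate prompt processing from generation and average over several runs; this sketch only shows the basic ratio.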

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 127.74 |
| Llama3 8B | F16 | 54.34 |

Observations:

- Q4_K_M quantization reigns supreme: For Llama3 8B, Q4_K_M, which stores model parameters in a lower-precision format, is clearly the winner for token generation speed on the NVIDIA 4090_24GB, running more than twice as fast as F16.

- F16: Still a decent performer: While not as fast as Q4_K_M, F16 (16-bit floating-point weights, with no quantization applied) still achieves a respectable speed. Consider it when you want to avoid quantization error and can afford the larger memory footprint.
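
The gap between the two rows is easy to quantify. The short calculation below uses only the two throughput figures from the table above and converts them into a speedup ratio and a per-token latency; the variable names are just for illustration.

```python
# Throughput figures from the table above (tokens/second).
Q4_K_M_TPS = 127.74
F16_TPS = 54.34

speedup = Q4_K_M_TPS / F16_TPS
ms_per_token_q4 = 1000 / Q4_K_M_TPS
ms_per_token_f16 = 1000 / F16_TPS

print(f"Q4_K_M is {speedup:.2f}x faster than F16")
# → Q4_K_M is 2.35x faster than F16
print(f"Q4_K_M: {ms_per_token_q4:.1f} ms/token, F16: {ms_per_token_f16:.1f} ms/token")
# → Q4_K_M: 7.8 ms/token, F16: 18.4 ms/token
```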

Key Takeaways:

- The NVIDIA 4090_24GB delivers impressive token generation speeds for Llama3 8B, particularly with Q4_K_M quantization.

- Don't rule out F16 if output fidelity matters more to you than speed. Its weights take roughly 16 GB (8 billion parameters at 2 bytes each), which still fits in the card's 24 GB.

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B on NVIDIA 4090_24GB

You might be thinking, "Okay, that's cool for Llama3 8B, but what about the bigger models?" The truth is, we don't have performance data for Llama3 70B on the 4090_24GB at this time. We're eager to see how these powerful models will perform together, but the data just isn't available yet!

NVIDIA 4090_24GB vs. Other Devices (Not for this article!)

We're going to focus on the NVIDIA 4090_24GB for now. We'll delve into comparisons with other devices in a future article.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: Ideal Use Cases

- Real-time Chatbots: With the speed of the 4090_24GB, you can power interactive chatbots that feel like genuine conversations. Imagine a chatbot on your website that can answer questions, provide product recommendations, or even offer personalized customer support.

- Content Generation: You can unleash your creative potential by generating text, code, scripts, and more. Whether you need help writing marketing copy, composing musical pieces, or even brainstorming new ideas, Llama3 8B with the 4090_24GB can be your AI writing partner.

- Interactive Games: Imagine games whose NPCs hold realistic conversations and respond to player actions on the fly.
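
For interactive use cases like these, what matters is how long a whole reply takes. Here is a rough estimate based on the measured generation speeds; the reply lengths (50, 200, 500 tokens) are assumptions chosen for illustration, and the estimate ignores prompt processing and any network overhead.

```python
def reply_seconds(reply_tokens: int, tokens_per_second: float) -> float:
    """Generation time for a reply, ignoring prompt processing and I/O."""
    return reply_tokens / tokens_per_second


# Speeds from the benchmark table; reply lengths are illustrative assumptions.
for label, tps in [("Q4_K_M", 127.74), ("F16", 54.34)]:
    for n_tokens in (50, 200, 500):
        seconds = reply_seconds(n_tokens, tps)
        print(f"{label}: {n_tokens}-token reply in about {seconds:.1f} s")
```

At these speeds a 200-token answer takes roughly 1.6 seconds with Q4_K_M and about 3.7 seconds with F16, before any streaming tricks are applied.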

Q4_K_M Quantization: The Power of Precision

For developers who prioritize speed and efficiency, Q4_K_M quantization is a powerful tool. Think of it like compressing your model without sacrificing too much accuracy.

Why it works: Q4_K_M stores model parameters in a lower-precision format (about 4 bits per weight instead of 16), which reduces the memory footprint and boosts processing speed because less data has to be read from GPU memory for each generated token.
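
A back-of-the-envelope estimate shows how much memory the lower-precision format saves. This sketch assumes a nominal 8 billion parameters and an average of about 4.8 bits per weight for Q4_K_M; both numbers are approximations supplied for illustration, not figures from the benchmark, and the result covers the weights only (no KV cache or activations).

```python
def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9  # assumption: nominal "8B" parameter count

# Bits per weight: 16 for F16; about 4.8 for Q4_K_M is an assumed average.
for label, bits in [("F16", 16.0), ("Q4_K_M (assumed ~4.8 bpw)", 4.8)]:
    print(f"{label}: about {weight_gb(N_PARAMS, bits):.1f} GB of weights")
```

Under these assumptions the quantized weights take well under a third of the space of the F16 weights, which leaves far more of the 24 GB free for context.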

Key Considerations:

- Model Size: You need to balance the benefits of smaller model sizes with any potential loss of accuracy.

- Task Complexity: Q4_K_M might not be suitable for every task, especially ones requiring high accuracy.

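F16: Finding the Balance

If you have VRAM to spare and want to avoid quantization error, F16 is a great option. Strictly speaking it is not quantization but half-precision (16-bit) storage of the weights; it trades speed (54.34 versus 127.74 tokens/second in the table above) and a larger memory footprint for fidelity.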

Why it works: F16 uses a 16-bit floating-point format, which is more compact than the standard 32-bit floating-point format.
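
The size difference, and the precision that is given up for it, can be checked with Python's standard `struct` module, which supports both IEEE 754 half precision (`e`) and single precision (`f`). This is only an illustration of the number formats, not of how any inference engine stores its weights.

```python
import struct

half_bytes = struct.calcsize("<e")    # IEEE 754 binary16 (F16)
single_bytes = struct.calcsize("<f")  # IEEE 754 binary32 (F32)
print(half_bytes, single_bytes)       # → 2 4


def round_trip(value: float, fmt: str) -> float:
    """Store a Python float in the given format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


as_half = round_trip(0.1, "<e")
as_single = round_trip(0.1, "<f")
print(f"0.1 as F16: {as_half!r}")
print(f"0.1 as F32: {as_single!r}")
```

The F16 value lands a little further from 0.1 than the F32 value does, which is the small accuracy cost paid for halving the storage.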

Key Considerations:

- Accuracy Trade-offs: F16 can be slightly less accurate than higher-precision formats such as 32-bit floating point.

- Task-Specific Evaluation: Always test and fine-tune your model to ensure it meets the requirements of your specific application.

FAQ

What is Quantization, and Why Should I Care?

Quantization is a technique that reduces the size of a model by using a lower-precision format to represent its parameters. It's like compressing a file to make it smaller, without losing all the details. In the context of LLMs, this means trading off a little accuracy for significant gains in speed, memory usage, and deployment efficiency.
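
To see the idea in miniature, here is a toy sketch of symmetric 4-bit quantization on a small block of weights: one floating-point scale is kept per block, and each weight becomes a small integer. This is a deliberately simplified illustration and not the actual Q4_K_M scheme, which uses a more elaborate block layout; the function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the integers."""
    return [scale * q for q in quantized]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.31, 0.07, -0.26, 0.44, -0.02, 0.19]
scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(w, ) if False else abs(w - r) for w, r in zip(weights, restored))

print("integers:", quantized)
print(f"scale: {scale:.4f}, largest round-trip error: {max_error:.4f}")
```

The restored values are close to the originals but not identical, and the largest error is bounded by half the scale. That small loss is the accuracy given up in exchange for storing 4-bit integers instead of 16-bit floats.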

Is the 4090_24GB the Only Option for Running Llama3 8B?

Not necessarily! The 4090_24GB is a top-tier GPU, but other powerful options exist. Factors like budget, specific task requirements, and even power consumption will influence your choice.

What Are the Limitations of Llama3 8B on the 4090_24GB?

While impressive, the 4090_24GB isn't magic. There's always a trade-off between model size, performance, and accuracy. For instance, if you're working with very complex tasks that call for a larger model, Llama3 8B itself, rather than the GPU, may become the limiting factor.

Keywords:

Llama3 8B, NVIDIA 4090_24GB, LLM Performance, Token Generation Speed, Quantization, Q4_K_M, F16, NLP, Natural Language Processing, Machine Learning, AI, Deep Learning, GPU, Graphics Processing Unit, Performance Benchmark, Use Cases, Chatbots, Content Generation, Model Size, Accuracy, Memory Usage, Resources, Practical Recommendations