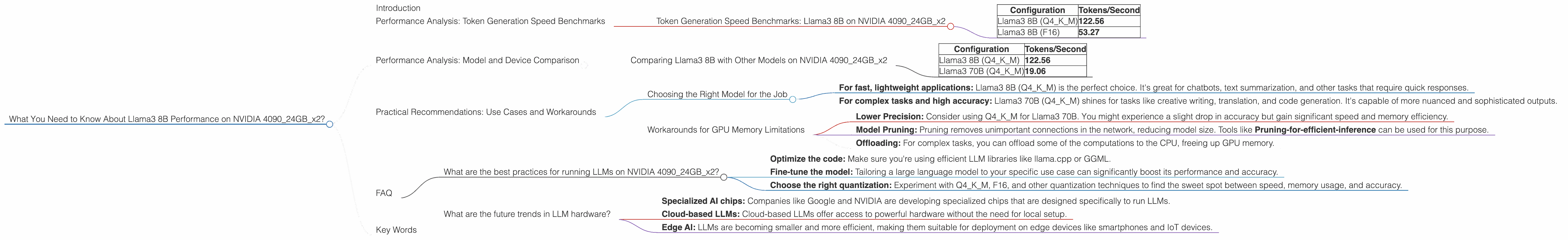

What You Need to Know About Llama3 8B Performance on NVIDIA 4090 24GB x2?

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements emerging constantly. If you're a developer or AI enthusiast, you're likely eager to explore these cutting-edge models and see what they can do. One of the biggest questions on everyone's mind is: How do these models perform on different hardware?

This article dives deep into the performance of the Llama3 8B model on a dual NVIDIA 4090 24GB (x2) setup. We'll explore token generation speed, compare the model's performance with other LLM configurations, and provide practical recommendations for using this powerful combination.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 4090 24GB x2

Let's start with the fundamental metric for LLM performance: token generation speed. This measures how quickly a model can produce text output. Here's a breakdown of the results for Llama3 8B on our NVIDIA 4090 24GB x2 beast:

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B (Q4_K_M) | 122.56 |
| Llama3 8B (F16) | 53.27 |

What Does this Mean?

- Q4_K_M (Quantization): This is one of llama.cpp's 4-bit "k-quant" formats (the M marks the medium variant). It stores the model's weights in roughly 4 to 5 bits each instead of 16. Think of quantization as squeezing a large model into a smaller space, making it more efficient without losing too much accuracy. It's a bit like compressing a movie file: the picture looks almost the same, but it takes up far less storage space.

- F16 (Half Precision): This configuration uses 16-bit floating point precision for the weights. It is more precise than Q4_K_M but needs roughly three times the memory, and because token generation is typically limited by how fast the weights can be read from memory, it is also slower in this benchmark.
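
To make the "squeezing" idea concrete, here is a minimal sketch of block-wise 4-bit quantization. It is a deliberately simplified scheme (one scale per block of 32 weights), not the actual Q4_K_M layout used by llama.cpp. The block size, the function names, and the random test weights are all assumptions made for illustration.

```python
import random

BLOCK_SIZE = 32  # assumed block size for this illustration


def quantize_block(values):
    """Map floats to 4-bit signed integer codes in [-8, 7] plus one scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the scale and the 4-bit codes."""
    return [scale * c for c in codes]


random.seed(0)
# Hypothetical weights: small values centered on zero.
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

# Storage: 32 four-bit codes plus one 16-bit scale, versus 32 sixteen-bit floats.
bits_quantized = BLOCK_SIZE * 4 + 16
bits_f16 = BLOCK_SIZE * 16

print(f"max reconstruction error: {max_error:.5f}")
print(f"bits per weight: {bits_quantized / BLOCK_SIZE:.2f} quantized vs "
      f"{bits_f16 / BLOCK_SIZE:.2f} for F16")
```

Each restored weight is off by at most half a quantization step, which is the "slight loss of accuracy" traded for the smaller footprint. Real formats such as Q4_K_M use more elaborate block structures, so their size and error differ from this toy version.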

The Takeaway:

The Q4_K_M configuration of Llama3 8B on the NVIDIA 4090 24GB x2 setup delivers a blazing-fast token generation speed of 122.56 tokens per second, about 2.3 times the 53.27 tokens per second of the F16 configuration. This speed translates to a smoother and more responsive experience when interacting with the LLM.
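
As a rough illustration of what these rates mean in practice, the sketch below converts the two throughput figures reported above into a speedup and a wait time. The 500-token response length is a hypothetical value chosen for the example, and the calculation ignores prompt processing time.

```python
# Throughput figures reported above, in tokens per second.
RATES = {"Q4_K_M": 122.56, "F16": 53.27}

RESPONSE_TOKENS = 500  # hypothetical response length, for illustration only


def seconds_for(tokens, tokens_per_second):
    """Time to generate `tokens` at a steady generation rate."""
    return tokens / tokens_per_second


speedup = RATES["Q4_K_M"] / RATES["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")

for name, rate in RATES.items():
    wait = seconds_for(RESPONSE_TOKENS, rate)
    print(f"{name}: {wait:.1f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, a 500-token answer takes about 4.1 seconds with Q4_K_M and about 9.4 seconds with F16.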

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B with Other Models on NVIDIA 4090 24GB x2

It's always fascinating to see how different LLM configurations stack up against each other. Here's a quick comparison between Llama3 8B and its larger sibling, Llama3 70B, on our trusty NVIDIA 4090 24GB x2.

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B (Q4_K_M) | 122.56 |
| Llama3 70B (Q4_K_M) | 19.06 |

The Numbers Don't Lie:

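Llama3 8B (Q4_K_M) is over 6 times faster than Llama3 70B (Q4_K_M) on the same hardware (122.56 vs. 19.06 tokens per second, a ratio of about 6.4). This is largely due to the smaller size of the 8B model: every generated token requires reading far fewer weights.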

Think of it this way: Imagine trying to fit a whole library of books into a small backpack. The larger the library, the more books you have to squeeze in, and the harder it is to move. Likewise, larger LLMs require more processing power, impacting their speed.

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for the Job

The choice between Llama3 8B and Llama3 70B depends on your specific needs and resources:

- For fast, lightweight applications: Llama3 8B (Q4_K_M) is the perfect choice. It's great for chatbots, text summarization, and other tasks that require quick responses.

- For complex tasks and high accuracy: Llama3 70B (Q4_K_M) shines for tasks like creative writing, translation, and code generation. It's capable of more nuanced and sophisticated outputs.

Workarounds for GPU Memory Limitations

You might encounter situations where the NVIDIA 4090 24GB x2 setup simply doesn't have enough memory to run a larger LLM like Llama3 70B (F16), whose weights alone take roughly 140 GB against 48 GB of combined GPU memory. Here are some workarounds:

- Lower Precision: Consider using Q4_K_M for Llama3 70B. You might experience a slight drop in accuracy but gain significant speed and memory efficiency.

- Model Pruning: Pruning removes unimportant connections in the network, reducing model size. Tools like Pruning-for-efficient-inference can be used for this purpose.

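- Offloading: When the model does not fit in GPU memory, you can keep only some of its layers on the GPUs and run the rest on the CPU from system RAM. This frees up GPU memory at the cost of slower generation.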
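
The workarounds above come down to simple arithmetic on weight size. The sketch below estimates whether each configuration fits in the combined GPU memory and, if not, roughly how many layers could stay on the GPUs. Several values are assumptions rather than figures from this article: the parameter counts are approximate, the 4.85 bits per weight for Q4_K_M is an approximation, the 4 GiB reserve for the KV cache and buffers is a guess, and the per-layer split ignores embeddings.

```python
GIB = 2**30
TOTAL_VRAM_GIB = 2 * 24   # two 24 GB cards, treated as 48 GiB for simplicity
RESERVED_GIB = 4          # assumed headroom for KV cache and buffers

PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate counts
N_LAYERS = {"Llama3 8B": 32, "Llama3 70B": 80}        # transformer layers
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}       # Q4_K_M is approximate


def weights_gib(model, fmt):
    """Estimated size of the weights alone, in GiB."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / GIB


def fits_on_gpus(model, fmt):
    """True if weights plus the reserved headroom fit in combined VRAM."""
    return weights_gib(model, fmt) + RESERVED_GIB <= TOTAL_VRAM_GIB


def gpu_layers(model, fmt, n_layers):
    """Rough number of layers that fit on the GPUs; the rest go to the CPU."""
    per_layer = weights_gib(model, fmt) / n_layers
    budget = TOTAL_VRAM_GIB - RESERVED_GIB
    return min(n_layers, int(budget // per_layer))


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weights_gib(model, fmt)
        layers = gpu_layers(model, fmt, N_LAYERS[model])
        status = "fits" if fits_on_gpus(model, fmt) else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GiB, {status}, "
              f"~{layers}/{N_LAYERS[model]} layers on GPU")
```

In llama.cpp, the number of layers placed on the GPU is controlled by the `-ngl` (`--n-gpu-layers`) option, so an estimate like this can serve as a starting value to tune from.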

FAQ

What are the best practices for running LLMs on NVIDIA 4090 24GB x2?

- Optimize the code: Make sure you're using an efficient inference library such as llama.cpp, which is built on the GGML tensor library.

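- Fine-tune the model: Tailoring a large language model to your specific use case can significantly improve the quality and accuracy of its output for that task. It does not by itself change token generation speed, but it can let a smaller, faster model do a job that would otherwise need a larger one.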

- Choose the right quantization: Experiment with Q4_K_M, F16, and other weight formats to find the sweet spot between speed, memory usage, and accuracy.
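
When experimenting with different formats, it helps to measure tokens per second the same way each time. The following is a minimal timing sketch under stated assumptions: `generate` is a hypothetical callable that returns a list of generated tokens, and `fake_generate` is a stand-in that merely sleeps, so the printed rate says nothing about real hardware. Replace the stand-in with a call into whatever inference library you use.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens):
    """Time one generation call and return generated tokens per second.

    `generate` is a hypothetical callable: (prompt, max_tokens) -> list of tokens.
    """
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt, max_tokens):
    """Stand-in for a real model call; sleeps briefly for each token."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


rate = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{rate:.1f} tokens/s")
```

For comparable numbers, keep the prompt, the number of generated tokens, and the hardware fixed across runs, and average over several runs rather than relying on a single measurement.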

What are the future trends in LLM hardware?

- Specialized AI chips: Companies like Google and NVIDIA are developing specialized chips that are designed specifically to run LLMs.

- Cloud-based LLMs: Cloud-based LLMs offer access to powerful hardware without the need for local setup.

- Edge AI: LLMs are becoming smaller and more efficient, making them suitable for deployment on edge devices like smartphones and IoT devices.

Key Words

Llama3 8B, NVIDIA 4090 24GB x2, performance benchmarks, token generation speed, quantization, Q4_K_M, F16, LLM, large language models, GPU, GPU memory, practical recommendations, use cases, workarounds, fine-tuning, pruning, offloading, cloud-based LLMs, edge AI.