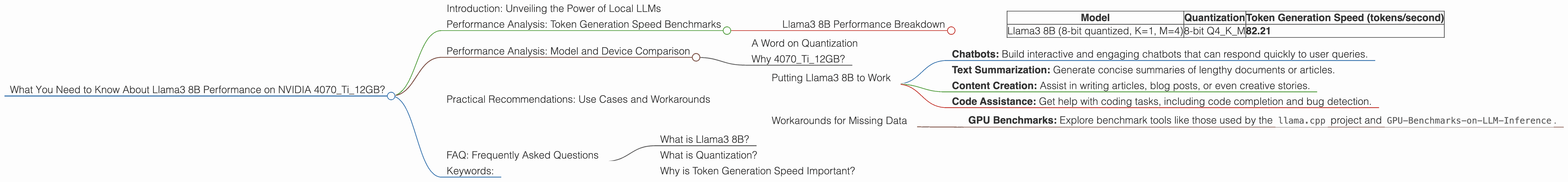

What You Need to Know About Llama3 8B Performance on the NVIDIA 4070 Ti 12GB

Introduction: Unveiling the Power of Local LLMs

Large Language Models (LLMs) are increasingly being run locally, which gives developers greater control, privacy, and reduced latency. Making an informed hardware choice means knowing how a given model actually performs on a given device. Today, we're looking at the performance of Llama3 8B on the NVIDIA 4070 Ti 12GB graphics card, a setup that many developers are considering for their projects.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B Performance Breakdown

We'll start by focusing on the token generation speed of Llama3 8B, a crucial metric for evaluating real-time performance.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M (4-bit) | 82.21 |

Note: The only data available for Llama3 8B on the NVIDIA 4070 Ti 12GB is for the Q4_K_M quantized model. There is no result for the F16 (16-bit) version, so we can't compare the quantized model against the unquantized one on this device. That gap is not surprising: 8 billion parameters at 2 bytes each come to roughly 16 GB of weights, more than the card's 12 GB of VRAM.

What does this tell us? Quantized to Q4_K_M, the 4-bit K-quant format used by llama.cpp, Llama3 8B generates 82.21 tokens per second on the 4070 Ti 12GB.

Why is this significant? Think of it like this: a token is roughly three-quarters of an English word, so 82 tokens per second works out to around 60 words per second. That is far faster than anyone can read, so output streams more quickly than a user can follow it.
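To put the 82.21 tokens/second figure in concrete terms, here is a minimal sketch that converts it into response times and an approximate word rate. The 0.75 words-per-token ratio is a rough rule of thumb for English text and is an assumption, not something measured in this benchmark; the calculation also ignores prompt processing time.

```python
# Benchmark figure from the table above.
TOKENS_PER_SECOND = 82.21

# Assumption: rough rule of thumb for English text, not a measured value.
WORDS_PER_TOKEN = 0.75


def generation_seconds(n_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Time to generate n_tokens at a steady decode rate (ignores prompt processing)."""
    return n_tokens / tokens_per_second


def words_per_second(tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Approximate English words produced per second."""
    return tokens_per_second * WORDS_PER_TOKEN


if __name__ == "__main__":
    for n in (100, 500, 2000):
        print(f"{n:>5} tokens: {generation_seconds(n):6.2f} s")
    print(f"about {words_per_second():.0f} words/second")
```

At this rate a 500-token answer takes about 6 seconds to generate in full, which is why streaming the output matters for chat-style interfaces.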

Performance Analysis: Model and Device Comparison

A Word on Quantization

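Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs by storing their weights at lower precision. It's like compressing a high-resolution image to save space: you give up a little fidelity for a much smaller file. The Q4_K_M format used for Llama3 8B here stores weights at roughly 4 to 5 bits each instead of 16, which is what lets the model fit comfortably in 12 GB of VRAM.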
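The effect on memory can be estimated with simple arithmetic. The sketch below is a back-of-the-envelope calculation for the weights only; it assumes exactly 8 billion parameters and an average of about 4.85 bits per weight for a 4-bit K-quant such as Q4_K_M. Both numbers are approximations chosen for illustration, and the estimate leaves out the KV cache and runtime overhead.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


N_PARAMS = 8.0e9   # assumption: "8B" taken as 8 billion parameters
VRAM_GIB = 12.0    # card capacity, treating 12 GB as 12 GiB for simplicity

# Assumed average bits per weight; the Q4_K_M value is approximate.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}

if __name__ == "__main__":
    for name, bits in FORMATS.items():
        size = weight_memory_gib(N_PARAMS, bits)
        verdict = "fits" if size < VRAM_GIB else "does not fit"
        print(f"{name:>7}: {size:5.1f} GiB of weights, {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions the F16 weights alone come to nearly 15 GiB, while the 4-bit version needs under 5 GiB and leaves headroom for context.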

Why the 4070 Ti 12GB?

The NVIDIA 4070 Ti 12GB is a consumer graphics card that is popular among developers. Its 12 GB of VRAM is enough to hold a 4-bit quantized 8B model entirely on the GPU, which makes it a practical choice for local LLM inference.

Practical Recommendations: Use Cases and Workarounds

Putting Llama3 8B to Work

The impressive speed of Llama3 8B on the 4070 Ti 12GB makes it suitable for a wide range of local applications:

- Chatbots: Build interactive and engaging chatbots that can respond quickly to user queries.

- Text Summarization: Generate concise summaries of lengthy documents or articles.

- Content Creation: Assist in writing articles, blog posts, or even creative stories.

- Code Assistance: Get help with coding tasks, including code completion and bug detection.

Workarounds for Missing Data

While we have limited data for Llama3 8B on the 4070 Ti 12GB, there are ways to get a better sense of performance:

- GPU Benchmarks: Explore benchmark tools and published results such as those from the llama.cpp project and GPU-Benchmarks-on-LLM-Inference.
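If you would rather measure throughput on your own machine, the idea is simply to time a generation and divide the token count by the elapsed time. The helper below is a minimal sketch of that idea, not how either project above measures it: `generate` is a hypothetical callable standing in for whatever inference library you use, and it must return the number of tokens it produced. The timing includes prompt processing unless your callable excludes it.

```python
import time
from typing import Callable


def measure_tokens_per_second(
    generate: Callable[[], int],
    n_runs: int = 3,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Average throughput over n_runs calls.

    `generate` is a hypothetical callable that runs one generation and
    returns the number of tokens it produced.
    """
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(n_runs):
        start = clock()
        total_tokens += generate()
        total_seconds += clock() - start
    return total_tokens / total_seconds


if __name__ == "__main__":
    def fake_generate() -> int:
        # Stand-in for a real model call: pretend 128 tokens take about 50 ms.
        time.sleep(0.05)
        return 128

    print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/second")
```

Averaging over several runs smooths out warm-up effects; replace `fake_generate` with a wrapper around your real model call to get a meaningful number.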

FAQ: Frequently Asked Questions

What is Llama3 8B?

Llama3 8B is an openly available language model developed by Meta. It is the 8-billion-parameter member of the Llama3 family, smaller than the 70B model, but it still boasts impressive capabilities for various language tasks.

What is Quantization?

Quantization involves reducing the number of bits used to represent the weights and activations within an LLM. This significantly decreases the model's size and computational requirements, allowing it to run more efficiently on lower-powered hardware.
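The core idea can be shown with a toy example. The sketch below applies plain symmetric quantization to one small block of weights: it keeps a single floating-point scale for the block and stores each weight as a small signed integer. This is a simplified illustration only and is not the actual Q4_K_M scheme used by llama.cpp, which is more elaborate; the function names and sample weights are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block: a shared scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and integer codes."""
    return [scale * q for q in codes]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
    scale, codes = quantize_block(block, bits=4)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"largest round-trip error: {worst:.3f}")
```

Each restored weight is off by at most half the scale, which is the fidelity given up in exchange for storing 4 bits per weight instead of 16.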

Why is Token Generation Speed Important?

Token generation speed refers to how quickly an LLM can produce output text. The faster the token generation speed, the more responsive the LLM will be in real-time applications.

Keywords:

Llama3 8B, NVIDIA 4070 Ti 12GB, LLM Performance, Token Generation Speed, Quantization, Q4_K_M, GPU Benchmarks, Local LLM Inference, Use Cases, Chatbots, Text Summarization, Content Creation, Code Assistance.