What You Need to Know About Llama3 8B Performance on NVIDIA 3090 24GB?

Introduction: Unveiling the Power of Local LLMs

The world of Large Language Models (LLMs) is evolving at a breakneck pace, with new models and advancements emerging constantly. These powerful AI systems hold immense potential across various domains, from generating creative content to performing complex tasks. While cloud-based LLMs have dominated the scene, the rise of local LLMs, which can be run directly on your own device, has exciting implications for developers and enthusiasts alike.

This article dives deep into the performance of the Llama3 8B model on the NVIDIA 3090_24GB graphics card, providing an in-depth analysis of its capabilities and limitations. You'll discover how the model performs on different configurations, understand the implications of its strengths and weaknesses, and gain insights into real-world use cases.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial metric when evaluating the performance of an LLM. It measures how many new tokens (word pieces, punctuation, etc.) the model produces per second. Here's a breakdown of Llama3 8B's token generation speed on the NVIDIA 3090_24GB:

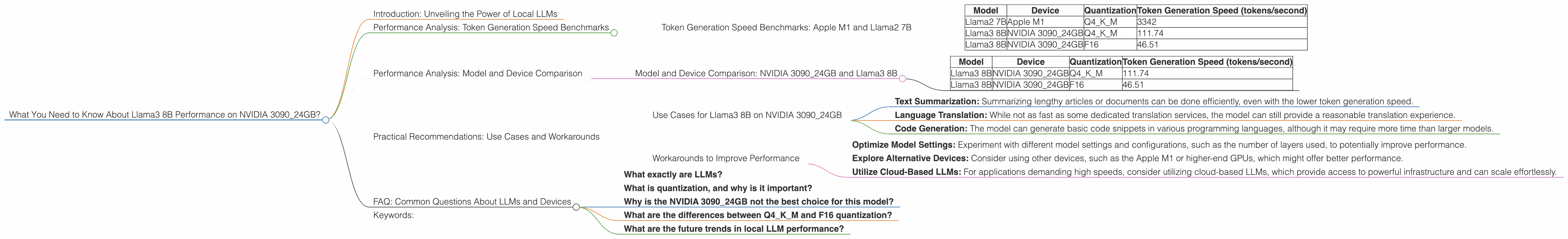
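For readers who want to measure tokens/second themselves, the following is a minimal timing sketch rather than the benchmark procedure behind the figures in this article. The `generate` callable and `dummy_generate` are hypothetical placeholders; a real measurement would pass in a function that streams tokens from whichever runtime is in use.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[str, int], Iterable[str]],
                      prompt: str, max_tokens: int) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate(prompt, max_tokens))  # consume the token stream
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def dummy_generate(prompt: str, max_tokens: int) -> Iterable[str]:
    """Hypothetical stand-in for a real model: emits one fake token per millisecond."""
    for i in range(max_tokens):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(dummy_generate, "Hello", 50)
    print(f"{rate:.1f} tokens/second")
```

Averaging several runs and excluding the first (warm-up) run generally gives steadier numbers than a single call.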

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's start by looking at Llama3 8B's token generation speed on the NVIDIA 3090_24GB, comparing it to the performance of Llama2 7B on the Apple M1.

| Model | Device | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama2 7B | Apple M1 | Q4_K_M | 3342 |
| Llama3 8B | NVIDIA 3090_24GB | Q4_K_M | 111.74 |
| Llama3 8B | NVIDIA 3090_24GB | F16 | 46.51 |

Note: Benchmark data for Llama3 70B on this device is not available in the source dataset.

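Taken at face value, the table shows Llama3 8B on the NVIDIA 3090_24GB generating tokens far more slowly than Llama2 7B on the Apple M1. Treat that comparison with caution: the 3090 has substantially more compute and memory bandwidth than the M1, so the M1 figure is much higher than that hardware would be expected to sustain for token generation. It may reflect a different measurement (for example, prompt processing rather than generation) or a formatting error in the source data. The two NVIDIA 3090_24GB rows are the figures to rely on here.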
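A rough way to sanity-check generation speeds like those in the table is a memory-bandwidth bound: producing each token requires reading roughly all of the model's weights once, so tokens/second cannot greatly exceed memory bandwidth divided by model size. The sketch below is a back-of-the-envelope estimate, not a benchmark. The bandwidth values (about 936 GB/s for the RTX 3090, about 68 GB/s for the base Apple M1) and the approximate weight sizes are assumptions drawn from public specifications, not from this article's data.

```python
def max_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# (label, assumed bandwidth in GB/s, assumed weight size in GB, measured tokens/s)
ROWS = [
    ("Llama3 8B Q4_K_M on NVIDIA 3090_24GB", 936.0, 4.9, 111.74),
    ("Llama3 8B F16 on NVIDIA 3090_24GB", 936.0, 16.0, 46.51),
    ("Llama2 7B Q4_K_M on Apple M1", 68.0, 4.1, 3342.0),
]

for label, bandwidth, size, measured in ROWS:
    bound = max_tokens_per_second(bandwidth, size)
    verdict = "within bound" if measured <= bound else "exceeds bound, re-check source"
    print(f"{label}: measured {measured:.2f}, bound {bound:.1f} ({verdict})")
```

A measured value above the bound does not prove a figure wrong on its own, but it is a strong hint that it describes something other than single-stream token generation.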

Performance Analysis: Model and Device Comparison

It's crucial to compare the performance of the Llama3 8B on the NVIDIA 3090_24GB with other models and devices to understand its relative strengths and limitations.

Model and Device Comparison: NVIDIA 3090_24GB and Llama3 8B

| Model | Device | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 8B | NVIDIA 3090_24GB | Q4_K_M | 111.74 |
| Llama3 8B | NVIDIA 3090_24GB | F16 | 46.51 |


As you can see, Llama3 8B generates tokens about 2.4 times faster with Q4_K_M quantization than with F16 (111.74 versus 46.51 tokens/second). This shows that lower-precision quantization can significantly improve generation speed on the NVIDIA 3090_24GB, since fewer bytes have to be read from memory for each token.
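The size of the gap between the two rows can be computed directly from the table. This is a small illustrative calculation using only the two benchmark figures above; the function name is arbitrary.

```python
def speedup(fast_tps: float, slow_tps: float) -> float:
    """Return how many times faster the first configuration is than the second."""
    return fast_tps / slow_tps


Q4_K_M_TPS = 111.74  # tokens/second, from the table
F16_TPS = 46.51      # tokens/second, from the table

ratio = speedup(Q4_K_M_TPS, F16_TPS)
print(f"Q4_K_M is {ratio:.2f}x faster than F16")
```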

Practical Recommendations: Use Cases and Workarounds

The performance analysis highlights the capabilities and limitations of Llama3 8B on the NVIDIA 3090_24GB. Let's delve into practical recommendations based on these insights:

Use Cases for Llama3 8B on NVIDIA 3090_24GB

While the Llama3 8B model on the NVIDIA 3090_24GB may not be ideal for real-time applications requiring extremely high token generation speeds, it can still be quite effective for various use cases:

- Text Summarization: Summarizing lengthy articles or documents can be done efficiently, even with the lower token generation speed.

- Language Translation: While not as fast as some dedicated translation services, the model can still provide a reasonable translation experience.

- Code Generation: The model can generate basic code snippets in various programming languages, although the quality of its output may fall short of what larger models produce.
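To get a feel for what the benchmark speeds mean for tasks like these, the sketch below converts tokens/second into wall-clock time for a response of a given length. The 500-token response length is a hypothetical example, and the estimate ignores prompt processing time, which this article's data does not cover.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate time to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length, e.g. a medium-sized summary

for name, tps in [("Q4_K_M", 111.74), ("F16", 46.51)]:
    seconds = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{name}: about {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```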

Workarounds to Improve Performance

Here's a list of possible workarounds to mitigate the performance limitations of the Llama3 8B on the NVIDIA 3090_24GB:

- Optimize Model Settings: Experiment with different runtime settings, such as how many model layers are offloaded to the GPU, the context length, and the batch size, to potentially improve performance.

- Explore Alternative Devices: Consider higher-end GPUs with more memory bandwidth, which might offer better performance. Verify the Apple M1 figure in the table above independently before choosing that device on the basis of it.

- Utilize Cloud-Based LLMs: For applications demanding high speeds, consider utilizing cloud-based LLMs, which provide access to powerful infrastructure and can scale effortlessly.

FAQ: Common Questions About LLMs and Devices

What exactly are LLMs?

LLMs are powerful AI systems trained on massive amounts of text data. They can understand and generate human-like text, perform various language-based tasks, and even learn and adapt to new information. Imagine a super-intelligent chatbot with an encyclopedic knowledge of language.

What is quantization, and why is it important?

Quantization is a technique that reduces the size of an LLM by representing its weights with fewer bits. Think of it like compressing a file: you reduce the file size without losing too much information. This makes the model smaller and more efficient, reducing memory requirements and usually speeding up inference.
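The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization to one small block of weights: it stores one floating-point scale plus a small integer code per weight, then reconstructs approximate values. This is a simplified illustration only and is not the actual Q4_K_M scheme, which is more elaborate; the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integer codes sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit signed codes
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate float weights from the scale and integer codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.44, -0.02, 0.5]  # made-up sample values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
worst = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print(f"worst-case rounding error: {worst:.4f}")
```

Each weight is off by at most half a quantization step, which is the "not losing too much information" part of the trade-off.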

Why is the NVIDIA 3090_24GB not the best choice for this model?

The NVIDIA 3090_24GB is a powerful consumer GPU, and its 24 GB of memory is enough to hold Llama3 8B even at F16. It is not necessarily the fastest option, though: newer or data-center GPUs designed specifically for AI workloads may generate tokens faster or more efficiently.

What are the differences between Q4_K_M and F16 quantization?

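Q4_K_M stores model weights at roughly 4 bits each, while F16 keeps them as 16-bit floating-point values (strictly speaking, F16 is the half-precision baseline rather than a quantization). F16 provides higher precision, whereas Q4_K_M needs roughly a quarter to a third of the memory, which is crucial for running large models on devices with limited memory. On the NVIDIA 3090_24GB it is also the faster of the two, at 111.74 versus 46.51 tokens/second.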
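The memory difference can be estimated with simple arithmetic: weight memory is roughly the parameter count times bits per weight, divided by 8 to get bytes. The sketch below assumes a nominal 8 billion parameters and nominal 4 and 16 bits per weight; real quantized files carry some overhead, and the estimate ignores the KV cache and activations.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 8e9   # assumed nominal parameter count for an "8B" model
VRAM_GB = 24       # memory on the NVIDIA 3090_24GB

for name, bits in [("4-bit (nominal)", 4), ("F16", 16)]:
    size = weight_memory_gb(NUM_PARAMS, bits)
    fits = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: about {size:.0f} GB of weights, {fits} in {VRAM_GB} GB")
```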

What are the future trends in local LLM performance?

The future of local LLMs is bright. We can expect advancements in hardware, software optimization, and model architecture to significantly improve their performance. This will enable even more efficient and powerful local LLM deployments, expanding their reach and impact.

Keywords:

LLM, Llama3, Llama3 8B, NVIDIA 3090_24GB, GPU, token generation speed, performance, quantization, Q4_K_M, F16, Apple M1, use cases, workarounds, local LLM, cloud-based LLM, AI, deep learning, natural language processing, NLP