What You Need to Know About Llama3 8B Performance on NVIDIA 3090 24GB x2

Introduction

The world of Large Language Models (LLMs) is constantly evolving, with new models and architectures emerging regularly. These models can generate human-like text, translate languages, write many kinds of creative content, and answer questions informatively, and they are increasingly becoming part of our daily lives. Many people are excited about the potential of LLMs and eager to explore their capabilities, but one question often arises: how do they perform on different devices?

This article examines the performance of the Llama3 8B model on a dual NVIDIA 3090 24GB setup (written "NVIDIA 3090 24GB x2" below). We'll analyze token generation speed, compare it with other configurations, and offer practical recommendations for use cases. Whether you're a developer, an AI enthusiast, or simply curious about how fast these models run, this article should give you a clear picture of Llama3 8B's performance on this specific hardware.

Performance Analysis: Token Generation Speed Benchmarks

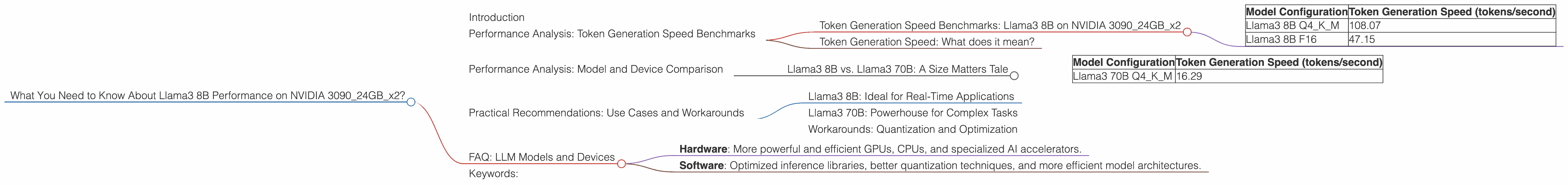

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 3090 24GB x2

The ability to generate tokens quickly is crucial for real-time applications like chatbots, interactive storytelling, and code generation. Let's look at the token generation speed of Llama3 8B on the NVIDIA 3090 24GB x2:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 108.07 |
| Llama3 8B F16 | 47.15 |

This table shows that Llama3 8B with Q4_K_M quantization generates tokens about 2.3 times faster than the F16 configuration (108.07 versus 47.15 tokens/second). Q4_K_M stores most weights in roughly 4 bits instead of 16, so the model is much smaller. Because the weights have to be read from GPU memory for every generated token, a smaller model means less data to move per token as well as lower memory usage. The "K" and "M" in the name do not refer to operations: in the naming used by llama.cpp's quantization types, "K" marks the k-quant family of block-wise schemes and "M" the medium variant. Quantization is covered in more detail in the FAQ below.
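The relationship between the two rows can be checked with a few lines of arithmetic. The sketch below uses the throughput figures from the table; the parameter count of 8 billion and the average of 4.85 bits per weight for the 4-bit configuration (Q4_K_M) are assumptions for illustration, not values from the benchmark, and the function names are hypothetical.

```python
# Throughput figures from the table above (tokens/second).
SPEED_TOKENS_PER_SEC = {"Q4_K_M": 108.07, "F16": 47.15}

# ASSUMPTION: approximate average bits per weight. The 4-bit format mixes
# block types, so 4.85 is a rough figure rather than an exact one.
ASSUMED_BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}

# ASSUMPTION: nominal "8B" parameter count.
ASSUMED_PARAMS = 8.0e9


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def speedup(fast: float, slow: float) -> float:
    """How many times faster the first throughput is than the second."""
    return fast / slow


if __name__ == "__main__":
    for name, bits in ASSUMED_BITS_PER_WEIGHT.items():
        size = weight_size_gb(ASSUMED_PARAMS, bits)
        print(f"{name}: ~{size:.1f} GB of weights, "
              f"{SPEED_TOKENS_PER_SEC[name]} tokens/s")
    ratio = speedup(SPEED_TOKENS_PER_SEC["Q4_K_M"], SPEED_TOKENS_PER_SEC["F16"])
    print(f"Measured speedup from quantization: {ratio:.2f}x")
```

Under these assumptions the weights shrink by a factor of about 3.3, while the measured speedup is smaller, which suggests that reading the weights is not the only cost per token.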

Token Generation Speed: What does it mean?

Imagine trying to write a 1000-word blog post using a pen and paper versus using a high-speed word processor. You'd finish the blog post much faster with the word processor because it's more efficient. Similarly, the faster the token generation speed, the quicker the LLM can process and generate text.
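To put the analogy in numbers, the sketch below estimates how long each configuration would need to generate a 1000-word post. It assumes roughly 4/3 tokens per English word, which is a common rule of thumb and not a measured value, and it ignores the time spent processing the prompt. The function name is hypothetical.

```python
# ASSUMPTION: rough rule of thumb for English text, not a measured value.
ASSUMED_TOKENS_PER_WORD = 4 / 3


def generation_seconds(words: int,
                       tokens_per_second: float,
                       tokens_per_word: float = ASSUMED_TOKENS_PER_WORD) -> float:
    """Estimated seconds to generate `words` words at a given token rate.

    Only generation time is counted; prompt processing is ignored.
    """
    tokens = words * tokens_per_word
    return tokens / tokens_per_second


if __name__ == "__main__":
    # Token rates taken from the benchmark table in this article.
    for label, rate in [("Llama3 8B 4-bit", 108.07), ("Llama3 8B F16", 47.15)]:
        seconds = generation_seconds(1000, rate)
        print(f"{label}: about {seconds:.1f} s for a 1000-word post")
```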

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: A Size Matters Tale

While Llama3 8B performs well on this hardware, it's interesting to compare it to its larger sibling, Llama3 70B, which boasts a much bigger parameter count.

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 16.29 |

Even with Q4_K_M quantization, Llama3 70B generates tokens far more slowly: 16.29 tokens/second versus 108.07 for Llama3 8B with the same quantization, roughly 6.6 times slower. This is because every generated token involves all of the model's weights, so a model with about nine times as many parameters needs correspondingly more memory traffic and computation per token. Think of it this way: Llama3 8B is like a small, nimble sports car, while Llama3 70B is a powerful but heavier luxury sedan. Both are capable, but in a race, the nimble sports car would win.
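One rough way to compare the two results is to set the measured slowdown next to the parameter ratio and a simple memory-bandwidth ceiling. The sketch below is illustrative only. It assumes that each generated token requires reading every weight once, that the weights average about 4.85 bits each, and that the effective bandwidth is that of a single RTX 3090 (about 936 GB/s), on the further assumption that the two cards process a token one after the other. None of these values come from the benchmark, and the helper names are hypothetical.

```python
# ASSUMPTION: memory bandwidth of one RTX 3090 in GB/s.
ASSUMED_BANDWIDTH_GB_S = 936.0
# ASSUMPTION: approximate average bits per weight for the 4-bit format.
ASSUMED_BITS_PER_WEIGHT = 4.85


def slowdown(small_model_tps: float, large_model_tps: float) -> float:
    """How many times slower the large model is than the small one."""
    return small_model_tps / large_model_tps


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB for a parameter count given in billions."""
    return params_billion * bits_per_weight / 8


def bandwidth_ceiling_tps(size_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / size_gb


if __name__ == "__main__":
    # Measured values from the tables in this article.
    measured = {8: 108.07, 70: 16.29}
    print(f"Parameter ratio: {70 / 8:.2f}x")
    print(f"Measured slowdown: {slowdown(measured[8], measured[70]):.2f}x")
    for params, tps in measured.items():
        size = weight_size_gb(params, ASSUMED_BITS_PER_WEIGHT)
        ceiling = bandwidth_ceiling_tps(size, ASSUMED_BANDWIDTH_GB_S)
        print(f"{params}B: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tokens/s, "
              f"measured {tps} tokens/s")
```

Under these assumptions both measured speeds sit below the estimated ceiling, and the 70B model comes closer to it than the 8B model does.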

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: Ideal for Real-Time Applications

The fast token generation speed of Llama3 8B on this hardware makes it ideal for real-time applications such as chatbots, interactive stories, and basic question answering. Its speed allows for quick responses, creating a more seamless user experience.

Llama3 70B: Powerhouse for Complex Tasks

While Llama3 70B is slower, its larger size generally lets it handle complex tasks such as text summarization, code generation, and creative writing with greater accuracy and depth.

Workarounds: Quantization and Optimization

If you need to run a larger model like Llama3 70B on this hardware, quantization is the main tool, and the 70B figure above already relies on it. Quantization reduces the model's size by using fewer bits to represent its weights, leading to faster inference and reduced memory usage. It is explained in more detail in the FAQ below.
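A quick way to see why quantization matters for the larger model is to check whether the weights fit in GPU memory at all. The sketch below is a back-of-the-envelope check, assuming about 4.85 bits per weight for the 4-bit format and a hypothetical 4 GB reserve for the KV cache and runtime buffers. Both numbers are assumptions, and real memory usage depends on context length and the inference software.

```python
# ASSUMPTION: hypothetical headroom for KV cache, activations and buffers.
ASSUMED_RESERVE_GB = 4.0


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB for a parameter count given in billions."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float,
                 bits_per_weight: float,
                 vram_gb: float,
                 reserve_gb: float = ASSUMED_RESERVE_GB) -> bool:
    """True if the weights plus the assumed reserve fit in the given VRAM."""
    return weight_size_gb(params_billion, bits_per_weight) + reserve_gb <= vram_gb


if __name__ == "__main__":
    total_vram_gb = 2 * 24.0  # two 24 GB cards, as in this article
    cases = [
        ("Llama3 8B F16", 8, 16.0),
        ("Llama3 8B 4-bit", 8, 4.85),   # 4.85 bits/weight is an assumption
        ("Llama3 70B F16", 70, 16.0),
        ("Llama3 70B 4-bit", 70, 4.85),
    ]
    for label, params, bits in cases:
        size = weight_size_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, bits, total_vram_gb) else "does not fit"
        print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {total_vram_gb:.0f} GB")
```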

FAQ: LLM Models and Devices

Here are some common questions about LLMs and the devices they run on:

Q: What is quantization?

A: Quantization is a technique used to reduce the size and memory footprint of LLMs. Instead of storing each weight as a 32-bit or 16-bit floating-point number, quantized formats use fewer bits per weight, such as 8 or 4. This smaller representation makes the model faster to load and process and reduces the memory it requires, at the cost of some loss in numerical precision.
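The idea can be illustrated with a minimal sketch of block-wise 4-bit quantization. This is a simplified symmetric scheme written for illustration and is not the Q4_K_M format used in the benchmarks. The block size of 32 and the 16-bit scale are assumptions, and all function names are hypothetical.

```python
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Quantize one block of weights to signed 4-bit codes in [-7, 7].

    A single scale per block maps the largest magnitude to 7.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]


def effective_bits_per_weight(block_size: int = 32,
                              code_bits: int = 4,
                              scale_bits: int = 16) -> float:
    """Average storage per weight, counting the per-block scale."""
    return (block_size * code_bits + scale_bits) / block_size


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0, -0.65, 0.41]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"largest rounding error: {worst:.4f}")
    print(f"storage: {effective_bits_per_weight()} bits per weight")
```

Each weight is replaced by a small integer plus a shared scale, so storage drops from 16 bits to a few bits per weight, while the rounding error stays within half a quantization step.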

Q: Why does model size impact performance?

A: A larger model has more weights, and all of them are used for every generated token. That means more data to read from memory and more arithmetic per token, which translates to a slower token generation speed. Imagine the same computer running a simple puzzle game versus a complex 3D game: the heavier workload runs slower on identical hardware.

Q: Can I run LLMs on my personal computer?

A: Yes, you can! While high-end GPUs like the NVIDIA 3090 series provide excellent performance, many LLMs can be run on less powerful devices, especially smaller models like Llama3 8B. You can find resources online that guide you through installing and running LLMs on your personal computer.

Q: Will LLMs continue to get faster?

A: Very likely. LLM research is moving quickly, and improvements in both hardware and software keep pushing performance forward. We can expect faster inference in the future, thanks to progress in areas like:

- Hardware: More powerful and efficient GPUs, CPUs, and specialized AI accelerators.

- Software: Optimized inference libraries, better quantization techniques, and more efficient model architectures.

Keywords:

Llama3 8B, NVIDIA 3090 24GB x2, LLM, performance, token generation speed, quantization, model size, real-time applications, chatbots, interactive stories, question answering, GPU, hardware, software, AI, inference, optimization