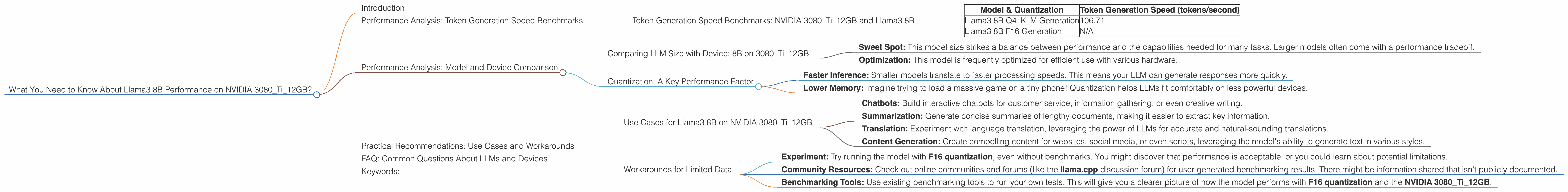

Llama3 8B Performance on the NVIDIA 3080 Ti 12GB: What You Need to Know

Introduction

The world of large language models (LLMs) is moving fast, with new models released constantly. But how do you know which model is right for you, and what hardware you need to run it? This article looks at the performance of Llama3 8B on an NVIDIA 3080 Ti 12GB and offers insights for developers who want to build and deploy their own LLM applications. The focus is token generation speed, meaning how quickly the model produces output, which is a key input when choosing an LLM and GPU for your project.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

The NVIDIA 3080 Ti 12GB is a popular consumer GPU with 12 GB of VRAM. To understand how it handles Llama3 8B, we examine token generation speed: the number of new tokens the model produces per second.

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 106.71 |
| Llama3 8B F16 | N/A |

Llama3 8B with Q4_K_M quantization generates 106.71 tokens per second on the NVIDIA 3080 Ti 12GB.
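To put 106.71 tokens/second in practical terms, the short Python sketch below converts it into per-token latency and total time for a few response lengths. The response lengths are arbitrary examples, and the calculation assumes a steady generation rate and ignores prompt processing time, which this benchmark does not report.

```python
# Convert a measured generation rate into per-token latency and
# wall-clock time for a few illustrative response lengths.
TOKENS_PER_SECOND = 106.71  # measured: Llama3 8B Q4_K_M on NVIDIA 3080 Ti 12GB


def ms_per_token(tps: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tps


def seconds_for(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady rate (ignores prompt processing)."""
    return n_tokens / tps


if __name__ == "__main__":
    print(f"{ms_per_token(TOKENS_PER_SECOND):.2f} ms/token")
    for n in (100, 500, 2000):  # arbitrary example response lengths
        print(f"{n:>5} tokens -> {seconds_for(n, TOKENS_PER_SECOND):.1f} s")
```

At this rate each token takes about 9.4 ms, so a 500-token answer takes roughly 4.7 seconds once generation has started.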

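Important Note: No data is available for Llama3 8B in F16 (16-bit floating point, i.e. unquantized half precision) on this GPU. This may simply reflect missing benchmarks, but memory is a likely factor: at 2 bytes per weight, the roughly 8 billion parameters of Llama3 8B need about 16 GB for the weights alone, more than the card's 12 GB of VRAM, so the model cannot run entirely on the GPU.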
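A likely reason for the missing F16 result is memory, and the weight footprint is simple arithmetic: parameter count times bytes per weight. A minimal sketch, assuming about 8.03 billion parameters for Llama3 8B and counting only the weights (the KV cache and runtime buffers need additional memory on top):

```python
# Estimate the memory needed for model weights alone and compare with VRAM.
GIB = 1024 ** 3

LLAMA3_8B_PARAMS = 8.03e9  # approximate parameter count (assumption)
VRAM_GIB = 12.0            # NVIDIA 3080 Ti: 12 GB of VRAM


def weights_gib(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights in GiB at a given storage precision."""
    return n_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    f16 = weights_gib(LLAMA3_8B_PARAMS, 16)
    print(f"F16 weights: {f16:.1f} GiB, VRAM: {VRAM_GIB:.0f} GiB")
    print("fits" if f16 < VRAM_GIB else "does not fit on the GPU alone")
```

This gives roughly 15 GiB for the F16 weights, already above the 12 GiB the card provides before any KV cache is allocated.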

Performance Analysis: Model and Device Comparison

Comparing LLM Size with Device: 8B on 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB offers solid compute and 12 GB of memory, but that memory budget determines which LLM sizes it can handle comfortably.

We focus on the performance of Llama3 8B on this device. Why?

Sweet Spot: At 8B parameters, this model balances output quality against hardware requirements and is capable enough for many tasks. Larger models, such as Llama3 70B, need far more than 12 GB of memory even when quantized.

Optimization: The model is widely supported by inference tools and is commonly distributed in pre-quantized formats, such as GGUF files for llama.cpp.

Quantization: A Key Performance Factor

Quantization is a technique that reduces a model's size (and memory requirements) by storing its weights in lower-precision formats, for example converting 32-bit or 16-bit floating-point numbers to 4-bit or 8-bit integers. Think of it like compressing a giant file into a more manageable size.
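To make the idea concrete, here is a toy Python sketch of symmetric block quantization: each block of weights is stored as small signed integers plus one floating-point scale. This is a simplified illustration of the principle, not llama.cpp's Q4_K_M format, which uses a more elaborate block layout; the weight values are made up.

```python
# Toy symmetric 4-bit block quantization (simplified; NOT the Q4_K_M layout).
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-7, 7] plus one shared scale."""
    amax = max(abs(w) for w in weights)
    scale = amax / 7 if amax > 0 else 1.0  # avoid dividing by zero
    q = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Reconstruct approximate floats from the integers and the scale."""
    return [scale * v for v in q]


if __name__ == "__main__":
    w = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up weights
    scale, q = quantize_block(w)
    approx = dequantize_block(scale, q)
    print("scale:", round(scale, 4))
    print("ints: ", q)
    print("max error:", round(max(abs(a - b) for a, b in zip(w, approx)), 4))
```

Each weight now needs 4 bits instead of 32 (plus one shared scale per block), and because the scale is chosen from the block's largest value, the reconstruction error per weight stays within half a quantization step.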

Here's why this is important:

- Faster Inference: Smaller weights mean less data to move from memory for every generated token, so your LLM can generate responses more quickly.

- Lower Memory: Imagine trying to load a massive game on a tiny phone! Quantization helps LLMs fit on GPUs with limited memory, such as the 3080 Ti's 12 GB.
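The speed benefit comes mostly from memory traffic. A common rule of thumb, which is an approximation rather than a guarantee, is that single-stream generation reads roughly every weight once per token, so tokens per second cannot exceed memory bandwidth divided by model size. The sketch assumes the 3080 Ti's 912 GB/s peak bandwidth and approximate file sizes (about 4.9 GB for Q4_K_M, 8.5 GB for Q8_0, 16.1 GB for F16); check the sizes of the files you actually use.

```python
# Rough ceiling on single-stream generation speed when decoding is
# memory-bandwidth bound: each new token reads (roughly) all weights once.
BANDWIDTH_GB_S = 912.0  # NVIDIA 3080 Ti peak memory bandwidth


def max_tokens_per_s(model_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s: bandwidth divided by bytes read per token."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    # Approximate weight sizes in GB (assumptions, not measured here).
    sizes = {"Q4_K_M": 4.9, "Q8_0": 8.5, "F16": 16.1}
    for name, gb in sizes.items():
        print(f"{name:>7}: <= {max_tokens_per_s(gb):.0f} tokens/s")
```

For Q4_K_M this gives a ceiling of roughly 186 tokens/s, so the measured 106.71 tokens/s sits a little above half of it; real runs also spend time on compute, kernel overhead, and KV-cache reads. The F16 line is only a theoretical ceiling, since those weights do not fit in 12 GB.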

Important Note: The available data shows that Llama3 8B with Q4_K_M quantization performs well on the NVIDIA 3080 Ti 12GB, but with no data for F16, we can only speculate about its performance.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB, especially combined with Llama3 8B in Q4_K_M quantization, can be suitable for:

Chatbots: Build interactive chatbots for customer service, information gathering, or even creative writing.

Summarization: Generate concise summaries of lengthy documents, making it easier to extract key information.

Translation: Experiment with language translation. LLMs can often produce natural-sounding translations, though quality varies by language pair and should be checked.

Content Generation: Create compelling content for websites, social media, or even scripts, leveraging the model's ability to generate text in various styles.

Workarounds for Limited Data

The lack of F16 data for Llama3 8B on the NVIDIA 3080 Ti 12GB might be concerning. Here are some workarounds:

- Experiment: Try running the F16 model yourself, even without published benchmarks. Because the F16 weights exceed 12 GB, expect some layers to stay on the CPU (llama.cpp supports partial GPU offloading via its `-ngl`/`--n-gpu-layers` option), which will reduce speed; you may still find the performance acceptable for your use case.

- Community Resources: Check online communities and forums (like the llama.cpp discussion forum) for user-submitted benchmark results. Users often share numbers for configurations that no official benchmark covers.

- Benchmarking Tools: Run your own tests with existing tools, such as llama.cpp's `llama-bench`. This gives you a clear picture of how the model performs in F16 on the NVIDIA 3080 Ti 12GB.
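For the "Experiment" route, a rough starting value for llama.cpp's `-ngl` (`--n-gpu-layers`) option, which sets how many layers are offloaded to the GPU, can be estimated up front. The sketch below uses a hypothetical helper, `layers_that_fit`, and assumes the roughly 15 GiB of F16 weights are spread evenly over Llama3 8B's 32 transformer layers (in reality the embedding and output layers are larger) and reserves an arbitrary 1.5 GiB for the KV cache and buffers. Treat the result as a first guess to refine with benchmarking.

```python
import math


def layers_that_fit(total_weights_gib: float, n_layers: int,
                    vram_gib: float, reserve_gib: float) -> int:
    """Hypothetical helper: how many equally sized layers fit in VRAM
    after setting aside reserve_gib for the KV cache and runtime buffers."""
    per_layer = total_weights_gib / n_layers
    budget = max(0.0, vram_gib - reserve_gib)
    return min(n_layers, math.floor(budget / per_layer))


if __name__ == "__main__":
    # Assumed values: ~15 GiB of F16 weights, 32 layers, 12 GiB card, 1.5 GiB reserve.
    n = layers_that_fit(total_weights_gib=14.96, n_layers=32,
                        vram_gib=12.0, reserve_gib=1.5)
    print(f"offload about {n} of 32 layers to the GPU")
```

Under these assumptions, about 22 of the 32 layers land on the GPU and the rest run on the CPU, so F16 speed on this card is likely to fall well below the Q4_K_M figure.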

FAQ: Common Questions About LLMs and Devices

Q: What is an LLM?

A: An LLM, or Large Language Model, is a sophisticated type of artificial intelligence program trained on vast amounts of text data. It can understand and generate human-like text, making it powerful for tasks like translation, writing, and summarization.

Q: Why is quantization important?

A: Quantization shrinks the size of an LLM, making it faster and more memory-efficient, usually at a small cost in output quality. It's like compressing a large file to fit on a smaller hard drive, making it easier to use.

Q: How do I choose the right GPU for an LLM?

A: Consider the model size you need, the memory available on the GPU, and the speed requirements for your application. Also, factor in the cost and availability of different GPUs.

Q: Where can I find more information about LLM performance?

A: Online communities (like the llama.cpp discussion forum), GitHub repositories, and research papers are great sources of information. Look for benchmarks and performance tests relevant to your specific LLM and GPU.

Q: Can I run LLMs on my laptop's CPU?

A: You can, but CPUs are generally less efficient than GPUs for LLM tasks, especially for large models. You might see slower processing speeds and longer wait times for responses.

Keywords:

Large Language Models, LLMs, Llama3 8B, NVIDIA 3080 Ti 12GB, Token Generation Speed, Quantization, Q4_K_M, F16, Benchmarking, Performance Analysis, GPU, Chatbots, Summarization, Translation, Content Generation, llama.cpp