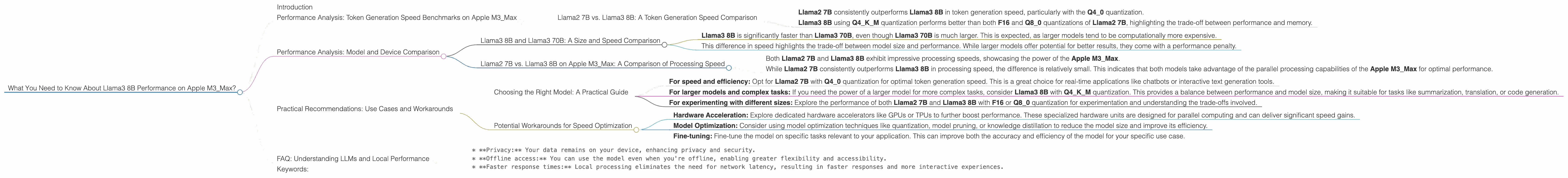

What You Need to Know About Llama3 8B Performance on Apple M3 Max

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and advancements appearing almost daily. One exciting area of development is the ability to run these models locally on personal devices, taking advantage of the ever-increasing power of modern hardware. This opens up a world of possibilities, from enabling local AI assistants to supporting advanced creative applications.

This article focuses on the performance of Llama3 8B, a powerful open-source LLM, on the Apple M3 Max chip. We'll walk through specific benchmarks, compare the model against Llama2 7B and Llama3 70B, and analyze what the numbers mean in practice. The goal is to give developers and enthusiasts a realistic picture of what running LLMs locally on this hardware looks like.

Performance Analysis: Token Generation Speed Benchmarks on Apple M3 Max

Llama2 7B vs. Llama3 8B: A Token Generation Speed Comparison

Let's dive into the heart of the matter: token generation speed. This metric determines how fast a model can output text or code, which directly affects the user experience. We'll analyze the performance of both Llama2 7B and Llama3 8B on the Apple M3 Max at different quantization levels.

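Quantization is a technique used to reduce the size of the model (and its memory footprint) by representing its weights with fewer bits. Because generating each token requires reading the model's weights from memory, a smaller representation can significantly improve generation speed, especially on devices with limited memory or memory bandwidth.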
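To make "fewer bits" concrete, here is a minimal Python sketch of symmetric block quantization: a block of floating-point weights is stored as small integers plus one shared scale. The function names and sample weights are hypothetical, and this is a simplified illustration of the idea, not the exact layout of the Q8_0, Q4_0, or Q4_K_M formats.

```python
def quantize_block(values, bits=8):
    """Quantize one block of floats to signed integers plus a shared scale."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0  # avoid dividing by zero for an all-zero block
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, 0.00, -0.09]

for bits in (8, 4):
    quantized, scale = quantize_block(weights, bits)
    restored = dequantize_block(quantized, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: ints={quantized} worst-case error={worst:.4f}")
```

Lower bit widths shrink storage but increase rounding error, which is the trade-off behind the different quantization levels benchmarked here.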

| Model/Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama2 7B F16 | 25.09 |
| Llama2 7B Q8_0 | 42.75 |
| Llama2 7B Q4_0 | 66.31 |
| Llama3 8B Q4_K_M | 50.74 |
| Llama3 8B F16 | 22.39 |

Key Observations:

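- At comparable precision, Llama2 7B generates tokens faster than Llama3 8B: 25.09 vs. 22.39 tokens/second at F16, and 66.31 (Q4_0) vs. 50.74 (Q4_K_M) at roughly 4 bits. Note that Q4_0 and Q4_K_M are different 4-bit formats, so the second comparison is not exactly like for like.

- Llama3 8B with Q4_K_M quantization (50.74 tokens/second) is faster than Llama2 7B at both F16 (25.09) and Q8_0 (42.75). For generation speed, the quantization level can matter more than the choice between these two models.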

Think of it this way: Imagine you're trying to complete a marathon. Llama2 7B is like a seasoned runner, while Llama3 8B is a newer model with incredible potential. While Llama2 7B might be quicker on the track, Llama3 8B has a longer stride that could be more efficient in the long run.
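The effect of quantization can be read straight off the table by dividing each quantized speed by the same model's F16 speed. The short Python sketch below does that arithmetic; the dictionary layout and function name are hypothetical, and the numbers are copied from the table above.

```python
# Token generation speeds (tokens/second) copied from the table above.
generation_tps = {
    ("Llama2 7B", "F16"): 25.09,
    ("Llama2 7B", "Q8_0"): 42.75,
    ("Llama2 7B", "Q4_0"): 66.31,
    ("Llama3 8B", "F16"): 22.39,
    ("Llama3 8B", "Q4_K_M"): 50.74,
}


def speedup_over_f16(table):
    """Express each quantized speed as a multiple of the same model's F16 speed."""
    result = {}
    for (model, quant), tps in table.items():
        if quant == "F16":
            continue
        result[(model, quant)] = tps / table[(model, "F16")]
    return result


speedups = speedup_over_f16(generation_tps)
for (model, quant), ratio in speedups.items():
    print(f"{model} {quant}: {ratio:.2f}x the F16 speed")
```

On these numbers, 4-bit quantization more than doubles generation speed for both models relative to F16.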

Performance Analysis: Model and Device Comparison

Note: Data for Llama3 70B with F16 quantization is not available.

Llama3 8B and Llama3 70B: A Size and Speed Comparison

| Model/Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 50.74 |
| Llama3 70B Q4_K_M | 7.53 |

Key Observations:

- Llama3 8B is roughly 6.7 times faster than Llama3 70B at the same Q4_K_M quantization (50.74 vs. 7.53 tokens/second). This is expected: a larger model has more weights to read and more computation to perform for every generated token.

- This difference in speed highlights the trade-off between model size and performance. While larger models offer potential for better results, they come with a performance penalty.
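To put the size penalty in user-facing terms, the sketch below converts the two generation speeds into the time needed to produce a response. The 500-token response length is a hypothetical figure chosen for illustration, and the calculation ignores prompt processing and model load time.

```python
# Generation speeds (tokens/second) from the table above.
llama3_8b_tps = 50.74
llama3_70b_tps = 7.53


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to generate num_tokens at a steady generation speed."""
    return num_tokens / tokens_per_second


response_tokens = 500  # hypothetical response length
speed_ratio = llama3_8b_tps / llama3_70b_tps

print(f"Speed ratio (8B / 70B): {speed_ratio:.2f}x")
print(f"Llama3 8B:  {seconds_to_generate(response_tokens, llama3_8b_tps):.1f} s")
print(f"Llama3 70B: {seconds_to_generate(response_tokens, llama3_70b_tps):.1f} s")
```

Under these assumptions the same response takes about 10 seconds on the 8B model and over a minute on the 70B model.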

Llama2 7B vs. Llama3 8B on Apple M3 Max: A Comparison of Processing Speed

| Model/Quantization | Processing Speed (tokens/second) |
|---|---|
| Llama2 7B F16 | 779.17 |
| Llama2 7B Q8_0 | 757.64 |
| Llama2 7B Q4_0 | 759.7 |
| Llama3 8B Q4_K_M | 678.04 |
| Llama3 8B F16 | 751.49 |

Key Observations:

- Both Llama2 7B and Llama3 8B reach processing speeds of roughly 680 to 780 tokens/second on the Apple M3 Max, more than ten times their token generation speeds.

- Llama2 7B is faster than Llama3 8B in processing speed, but the gap is small: about 4% at F16 (779.17 vs. 751.49) and about 11% at 4-bit quantization (759.7 vs. 678.04). This suggests that both models make good use of the parallel processing capabilities of the Apple M3 Max.
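The two metrics combine into a rough end-to-end latency estimate. The sketch below assumes that "processing speed" means the rate at which prompt tokens are consumed before generation starts, and that the two phases run one after the other; the function name and the request sizes are hypothetical.

```python
def request_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Split a request into prompt-processing time and generation time, in seconds."""
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds, generation_seconds


# Llama3 8B Q4_K_M figures from the tables in this article.
processing_tps = 678.04
generation_tps = 50.74

# Hypothetical request: a 1000-token prompt and a 200-token answer.
prompt_s, generation_s = request_latency(1000, 200, processing_tps, generation_tps)
print(f"Prompt processing: {prompt_s:.2f} s")
print(f"Generation:        {generation_s:.2f} s")
print(f"Total:             {prompt_s + generation_s:.2f} s")
```

Even with a prompt five times longer than the answer, generation dominates the total time in this example, which is why generation speed is the metric most users notice.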

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model: A Practical Guide

- For speed and efficiency: Opt for Llama2 7B with Q4_0 quantization, which had the highest token generation speed in these benchmarks (66.31 tokens/second). This is a great choice for real-time applications like chatbots or interactive text generation tools.

- For larger models and complex tasks: If you need the power of a larger model for more complex tasks, consider Llama3 8B with Q4_K_M quantization. At 50.74 tokens/second it provides a balance between performance and model size, making it suitable for tasks like summarization, translation, or code generation.

- For experimenting with different precisions: Try both Llama2 7B and Llama3 8B at F16 or Q8_0 to see the trade-offs between speed, memory use, and precision for yourself.
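The "model size" side of these recommendations can be approximated with a back-of-the-envelope estimate of weight storage. The sketch below is built on labeled assumptions: parameter counts are the nominal values from the model names, Q8_0 and Q4_0 are taken as blocks of 32 weights plus one 16-bit scale, and the Q4_K_M figure is only a rough guess because that format mixes several block types.

```python
# Approximate bits stored per weight. F16 is exact. Q8_0 and Q4_0 assume
# blocks of 32 weights plus one 16-bit scale. Q4_K_M mixes several block
# types, so 4.85 is a rough assumption, not a measured value.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}

# Nominal parameter counts taken from the model names (assumption).
NOMINAL_PARAMS = {"Llama2 7B": 7e9, "Llama3 8B": 8e9}


def weight_gigabytes(model, quant):
    """Estimate weight storage in GB (10^9 bytes), ignoring KV cache and runtime overhead."""
    return NOMINAL_PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


configs = [
    ("Llama2 7B", "F16"),
    ("Llama2 7B", "Q8_0"),
    ("Llama2 7B", "Q4_0"),
    ("Llama3 8B", "F16"),
    ("Llama3 8B", "Q4_K_M"),
]
for model, quant in configs:
    print(f"{model} {quant}: ~{weight_gigabytes(model, quant):.1f} GB")
```

Actual file sizes and memory use will differ somewhat from these estimates, so treat them as a first filter when deciding which configurations are worth downloading and benchmarking.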

Potential Workarounds for Speed Optimization

- Hardware Acceleration: Make sure inference actually runs on the available accelerator. On Apple silicon that is the integrated GPU, which llama.cpp can target through Metal; on other systems, dedicated GPUs or TPUs play the same role. These units are designed for parallel computing and can deliver significant speed gains.

- Model Optimization: Consider using model optimization techniques like quantization, model pruning, or knowledge distillation to reduce the model size and improve its efficiency.

- Fine-tuning: Fine-tune the model on specific tasks relevant to your application. This can improve both the accuracy and efficiency of the model for your specific use case.

FAQ: Understanding LLMs and Local Performance

Q: What are LLMs?

A: Large language models (LLMs) are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive amounts of data, allowing them to perform a wide range of tasks, including translation, summarization, and creative writing.

Q: Why are LLMs so popular?

A: LLMs have gained immense popularity due to their versatility and potential for revolutionizing various industries. From automating customer service to enabling personalized learning experiences, LLMs are transforming how we interact with technology.

Q: What are the challenges of running LLMs locally?

A: Running LLMs locally presents challenges related to computational resources and memory requirements. LLMs are often massive, demanding large amounts of memory and substantial compute.

Q: How can I get started with running LLMs locally?

A: Several open-source frameworks and libraries, like llama.cpp, make it easier to run LLMs locally. You can find comprehensive tutorials and documentation online to guide you through the process.
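Once a model is running, it is worth measuring generation speed on your own machine rather than relying only on published numbers. The sketch below is a small timing harness in Python; `generate` is a hypothetical callable that yields tokens one at a time and stands in for whatever runtime you use, and `fake_generate` exists only so the example runs without a model.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens):
    """Count the tokens yielded by generate() and divide by the elapsed wall-clock time."""
    start = time.perf_counter()
    count = 0
    for _ in generate(prompt, max_tokens):
        count += 1
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate(prompt, max_tokens):
    """Stand-in generator (not a real model): yields dummy tokens with a small delay."""
    for i in range(max_tokens):
        time.sleep(0.001)
        yield f"tok{i}"


count, rate = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"Generated {count} tokens at {rate:.1f} tokens/second")
```

To benchmark a real model, replace `fake_generate` with a function that streams tokens from your runtime and keep the prompt and token count fixed across runs so the results are comparable.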

Q: What are the potential benefits of running LLMs locally?

A: Running LLMs locally offers several benefits, including:

* **Privacy:** Your data remains on your device, enhancing privacy and security.

* **Offline access:** You can use the model even when you're offline, enabling greater flexibility and accessibility.

* **Faster response times:** Local processing eliminates network latency, which can mean faster responses and more interactive experiences.

Keywords:

Llama3 8B, Apple M3 Max, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, LLM, Large Language Model, Performance Analysis, Processing Speed, Model Optimization, Local LLMs, GPU, TPU, Hardware Acceleration, Use Cases, Workarounds