What You Need to Know About Llama3 8B Performance on Apple M2 Ultra

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These AI powerhouses can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But what if you want to run these models locally, on your own machine? That's where the exciting world of local LLM performance comes into play.

This article takes a close look at the performance of the Llama3 8B model on the Apple M2 Ultra chip, one of the most capable options for local LLM inference. We'll look at token generation speed benchmarks, compare them with results for other models, and explore potential use cases.

So, buckle up, fellow AI enthusiasts, as we embark on a journey to uncover the capabilities of Llama3 8B running on the M2 Ultra!

Performance Analysis: Token Generation Speed Benchmarks

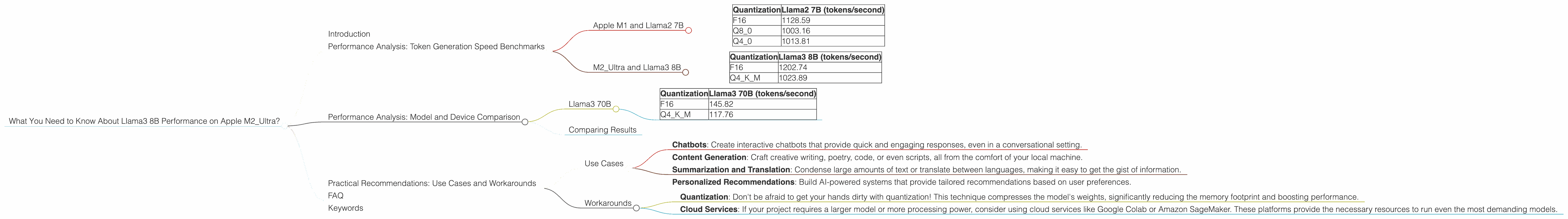

Apple M1 and Llama2 7B

Before diving into Llama3 8B on the M2 Ultra, let's take a quick peek at the performance of the earlier Llama2 7B model on the M1 chip. It's like a warm-up exercise before the main event!

| Quantization | Llama2 7B (tokens/second) |
|---|---|
| F16 | 1128.59 |
| Q8_0 | 1003.16 |
| Q4_0 | 1013.81 |

As you can see, even this older model hits impressive speeds: every format in the table exceeds 1,000 tokens per second. F16 leads at 1128.59, while Q8_0 and Q4_0 land within about 1% of each other.

M2 Ultra and Llama3 8B

Now for the moment we've been waiting for: Llama3 8B on the M2 Ultra! This is where things get really interesting.

| Quantization | Llama3 8B (tokens/second) |
|---|---|
| F16 | 1202.74 |
| Q4_K_M | 1023.89 |

Let's break down these numbers. F16 (half-precision floating point) stores each weight in 16 bits, so it serves as the unquantized baseline for quality. Q4_K_M is one of llama.cpp's 4-bit "k-quant" formats (the M marks the medium variant); it shrinks the weights to a fraction of their F16 size, with some tradeoff in accuracy. Note that in this table Q4_K_M is about 15% slower than F16 (1023.89 vs. 1202.74 tokens/second), so its payoff here is a smaller memory footprint rather than higher throughput.

Just by looking at these numbers, it's clear that the M2 Ultra is a formidable platform for running LLMs. Let's dive deeper into the comparison with other models.
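To make these throughput figures more tangible, it can help to convert them into wall-clock time. The sketch below simply divides a token count by the rates from the table; the 4096-token workload is a hypothetical value chosen for illustration, and the calculation assumes the rate stays constant, which real runs won't do exactly.

```python
# Throughput figures copied from the Llama3 8B table (tokens/second).
# "Q4_K_M" is the 4-bit row of that table.
THROUGHPUT = {"F16": 1202.74, "Q4_K_M": 1023.89}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to process `tokens` at a constant rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


workload = 4096  # hypothetical token count, for illustration only
for name, rate in THROUGHPUT.items():
    print(f"{name}: {seconds_for(workload, rate):.2f} s for {workload} tokens")
```

At these rates the two formats differ by roughly half a second on a workload of this size, which is a useful sanity check when deciding whether the speed gap matters for your application.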

Performance Analysis: Model and Device Comparison

Llama3 70B

The M2 Ultra can handle much larger models, too. Let's see how the Llama3 70B model performs on the same chip.

| Quantization | Llama3 70B (tokens/second) |
|---|---|
| F16 | 145.82 |
| Q4_K_M | 117.76 |

As you can see, while the M2 Ultra can handle this larger model, the speed drops sharply compared to Llama3 8B: from 1202.74 to 145.82 tokens/second at F16, and from 1023.89 to 117.76 at Q4_K_M. This is expected, as 70B is a much larger model with far more parameters to process for every token.
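The size of that drop can be worked out directly from the two tables. The following is a small sketch that only uses the figures reported in this article; the function name `slowdown` is made up for the example.

```python
# Figures copied from the two M2 Ultra tables in this article (tokens/second).
LLAMA3_8B = {"F16": 1202.74, "Q4_K_M": 1023.89}
LLAMA3_70B = {"F16": 145.82, "Q4_K_M": 117.76}


def slowdown(small: dict, large: dict) -> dict:
    """Ratio of small-model to large-model throughput, per shared format."""
    return {fmt: small[fmt] / large[fmt] for fmt in small if fmt in large}


ratios = slowdown(LLAMA3_8B, LLAMA3_70B)
for fmt, ratio in ratios.items():
    print(f"{fmt}: 8B is {ratio:.1f}x faster than 70B")
```

Both ratios come out between 8x and 9x, which is close to the 8.75x difference in nominal parameter count (70B vs. 8B).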

Comparing Results

Comparing Llama3 8B with Llama3 70B, the smaller model is roughly eight to nine times faster in both formats. For a developer looking for a balance between model size and speed, Llama3 8B on the M2 Ultra seems like a great choice.

Think of it like this: Llama3 8B on the M2 Ultra is a sleek, powerful sports car. It can zip around, handling complex tasks with ease. Llama3 70B on the M2 Ultra is more like a luxury SUV - great for hauling a lot of information, but perhaps not as nimble.

Practical Recommendations: Use Cases and Workarounds

Use Cases

Here are some potential use cases where the Llama3 8B model on the M2 Ultra shines:

- Chatbots: Create interactive chatbots that provide quick and engaging responses, even in a conversational setting.

- Content Generation: Craft creative writing, poetry, code, or even scripts, all from the comfort of your local machine.

- Summarization and Translation: Condense large amounts of text or translate between languages, making it easy to get the gist of information.

- Personalized Recommendations: Build AI-powered systems that provide tailored recommendations based on user preferences.

Workarounds

While the M2 Ultra is a great option for running LLMs locally, not everyone has access to this high-end chip. If you don't, these options can help:

- Quantization: Don't be afraid to get your hands dirty with quantization! This technique compresses the model's weights, significantly reducing the memory footprint so that models fit on machines with less RAM. It can also improve speed, though not in every case.

- Cloud Services: If your project requires a larger model or more processing power, consider using cloud services like Google Colab or Amazon SageMaker. These platforms provide the necessary resources to run even the most demanding models.
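To get a feel for how much memory quantization saves, here is a rough back-of-the-envelope sketch. It assumes nominal parameter counts of 8 billion and 70 billion, and uses the bits-per-weight values of llama.cpp's F16, Q8_0, and Q4_0 formats (Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights, hence 8.5 and 4.5 bits). It counts weights only, so the KV cache, activations, and file metadata are left out and real usage will be higher.

```python
# Bits stored per weight, including the per-block scale for Q8_0 and Q4_0.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Nominal parameter counts (assumption: "8B" = 8e9, "70B" = 70e9).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_gigabytes(n_params: float, fmt: str) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for model, n_params in MODELS.items():
    for fmt in BITS_PER_WEIGHT:
        print(f"{model} {fmt}: ~{weight_gigabytes(n_params, fmt):.1f} GB")
```

Under these assumptions, an 8B model shrinks from about 16 GB at F16 to about 4.5 GB at Q4_0, which is the difference between needing a high-memory workstation and fitting on a much more modest machine.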

FAQ

Q: What is an LLM?

A: An LLM (Large Language Model) is a type of Artificial Intelligence (AI) designed to process and generate human-like text. Think of it as a supercharged version of predictive text, capable of understanding and creating complex sentences, paragraphs, and even entire stories.

Q: What is quantization?

A: Quantization is like a diet for LLMs! Imagine you have a huge bag of potato chips – that's your original model. Quantization compresses those chips by reducing the number of flavors (or bits) used to represent each chip. This makes the bag smaller but can slightly alter the taste of the chips. Similarly, quantization reduces the size of an LLM but can slightly impact its accuracy.
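For readers who prefer code to potato chips, here is a toy sketch of the idea: squeeze a few floating-point weights into 4-bit integers with one shared scale, then convert them back and see how much they changed. This is a simplified illustration with made-up weights, not the scheme any particular library uses; real formats such as Q4_0 apply a separate scale to each small block of weights.

```python
def quantize(weights, bits=4):
    """Symmetric round-to-nearest quantization with a single shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak else 1.0
    # Clamp so that rounding can never leave the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.40, -0.88]  # made-up example values
codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)
worst = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest round-trip error: {worst:.3f} (bound: {scale / 2:.3f})")
```

Each weight now needs only 4 bits instead of 16 or 32, and the round-trip error is never more than half the scale. That small error is the "slightly altered taste" in the analogy above.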

Q: How do I get started with LLMs?

A: There are plenty of helpful resources online to get you started with LLMs. You can find tutorials, documentation, and even pre-trained models to experiment with.

Q: Can I train my own LLM?

A: Absolutely! You can fine-tune existing models for specific tasks or even train your own from scratch. It takes quite a bit of computing power, but it can be a rewarding experience.

Keywords

LLM, Llama3, Llama3 8B, Apple M2 Ultra, Token Generation Speed, Quantization, F16, Q4_K_M, Performance Benchmarks, Local Inference, Use Case, Chatbot, Content Generation, Summarization, Translation, Recommendation, Workarounds, Cloud Services, AI, Deep Learning, Natural Language Processing