What You Need to Know About Llama3 8B Performance on Apple M1

Introduction

The world of large language models (LLMs) is buzzing with excitement. These AI-powered marvels are capable of generating human-quality text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But running these sophisticated models often requires powerful hardware. Let's dive deep into the performance of the Llama3 8B model on the popular Apple M1 chip, exploring its capabilities and limitations.

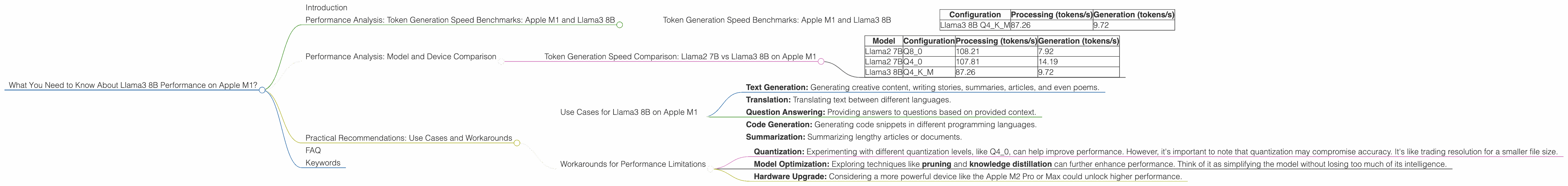

Performance Analysis: Token Generation Speed

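Token generation speed, measured in tokens per second (tokens/s), is a crucial metric for evaluating LLM performance. The benchmarks below report it for two phases: processing speed, which is how fast the model reads the input prompt, and generation speed, which is how fast it produces new output tokens.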

To understand the performance of Llama3 8B on the Apple M1, let's analyze its token generation speed benchmarks. The only available result is for a quantized configuration. Quantization is a technique that compresses the model's weights to reduce its memory footprint and improve efficiency.

Token Generation Speed Benchmarks: Apple M1 and Llama3 8B

| Configuration | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama3 8B Q4_K_M | 87.26 | 9.72 |

What does this tell us?

Llama3 8B Q4_K_M is the only Llama3 8B configuration with Apple M1 results in this data. Q4_K_M names the quantization method applied to the model: a 4-bit format, where the "K_M" suffix denotes llama.cpp's medium-sized k-quant mix. Quantization helps the model consume less memory and run faster. It's like taking a large, detailed picture and shrinking it down to save space without losing too much detail.
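To put the memory savings in numbers, here is a rough sketch of how much space the weights alone need in different formats. It assumes Llama3 8B has about 8.03 billion parameters and uses approximate bits-per-weight figures: Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights (8.5 and 4.5 bits per weight), while the Q4_K_M figure of 4.85 is an approximate average for a mixed format. It ignores the KV cache and runtime overhead, so treat the results as lower bounds.

```python
# Rough estimate of the memory needed just to hold the model weights.
# Assumptions (not from the benchmark data): ~8.03e9 parameters for Llama3 8B,
# and approximate effective bits per weight for each format.
PARAMS_LLAMA3_8B = 8.03e9

BITS_PER_WEIGHT = {
    "F16": 16.0,     # 2 bytes per weight
    "Q8_0": 8.5,     # 32 int8 values + one fp16 scale per block of 32
    "Q4_K_M": 4.85,  # mixed k-quant; approximate average (assumption)
    "Q4_0": 4.5,     # 32 4-bit values + one fp16 scale per block of 32
}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


for fmt, bpw in BITS_PER_WEIGHT.items():
    print(f"{fmt:7s} ~{weight_memory_gb(PARAMS_LLAMA3_8B, bpw):5.2f} GB")
```

Under these assumptions, F16 weights come to about 16 GB while Q4_K_M needs under 5 GB, which matters on M1 machines that ship with 8 GB or 16 GB of unified memory shared between the CPU and GPU.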

Llama3 8B Q4_K_M achieves a respectable processing speed of 87.26 tokens/s. Processing speed measures how quickly the model ingests the prompt before it starts producing an answer; at this rate, a 1,000-token prompt takes roughly 11.5 seconds.
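The arithmetic behind that estimate is simple division. The sketch below assumes the measured 87.26 tokens/s holds constant regardless of prompt length, which is a simplification: real processing speed varies with prompt length, batch size, and the inference settings used.

```python
# Estimate how long it takes to ingest a prompt at the measured
# Llama3 8B Q4_K_M processing speed on Apple M1.
# Assumption: the speed stays constant for all prompt lengths.
PROCESSING_TPS = 87.26  # tokens/s, from the benchmark table


def prompt_processing_seconds(prompt_tokens: int,
                              tokens_per_second: float = PROCESSING_TPS) -> float:
    """Seconds spent reading the prompt before the first new token appears."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return prompt_tokens / tokens_per_second


for n in (100, 1000, 4000):
    print(f"{n:5d}-token prompt: ~{prompt_processing_seconds(n):.1f} s")
```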

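The generation speed of 9.72 tokens/s is about nine times slower than processing. This gap is expected: the prompt can be processed in parallel batches, but each new token must be generated one at a time, and producing it requires reading essentially all of the model's weights from memory. Generation is therefore usually limited by memory bandwidth rather than compute. Even so, 9.72 tokens/s is a reasonable rate for an 8B-parameter model, given the M1's modest GPU and memory bandwidth.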
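A back-of-the-envelope check supports the bandwidth explanation. If every generated token streams all weights from memory once, the ceiling on generation speed is roughly bandwidth divided by model size. This sketch assumes the M1's unified memory bandwidth of about 68.25 GB/s and a Q4_K_M weight size of about 4.87 GB; it ignores KV-cache reads and other overheads, so it is an upper bound, not a prediction.

```python
# Ceiling on generation speed when decoding is memory-bandwidth-bound:
# each new token requires reading every weight once.
# Assumptions: M1 bandwidth ~68.25 GB/s; Q4_K_M weights ~4.87 GB.
M1_BANDWIDTH_GBPS = 68.25
Q4_K_M_WEIGHTS_GB = 4.87
MEASURED_GENERATION_TPS = 9.72  # tokens/s, from the benchmark table


def max_generation_tps(bandwidth_gbps: float, weights_gb: float) -> float:
    """Upper bound on tokens/s if each token reads all weights exactly once."""
    return bandwidth_gbps / weights_gb


ceiling = max_generation_tps(M1_BANDWIDTH_GBPS, Q4_K_M_WEIGHTS_GB)
print(f"theoretical ceiling: ~{ceiling:.1f} tokens/s")
print(f"measured: {MEASURED_GENERATION_TPS} tokens/s "
      f"({MEASURED_GENERATION_TPS / ceiling:.0%} of the ceiling)")
```

Under these assumptions the ceiling is roughly 14 tokens/s, so the measured 9.72 tokens/s sits at about 70% of what the memory system could deliver.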

Performance Analysis: Model and Device Comparison

To get a better sense of how Llama3 8B stacks up on the Apple M1, let's compare its performance with other LLMs and devices. Unfortunately, we don't have Apple M1 data for the 70B models or for an F16 configuration of Llama3 8B, so our comparison is limited to the available quantized results for Llama2 7B.

Token Generation Speed Comparison: Llama2 7B vs Llama3 8B on Apple M1

| Model | Configuration | Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama2 7B | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | Q4_0 | 107.81 | 14.19 |
| Llama3 8B | Q4_K_M | 87.26 | 9.72 |

Key Takeaways:

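Llama2 7B, in both its Q8_0 and Q4_0 configurations, processes prompts about 24% faster than Llama3 8B Q4_K_M (roughly 108 vs. 87 tokens/s). This difference might be attributed to the smaller size of Llama2 7B (about 6.7 billion parameters versus about 8.0 billion). Note that the models use different quantization formats, so this is not a strictly like-for-like comparison.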

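In terms of generation speed, Llama2 7B Q4_0 (14.19 tokens/s) is about 46% faster than Llama3 8B Q4_K_M (9.72 tokens/s). Llama2 7B Q8_0 (7.92 tokens/s), however, is slower than both, which suggests that the number of bits stored per weight matters at least as much as parameter count for generation speed.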

It's noteworthy that Llama3 8B is a more complex model, with a larger parameter count, which might contribute to its slower performance. Remember, building a larger model is like adding more ingredients to a cake: it might make it more delicious, but it also takes longer to bake.
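The relative differences above can be computed directly from the comparison table. This sketch uses Llama3 8B Q4_K_M as the baseline and lists an approximate bits-per-weight figure next to each format; those figures are assumptions about the storage formats, not part of the benchmark data. Ordering the rows by bits per weight makes the generation-speed pattern easier to see.

```python
# Relative speeds from the comparison table (Apple M1), versus Llama3 8B Q4_K_M.
# Bits per weight are approximate storage costs per format (assumption).
results = [
    # (model, format, processing tok/s, generation tok/s, approx. bits per weight)
    ("Llama2 7B", "Q8_0", 108.21, 7.92, 8.5),
    ("Llama2 7B", "Q4_0", 107.81, 14.19, 4.5),
    ("Llama3 8B", "Q4_K_M", 87.26, 9.72, 4.85),
]

baseline = results[-1]  # Llama3 8B Q4_K_M


def relative(value: float, reference: float) -> float:
    """Percentage difference of value versus reference."""
    return (value / reference - 1) * 100


for model, fmt, pp, tg, bpw in results:
    print(f"{model} {fmt:6s}: processing {relative(pp, baseline[2]):+6.1f}%, "
          f"generation {relative(tg, baseline[3]):+6.1f}% (~{bpw} bits/weight)")
```

Processing speed moves with parameter count (both Llama2 7B variants are about 24% faster), while generation speed tracks bits per weight: the 8.5-bit Q8_0 variant is the slowest generator even though it has fewer parameters than Llama3 8B.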

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on Apple M1

Despite some performance limitations, the Llama3 8B model on the Apple M1 is a powerful tool for various tasks. Here are some use cases:

Text Generation: Generating creative content, writing stories, summaries, articles, and even poems.

Translation: Translating text between different languages.

Question Answering: Providing answers to questions based on provided context.

Code Generation: Generating code snippets in different programming languages.

Summarization: Summarizing lengthy articles or documents.

Workarounds for Performance Limitations

Quantization: Experimenting with lower-bit quantization formats, like Q4_0, can improve speed and reduce memory use, as the Llama2 7B results suggest. However, quantization may compromise accuracy, and the loss generally grows as the bit width shrinks. It's like trading resolution for a smaller file size.
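The accuracy trade-off can be illustrated with a toy experiment. This is a simplified sketch, loosely modeled on block formats that store one scale per block of 32 weights; it is not llama.cpp's actual Q4_0, Q8_0, or Q4_K_M encoding, which differ in details such as offsets and super-blocks. It quantizes random Gaussian "weights" (a stand-in for real model weights) at 8 and 4 bits and compares the reconstruction error.

```python
import random


def quantize_block(values, bits):
    """Symmetric absmax quantization of one block: returns (scale, int codes)."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit, 127 for 8-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Map integer codes back to approximate float weights."""
    return [scale * q for q in codes]


def mean_abs_error(weights, bits, block_size=32):
    """Average absolute difference between original and reconstructed weights."""
    total = 0.0
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale, codes = quantize_block(block, bits)
        restored = dequantize_block(scale, codes)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)


random.seed(0)
# Hypothetical stand-in for a weight tensor: small Gaussian values.
weights = [random.gauss(0.0, 0.02) for _ in range(32 * 256)]
for bits in (8, 4):
    print(f"{bits}-bit: mean abs error {mean_abs_error(weights, bits):.6f}")
```

The 4-bit version stores each weight in half the space of the 8-bit version but reconstructs it less faithfully, which is the resolution-versus-size trade in miniature.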

Model Optimization: Exploring techniques like pruning and knowledge distillation can further enhance performance. Think of it as simplifying the model without losing too much of its intelligence.
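As one example of "simplifying the model," here is a minimal sketch of unstructured magnitude pruning, which zeroes out the weights with the smallest absolute values. The function name and list-based representation are hypothetical and chosen for illustration. Zeroed weights only save memory or time if the inference engine supports sparse storage or sparse kernels, which you should verify for whatever runtime you use.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of weights (unstructured)."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices sorted by absolute value; the first k are the ones to drop.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


w = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
print(magnitude_prune(w, 0.5))
```

With 50% sparsity, the three smallest-magnitude weights (0.01, 0.02, and -0.05) are set to zero and the larger ones are kept unchanged.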

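Hardware Upgrade: Considering a more powerful device like the Apple M2 Pro or M2 Max could unlock higher performance. Their higher memory bandwidth (200 GB/s and 400 GB/s, versus about 68 GB/s on the M1) should particularly benefit generation speed.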

FAQ

Q1: What is an LLM, and why should I care?

A1: An LLM, or large language model, is a type of artificial intelligence trained on a massive dataset of text. Think of it as a super-smart AI that can understand and generate human-like language. This technology has the potential to revolutionize many industries by automating tasks, improving efficiency, and creating new possibilities.

Q2: Is the Apple M1 good for running LLMs?

A2: The Apple M1 is a capable chip for running LLMs, especially smaller models like Llama3 8B. However, for larger models or more demanding tasks, you might need a more powerful device like the Apple M2 Max.

Q3: What is quantization?

A3: Quantization is a technique for compressing the size of an LLM by converting its parameters to smaller data types. It's like replacing a high-resolution image with a smaller version, preserving key features while reducing storage space.

Q4: What are the limitations of LLMs?

A4: LLMs are constantly evolving, and they still have limitations. They can sometimes generate incorrect or biased information, and they might struggle with understanding nuanced or complex concepts. It's important to use them responsibly and critically evaluate their output.

Keywords

Llama3 8B, Apple M1, LLM, Large Language Model, Token Generation Speed, Processing Speed, Generation Speed, Quantization, Q4_K_M, F16, GPU, GPU Cores, Bandwidth, Performance, Benchmarks, Use Cases, Practical Recommendations, Workarounds, Model Optimization, Pruning, Knowledge Distillation, Hardware Upgrade, AI, Artificial Intelligence