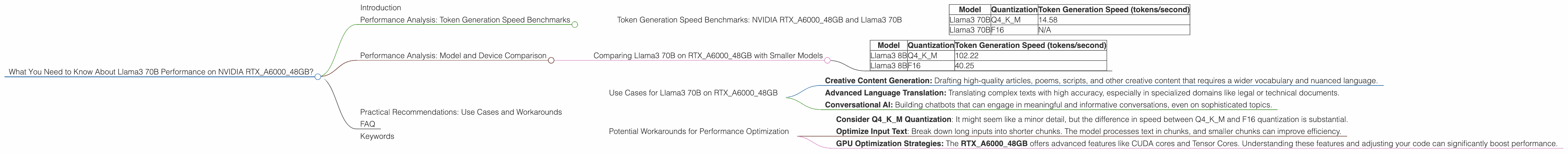

What You Need to Know About Llama3 70B Performance on NVIDIA RTX A6000 48GB?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are changing the way we interact with technology. But their computational demands can be a real challenge, especially when running them locally. That's where the NVIDIA RTX A6000 48GB comes in, a beastly graphics card designed to handle even the most demanding tasks.

This article dives deep into the performance of Llama3 70B, a state-of-the-art LLM, on the RTX A6000 48GB. We'll explore token generation speed benchmarks, compare this setup to other options, and offer practical recommendations for use cases and potential workarounds. Buckle up, geeks, it's going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

Let's cut to the chase: how fast is Llama3 70B on the RTX A6000 48GB? Remember, token generation is the process of producing output text one token at a time, where a token is a word or a fragment of a word. The speed is reported in tokens per second.

Our benchmark data shows the following results:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 14.58 |
| Llama3 70B | F16 | N/A |

What does this mean?

- Llama3 70B with Q4_K_M quantization generates about 14.58 tokens per second on the RTX A6000 48GB.

- There's no data available for Llama3 70B at F16 (16-bit floating point) precision on this specific GPU.

Remember: Quantization is a way to compress the model, making it smaller and faster, but potentially sacrificing accuracy. Q4_K_M is a 4-bit quantized format, so it is far more compact than F16, which stores each weight as a 16-bit floating-point number.
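The benchmark data does not say why the F16 row is empty. One plausible reason is memory: a back-of-the-envelope estimate of weight size can be sketched as below. The 4.8 bits-per-weight figure for Q4_K_M is an assumed approximation, and the estimate ignores the KV cache and runtime overhead, so treat the numbers as rough.

```python
# Rough weight-memory estimate: parameters * bits-per-weight / 8 bytes.
# Ignores the KV cache and runtime overhead, so real usage is higher.

GPU_MEMORY_GB = 48  # RTX A6000 48GB


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GB (1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


formats = {
    "F16": 16.0,    # 16 bits per weight by definition
    "Q4_K_M": 4.8,  # ASSUMPTION: approximate average bits per weight
}

for name, bits in formats.items():
    size = weight_memory_gb(70e9, bits)
    verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
    print(f"Llama3 70B {name}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions the F16 weights alone come to roughly 140 GB, well beyond a single 48 GB card, while the 4-bit version lands under the limit.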

Let's put this into perspective: Think of it like a typing race in which each token is a short word or word fragment rather than a single keystroke. The RTX A6000 48GB with Llama3 70B Q4_K_M can essentially "type" about 14.58 of these tokens per second.
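To turn the benchmark figure into something more tangible, the short sketch below estimates how long a response of a given length would take at 14.58 tokens per second. It assumes a constant generation rate and ignores prompt-processing time, so it is a lower bound rather than a prediction.

```python
# Estimated generation time at the benchmarked rate.
# ASSUMPTION: constant throughput, no prompt-processing time included.

TOKENS_PER_SECOND = 14.58  # Llama3 70B Q4_K_M on the RTX A6000 48GB


def generation_time_seconds(n_tokens: int,
                            tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Return the seconds needed to generate n_tokens at the given rate."""
    return n_tokens / tokens_per_second


for n in (100, 500, 1000):
    print(f"{n} tokens: about {generation_time_seconds(n):.1f} s")
```

A 500-token answer, for example, works out to a little over half a minute.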

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B on RTX A6000 48GB with Smaller Models

How does Llama3 70B stack up against its smaller sibling, Llama3 8B, on the same GPU?

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 8B | F16 | 40.25 |

Key takeaways:

- Llama3 70B is much slower than Llama3 8B on the RTX A6000 48GB: 14.58 versus 102.22 tokens per second at Q4_K_M, roughly a 7x difference.

- This is because Llama3 70B has far more parameters. Every generated token requires reading and computing with all of the model's weights, so more weights mean more work per token.

Think of it like this: Imagine you're trying to build a Lego model. Llama3 8B is like a small, simple set, easy to assemble quickly. Llama3 70B is a massive set with intricate details, taking much longer to put together.
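The comparison can be made concrete with a few lines of arithmetic on the benchmark numbers from the two tables. This is only a sketch; the dictionary layout and the helper name `speedup` are illustrative choices, not part of any benchmark tool.

```python
# Relative speeds computed from the benchmark tables in this article.

speeds = {
    ("Llama3 70B", "Q4_K_M"): 14.58,
    ("Llama3 8B", "Q4_K_M"): 102.22,
    ("Llama3 8B", "F16"): 40.25,
}


def speedup(fast: tuple, slow: tuple) -> float:
    """Return how many times faster the `fast` setup is than the `slow` one."""
    return speeds[fast] / speeds[slow]


size_speedup = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
quant_speedup = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))
param_ratio = 70 / 8  # nominal parameter counts, in billions

print(f"8B vs 70B at Q4_K_M: {size_speedup:.2f}x faster")
print(f"Q4_K_M vs F16 on 8B: {quant_speedup:.2f}x faster")
print(f"Parameter ratio 70B/8B: {param_ratio:.2f}x")
```

The speed gap between the two models (about 7x) is somewhat smaller than the gap in parameter count (8.75x).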

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on RTX A6000 48GB

Despite being slower than the smaller model, Llama3 70B at about 14.58 tokens per second remains practical on the RTX A6000 48GB for workloads where output quality matters more than raw speed. Here are some ideal use cases:

- Creative Content Generation: Drafting high-quality articles, poems, scripts, and other creative content that requires a wider vocabulary and nuanced language.

- Advanced Language Translation: Translating complex texts with high accuracy, especially in specialized domains like legal or technical documents.

- Conversational AI: Building chatbots that can engage in meaningful and informative conversations, even on sophisticated topics.

Potential Workarounds for Performance Optimization

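Consider Q4_K_M Quantization: It might seem like a minor detail, but the difference in speed between Q4_K_M and F16 is substantial. On Llama3 8B, Q4_K_M reaches 102.22 tokens per second versus 40.25 for F16, about 2.5 times faster.

Optimize Input Text: Keep prompts as short as the task allows. Long inputs take longer to process before the first output token appears, so breaking a long document into smaller chunks and sending only the relevant ones can reduce latency.

GPU Optimization Strategies: The RTX A6000 48GB offers advanced features like CUDA cores and Tensor Cores. Make sure your inference software is built with GPU support and actually runs the model on the GPU; a setup that falls back to the CPU will not reach the speeds shown above.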
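As a minimal sketch of the chunking idea, the helper below splits text into pieces of at most a fixed number of words. The function name `chunk_words` is hypothetical, and counting whitespace-separated words is only a rough proxy for tokens; whether chunking helps at all depends on the workload.

```python
# Split a long input into word-bounded chunks.
# ASSUMPTION: words are used as a rough stand-in for tokens.


def chunk_words(text: str, max_words: int) -> list[str]:
    """Return consecutive chunks of `text`, each with at most `max_words` words."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


document = "Large language models process their input before generating any output"
for chunk in chunk_words(document, 4):
    print(chunk)
```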

FAQ

Q: What is an LLM?

A: An LLM, or Large Language Model, is a sophisticated AI system designed to generate human-like text. It's trained on massive datasets of text and code, allowing it to perform tasks like writing, translation, and answering questions.

Q: What is Token Generation Speed?

A: Token generation speed is the rate at which an LLM produces output tokens (words or word fragments), usually measured in tokens per second. It is one of the main metrics for comparing how responsive an LLM feels on a given piece of hardware.
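A tokens-per-second figure can be measured by timing how long a model takes to stream a known number of tokens. The sketch below shows the idea with a hypothetical stand-in, `fake_stream`, in place of a real model; it is not the method used to produce the benchmarks in this article, and a real measurement would usually separate prompt processing from generation.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(stream_tokens: Callable[[str], Iterable[str]],
                              prompt: str) -> float:
    """Time a token stream and return tokens generated per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in stream_tokens(prompt))  # consume the whole stream
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise RuntimeError("elapsed time too small to measure")
    return n_tokens / elapsed


def fake_stream(prompt: str) -> Iterable[str]:
    """HYPOTHETICAL stand-in for a model: yields 20 tokens about 10 ms apart."""
    for i in range(20):
        time.sleep(0.01)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_stream, "Hello")
print(f"Measured about {rate:.1f} tokens/second")
```

To benchmark a real setup, replace `fake_stream` with a function that yields tokens from your own inference library.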

Q: What is Quantization?

A: Quantization is a technique used to compress LLMs, making them smaller and potentially faster. The trade-off is that quantization can sometimes reduce the accuracy of the model.
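The trade-off can be seen in a toy example. The sketch below applies simple symmetric 4-bit quantization to a handful of made-up weights and measures the rounding error after converting back. It is a deliberately simplified illustration, not the Q4_K_M scheme itself, which is more elaborate.

```python
# Toy symmetric 4-bit quantization: floats -> small integers -> floats.
# NOT the Q4_K_M algorithm; a simplified illustration with made-up weights.


def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map each weight to an integer code in [-7, 7] plus a shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.33]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits instead of 16, at the cost of a small rounding error per weight.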

Q: What are the limitations of running LLMs locally?

A: Running LLMs locally can be resource-intensive, requiring powerful hardware like high-end GPUs. If you don't have the right equipment, it can be challenging to run these models effectively.

Keywords

Llama3 70B, NVIDIA RTX A6000 48GB, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, performance, benchmarks, use cases, workarounds, GPU optimization, creative content generation, advanced language translation, conversational AI.