What You Need to Know About Llama3 70B Performance on NVIDIA RTX 6000 Ada 48GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But with great power comes great... computational resource needs. That's where local LLMs come into play: running the model on your own hardware lets you use the processing power you already have.

In this deep dive, we'll focus on the performance of the Llama3 70B model on the NVIDIA RTX 6000 Ada 48GB GPU. This combination makes it possible to run an advanced LLM locally, so you can explore language generation without relying on cloud services.

Performance Analysis: Token Generation Speed Benchmarks

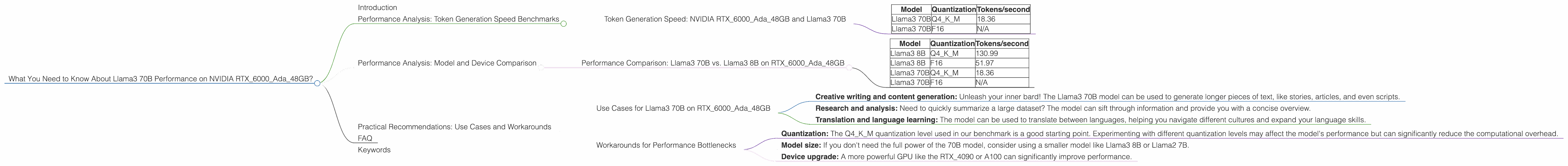

Token Generation Speed: NVIDIA RTX 6000 Ada 48GB and Llama3 70B

Let's cut to the chase - how many tokens can this dynamic duo spit out per second?

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama3 70B | Q4_K_M | 18.36 |
| Llama3 70B | F16 | N/A |

- Q4_K_M is a 4-bit quantization format. It shrinks the model by storing each weight with fewer bits, so the model fits on devices with limited memory.
- N/A means no result was reported for that configuration.
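To make the size reduction concrete, here is a rough back-of-the-envelope sketch of how much memory the 70B weights need at each precision. The parameter count (70 billion) and the average of about 4.85 bits per weight for the 4-bit format are assumptions for illustration, and the estimate ignores the KV cache and runtime buffers. Under those assumptions, the missing F16 result is consistent with the weights simply not fitting in 48 GB, although the benchmark itself does not state the reason.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# All constants are illustrative assumptions, not measured values.

PARAMS_70B = 70e9   # approximate parameter count of Llama3 70B (assumed)
VRAM_GB = 48        # memory of the RTX 6000 Ada, from the article title

BITS_PER_WEIGHT = {
    "F16": 16.0,      # two bytes per weight
    "Q4_K_M": 4.85,   # assumed average; the real figure varies by model
}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(PARAMS_70B, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

At 2 bytes per weight the 70B model needs about 140 GB for the weights alone, while the 4-bit estimate lands in the low 40s of gigabytes.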

Token generation speed: This metric tells us how fast a model can produce text. Think of it like the speed of a typist - the higher the tokens per second, the faster the model can churn out words.

A quick analogy: Imagine you're writing a novel. If you can type 10 words per minute, it might take you a long time to finish. But with a faster typing speed, you could churn out those chapters much faster.
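To turn tokens per second into something you can feel, the sketch below converts the measured rate into waiting time for a reply of a given length. The reply lengths are arbitrary examples, and the calculation ignores prompt processing time, which is a simplifying assumption.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Return how long it takes to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


RATE_70B_4BIT = 18.36  # tokens/second, from the benchmark table

# Example reply lengths (hypothetical): a short answer, a long answer, an essay.
for reply_tokens in (100, 500, 2000):
    wait = seconds_to_generate(reply_tokens, RATE_70B_4BIT)
    print(f"{reply_tokens:>5} tokens: about {wait:.1f} s")
```

At this rate a 500-token reply takes roughly 27 seconds to finish.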

Key takeaway: At 18.36 tokens/second, Llama3 70B on the RTX 6000 Ada 48GB has a respectable token generation speed for a 70B model, but it still falls well short of what smaller models reach on the same card. This is expected, since a larger model has more weights to process for every generated token.

Performance Analysis: Model and Device Comparison

Performance Comparison: Llama3 70B vs. Llama3 8B on RTX 6000 Ada 48GB

Here's a quick comparison of Llama3 70B and its smaller sibling, Llama3 8B, on the same GPU:

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama3 8B | Q4_K_M | 130.99 |
| Llama3 8B | F16 | 51.97 |
| Llama3 70B | Q4_K_M | 18.36 |
| Llama3 70B | F16 | N/A |

Key takeaway: As expected, the smaller 8B model generates tokens much faster: 130.99 versus 18.36 tokens/second at the same 4-bit quantization, roughly 7 times the speed. It has a much smaller memory footprint and needs far less work per generated token.
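The gap is easy to quantify from the table. This small sketch divides each measured rate by the 70B 4-bit result; the dictionary simply restates the benchmark numbers, and the `speedup` helper is a name introduced here for illustration.

```python
# Measured tokens/second from the comparison table (N/A row omitted).
RESULTS = {
    ("Llama3 8B", "4-bit"): 130.99,
    ("Llama3 8B", "F16"): 51.97,
    ("Llama3 70B", "4-bit"): 18.36,
}


def speedup(rate: float, baseline: float) -> float:
    """Return how many times faster `rate` is than `baseline`."""
    return rate / baseline


baseline = RESULTS[("Llama3 70B", "4-bit")]
for (model, quant), rate in RESULTS.items():
    print(f"{model} {quant}: {speedup(rate, baseline):.2f}x the 70B 4-bit rate")
```

Even the unquantized F16 version of the 8B model comes out ahead of the quantized 70B model.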

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on RTX 6000 Ada 48GB

Even though Llama3 70B on the RTX 6000 Ada 48GB isn't blazing fast, it's still a powerful combination for certain use cases. Here are some ideas:

- Creative writing and content generation: Unleash your inner bard! The Llama3 70B model can be used to generate longer pieces of text, like stories, articles, and even scripts.

- Research and analysis: Need to quickly summarize a large dataset? The model can sift through information and provide you with a concise overview.

- Translation and language learning: The model can be used to translate between languages, helping you navigate different cultures and expand your language skills.

Workarounds for Performance Bottlenecks

If you're facing performance bottlenecks with the Llama3 70B model, here are some strategies to consider:

- Quantization: The Q4_K_M quantization level used in our benchmark is a good starting point. Other levels trade output quality against memory use and speed: fewer bits per weight reduce the memory and compute load but may degrade the model's answers.

- Model size: If you don't need the full power of the 70B model, consider using a smaller model like Llama3 8B or Llama2 7B.

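- Device upgrade: A GPU with more memory or higher memory bandwidth, such as an A100 with 80 GB, can significantly improve performance. An RTX 4090 is fast but has only 24 GB of VRAM, so a single card cannot hold the 4-bit 70B weights without offloading part of the model to the CPU or adding a second GPU.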
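When weighing these options, a quick check of which model and quantization combinations fit on which card can save a wasted download. The sketch below is a rough filter under stated assumptions: round parameter counts, about 4.85 bits per weight for the 4-bit format, the 80 GB variant of the A100, and 10% of VRAM held back for the KV cache and runtime buffers. None of these constants come from the benchmark.

```python
from itertools import product

# Illustrative assumptions, not benchmark data.
GPUS_GB = {"RTX 6000 Ada": 48, "RTX 4090": 24, "A100 80GB": 80}
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}   # approximate parameter counts
BITS = {"F16": 16.0, "Q4_K_M": 4.85}              # assumed bits per weight
HEADROOM = 0.9  # use at most 90% of VRAM for weights (assumed)


def fits(params: float, bits: float, vram_gb: float,
         headroom: float = HEADROOM) -> bool:
    """Return True if the weights fit in the usable share of VRAM."""
    weights_gb = params * bits / 8 / 1e9
    return weights_gb <= vram_gb * headroom


for (gpu, vram), (model, params), (quant, bits) in product(
        GPUS_GB.items(), MODELS.items(), BITS.items()):
    status = "fits" if fits(params, bits, vram) else "too large"
    print(f"{gpu:<13} {model:<11} {quant:<7} {status}")
```

Under these assumptions the 4-bit 70B model fits on the 48 GB and 80 GB cards but not on a single 24 GB card, and the F16 70B model fits on none of them.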

FAQ

Q: What is an LLM, and why should developers care?

A: LLMs are artificial intelligence models trained on massive datasets of text. Think of them as super-smart language users, capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. For developers, LLMs open doors to new applications, allowing you to build AI-powered products that can understand and interact with language in unprecedented ways.

Q: What does quantization mean, and how does it impact performance?

A: Quantization is a technique that reduces the size of an LLM by representing each number with fewer bits. This helps the model to run more efficiently on devices with limited memory and processing power. Imagine it like compressing a video file - you lose some detail, but the file becomes much smaller and easier to download.
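To see the "fewer bits, some lost detail" trade-off in miniature, here is a simplified sketch that quantizes a handful of weights to 4-bit integers with one shared scale and then restores them. This is a generic symmetric scheme chosen for clarity, not the actual Q4_K_M format, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Quantize a block of floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0       # avoid dividing by zero
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Map the integer codes back to approximate float values."""
    return [c * scale for c in codes]


# Hypothetical weights for illustration only.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.02, -0.78, 0.45]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs 4 bits plus a share of the block's scale instead of 16 bits, and the price is a rounding error of at most half a quantization step per weight.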

Q: Which device is best for running LLMs locally?

A: There's no one-size-fits-all answer. The best device depends on your specific needs and the size of the LLM you're using. For smaller models, even a powerful CPU might be sufficient. However, for larger models, a dedicated GPU will significantly improve performance.

Keywords

Llama3 70B, NVIDIA RTX6000Ada_48GB, LLM, local LLM, performance, token generation speed, quantization, GPU, deep dive, benchmarks, data analysis, use cases, workarounds, developers, geeks, AI, natural language processing, AI models