What You Need to Know About Llama3 70B Performance on the NVIDIA RTX 5000 Ada 32GB

Introduction: Dive Deep into the World of Local LLMs

The world of Large Language Models (LLMs) is getting bigger, better, and... well, larger! While the cloud offers powerful and accessible LLMs like ChatGPT and Bard, running LLMs locally on your own machine gives you control, privacy, and a whole lot of fun. But can your hardware handle the heat?

In this deep dive, we focus on the NVIDIA RTX 5000 Ada 32GB and its performance with Llama3 70B, a cutting-edge LLM that's eager to make its mark. We'll look at token generation speed and compare different quantized configurations. Let's dive in!

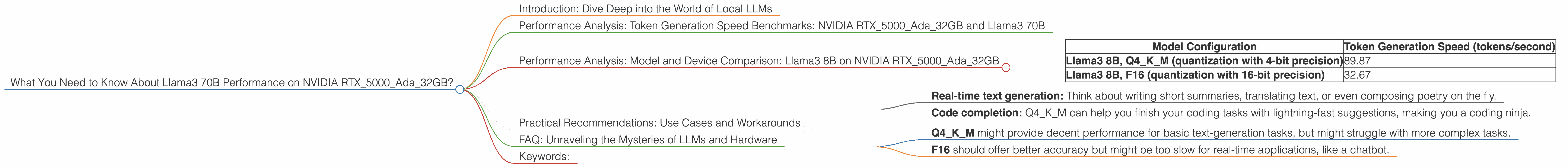

Performance Analysis: Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 70B

Unfortunately, we don't have performance numbers for Llama3 70B on the NVIDIA RTX 5000 Ada 32GB just yet; the data we have covers Llama3 8B. Squeezing the 70B model onto this card is like trying to fit a 70-passenger bus into a parking spot meant for a compact car: its weights take roughly 140 GB at 16-bit precision and still around 40 GB at 4-bit precision, more than the card's 32 GB of VRAM. We need more data to see how the RTX 5000 Ada performs with this heavyweight LLM.
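To put rough numbers on that squeeze, here is a back-of-the-envelope sketch of how much memory Llama3 70B's weights alone would need. It assumes a nominal 70 billion parameters and approximate average bits per weight (about 4.8 for Q4_K_M); it ignores the KV cache, activations, and runtime overhead, all of which only make the fit tighter.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 32      # RTX 5000 Ada 32GB
N_PARAMS = 70e9   # nominal parameter count for Llama3 70B (assumption)

# Approximate average bits per weight (assumptions; real GGUF files vary slightly).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

for name, bpw in BITS_PER_WEIGHT.items():
    size_gb = weight_memory_gb(N_PARAMS, bpw)
    verdict = "fits" if size_gb <= VRAM_GB else "does not fit"
    print(f"Llama3 70B {name}: ~{size_gb:.0f} GB of weights, {verdict} in {VRAM_GB} GB of VRAM")
```

Even the 4-bit estimate is larger than the card's memory, so some of the model would have to live in system RAM.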

However, we can still analyze the performance of Llama3 8B, offering valuable insights into the capabilities of this GPU and how it might handle the larger 70B model.

Performance Analysis: Model and Device Comparison: Llama3 8B on NVIDIA RTX 5000 Ada 32GB

For comparison, we'll look at Llama3 8B at two precisions: F16 (16-bit floating point, essentially the unquantized model) and Q4_K_M (4-bit quantization). Quantization reduces the model's size so it runs more smoothly on less powerful hardware. Think of it like squeezing a giant sandwich to make it fit in your lunchbox.

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B, Q4_K_M (4-bit quantization) | 89.87 |
| Llama3 8B, F16 (16-bit floating point) | 32.67 |

Looking at these numbers, Llama3 8B with Q4_K_M generates tokens about 2.75 times faster than the F16 version (89.87 vs. 32.67 tokens/second). The main reason is memory traffic: generating each token requires reading essentially all of the model's weights from VRAM, and the 4-bit weights are roughly a third the size of the 16-bit ones, so the GPU spends far less time moving data. In plain English, the Q4_K_M version is like a turbocharged engine for your LLM!
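A simple way to sanity-check that explanation is to compare the measured speeds with a memory-bandwidth ceiling: if every token must read all the weights, tokens per second cannot exceed bandwidth divided by model size. The sketch below assumes 576 GB/s for the RTX 5000 Ada (NVIDIA's published figure) and approximate Llama3 8B file sizes of 4.9 GB (Q4_K_M) and 16.1 GB (F16); all three numbers are assumptions, not measurements from this article.

```python
# Single-stream token generation is largely memory-bandwidth bound:
# each new token reads (roughly) every weight once, so
#   tokens/s <= memory bandwidth / size of the weights.

BANDWIDTH_GB_S = 576.0  # assumed: NVIDIA's published figure for the RTX 5000 Ada

# Approximate Llama3 8B weight sizes in GB (assumptions based on typical GGUF files).
WEIGHTS_GB = {"Q4_K_M": 4.9, "F16": 16.1}

# Measured token generation speeds from the table above.
MEASURED_TOK_S = {"Q4_K_M": 89.87, "F16": 32.67}


def bandwidth_ceiling(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if reading the weights were the only cost."""
    return bandwidth_gb_s / weights_gb


for name, size_gb in WEIGHTS_GB.items():
    ceiling = bandwidth_ceiling(size_gb)
    measured = MEASURED_TOK_S[name]
    print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of the ceiling)")

speedup = MEASURED_TOK_S["Q4_K_M"] / MEASURED_TOK_S["F16"]
print(f"Measured Q4_K_M speedup over F16: {speedup:.2f}x")
```

Under these assumptions, both measured speeds sit below their ceilings, and the ratio of the two ceilings (about 3.3x) is in the same ballpark as the measured 2.75x speedup.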

Practical Recommendations: Use Cases and Workarounds

The Q4_K_M configuration of Llama3 8B on the RTX 5000 Ada 32GB could be a great choice for tasks that require quick responses and low latency, such as:

- Real-time text generation: Think about writing short summaries, translating text, or even composing poetry on the fly.

- Code completion: Q4_K_M can help you finish your coding tasks with lightning-fast suggestions, making you a coding ninja.
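To make "low latency" concrete, the sketch below converts the measured Llama3 8B speeds into the time needed to generate a response. The 200-token response length is a hypothetical example, and prompt processing time is ignored, so real end-to-end latency will be somewhat higher.

```python
# Rough generation-time estimate using the measured Llama3 8B speeds.
# Prompt processing (prefill) time is ignored here (assumption).

MEASURED_TOK_S = {"Q4_K_M": 89.87, "F16": 32.67}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady tokens_per_second rate."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 200  # hypothetical length of a short summary or code suggestion

for name, tok_s in MEASURED_TOK_S.items():
    secs = generation_seconds(RESPONSE_TOKENS, tok_s)
    print(f"{name}: ~{secs:.1f} s for {RESPONSE_TOKENS} tokens")
```

At these rates a 200-token answer takes a little over two seconds with Q4_K_M versus about six seconds with F16.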

However, it's important to note that the accuracy and quality of the results might be affected by the reduced precision of the quantized model. If you need the highest level of accuracy, the F16 version might be a better option, even though it will be slower.

For Llama3 70B on the RTX 5000 Ada 32GB, we'll have to wait for performance data. Based on the Llama3 8B numbers and the size of the 70B model, though, we can make some educated guesses:

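- Q4_K_M is the more realistic option, but its roughly 40 GB of weights still exceed the card's 32 GB of VRAM, so some layers would have to run from system RAM. It might be usable for basic text generation, but expect it to be much slower than the 8B model.

- F16 should offer better accuracy, but at roughly 140 GB of weights it would run mostly from system RAM and would likely be far too slow for real-time applications such as a chatbot.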
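These guesses can be framed with a crude ceiling estimate for a split setup, where the layers that fit stay in VRAM and the rest are read from system RAM on every token. Everything here is an assumption: 576 GB/s of GPU bandwidth, a hypothetical 80 GB/s of system RAM bandwidth, 28 GB of the card available for weights, and weight sizes of about 42 GB (Q4_K_M) and 140 GB (F16). It is an order-of-magnitude sketch, not a benchmark.

```python
# Crude ceiling for Llama3 70B token generation when the weights are split
# between GPU memory and system RAM. Every token still reads all weights, so
# the time per token is roughly the sum of the two read times.

GPU_BW_GB_S = 576.0   # assumed RTX 5000 Ada memory bandwidth
CPU_BW_GB_S = 80.0    # hypothetical system RAM bandwidth (varies widely by platform)
GPU_BUDGET_GB = 28.0  # hypothetical share of the 32 GB left for weights


def offload_ceiling_tok_s(weights_gb: float) -> float:
    """Upper bound on tokens/s with as many weights as possible kept on the GPU."""
    on_gpu = min(weights_gb, GPU_BUDGET_GB)
    in_ram = weights_gb - on_gpu
    seconds_per_token = on_gpu / GPU_BW_GB_S + in_ram / CPU_BW_GB_S
    return 1.0 / seconds_per_token


# Approximate Llama3 70B weight sizes in GB (assumptions).
for name, size_gb in {"Q4_K_M": 42.0, "F16": 140.0}.items():
    print(f"{name}: ceiling ~{offload_ceiling_tok_s(size_gb):.1f} tok/s")
```

With these inputs, the portion held in system RAM dominates the time per token, which is why even Q4_K_M lands in single-digit tokens per second and F16 below one.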

FAQ: Unraveling the Mysteries of LLMs and Hardware

Q: What is quantization?

A: Quantization is like a diet for your LLM. By using fewer bits (e.g., 4-bit instead of 16-bit) to represent the model's parameters, you make it smaller and faster, at the cost of some accuracy.
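To see where the accuracy cost comes from, here is a toy quantizer that stores a block of weights as 4-bit integers plus one shared scale, then reconstructs them. It is a simplified symmetric "absmax" scheme chosen for illustration, not the format llama.cpp actually uses, and the weight values are made up.

```python
# Toy 4-bit quantization: one float scale per block, each weight stored as a
# small signed integer. Simplified illustration only, NOT llama.cpp's Q4_K_M.

def quantize_block_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to signed 4-bit integers in [-7, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes: list[int], scale: float) -> list[float]:
    """Reconstruct approximate weights from the 4-bit codes and the scale."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.35]
codes, scale = quantize_block_4bit(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", codes)
print("max reconstruction error:", round(max_error, 4))
```

Each restored value differs from the original by at most half the scale; that rounding error is the precision you trade away for storing each weight in 4 bits instead of 16.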

Q: What does "Q4KM" mean?

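A: It's one of the quantization types used in llama.cpp's GGUF format. "Q4" means the weights are stored at roughly 4 bits each, "K" refers to llama.cpp's k-quant scheme, which quantizes weights in blocks that carry their own scale factors, and "M" marks the medium-size variant, which keeps some tensors at higher precision than the small ("S") variant.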
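The block layout explains why a "4-bit" model is a bit larger than 4 bits per weight. The sketch below does the arithmetic for one Q4_K super-block, using the field sizes as we understand llama.cpp's `block_q4_K` struct (256 weights, two fp16 super-block values, 12 bytes of packed 6-bit sub-block scales and mins, and 4-bit quants); treat these sizes as assumptions to verify against the llama.cpp source.

```python
# Bits-per-weight arithmetic for one Q4_K super-block
# (field sizes assumed from llama.cpp's block_q4_K layout).

WEIGHTS_PER_SUPER_BLOCK = 256

q4_k_bytes = {
    "d (fp16 super-block scale)": 2,
    "dmin (fp16 super-block min)": 2,
    "scales (8 sub-blocks x 6-bit scale + 6-bit min, packed)": 12,
    "qs (256 weights x 4 bits)": WEIGHTS_PER_SUPER_BLOCK * 4 // 8,
}

total_bytes = sum(q4_k_bytes.values())
bits_per_weight = total_bytes * 8 / WEIGHTS_PER_SUPER_BLOCK
print(f"Q4_K: {total_bytes} bytes per {WEIGHTS_PER_SUPER_BLOCK} weights "
      f"= {bits_per_weight} bits per weight")
```

As we understand it, the "M" variant then stores some tensors in a higher-precision type, which is why Q4_K_M files average a little more than this figure.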

Q: How can I get Llama3 70B running on my RTX 5000 Ada 32GB?

A: Stay tuned! We'll update this article as soon as performance data becomes available. In the meantime, the llama.cpp GitHub repository explains how to download and run Llama3 models.
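If you want to experiment before official numbers arrive, llama.cpp can split a model between GPU and CPU with its `--n-gpu-layers` option. The hypothetical helper below estimates a starting value for it, assuming Llama3 70B's 80 transformer layers are equally sized, a Q4_K_M file of about 42 GB, and 4 GB of VRAM held back for the KV cache and runtime overhead.

```python
import math

# Hypothetical helper: estimate how many transformer layers could be offloaded
# to the GPU (a starting value for llama.cpp's --n-gpu-layers option).
# Assumes all layers are the same size and ignores embedding/output weights.

N_LAYERS = 80  # Llama3 70B transformer layers


def estimate_gpu_layers(model_gb: float, vram_gb: float, reserve_gb: float) -> int:
    """Number of equally sized layers that fit in VRAM after holding back
    reserve_gb for the KV cache and runtime overhead."""
    per_layer_gb = model_gb / N_LAYERS
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(N_LAYERS, math.floor(usable_gb / per_layer_gb))


# Assumed inputs: ~42 GB Q4_K_M file, 32 GB card, 4 GB held back.
print(estimate_gpu_layers(model_gb=42.0, vram_gb=32.0, reserve_gb=4.0))
```

With these assumptions it suggests offloading about 53 of the 80 layers; if loading runs out of memory, lower the number and try again.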

Q: Why should I care about local LLMs?

A: Local LLMs offer privacy, customization, and offline access, making them perfect for projects where data privacy is critical or when you need to work without an internet connection. They also allow for greater control over the model's behavior.

Keywords:

Llama3 70B, NVIDIA RTX 5000 Ada 32GB, LLM, Local LLMs, Performance, Token Generation Speed, Quantization, Q4_K_M, F16, GPU, Model Size, Accuracy, Use Cases, Practical Recommendations