What You Need to Know About Llama3 70B Performance on NVIDIA RTX 4000 Ada 20GB?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But what if you want to run these models locally on your own machine? That's where performance benchmarks come in, helping us understand how different devices handle these computationally demanding tasks.

Today, we're diving deep into the performance of Llama3 70B on the NVIDIA RTX 4000 Ada 20GB graphics card. This exploration will shed light on the real-world capabilities of Llama3 70B and help you determine if this setup is a good fit for your projects. Let's get our geek on and delve into the numbers!

Performance Analysis: Token Generation Speed Benchmarks

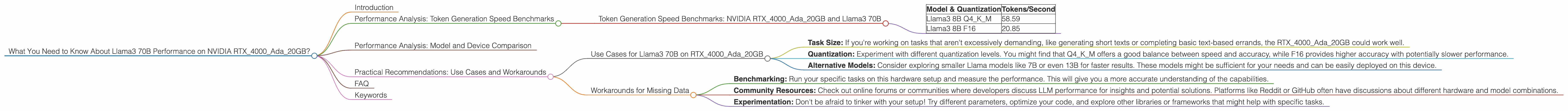

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 70B

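First things first, let's take a look at how fast the NVIDIA RTX 4000 Ada 20GB can spit out tokens when running the Llama3 70B model. Unfortunately, no data is available for the Llama3 70B model on this particular hardware configuration. 😔 That is not surprising: at 16 bits per weight, 70 billion parameters take roughly 140 GB, and even at 4 bits per weight they take roughly 35 GB, both well beyond the card's 20 GB of VRAM.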

Here's what we do have:

| Model & Quantization | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M | 58.59 |
| Llama3 8B F16 | 20.85 |

This means that the RTX 4000 Ada 20GB runs the Llama3 8B model comfortably, with generation speed depending heavily on the chosen quantization.

What is Quantization? Imagine you have a picture with millions of colors. You can compress the image by reducing the number of colors, making the file smaller. Quantization does the same with LLMs! It stores each model weight with fewer bits, so the model takes less memory and less data has to be moved for every token, at the cost of a slight loss in accuracy.

Q4_K_M is one of llama.cpp's 4-bit "k-quant" formats, which store weights in small blocks with shared scale factors (the M marks the medium-size variant), while F16 stands for half-precision (16-bit) floating point numbers.
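
To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a deliberately simplified illustration, not the actual Q4_K_M algorithm: the function names, the single scale per block, and the sample weights are all assumptions made for this example.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integers that fit in `bits` bits, using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical block of weights, chosen only for illustration.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("quantized integers:", q)
print(f"scale={scale:.4f}, max round-trip error={max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the per-block scale, and the round-trip error stays within half a quantization step. Real formats add refinements on top of this basic idea.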

Based on the available data, Q4_K_M quantization generates tokens about 2.8 times faster than F16 on this card (58.59 versus 20.85 tokens per second).
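
The gap between the two table rows is easy to quantify. The short sketch below uses only the two measured values from the table; the dictionary layout and variable names are just illustrative choices.

```python
# Tokens/second values taken from the benchmark table above.
benchmarks = {
    "Llama3 8B Q4_K_M": 58.59,
    "Llama3 8B F16": 20.85,
}

speedup = benchmarks["Llama3 8B Q4_K_M"] / benchmarks["Llama3 8B F16"]
print(f"Q4_K_M is about {speedup:.2f}x faster than F16")

# Average time spent per token, in milliseconds.
for name, tokens_per_second in benchmarks.items():
    print(f"{name}: {1000 / tokens_per_second:.1f} ms per token")
```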

Performance Analysis: Model and Device Comparison

Important: It's essential to note that comparing different models and devices can be tricky. The performance of an LLM is influenced by multiple factors, including the underlying architecture, training data, and even the tasks being executed.

While we don't have data for Llama3 70B on the RTX 4000 Ada 20GB, the available numbers for Llama3 8B provide a glimpse into the capabilities of this hardware. The card reaches nearly 59 tokens per second with the 8B model at Q4_K_M, which makes it well suited to interactive use and small-to-medium workloads with models of that size.

Practical Recommendations: Use Cases and Workarounds

While the lack of specific data for Llama3 70B on the RTX 4000 Ada 20GB poses a challenge, we can still draw some practical conclusions.

Use Cases for Llama3 70B on RTX 4000 Ada 20GB

The RTX 4000 Ada 20GB is a capable GPU, but the weights of Llama3 70B do not fit in its 20 GB of VRAM, so any 70B run on this card would have to keep part of the model in system RAM and would be far slower than the 8B numbers above. With that in mind, some considerations apply:

- Task Size: If your workload is light, such as generating short texts or handling simple text tasks where slow responses are acceptable, the RTX 4000 Ada 20GB could still work.

- Quantization: Experiment with different quantization levels. You might find that Q4_K_M offers a good balance between speed and accuracy, while F16 preserves more accuracy at lower speed and much higher memory use.

- Alternative Models: Consider a smaller model such as Llama3 8B for faster results. It fits on this card, reached roughly 21 to 59 tokens per second in the benchmarks above, and might be sufficient for your needs.
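
To gauge whether a given model and quantization can fit on the card at all, a rough estimate of the weight size is a useful first check. The sketch below assumes nominal bit widths (16 for F16, 4 for 4-bit quantization) and counts only the weights; real 4-bit formats use slightly more than 4 bits per weight, and the KV cache and activations need additional memory, so treat the results as lower bounds. The helper name is hypothetical.

```python
VRAM_GB = 20  # memory available on the RTX 4000 Ada 20GB


def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in decimal gigabytes."""
    return params_billion * bits_per_weight / 8


# (model name, parameters in billions, nominal bits per weight)
candidates = [
    ("Llama3 8B", 8, 16),
    ("Llama3 8B", 8, 4),
    ("Llama3 70B", 70, 16),
    ("Llama3 70B", 70, 4),
]

report = []
for name, params_billion, bits in candidates:
    size_gb = weight_memory_gb(params_billion, bits)
    fits = size_gb < VRAM_GB
    report.append((name, bits, size_gb, fits))
    print(f"{name} at {bits}-bit: ~{size_gb:.0f} GB of weights, fits in VRAM: {fits}")
```

Under these assumptions, both 8B variants fit in 20 GB while neither 70B variant does, which matches the benchmark data being available only for the 8B model.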

Workarounds for Missing Data

If you need to work with Llama3 70B on the RTX 4000 Ada 20GB, here are some strategies:

- Benchmarking: Run your specific tasks on this hardware setup and measure the performance. This will give you a more accurate understanding of the capabilities.

- Community Resources: Check out online forums or communities where developers discuss LLM performance for insights and potential solutions. Platforms like Reddit or GitHub often have discussions about different hardware and model combinations.

- Experimentation: Don't be afraid to tinker with your setup! Try different parameters, optimize your code, and explore other libraries or frameworks that might help with specific tasks.
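
For the benchmarking step, a small timing harness is often enough to get a tokens-per-second figure for your own workload. The sketch below is one possible approach: `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is only a stand-in that sleeps instead of running a model. Replace it with a call into whatever inference library you use, returning the number of tokens it produced.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens, runs=3):
    """Time a generation callable and return the average tokens/second over several runs."""
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        n_tokens = generate(prompt, max_tokens)
        elapsed = time.perf_counter() - start
        rates.append(n_tokens / elapsed)
    return sum(rates) / len(rates)


def fake_generate(prompt, max_tokens):
    """Hypothetical stand-in for a real model call: sleeps about 1 ms per token."""
    for _ in range(max_tokens):
        time.sleep(0.001)
    return max_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/second")
```

Averaging over a few runs smooths out warm-up effects and background noise. For a real model, use prompts and output lengths that resemble your actual tasks.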

FAQ

Here are some frequently asked questions about using LLMs on your device:

1. Why do I need to worry about token generation speed?

Faster token generation means faster results. It's like the difference between typing a message in seconds or minutes. For example, you can generate a full blog post in under a minute with a fast system, rather than waiting hours for the same result.
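
To put numbers on this, the sketch below estimates how long a piece of text takes to generate at the two Llama3 8B speeds measured on this card. The 1,000-token length for a blog post is an assumption chosen for illustration, and the function name is hypothetical.

```python
def generation_time_seconds(n_tokens, tokens_per_second):
    """Time needed to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


POST_TOKENS = 1000  # assumed length of a blog post, in tokens

# Measured Llama3 8B rates on the RTX 4000 Ada 20GB.
measured_rates = [("Q4_K_M", 58.59), ("F16", 20.85)]

for label, rate in measured_rates:
    seconds = generation_time_seconds(POST_TOKENS, rate)
    print(f"{label}: about {seconds:.0f} s for {POST_TOKENS} tokens")
```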

2. What are other factors that affect LLM performance besides token generation speed?

Apart from how quickly tokens are generated, the memory available, the CPU's performance, and even software optimization can influence the overall experience.

3. What if I don't have an RTX 4000 Ada 20GB?

Don't worry! Other hardware options exist for running LLMs locally. Consider exploring GPUs like the RTX 3090, the RTX 4070, or even powerful CPUs for a cost-effective approach.

4. How do I choose the right LLM for my needs?

It depends on the task at hand! Smaller models like 7B or 13B might suffice for simple tasks, while larger models like 70B are ideal for more complex tasks that require deep understanding. It all comes down to your specific requirements and available computing power.

5. How can I learn more about LLMs and their performance?

There are tons of resources available! Follow online communities, explore open-source projects like llama.cpp, read research papers, and experiment with different models and devices. The field of LLMs is constantly evolving, so stay curious and keep learning!

Keywords

Llama3, 70B, NVIDIA, RTX 4000 Ada 20GB, LLM, model, performance, benchmarks, token generation, speed, quantization, Q4_K_M, F16, use cases, practical recommendations, workarounds, GPU, CPU, memory, optimization, open-source, community, experimentation, research, development