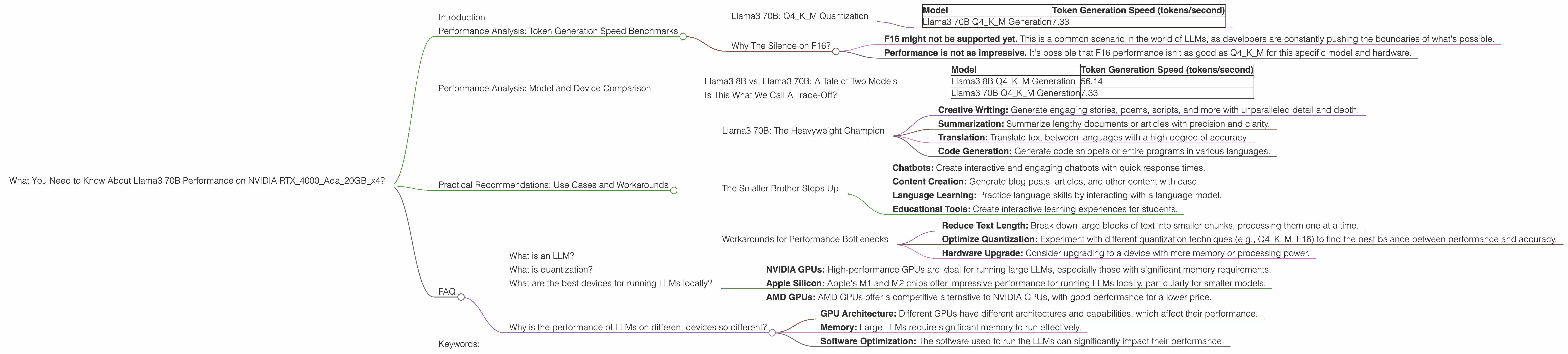

What You Need to Know About Llama3 70B Performance on NVIDIA RTX 4000 Ada 20GB x4?

Introduction

The world of large language models (LLMs) is moving fast, with new models and improvements arriving almost daily. One of the most prominent contenders is Llama3, developed by Meta, and its performance on different hardware configurations is of great interest to developers and researchers alike. In this deep dive, we'll focus on Llama3 70B running on one specific configuration, four NVIDIA RTX 4000 Ada cards with 20 GB of VRAM each (80 GB in total), looking at token generation speed benchmarks and practical recommendations for using this combination.

Imagine having a machine in your living room that can understand and generate human-quality text. That's the promise of LLMs like Llama3, and a four-card NVIDIA RTX 4000 Ada 20GB setup lets you run them locally.

Let's break down the nuts and bolts of this setup and its implications for your projects.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B: Q4_K_M Quantization

Q4_K_M quantization is a big part of what makes LLMs of this size accessible. It compresses the model's parameters to roughly 4 to 5 bits each, so the model fits on a wider range of devices while keeping acceptable output quality. It's a bit like putting your textbook on a diet: less information, but still enough to get the job done!

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M Generation | 7.33 |

As you can see, Llama3 70B with Q4_K_M quantization generates approximately 7.33 tokens per second on the NVIDIA RTX 4000 Ada 20GB x4. That is a respectable speed for a 70-billion-parameter model running on local hardware.

Think of it this way: a token is usually a word or a piece of a word, so 7.33 tokens per second works out to somewhat fewer than 7.33 words per second. That's still faster than most human typists!
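To put the figure in practical terms, here is a minimal sketch that converts the measured 7.33 tokens per second into response times and an approximate typing speed. The 0.75 words-per-token ratio is an assumption (a common rule of thumb for English text), not a value from the benchmark, and the estimate ignores the time spent processing the prompt.

```python
# Measured generation speed from the benchmark table (tokens/second).
TOKENS_PER_SECOND = 7.33

# Assumption: rough rule of thumb for English text, not a measured value.
WORDS_PER_TOKEN = 0.75


def generation_seconds(num_tokens: int,
                       tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Seconds needed to generate num_tokens, ignoring prompt processing."""
    return num_tokens / tokens_per_second


def words_per_minute(tokens_per_second: float = TOKENS_PER_SECOND,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Approximate output rate in words per minute."""
    return tokens_per_second * words_per_token * 60


if __name__ == "__main__":
    for n in (100, 500, 2000):
        print(f"{n} tokens: about {generation_seconds(n):.0f} s")
    print(f"about {words_per_minute():.0f} words per minute")
```

Under these assumptions, a long answer of a couple of thousand tokens takes several minutes, which is worth keeping in mind for interactive use.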

Why The Silence on F16?

You've probably noticed that we're missing F16 data for Llama3 70B. This isn't necessarily a bad thing, but it does make a direct comparison with the Q4_K_M version impossible. There are two likely reasons for the gap:

- The model does not fit. At 16 bits (2 bytes) per parameter, the weights of a 70B model take about 140 GB, well beyond the 80 GB of VRAM that four 20 GB cards provide.

- Performance would not be as impressive. Even if the model could be made to run, for example by keeping some layers in system memory, generation would likely be much slower than with Q4_K_M on this hardware.
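A quick back-of-the-envelope check makes the memory question concrete. This is a sketch under stated assumptions: the parameter count is the nominal 70 billion, the 4.85 bits-per-weight figure for Q4_K_M is an approximate average rather than an exact specification, and the calculation covers weights only (no KV cache, activations, or runtime overhead).

```python
GB = 1e9  # decimal gigabytes, matching how VRAM sizes are advertised

NUM_PARAMS = 70e9           # assumption: nominal parameter count
TOTAL_VRAM_GB = 4 * 20      # four cards with 20 GB each

# Assumption: approximate average bits per weight for each format.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weights_gb(num_params: float, bits_per_weight: float) -> float:
    """Size of the model weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / GB


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float = TOTAL_VRAM_GB) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weights_gb(num_params, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(NUM_PARAMS, bits)
        verdict = "fits" if fits_in_vram(NUM_PARAMS, bits) else "does not fit"
        print(f"{name}: about {size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Because the check ignores everything except weights, a format that "fits" here still needs spare memory for context, so treat the result as a lower bound.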

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: A Tale of Two Models

To truly understand the performance of Llama3 70B, it's helpful to compare it to its smaller sibling, Llama3 8B. Both models boast impressive capabilities, but their performance on the NVIDIA RTX 4000 Ada 20GB x4 tells a compelling story.

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 56.14 |
| Llama3 70B Q4_K_M Generation | 7.33 |

As you can see, Llama3 8B generates tokens about 7.7 times faster than its 70B counterpart. The difference comes mainly from model size: every generated token requires a pass through all of the model's weights, and Llama3 70B has almost nine times as many of them.

Think of it this way: The 8B model is like a nimble sports car, while the 70B model is like a luxury SUV. Both can get you where you need to go, but one is faster and more agile.
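The relationship between size and speed can be checked with a few lines of arithmetic. The sketch below uses the two measured speeds from the table; the parameter counts are the nominal 8 and 70 billion, which is an assumption about the exact model sizes.

```python
# Measured generation speeds from the table (tokens/second).
SPEED_8B = 56.14
SPEED_70B = 7.33

# Assumption: nominal parameter counts, in billions.
PARAMS_8B = 8.0
PARAMS_70B = 70.0

# How many times faster the small model generates tokens.
speed_ratio = SPEED_8B / SPEED_70B

# How many times larger the big model is.
size_ratio = PARAMS_70B / PARAMS_8B

if __name__ == "__main__":
    print(f"8B is {speed_ratio:.2f}x faster")
    print(f"70B is {size_ratio:.2f}x larger")
```

The two ratios land in the same range, which is consistent with generation speed scaling roughly inversely with model size on this hardware.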

Is This What We Call A Trade-Off?

When comparing these models, we see a classic trade-off: more power (70B) comes with a cost (slower speed). The Llama3 70B model is capable of handling more complex tasks and generating more nuanced and creative text, but it consumes more resources and runs slower.

The 8B model is faster, but it might not be as powerful for some tasks.

Practical Recommendations: Use Cases and Workarounds

Llama3 70B: The Heavyweight Champion

Despite its slower speed, Llama3 70B is a powerful tool for a range of applications:

- Creative Writing: Generate engaging stories, poems, scripts, and more with unparalleled detail and depth.

- Summarization: Summarize lengthy documents or articles with precision and clarity.

- Translation: Translate text between languages with a high degree of accuracy.

- Code Generation: Generate code snippets or entire programs in various languages.

The Smaller Brother Steps Up

If you're looking for speed without sacrificing too much power, the Llama3 8B model might be a better fit:

- Chatbots: Create interactive and engaging chatbots with quick response times.

- Content Creation: Generate blog posts, articles, and other content with ease.

- Language Learning: Practice language skills by interacting with a language model.

- Educational Tools: Create interactive learning experiences for students.

Workarounds for Performance Bottlenecks

If you find that the Llama3 70B model is too slow for your needs, here are some workarounds:

- Reduce Text Length: Break down large blocks of text into smaller chunks, processing them one at a time.

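- Optimize Quantization: Experiment with different quantization levels to find the best balance between performance and accuracy. Lower-bit quantizations are smaller and usually faster but lose more accuracy, while higher-bit ones need more memory. Unquantized F16 is not a practical option on this setup, because the weights alone would exceed the 80 GB of available VRAM.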

- Hardware Upgrade: Consider upgrading to a device with more memory or processing power.
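The first workaround, splitting long input into smaller pieces, can be sketched as follows. This is a minimal illustration, not a definitive implementation: `chunk_words` is a hypothetical helper, and it counts whitespace-separated words as a rough stand-in for tokens, which is an assumption since real token counts depend on the model's tokenizer.

```python
def chunk_words(text: str, max_words: int = 200) -> list[str]:
    """Split text into consecutive chunks of at most max_words words.

    Words are whitespace-separated; this only approximates token counts.
    """
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


if __name__ == "__main__":
    document = "word " * 450
    for index, chunk in enumerate(chunk_words(document, max_words=200)):
        # Each chunk would be sent to the model as a separate request.
        print(index, len(chunk.split()))
```

Splitting on word boundaries is the simplest choice; for summarization or translation, splitting on paragraph or sentence boundaries would usually preserve more context per chunk.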

FAQ

What is an LLM?

An LLM, or large language model, is a type of artificial intelligence model trained on massive amounts of text data. LLMs can understand and generate human-quality text and perform a wide range of language-based tasks. Their knowledge is fixed at training time, so they do not learn on their own as you use them unless they are retrained or fine-tuned.

What is quantization?

Quantization is a way of compressing the size of a model by reducing the number of bits used to represent its parameters. This makes the model smaller and faster to run, but it can also slightly reduce its accuracy.
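To illustrate the idea, here is a minimal sketch of symmetric 4-bit quantization for one small block of weights. It is a deliberately simplified scheme chosen for clarity and is not the actual Q4_K_M format, which is more elaborate; the function names and sample values are hypothetical.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Quantize a non-empty block to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale: float, quantized: list[int]) -> list[float]:
    """Recover approximate floating-point values from the integers."""
    return [scale * q for q in quantized]


if __name__ == "__main__":
    weights = [0.42, -0.13, 0.07, -0.58, 0.31]   # made-up sample values
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the small differences between the original and restored values are the accuracy cost mentioned above.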

What are the best devices for running LLMs locally?

The best device for running LLMs locally depends on your specific needs and budget. Some popular options include:

- NVIDIA GPUs: High-performance GPUs are ideal for running large LLMs, especially those with significant memory requirements.

- Apple Silicon: Apple's M1 and M2 chips offer impressive performance for running LLMs locally, particularly for smaller models.

- AMD GPUs: AMD GPUs offer a competitive alternative to NVIDIA GPUs, with good performance for a lower price.

Why is the performance of LLMs on different devices so different?

The performance of LLMs on different devices depends on factors like:

- GPU Architecture: Different GPUs have different architectures and capabilities, which affect their performance.

- Memory: Large LLMs require significant memory to run effectively.

- Software Optimization: The software used to run the LLMs can significantly impact their performance.

Keywords:

LLM, Llama3, Llama 70B, Llama 8B, NVIDIA, RTX 4000 Ada 20GB x4, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Benchmark, Practical Recommendations, Use Cases, Workarounds, Deep Dive, AI, Natural Language Processing, NLP, Developer, Geek