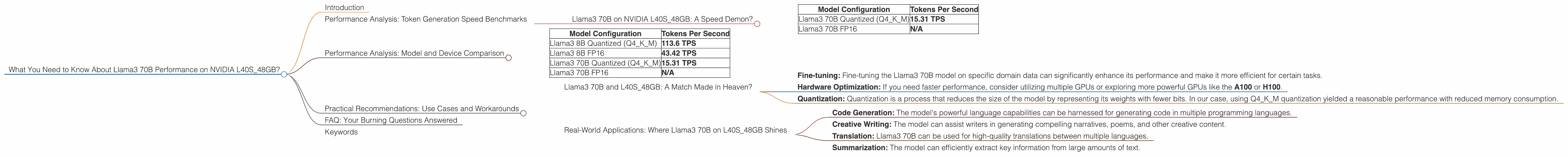

What You Need to Know About Llama3 70B Performance on the NVIDIA L40S 48GB

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models popping up like mushrooms after rain. One of the hottest contenders in this race is the Llama3 70B model. But how does it perform on a specific hardware setup? Today, we're diving deep into the NVIDIA L40S_48GB GPU, a powerhouse designed for demanding AI workloads.

This article will explore the nitty-gritty details of Llama3 70B's performance on the L40S_48GB, providing you with insights into its token generation speed, model and device comparisons, and practical use cases. Buckle up, geeks, because we're about to embark on a thrilling journey through the fascinating world of LLMs!

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B on NVIDIA L40S_48GB: A Speed Demon?

Let's cut to the chase. How fast can the Llama3 70B model generate text on the L40S_48GB?

We've got the numbers, and they paint an interesting picture. Here's a breakdown of tokens per second (TPS) for different model configurations:

| Model Configuration | Tokens per Second (TPS) |
|---|---|
| Llama3 70B Quantized (Q4_K_M) | 15.31 |
| Llama3 70B FP16 | N/A |

As you can see, the Llama3 70B model, when quantized to Q4_K_M, achieves a respectable 15.31 TPS on the L40S_48GB.
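To put 15.31 TPS into perspective, the short sketch below converts a decode rate into the time needed for responses of a few lengths. It assumes a constant generation rate and ignores prompt-processing (prefill) time, so the results are lower bounds on real latency; the response lengths are arbitrary examples.

```python
# Measured generation rate from the table above (Llama3 70B, Q4_K_M, L40S_48GB).
TPS_70B_Q4_K_M = 15.31


def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate num_tokens at a steady decode rate.

    Assumption: the rate stays constant and prompt processing (prefill)
    is ignored, so this is a lower bound on end-to-end latency.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Arbitrary example response lengths.
for n in (100, 500, 1000):
    print(f"{n:>5} tokens -> {generation_time_s(n, TPS_70B_Q4_K_M):5.1f} s")
```

At this rate, a 500-token answer takes roughly 33 seconds of pure generation.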

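What about Llama3 70B in FP16? We don't have performance data for this configuration, which is not surprising: at 16 bits per weight, 70 billion parameters take up about 140 GB, nearly three times the L40S's 48 GB of memory, so the FP16 model cannot run on a single card. And even with enough memory, FP16 would not beat the quantized version. As the Llama3 8B results below show, FP16 generation is markedly slower, because every generated token has to read more than three times as many bytes of weights.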
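A quick way to see why the FP16 row is empty is to estimate how much memory the weights alone need. This sketch multiplies a nominal 70 billion parameters by the bits stored per weight. The ~4.8 bits per weight for Q4_K_M is an approximate figure we assume here, and the KV cache, activations, and runtime overhead are ignored, so real requirements are higher.

```python
L40S_VRAM_GB = 48  # memory capacity of the L40S_48GB

NUM_PARAMS_70B = 70e9  # nominal parameter count of Llama3 70B
BITS_PER_WEIGHT = {
    "FP16": 16.0,
    "Q4_K_M": 4.8,  # assumption: approximate average for llama.cpp's Q4_K_M
}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10**9 bytes).

    Weights only: KV cache, activations and runtime overhead are not included.
    """
    return num_params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    size = weight_memory_gb(NUM_PARAMS_70B, bits)
    verdict = "fits" if size < L40S_VRAM_GB else "does not fit"
    print(f"{name:>7}: ~{size:.0f} GB of weights -> {verdict} in {L40S_VRAM_GB} GB")
```

The estimate puts FP16 weights at about 140 GB against roughly 42 GB for Q4_K_M, which leaves only a few gigabytes of the card's 48 GB for the KV cache and everything else.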

Performance Analysis: Model and Device Comparison

To get a better understanding of Llama3 70B's performance on the L40S_48GB, let's compare it to the Llama3 8B model, also running on the same GPU.

| Model Configuration | Tokens per Second (TPS) |
|---|---|
| Llama3 8B Quantized (Q4_K_M) | 113.6 |
| Llama3 8B FP16 | 43.42 |
| Llama3 70B Quantized (Q4_K_M) | 15.31 |
| Llama3 70B FP16 | N/A |

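The Llama3 8B model clearly outperforms the Llama3 70B model in terms of token generation speed. This is expected: the smaller model has fewer parameters, so far less data has to be moved and processed for each generated token. At Q4_K_M, the 8B model is about 7.4 times faster (113.6 vs 15.31 TPS), roughly in line with its 8.75 times smaller parameter count.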

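It's worth noting that the FP16 version of Llama3 8B is about 2.6 times slower than the Q4_K_M version (43.42 vs 113.6 TPS). The main reason is not arithmetic precision but memory traffic: single-stream token generation is largely limited by memory bandwidth, because the GPU must read essentially all of the model's weights for every token, and FP16 weights occupy more than three times as many bytes as Q4_K_M weights.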
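The bandwidth argument can be turned into a rough upper bound: if every generated token requires reading all of the weights once, the best possible decode rate is memory bandwidth divided by model size in bytes. The sketch below assumes the L40S's published memory bandwidth of 864 GB/s, nominal parameter counts, and about 4.8 bits per weight for Q4_K_M. It is a back-of-the-envelope model, not a performance predictor.

```python
# Back-of-the-envelope ceiling for single-stream decoding on a bandwidth-bound GPU:
# assume every generated token reads (roughly) all of the weights exactly once.
L40S_BANDWIDTH_GB_S = 864.0  # assumption: NVIDIA's published L40S memory bandwidth


def max_tokens_per_second(num_params: float, bits_per_weight: float,
                          bandwidth_gb_s: float = L40S_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s = bandwidth / bytes of weights read per token."""
    weight_gb = num_params * bits_per_weight / 8 / 1e9
    return bandwidth_gb_s / weight_gb


configs = {
    # name: (nominal params, assumed bits/weight, measured TPS from the table)
    "Llama3 8B Q4_K_M": (8e9, 4.8, 113.6),
    "Llama3 8B FP16": (8e9, 16.0, 43.42),
    "Llama3 70B Q4_K_M": (70e9, 4.8, 15.31),
}

for name, (params, bits, measured) in configs.items():
    ceiling = max_tokens_per_second(params, bits)
    print(f"{name:<18} ceiling ~{ceiling:6.1f} TPS, measured {measured:6.2f} TPS "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured figure lands below its ceiling, which is consistent with generation being bandwidth-bound; the gap reflects kernel efficiency, KV-cache reads, and other overheads the model ignores.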

Practical Recommendations: Use Cases and Workarounds

Llama3 70B and L40S_48GB: A Match Made in Heaven?

While the L40S_48GB is a powerful GPU, keep in mind that the Llama3 70B model is a resource-hungry beast. So, for practical use cases, you might want to consider the following:

- Fine-tuning: Fine-tuning Llama3 70B on domain-specific data can noticeably improve the quality of its output for particular tasks. It does not make token generation any faster, though; speed depends on model size, precision, and hardware.

- Hardware Optimization: If you need more speed, consider splitting the model across multiple GPUs (which also makes higher-precision versions feasible) or moving to GPUs with more memory and higher memory bandwidth, such as the A100 or H100.

- Quantization: Quantization reduces the size of a model by representing its weights with fewer bits. In our case, Q4_K_M quantization shrinks Llama3 70B enough to fit in the L40S_48GB's memory while still delivering 15.31 TPS.
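To make "representing weights with fewer bits" concrete, here is a simplified illustration of block-wise 4-bit quantization in plain Python: each block of 32 weights shares one scale factor, and each weight is stored as an integer between -8 and 7. This is not llama.cpp's actual Q4_K_M layout, which uses larger super-blocks with their own quantized scales and keeps some tensors at higher precision. The block size, the random test weights, and the assumption that each scale is stored in 16 bits are illustrative choices.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale factor (illustrative choice)


def quantize_block(block):
    """Symmetric 4-bit quantization: integers in [-8, 7] plus one float scale."""
    absmax = max(abs(w) for w in block)
    scale = absmax / 7 if absmax > 0 else 1.0  # avoid dividing by zero
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate weights from the 4-bit integers and the block scale."""
    return [v * scale for v in q]


def bits_per_weight(block_size=BLOCK_SIZE, scale_bits=16):
    """4 bits per weight plus the block's scale (assumed FP16), amortized."""
    return (block_size * 4 + scale_bits) / block_size


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE * 4)]

restored = []
for i in range(0, len(weights), BLOCK_SIZE):
    q, scale = quantize_block(weights[i:i + BLOCK_SIZE])
    restored.extend(dequantize_block(q, scale))

max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"bits per weight: {bits_per_weight():.2f} (vs 16 for FP16)")
print(f"max reconstruction error: {max_err:.5f}")
```

Storing one 16-bit scale per 32 four-bit values costs 4.5 bits per weight, less than a third of FP16, at the price of a small rounding error in every weight.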

Real-World Applications: Where Llama3 70B on L40S_48GB Shines

Despite its resource demands, the combination of Llama3 70B and L40S_48GB can be a winning formula for specific applications:

- Code Generation: The model's powerful language capabilities can be harnessed for generating code in multiple programming languages.

- Creative Writing: The model can assist writers in generating compelling narratives, poems, and other creative content.

- Translation: Llama3 70B can be used for high-quality translations between multiple languages.

- Summarization: The model can efficiently extract key information from large amounts of text.

FAQ: Your Burning Questions Answered

Q: What is Llama3 70B?

A: Llama3 70B is a large language model developed by Meta AI. It's a powerful AI model with 70 billion parameters, capable of understanding and generating human-like text.

Q: What is Q4KM quantization?

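A: Quantization is a technique used to reduce the size of a model by representing its weights with fewer bits. Q4_K_M is one of llama.cpp's "k-quant" formats: most weights are stored as 4-bit values in blocks that share scale factors, while some tensors are kept at higher precision, giving an average of slightly under 5 bits per weight. This reduces both the memory footprint and the amount of data read for each generated token.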
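Why "slightly under 5 bits" rather than exactly 4? The sketch below assumes the block sizes of llama.cpp's Q4_K format (144 bytes per 256 weights) and Q6_K format (210 bytes per 256 weights), and shows how a small share of higher-precision tensors raises the average. The fractions are hypothetical; the actual mix in a given Q4_K_M file is not computed here.

```python
# Bits per weight derived from assumed llama.cpp k-quant block layouts
# (256 weights per super-block); check against the ggml sources before relying on them.
Q4_K_BPW = 144 * 8 / 256  # 144 bytes per 256 weights -> 4.5 bits/weight
Q6_K_BPW = 210 * 8 / 256  # 210 bytes per 256 weights -> 6.5625 bits/weight


def average_bpw(fraction_high_precision: float) -> float:
    """Average bits/weight when a fraction of weights uses Q6_K and the rest Q4_K."""
    if not 0.0 <= fraction_high_precision <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    f = fraction_high_precision
    return (1 - f) * Q4_K_BPW + f * Q6_K_BPW


# Hypothetical fractions; the real share depends on the model and llama.cpp version.
for f in (0.0, 0.1, 0.2):
    print(f"{f:.0%} of weights in Q6_K -> {average_bpw(f):.2f} bits/weight")
```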

Q: What is the difference between FP16 and quantized models?

A: FP16 models store their weights as 16-bit floating-point numbers, while quantized models use fewer bits per weight. The trade-off between precision on one side and memory use and speed on the other is a key factor in choosing the right model configuration for your application.

Q: How can I use Llama3 70B on my own device?

A: Pre-trained Llama3 70B weights and tools for running them are available through various libraries and frameworks. One popular option is llama.cpp, which runs quantized GGUF versions of models like Llama3 70B on a wide range of hardware with minimal setup.

Q: Is the L40S_48GB the best GPU for running Llama3 70B?

A: While the L40S_48GB is a powerful GPU, the best GPU for running Llama3 70B depends on your specific use case and budget. For faster generation, look for GPUs with more memory and, above all, higher memory bandwidth.

Q: Will Llama3 70B replace GPT-4?

A: It's too early to say whether Llama3 70B will replace GPT-4. Both models have strengths and weaknesses, and the best choice ultimately depends on the specific application.

Keywords

Llama3 70B, NVIDIA L40S_48GB, LLM, token generation speed, performance analysis, model comparison, quantization, use cases, practical recommendations, code generation, creative writing, translation, summarization.