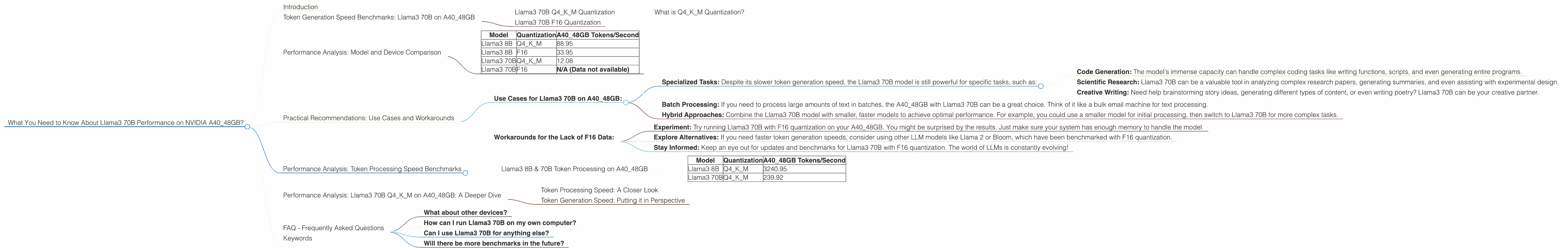

What You Need to Know About Llama3 70B Performance on NVIDIA A40 48GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so. These powerful artificial intelligence models are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But with great power comes great need for processing muscle!

This article delves into the performance of the Llama3 70B model on the mighty NVIDIA A40_48GB GPU. We'll analyze token generation speed, compare it with other models and configurations, and provide practical recommendations for developers looking to put this powerhouse to work.

Token Generation Speed Benchmarks: Llama3 70B on A40_48GB

Token generation speed measures how quickly your LLM can churn out those beautiful words. Imagine it as a writer's typing speed on a superpowered keyboard. A higher token generation speed means faster responses and a more delightful user experience.
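To make the metric concrete, here is a minimal sketch of how tokens per second could be measured around any generation call. The names `measure_tps` and `fake_generate` are hypothetical, and `fake_generate` is only a stand-in for a real model call, so the number it produces says nothing about Llama3.

```python
import time
from typing import Callable, List


def measure_tps(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Return tokens per second for a single call to `generate`."""
    start = time.perf_counter()
    tokens = generate(prompt)          # the call being timed
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str) -> List[str]:
    # Hypothetical stand-in for a model: waits briefly, then echoes the prompt's words.
    time.sleep(0.05)
    return prompt.split()


tps = measure_tps(fake_generate, "the quick brown fox")
print(f"{tps:.1f} tokens/second")
```

Note that timing the whole call also includes the time spent reading the prompt, so a careful benchmark would time prompt handling and generation separately.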

Llama3 70B Q4_K_M Quantization

The Llama3 70B model, quantized using the Q4_K_M technique, achieved a token generation speed of 12.08 tokens per second (TPS) on the A40_48GB GPU.

What is Q4_K_M Quantization?

For those unfamiliar with quantization, it's a technique that shrinks an LLM by storing its weights at lower numerical precision, trading a small loss in output quality for a much smaller memory footprint. Imagine it like compressing a large photo without losing too much quality. Q4_K_M is one of the 4-bit quantization formats used by llama.cpp.

Llama3 70B F16 (Half Precision)

Unfortunately, no data is available for Llama3 70B in F16 on the A40_48GB. Strictly speaking, F16 is not a quantization: it is the 16-bit half-precision format, which uses 2 bytes per weight. The most likely reason for the gap is memory. 70 billion parameters at 2 bytes each come to roughly 140 GB of weights, far more than the 48 GB on this GPU, so the model cannot be fully loaded onto a single A40.
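A quick back-of-the-envelope check shows whether a model's weights can fit on the card at all. This is a rough sketch: the function name is hypothetical, the figure of about 4.8 bits per weight for Q4_K_M is an approximation assumed here rather than a number from the benchmark, and the estimate ignores the extra memory needed for the context cache and activations.

```python
A40_MEMORY_GB = 48  # taken from the device name used in this article


def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


formats = [
    ("F16", 16.0),
    ("Q4_K_M", 4.8),  # assumed average bits per weight, approximate
]

for label, bits in formats:
    size = weight_memory_gb(70, bits)
    verdict = "fits" if size < A40_MEMORY_GB else "does not fit"
    print(f"Llama3 70B {label}: ~{size:.0f} GB of weights, {verdict} in {A40_MEMORY_GB} GB")
```

Under these assumptions the F16 weights come to about 140 GB, while the Q4_K_M weights come to about 42 GB, which leaves only a small margin on a 48 GB card.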

Performance Analysis: Model and Device Comparison

How does Llama3 70B on the A40_48GB stack up against other models and configurations? Let's take a look!

| Model | Quantization | Token Generation on A40_48GB (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 88.95 |
| Llama3 8B | F16 | 33.95 |
| Llama3 70B | Q4_K_M | 12.08 |
| Llama3 70B | F16 | N/A (data not available) |

Key Observations:

- Smaller Models, Faster Speed: As expected, the smaller Llama3 8B model outperforms the larger Llama3 70B model in terms of token generation speed. This is because smaller models have fewer parameters to process, allowing for faster calculations.

- Quantization Matters: On Llama3 8B, Q4_K_M generates 88.95 tokens per second versus 33.95 for F16, about 2.6 times faster. Quantization shrinks the weights, so less data has to be read from GPU memory for each generated token, at the cost of some loss in output quality.

- Missing Data: We still have gaps in our knowledge, particularly regarding Llama3 70B in F16. More research and testing are needed to fill in these data points.
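The ratios behind these observations can be reproduced directly from the table. The snippet below is a small sketch that uses only the three measured values; the dictionary and function names are illustrative.

```python
# Token generation speeds from the table above, in tokens/second.
generation_tps = {
    ("Llama3 8B", "Q4_K_M"): 88.95,
    ("Llama3 8B", "F16"): 33.95,
    ("Llama3 70B", "Q4_K_M"): 12.08,
}


def speed_ratio(faster, slower):
    """How many times faster `faster` is than `slower`."""
    return generation_tps[faster] / generation_tps[slower]


quant_speedup = speed_ratio(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))
size_slowdown = speed_ratio(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))

print(f"8B: Q4_K_M is {quant_speedup:.2f}x faster than F16")
print(f"Q4_K_M: 8B is {size_slowdown:.2f}x faster than 70B")
```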

Practical Recommendations: Use Cases and Workarounds

Now for the juicy part - how can you leverage this information to build awesome applications using Llama3 on the A40_48GB?

Use Cases for Llama3 70B on A40_48GB:

- Specialized Tasks: Despite its slower token generation speed, the Llama3 70B model is still powerful for specific tasks, such as:

  - Code Generation: The model's immense capacity can handle complex coding tasks like writing functions, scripts, and even generating entire programs.

  - Scientific Research: Llama3 70B can be a valuable tool in analyzing complex research papers, generating summaries, and even assisting with experimental design.

  - Creative Writing: Need help brainstorming story ideas, generating different types of content, or even writing poetry? Llama3 70B can be your creative partner.

- Batch Processing: If you need to process large amounts of text in batches, the A40_48GB with Llama3 70B can be a great choice. Think of it like a bulk email machine for text processing.

- Hybrid Approaches: Combine the Llama3 70B model with smaller, faster models to achieve optimal performance. For example, you could use a smaller model for initial processing, then switch to Llama3 70B for more complex tasks.
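As an illustration of the hybrid idea, here is a deliberately simple routing sketch. Everything in it is hypothetical: the model names, the keyword list, and the length threshold are placeholders chosen for the example, and a real system would need a routing rule tuned to its own workload.

```python
SMALL_MODEL = "llama3-8b-q4_k_m"    # hypothetical identifier for the fast model
LARGE_MODEL = "llama3-70b-q4_k_m"   # hypothetical identifier for the large model

# Hypothetical keywords that hint at a harder request.
HARD_MARKERS = ("prove", "refactor", "design", "analyze")


def choose_model(prompt: str, max_small_words: int = 50) -> str:
    """Send long or hard-looking prompts to the large model, the rest to the small one."""
    is_long = len(prompt.split()) > max_small_words
    looks_hard = any(marker in prompt.lower() for marker in HARD_MARKERS)
    return LARGE_MODEL if (is_long or looks_hard) else SMALL_MODEL


print(choose_model("What is the capital of France?"))
print(choose_model("Refactor this module to remove the global state."))
```

The point of the design is that most requests pay the small model's latency, and only the ones flagged as difficult pay for the 70B model.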

Workarounds for the Lack of F16 Data:

- Experiment: If you have access to more memory, try running Llama3 70B in F16 yourself. The F16 weights alone need roughly 140 GB, so a single A40_48GB cannot hold them. You would need several GPUs, or to offload part of the model to system RAM, which is considerably slower.

- Explore Alternatives: If you need faster token generation, consider a smaller model. Llama3 8B runs in F16 on this GPU at 33.95 tokens per second, and other model families such as Llama 2 or Bloom may also have published F16 benchmarks.

- Stay Informed: Keep an eye out for updates and benchmarks for Llama3 70B in F16. The world of LLMs is constantly evolving!

Performance Analysis: Token Processing Speed Benchmarks

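Token processing speed is a separate metric from token generation speed. It measures how quickly the model reads the input (prompt) tokens before it begins generating output. Because the tokens of a prompt can be processed in parallel, this figure is typically much higher than the generation speed. We'll explore this metric for the A40_48GB device.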

Llama3 8B & 70B Token Processing on A40_48GB

Here's a snapshot of the token processing speed for Llama3 8B and 70B with Q4_K_M quantization:

| Model | Quantization | Token Processing on A40_48GB (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 3240.95 |
| Llama3 70B | Q4_K_M | 239.92 |

Key Observations:

- Size Matters: As expected, token processing speed falls as model size grows. The larger Llama3 70B model processes tokens significantly more slowly than the smaller Llama3 8B model. Think of it like moving a large box versus a small one: the bigger the box, the more effort it takes.

- Significant Difference: Llama3 8B processes tokens about 13.5 times faster than Llama3 70B (3240.95 versus 239.92 tokens per second). The gap highlights the impact of model size on performance and the importance of choosing the right model for your application.
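Combining both metrics gives a rough estimate of how long a single request takes. The sketch below assumes that processing speed applies to the prompt tokens and generation speed to the output tokens, that one request runs at a time, and that speeds stay constant regardless of prompt length. The function name and the example request size are illustrative.

```python
# Benchmark figures from this article, in tokens/second.
SPEEDS = {
    "Llama3 8B Q4_K_M": {"processing": 3240.95, "generation": 88.95},
    "Llama3 70B Q4_K_M": {"processing": 239.92, "generation": 12.08},
}


def estimate_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt plus time to generate the output."""
    speed = SPEEDS[model]
    return prompt_tokens / speed["processing"] + output_tokens / speed["generation"]


# Example request: a 1000-token prompt with a 200-token answer.
for model in SPEEDS:
    print(f"{model}: ~{estimate_seconds(model, 1000, 200):.1f} s")
```

For this example request the estimate is roughly 21 seconds on Llama3 70B and about 2.6 seconds on Llama3 8B, with generation accounting for most of the time in both cases.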

Performance Analysis: Llama3 70B Q4_K_M on A40_48GB: A Deeper Dive

Let's dive deeper into the performance of Llama3 70B with Q4_K_M quantization on the A40_48GB.

Token Processing Speed: A Closer Look

The token processing speed of 239.92 TPS for Llama3 70B Q4_K_M on the A40_48GB is a useful data point. While not super speedy, it's important to consider the context. The A40_48GB is a powerful GPU commonly used for high-performance computing, machine learning, and scientific simulations. It's not primarily designed for blazing fast inference across smaller models. It's a workhorse, designed for heavy lifting.

Token Generation Speed: Putting it in Perspective

A token generation speed of 12.08 TPS may not sound impressive, but it's all relative! Imagine you're trying to write a short story using a typewriter. Since a token is usually a word or a piece of a word, 12.08 TPS works out to somewhat fewer than 12 words per second, which is still far faster than anyone can type. Now imagine the possibilities when that speed comes from a superpowered language model like Llama3!

FAQ - Frequently Asked Questions

What about other devices?

This article focuses solely on the performance of Llama3 70B on the NVIDIA A40_48GB. For comparisons with other GPUs and devices, you can check out resources like the llama.cpp GitHub repository.

How can I run Llama3 70B on my own computer?

You can run Llama3 70B on your own computer, but it requires significant resources, most importantly a powerful GPU with enough memory to hold the model. The Q4_K_M version benchmarked here ran on a 48 GB card.

Can I use Llama3 70B for anything else?

Llama3 70B is a versatile model with a wide range of applications. Beyond the use cases discussed, it can be used for tasks like summarizing text, generating different creative text formats, and even translating languages.

Will there be more benchmarks in the future?

Absolutely! The world of LLMs is dynamic, with researchers constantly developing new models, improving existing ones, and testing performance on different devices. Keep an eye out for new benchmarks and performance data as the field progresses.

Keywords

LLMs, Llama3, Llama 3, Llama 70B, 70B, NVIDIA A40, A40_48GB, GPU, token generation speed, TPS, token processing speed, quantization, Q4_K_M, F16, performance analysis, model comparison, practical recommendations, use cases, workarounds, deep dive, benchmarks, inference, processing