What You Need to Know About Llama3 70B Performance on NVIDIA A100 SXM 80GB

Introduction

Large language models (LLMs) are evolving rapidly, with new models and hardware appearing constantly. They now power everything from chatbots to personalized search engines. One of the biggest challenges in working with LLMs is their computational demand: training and running these models require significant processing power and memory, which makes the choice of hardware critical. This article explores the performance of the Llama3 70B model on the NVIDIA A100 SXM 80GB, a powerful GPU commonly found in high-performance computing environments.

Performance Analysis: Token Generation Speed Benchmarks

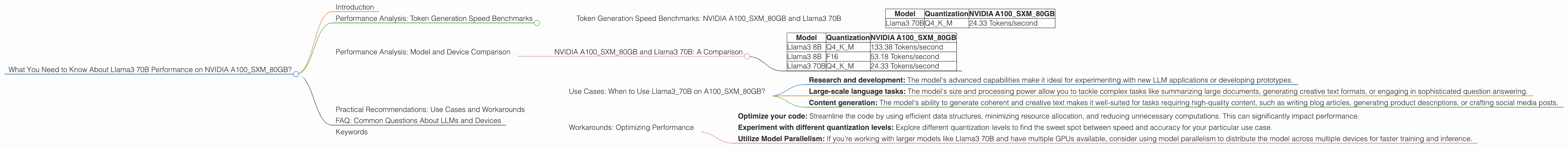

Token Generation Speed Benchmarks: NVIDIA A100 SXM 80GB and Llama3 70B

Our benchmark focuses on the token generation speed of the Llama3 70B model on the NVIDIA A100 SXM 80GB. We measure how quickly the model generates tokens, the building blocks of text. Faster token generation translates to quicker response times and a smoother user experience.
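As a rough illustration of what "tokens per second" means, the sketch below times a token stream and divides the token count by the elapsed time. It is not necessarily how the figures in this article were obtained: `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is only a stand-in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation run and return the number of tokens produced per second."""
    start = time.perf_counter()
    token_count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return token_count / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/second")
```

With a real model, `fake_generate` would be replaced by whatever function streams tokens from the inference runtime in use, and the run would typically be repeated several times and averaged.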

Here's what we found:

| Model | Quantization | NVIDIA A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 24.33 |

Key takeaways:

- Llama3 70B with Q4_K_M quantization generates 24.33 tokens per second on the A100 SXM 80GB.

What does this mean in practical terms?

Imagine you're using a chat assistant powered by the Llama3 70B model. At 24.33 tokens per second, the model produces text faster than most people read, so replies stream comfortably in an interactive chat. It's not blazing fast, but it's solid performance for a model of this size.
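To put the figure in concrete terms, the following minimal sketch converts the measured speed into the time needed for replies of different lengths. The reply lengths are arbitrary illustrative values, and the estimate assumes a steady generation rate and ignores prompt processing time.

```python
TOKENS_PER_SECOND = 24.33  # Llama3 70B, Q4_K_M, from the benchmark table above


def generation_time_seconds(n_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Seconds needed to generate n_tokens at a steady rate (prompt processing excluded)."""
    return n_tokens / tokens_per_second


for n in (50, 200, 500):  # illustrative reply lengths, chosen arbitrarily
    print(f"{n:>4} tokens: {generation_time_seconds(n):5.1f} s")
```

Under these assumptions, a 500-token reply takes a little over 20 seconds to generate in full.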

Performance Analysis: Model and Device Comparison

NVIDIA A100 SXM 80GB and Llama3 70B: A Comparison

Let's put the Llama3 70B performance into perspective by comparing it to other models and devices.

| Model | Quantization | NVIDIA A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 133.38 |
| Llama3 8B | F16 | 53.18 |
| Llama3 70B | Q4_K_M | 24.33 |

Observations:

- Smaller models generate tokens faster than larger ones: they have fewer parameters to process for each token. For example, Llama3 8B with Q4_K_M quantization runs at 133.38 tokens/second on the A100 SXM 80GB, about 5.5 times faster than Llama3 70B at 24.33 tokens/second.

- Quantization affects performance: Q4_K_M trades some accuracy for speed. Llama3 8B runs at 133.38 tokens/second with Q4_K_M versus 53.18 tokens/second with F16 (16-bit floating point, which is not quantized), about 2.5 times faster, but it may lose some accuracy relative to F16.
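The comparison can also be expressed as per-token latency and relative speed. The sketch below uses only the three figures from the table above; the helper names `ms_per_token` and `speedup` are introduced here for illustration.

```python
# Token generation speeds (tokens/second) copied from the comparison table.
RESULTS = {
    ("Llama3 8B", "Q4_K_M"): 133.38,
    ("Llama3 8B", "F16"): 53.18,
    ("Llama3 70B", "Q4_K_M"): 24.33,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def speedup(fast: tuple, slow: tuple) -> float:
    """How many times faster configuration `fast` is than configuration `slow`."""
    return RESULTS[fast] / RESULTS[slow]


for (model, quant), tps in RESULTS.items():
    print(f"{model:<10} {quant:<6} {tps:7.2f} tok/s  {ms_per_token(tps):5.1f} ms/token")

print(f"8B Q4_K_M vs 70B Q4_K_M: {speedup(('Llama3 8B', 'Q4_K_M'), ('Llama3 70B', 'Q4_K_M')):.2f}x")
print(f"8B Q4_K_M vs 8B F16:     {speedup(('Llama3 8B', 'Q4_K_M'), ('Llama3 8B', 'F16')):.2f}x")
```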

Practical Recommendations: Use Cases and Workarounds

Use Cases: When to Use Llama3 70B on A100 SXM 80GB?

The Llama3 70B model on the A100 SXM 80GB is a powerful combination suitable for:

- Research and development: The model's advanced capabilities make it ideal for experimenting with new LLM applications or developing prototypes.

- Large-scale language tasks: The model's size and processing power allow you to tackle complex tasks like summarizing large documents, generating creative text formats, or engaging in sophisticated question answering.

- Content generation: The model's ability to generate coherent and creative text makes it well-suited for tasks requiring high-quality content, such as writing blog articles, generating product descriptions, or crafting social media posts.

Workarounds: Optimizing Performance

While the A100 SXM 80GB delivers good performance with the Llama3 70B model, there are always ways to enhance it further:

- Optimize your code: Streamline the code by using efficient data structures, minimizing resource allocation, and reducing unnecessary computations. This can significantly impact performance.

- Experiment with different quantization levels: Explore different quantization levels to find the sweet spot between speed and accuracy for your particular use case.

- Use model parallelism: If you're working with larger models like Llama3 70B and have multiple GPUs available, consider using model parallelism to distribute the model across multiple devices for faster training and inference.

FAQ: Common Questions About LLMs and Devices

Q: What is "quantization," and why does it matter?

A: Quantization is a technique that stores a model's parameters at lower numerical precision, which shrinks the model and allows for faster processing and lower memory requirements. Think of it like compressing an image: you reduce the file size while maintaining a reasonable level of detail. Q4_K_M is a common 4-bit quantization format used to optimize model performance, but it often comes with a slight reduction in accuracy.
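The memory effect can be estimated with simple arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below is an estimate under stated assumptions, not a measurement. It uses the nominal parameter counts (8 and 70 billion) and assumes about 4.85 bits per weight for Q4_K_M, which is an approximate figure. It ignores the KV cache, activations, and runtime overhead.

```python
A100_MEMORY_GB = 80.0  # memory capacity of the A100 SXM 80GB

# Bits per weight. The Q4_K_M value is an approximation (assumption), not an exact constant.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, n_params in (("Llama3 8B", 8e9), ("Llama3 70B", 70e9)):  # nominal sizes
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(n_params, bits)
        fits = "fits" if gb < A100_MEMORY_GB else "does not fit"
        print(f"{name:<10} {fmt:<6} ~{gb:6.1f} GB  ({fits} in {A100_MEMORY_GB:.0f} GB)")
```

Under these assumptions, the F16 weights of a 70B model need about 140 GB, more than a single 80 GB card holds, while the Q4_K_M weights need roughly 42 GB.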

Q: What are the benefits of using the A100 SXM 80GB for LLMs?

A: The A100 SXM 80GB suits LLM workloads because of its high memory bandwidth (roughly 2 TB/s), fast processing speed, and large memory capacity (80 GB of HBM2e). It is designed for computationally demanding applications like LLM training and inference, resulting in faster execution times and efficient resource utilization.

Q: How does the Llama3 70B model compare to other LLMs in terms of performance?

A: The Llama3 70B model is a mid-sized LLM, offering a good balance between capability and complexity. It achieves a usable token generation speed (24.33 tokens/second with Q4_K_M on the A100 SXM 80GB), making it suitable for a range of use cases. Much larger LLMs, like the GPT-3 family, may offer stronger capabilities on some tasks but require more powerful hardware and resources.

Keywords

LLMs, Llama3, 70B, NVIDIA, A100 SXM 80GB, token generation speed, performance, quantization, Q4_K_M, F16, model parallelism, deep learning, natural language processing, GPU, hardware, use cases