What You Need to Know About Llama3 70B Performance on NVIDIA A100 PCIe 80GB?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI systems can generate human-like text, translate languages, write many kinds of creative content, and answer questions informatively. But before you can get the most out of an LLM, you need to understand how it performs on your hardware. This deep dive explores the performance of the Llama3 70B model on the NVIDIA A100 PCIe 80GB GPU, a popular choice for demanding AI tasks.

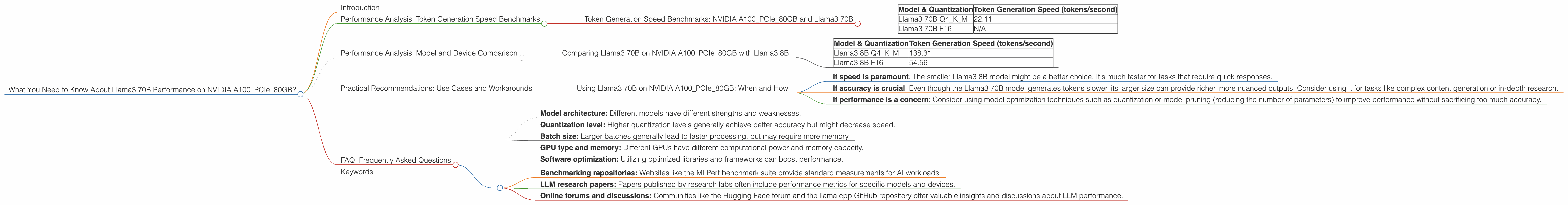

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3 70B

Let's dive into the heart of the matter: how fast can the Llama3 70B model generate text on the NVIDIA A100 PCIe 80GB GPU? The numbers speak for themselves:

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 22.11 |
| Llama3 70B F16 | N/A |

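What are Q4_K_M and F16? They are the formats used to store the model's weights. F16 keeps every weight as a 16-bit floating-point number, so it is effectively the unquantized baseline: the most accurate option, but also the largest and slowest. Q4_K_M is a 4-bit quantization format (one of the llama.cpp "k-quant" variants) that compresses most weights to roughly 4 bits each. The model then needs roughly a quarter to a third of the memory and generates tokens faster, at the cost of a small loss in accuracy.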

- Think of it this way: Imagine you're describing a friend's hairstyle. You could use a detailed description (like "short, layered, with blonde highlights"). That’s like F16: more accurate, but longer. Or you could use a short description (like "short and blonde"). That's like Q4_K_M: faster, but less detailed.

Llama3 70B Q4_K_M on the NVIDIA A100 PCIe 80GB GPU generates around 22 tokens per second. That is modest next to smaller models, but raw token rate is only part of the picture: the quality and usefulness of the output matter just as much.

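Why No F16 Data? The data we have does not include an F16 result for Llama3 70B on the NVIDIA A100 PCIe 80GB GPU. The most likely reason is memory: at 16 bits (2 bytes) per weight, a 70-billion-parameter model needs about 140 GB for the weights alone, well beyond the card's 80 GB. It's also possible that the measurement simply wasn't collected or isn't publicly available.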
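The memory argument can be checked with a few lines of arithmetic. This is a minimal sketch, not a measurement: the parameter counts are the nominal 70 billion and 8 billion, the 4-bit figure is a nominal width (real Q4_K_M files use somewhat more bits per weight), and the estimate covers weights only, ignoring the KV cache and runtime overhead.

```python
# Rough weight-only memory estimate; ignores KV cache, activations, and runtime overhead.
GPU_MEMORY_GB = 80  # capacity of the A100 PCIe 80GB


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts and bit widths: assumptions for illustration only.
configs = {
    "Llama3 70B F16": (70e9, 16.0),
    "Llama3 70B 4-bit (nominal)": (70e9, 4.0),
    "Llama3 8B F16": (8e9, 16.0),
}

for name, (params, bits) in configs.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, only the F16 version of the 70B model exceeds the card's capacity, which is consistent with the missing table entry.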

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B on NVIDIA A100 PCIe 80GB with Llama3 8B

Comparing the Llama3 70B with its smaller sibling, the Llama3 8B, reveals an interesting trend.

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 138.31 |
| Llama3 8B F16 | 54.56 |

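The Llama3 8B Q4_K_M version on the NVIDIA A100 PCIe 80GB GPU is significantly faster, reaching 138.31 tokens per second, roughly 6 times the 22.11 tokens per second of Llama3 70B at the same quantization. This makes sense: the smaller model has far fewer parameters to read and compute for every generated token. Quantization helps within the 8B model too, where Q4_K_M is about 2.5 times faster than F16 (54.56 tokens per second).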

Why are smaller models faster? It’s like comparing a bicycle and a car. The bicycle (smaller model) is nimble and quick to maneuver, whereas the car (larger model) needs more effort to accelerate.
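To put numbers on the comparison, the ratios can be computed directly from the benchmark figures quoted in this article. The snippet below is only a convenience sketch; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Token generation speeds (tokens/second) as quoted in this article
# for the NVIDIA A100 PCIe 80GB.
speeds = {
    ("Llama3 70B", "Q4_K_M"): 22.11,
    ("Llama3 8B", "Q4_K_M"): 138.31,
    ("Llama3 8B", "F16"): 54.56,
}


def speedup(fast: float, slow: float) -> float:
    """How many times faster the first configuration is than the second."""
    return fast / slow


# Effect of model size at the same quantization.
size_ratio = speedup(speeds[("Llama3 8B", "Q4_K_M")], speeds[("Llama3 70B", "Q4_K_M")])
# Effect of quantization at the same model size.
quant_ratio = speedup(speeds[("Llama3 8B", "Q4_K_M")], speeds[("Llama3 8B", "F16")])

print(f"8B vs 70B at Q4_K_M: {size_ratio:.2f}x faster")
print(f"8B Q4_K_M vs 8B F16: {quant_ratio:.2f}x faster")
```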

Practical Recommendations: Use Cases and Workarounds

Using Llama3 70B on NVIDIA A100 PCIe 80GB: When and How

So, should you use the Llama3 70B model on the NVIDIA A100 PCIe 80GB GPU? Here are some things to keep in mind:

- If speed is paramount: The smaller Llama3 8B model might be a better choice. It's much faster for tasks that require quick responses.

- If accuracy is crucial: Even though the Llama3 70B model generates tokens more slowly, its larger size can provide richer, more nuanced outputs. Consider using it for tasks like complex content generation or in-depth research.

- If performance is a concern: Consider using model optimization techniques such as quantization or model pruning (reducing the number of parameters) to improve performance without sacrificing too much accuracy.
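One way to weigh speed against quality is to translate tokens per second into waiting time for a typical response. The sketch below assumes a hypothetical 500-token answer and counts generation time only; prompt processing and any network latency are ignored.

```python
def generation_time_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate (generation phase only)."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length, chosen for illustration

# Generation speeds quoted in this article for the A100 PCIe 80GB.
models = [
    ("Llama3 70B Q4_K_M", 22.11),
    ("Llama3 8B Q4_K_M", 138.31),
]

for name, tps in models:
    seconds = generation_time_seconds(RESPONSE_TOKENS, tps)
    print(f"{name}: ~{seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

If a wait of around 20 seconds per long answer is acceptable for your use case, the 70B model remains an option; for interactive, low-latency tasks, the 8B model is the safer choice.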

FAQ: Frequently Asked Questions

Q: What are some other factors that affect LLM performance?

A: Several factors can influence LLM performance, including:

- Model architecture: Different models have different strengths and weaknesses.

- Quantization level: Formats that keep more bits per weight (such as F16) generally preserve accuracy better, but they use more memory and generate tokens more slowly than 4-bit formats such as Q4_K_M.

- Batch size: Larger batches generally lead to faster processing, but may require more memory.

- GPU type and memory: Different GPUs have different computational power and memory capacity.

- Software optimization: Utilizing optimized libraries and frameworks can boost performance.
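Because so many factors are in play, it is worth measuring throughput on your own setup. The sketch below shows one simple way to time token generation in Python. Both function names are hypothetical, and `fake_generate` is a stand-in that only sleeps; in practice you would pass in a function that streams tokens from whatever inference library you use.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the observed tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Stand-in for a real model: yields dummy tokens with a fixed delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/second")
```

For a fair comparison, keep the prompt, output length, and batch size fixed across runs, and repeat the measurement a few times.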

Q: Where can I find more information about LLM benchmarks and performance data?

A: Numerous resources are available:

- Benchmarking repositories: Benchmark suites such as MLPerf provide standardized measurements for AI workloads.

- LLM research papers: Papers published by research labs often include performance metrics for specific models and devices.

- Online forums and discussions: Communities like the Hugging Face forum and the llama.cpp GitHub repository offer valuable insights and discussions about LLM performance.

Q: What's the future of LLMs and their performance?

A: The world of LLMs is rapidly evolving, with researchers continuously pushing the boundaries of what's possible. We can expect even more powerful models, optimized hardware, and improved techniques for deploying LLMs in real-world applications.

Keywords:

Llama3, 70B, NVIDIA, A100 PCIe 80GB, LLM, performance, token generation speed, speed, accuracy, quantization, Q4_K_M, F16, model architecture, batch size, GPU, memory, software optimization, benchmark, research papers, online forums, future of LLMs, AI.